Vector Database Search: HNSW Algorithm Explained

I want to explain why HNSW indexing is so useful for similarity search, or approximate nearest neighbour (ANN) search, in vector embedding space. Once you have the intuition behind HNSW and know how to implement it in Faiss, you can test HNSW indexes in your own vector search applications, or use a managed solution such as Pinecone or OpenSearch that has vector search ready to go.
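To make "approximate" concrete, it helps to see the exact search that ANN indexes approximate. The sketch below is a plain-Python brute-force k-nearest-neighbour search using Euclidean distance. The function name `exact_knn` and the toy embeddings are illustrative assumptions, not Faiss code. Every query is compared with every stored vector, so the cost grows linearly with the size of the collection, which is the work HNSW is designed to avoid.

```python
import heapq
import math


def exact_knn(query, vectors, k):
    """Exact k-nearest-neighbour search: compare the query with every vector.

    Cost is O(N * d) per query. Returns (distance, index) pairs, nearest first.
    """
    return heapq.nsmallest(
        k,
        ((math.dist(query, v), i) for i, v in enumerate(vectors)),
    )


# Toy 2-D "embeddings" (hypothetical data for illustration only).
embeddings = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0), (3.0, 3.0)]
print([i for _, i in exact_knn((0.9, 0.1), embeddings, k=2)])  # [1, 0]
```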

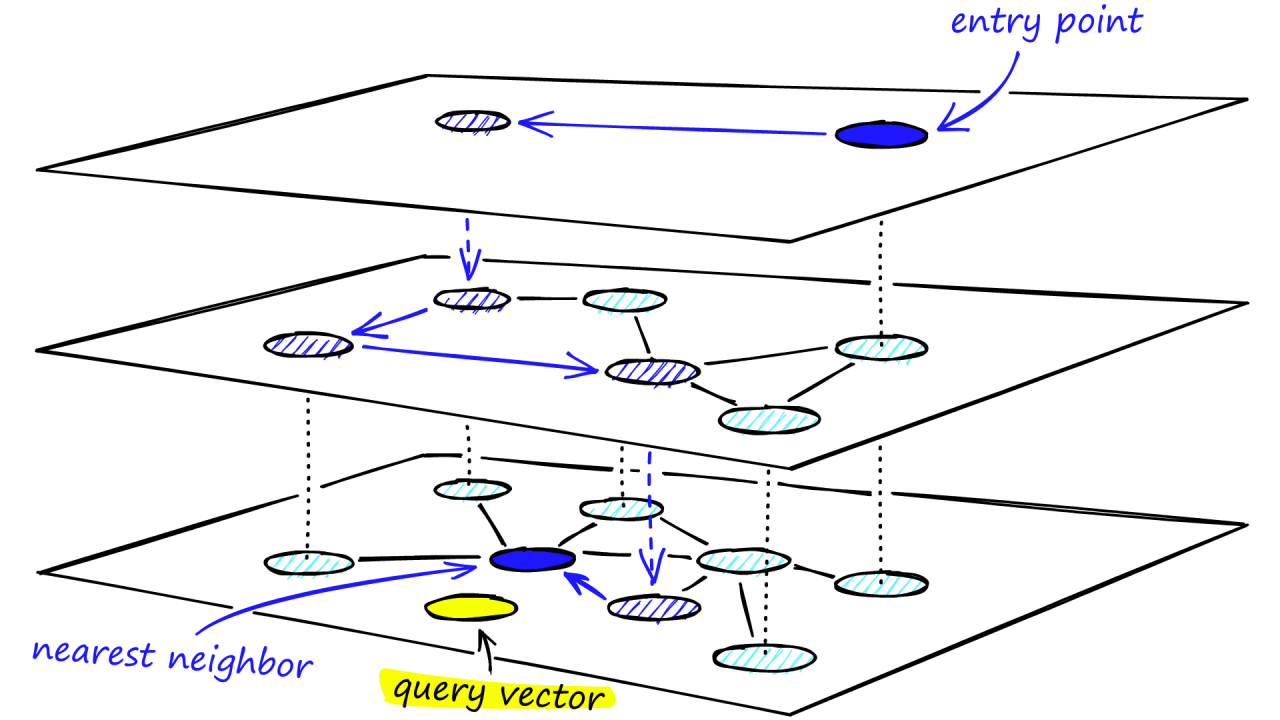

HNSW organizes vectors into a layered graph in which each layer narrows down the search, so the query vector is compared with relatively few other vectors. Moving from the top layer to the bottom, the number of nodes in each layer increases. Understanding how the HNSW algorithm works requires a closer look at its principles, its inspiration from skip lists, and how it introduces long edges to overcome the challenges of traditional graph indexing. Building a vector database with HNSW involves the hierarchical graph structure, the search algorithm, and the implementation details that together enable fast similarity search. HNSW can be leveraged with a single line of code in each context, whether in our cloud platform or in the open-source version, making your vector database more powerful and its search more efficient.
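The layered structure described above can be sketched in a few dozen lines of Python. This is a minimal toy under stated assumptions, not a reference HNSW implementation or any library's API: the class `TinyHNSW` and its parameters are hypothetical, node levels are drawn with the skip-list-style rule P(level ≥ l) = M^-l, neighbours are chosen by simply keeping the M closest candidates (the original HNSW paper also describes a diversity heuristic, omitted here), and distances are squared Euclidean.

```python
import heapq
import math
import random


def sq_l2(a, b):
    """Squared Euclidean distance; ranks neighbours the same way as true L2."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


class TinyHNSW:
    """Toy HNSW (hypothetical): layered graphs, greedy descent, beam search on layer 0."""

    def __init__(self, M=8, ef_construction=32, seed=0):
        self.M = M                           # max links per node on upper layers
        self.M0 = 2 * M                      # the bottom layer may be denser
        self.ef_construction = ef_construction
        self.mL = 1 / math.log(M)            # level multiplier
        self.rng = random.Random(seed)
        self.vectors = []                    # node id -> vector
        self.layers = []                     # layers[l] = {node id: set of neighbour ids}
        self.entry, self.entry_level = None, -1

    def _random_level(self):
        # Skip-list style: P(level >= l) = M ** -l, so each higher layer is sparser.
        return int(-math.log(1.0 - self.rng.random()) * self.mL)

    def _search_layer(self, q, entry_points, ef, layer):
        """Best-first beam search in one layer; returns up to ef (dist, id), nearest first."""
        visited = set(entry_points)
        cand = [(sq_l2(q, self.vectors[e]), e) for e in entry_points]
        heapq.heapify(cand)                  # min-heap: nodes still to expand
        best = [(-d, e) for d, e in cand]    # max-heap (negated): the ef best so far
        heapq.heapify(best)
        while cand:
            d, c = heapq.heappop(cand)
            if d > -best[0][0]:
                break                        # no remaining candidate can improve the results
            for n in self.layers[layer][c]:
                if n in visited:
                    continue
                visited.add(n)
                dn = sq_l2(q, self.vectors[n])
                if len(best) < ef or dn < -best[0][0]:
                    heapq.heappush(cand, (dn, n))
                    heapq.heappush(best, (-dn, n))
                    if len(best) > ef:
                        heapq.heappop(best)  # drop the current worst result
        return sorted((-nd, e) for nd, e in best)

    def add(self, vec):
        idx = len(self.vectors)
        self.vectors.append(vec)
        level = self._random_level()
        while len(self.layers) <= level:
            self.layers.append({})
        for l in range(level + 1):
            self.layers[l][idx] = set()
        if self.entry is None:
            self.entry, self.entry_level = idx, level
            return idx
        ep = [self.entry]
        for l in range(self.entry_level, level, -1):   # greedy descent above the new node's level
            ep = [self._search_layer(vec, ep, 1, l)[0][1]]
        for l in range(min(level, self.entry_level), -1, -1):
            found = self._search_layer(vec, ep, self.ef_construction, l)
            m_max = self.M0 if l == 0 else self.M
            for _, n in found[: self.M]:               # link to the M nearest candidates
                self.layers[l][idx].add(n)
                self.layers[l][n].add(idx)
                if len(self.layers[l][n]) > m_max:     # prune: keep n's m_max closest links
                    keep = sorted(self.layers[l][n],
                                  key=lambda o: sq_l2(self.vectors[n], self.vectors[o]))
                    self.layers[l][n] = set(keep[:m_max])
            ep = [n for _, n in found]
        if level > self.entry_level:
            self.entry, self.entry_level = idx, level
        return idx

    def search(self, q, k=1, ef=16):
        if self.entry is None:
            return []
        ep = [self.entry]
        for l in range(self.entry_level, 0, -1):       # coarse-to-fine through sparse layers
            ep = [self._search_layer(q, ep, 1, l)[0][1]]
        hits = self._search_layer(q, ep, max(ef, k), 0)  # wide beam on the full bottom layer
        return [n for _, n in hits[:k]]


rng = random.Random(42)
data = [(rng.random(), rng.random()) for _ in range(500)]
index = TinyHNSW(M=8, ef_construction=32)
for v in data:
    index.add(v)
print([len(layer) for layer in index.layers])  # node counts per layer, bottom layer first
```

Because every node on layer l also appears on every layer below it, the printed layer sizes can only shrink (or stay equal) going upward, matching the description of sparse upper layers and a dense bottom layer. The upper layers play the role of a skip list's express lanes: `search` walks them greedily with a beam of 1, then widens the beam to `ef` on layer 0.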

HNSW is a key method for approximate nearest neighbor search in high-dimensional vector databases, for example over embeddings produced by neural networks in large language models. In Oracle Database, using HNSW indexes requires enabling a dedicated memory area called the vector pool: memory allocated from the System Global Area (SGA) to store HNSW vector indexes and their associated metadata. In this tutorial, we explored hierarchical navigable small worlds (HNSW), a powerful graph-based vector similarity search strategy built from multiple layers of connected graphs. This guide covers the algorithms that make nearest-neighbor search fast (HNSW, IVF, product quantization, LSH, and ScaNN), along with the similarity metrics that define what "closest" means.
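Because "closest" depends on the metric, the same query can select different nearest neighbors under different measures. The following plain-Python sketch compares dot product, cosine similarity, and Euclidean distance on two toy document vectors; the vectors and helper names are illustrative assumptions, not part of any particular database's API.

```python
import math


def dot(a, b):
    """Inner product: larger means more similar, and magnitude matters."""
    return sum(x * y for x, y in zip(a, b))


def cosine_similarity(a, b):
    """Angle-based similarity: dot product divided by both vector lengths."""
    return dot(a, b) / (math.hypot(*a) * math.hypot(*b))


def euclidean(a, b):
    """Straight-line distance: smaller means more similar."""
    return math.dist(a, b)


query = (1.0, 0.0)
docs = {"a": (0.9, 0.1), "b": (4.0, 2.0)}  # hypothetical document embeddings

best_by_dot = max(docs, key=lambda k: dot(query, docs[k]))
best_by_cos = max(docs, key=lambda k: cosine_similarity(query, docs[k]))
best_by_l2 = min(docs, key=lambda k: euclidean(query, docs[k]))
print(best_by_dot, best_by_cos, best_by_l2)  # b a a
```

Dot product favors the larger-magnitude vector `b`, while cosine similarity and Euclidean distance both pick `a`. If all vectors are normalized to unit length, the three metrics produce the same ranking, since for unit vectors ||a - b||^2 = 2 - 2·cos(a, b) and the dot product equals the cosine.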