Variable Length Sequences in TensorFlow, Part 1: Optimizing Sequence Padding

In this three-part series, we review different strategies for handling variable-length sequences in TensorFlow, with a focus on performance. We implement each strategy and discuss its pros and cons.

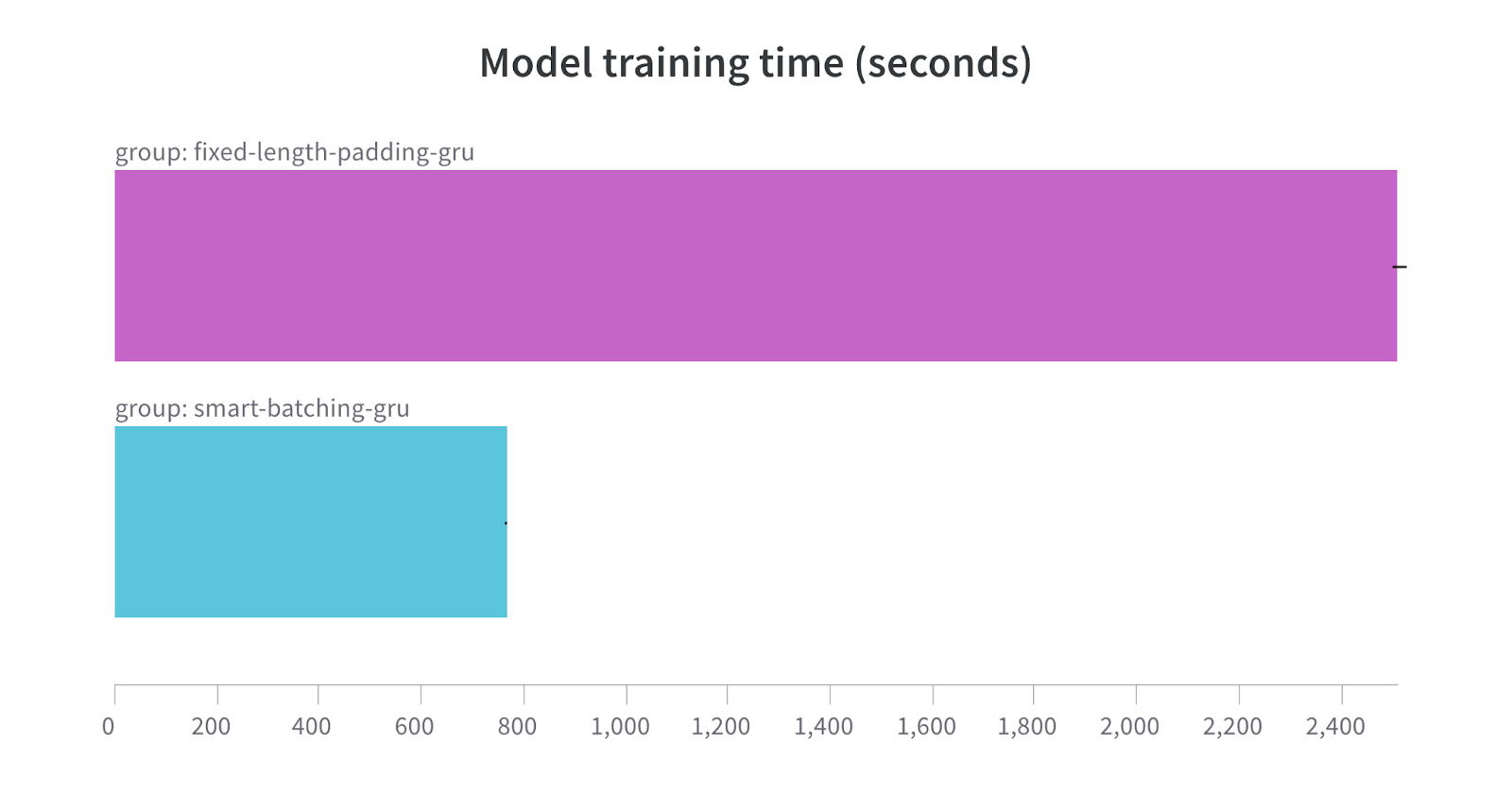

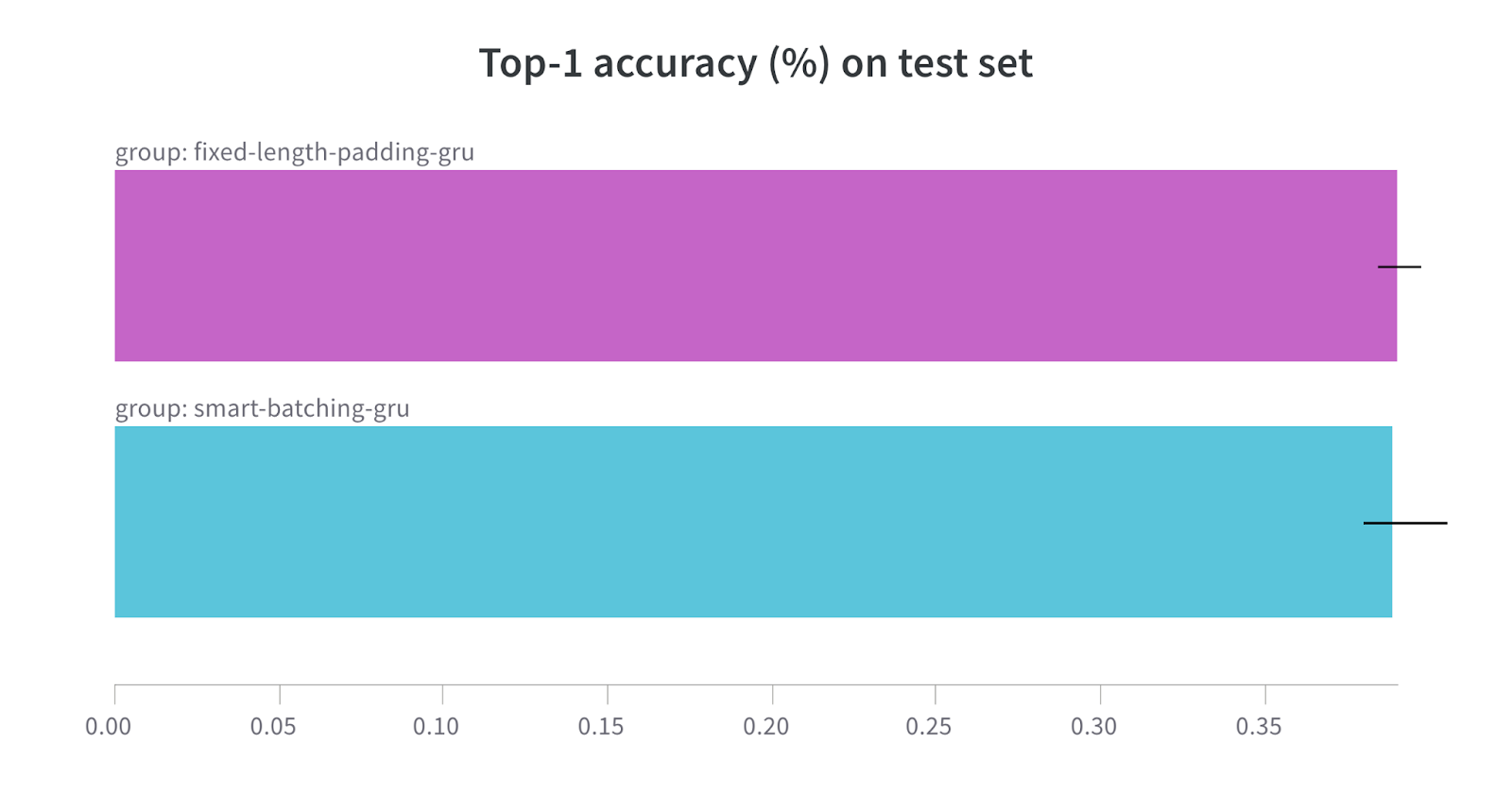

Today we're sharing recipes that significantly reduce the training time of sequence models in TensorFlow. We tested the recipes on RNN-based as well as BERT-based models to confirm their effectiveness. Central to them is the idea of dynamic padding within batches, which we found to be quite non-trivial in TensorFlow.

Bucketing and padding, as described in the sequence-to-sequence models tutorial, are also useful here. In addition, the `rnn` function that creates the RNN network accepts a `sequence_length` parameter.

When padding to a fixed length, sequences longer than `num_timesteps` are truncated so that they fit the desired length. The position where padding or truncation happens is determined by the `padding` and `truncating` arguments, respectively. The default for both is "pre": values are added to, or removed from, the beginning of the sequence.
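The `padding` and `truncating` arguments described above can be illustrated with a minimal sketch. It assumes the function in question is `tf.keras.preprocessing.sequence.pad_sequences`, where the target length is passed as `maxlen`. The toy sequences and the choice of `maxlen=4` are made up for illustration.

```python
import tensorflow as tf

# Toy integer sequences of different lengths (illustrative data only).
sequences = [[1, 2, 3], [4, 5], [6, 7, 8, 9, 10]]

pad_sequences = tf.keras.preprocessing.sequence.pad_sequences

# Defaults: padding="pre" and truncating="pre", with 0 as the padding value.
pre = pad_sequences(sequences, maxlen=4)

# Pad and truncate at the end of each sequence instead.
post = pad_sequences(sequences, maxlen=4, padding="post", truncating="post")

print(pre)
# [[ 0  1  2  3]
#  [ 0  0  4  5]
#  [ 7  8  9 10]]
print(post)
# [[1 2 3 0]
#  [4 5 0 0]
#  [6 7 8 9]]
```

With the defaults, the longest sequence loses its first element (`6`). With `"post"`, it loses its last (`10`). Which one is preferable depends on where the informative part of the sequence sits.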

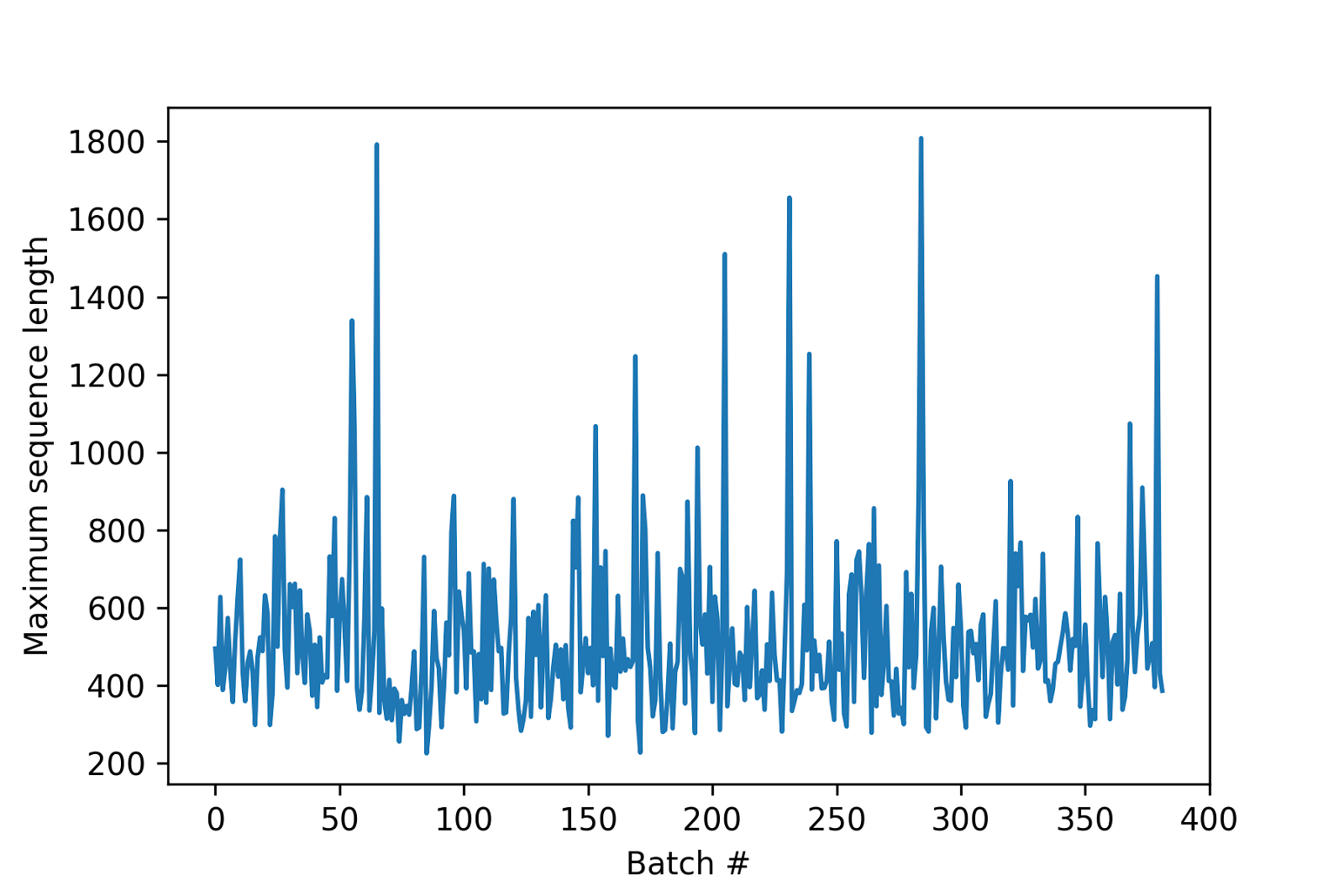

One common challenge in working with neural networks is handling variable-length sequences. The standard technique to address it is padding: adding a special, predefined value (the "padding value") to shorter sequences until all sequences in a batch reach a common, fixed length.

Rather than padding the sequences in each batch to a constant length, we pad to the length of the longest sequence in the batch. This requires a revised collation function that pads each batch separately.

The same idea applies to tokenized text, where padding makes all the sequences the same length. When creating the tokenizer, you can specify the max…
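Padding each batch to the length of its own longest sequence can be sketched with `tf.data.Dataset.padded_batch`. This is one possible approach, not necessarily the collation function the original experiments used. The toy data, the batch size of 2, and the helper name `gen` are assumptions made for illustration.

```python
import tensorflow as tf

# Toy variable-length sequences (illustrative data only).
sequences = [[1, 2, 3], [4, 5], [6], [7, 8, 9, 10, 11], [12, 13]]

def gen():
    # Hypothetical helper: yields one sequence at a time.
    for seq in sequences:
        yield seq

dataset = tf.data.Dataset.from_generator(
    gen,
    output_signature=tf.TensorSpec(shape=(None,), dtype=tf.int32),
)

# padded_shapes=[None] pads every element to the longest sequence
# in its own batch, not to a global maximum length.
batched = dataset.padded_batch(batch_size=2, padded_shapes=[None])

batches = [batch.numpy() for batch in batched]
batch_shapes = [b.shape for b in batches]
print(batch_shapes)  # [(2, 3), (2, 5), (1, 2)]
```

Only the second batch is padded to length 5. A global fixed length would have forced every batch to that width, which is the wasted computation dynamic padding avoids.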

