Using Custom Models: vivo-ai-lab SmartBench (DeepWiki)

This page explains how to integrate custom test models into SmartBench for evaluation. It covers the model interface requirements, the dynamic import mechanism, directory structure setup, and implementation patterns for different model types.

This section covers advanced usage scenarios and extensibility features of SmartBench beyond the basic three-stage evaluation workflow. It addresses customization, integration of new components, multi-precision evaluation setups, and error handling strategies for production deployments and research experimentation.
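The dynamic import mechanism mentioned above can be illustrated with a minimal sketch built on Python's standard `importlib`. This is not SmartBench's actual loader: the module name `my_model`, the class name `MyModel`, the `generate(prompt)` method, and the helper `load_model_class` are all hypothetical names chosen for illustration, and the real interface requirements should be taken from the SmartBench documentation.

```python
import importlib
import sys
import tempfile
from pathlib import Path


def load_model_class(module_name: str, class_name: str):
    """Import `module_name` at runtime and return the class `class_name`.

    Hypothetical helper: it assumes a custom model only needs to expose a
    callable `generate` attribute. SmartBench's real contract may differ.
    """
    importlib.invalidate_caches()  # pick up files created after startup
    module = importlib.import_module(module_name)
    model_cls = getattr(module, class_name)
    if not callable(getattr(model_cls, "generate", None)):
        raise TypeError(f"{class_name} must define a generate(prompt) method")
    return model_cls


# Demo: write a hypothetical custom model file into a temporary directory,
# make that directory importable, and load the class by name.
with tempfile.TemporaryDirectory() as tmp:
    Path(tmp, "my_model.py").write_text(
        "class MyModel:\n"
        "    def generate(self, prompt):\n"
        "        return 'echo: ' + prompt\n",
        encoding="utf-8",
    )
    sys.path.insert(0, tmp)
    try:
        model_cls = load_model_class("my_model", "MyModel")
        answer = model_cls().generate("hello")
    finally:
        sys.path.remove(tmp)

print(answer)
```

Loading by name keeps the evaluation code independent of any particular model: a new model only has to be placed where the import system can find it and satisfy whatever interface the loader checks.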

Evaluation Methodology: vivo-ai-lab SmartBench (DeepWiki)

This page introduces SmartBench, a benchmark system for evaluating Chinese smartphone device-side large language models (LLMs). It explains the benchmark's purpose, key features, system components, and evaluation methodology.

This guide walks you through the initial setup and execution of your first evaluation with SmartBench. You will learn how to install dependencies, configure the directory structure, and run the three-stage evaluation pipeline to assess a device-side LLM.

This page provides a comprehensive guide for running SmartBench evaluations on device-side large language models. It covers the complete workflow from preparing test data to obtaining final scores, including command-line arguments, file organization, and common usage patterns.

This document describes the high-level architecture of SmartBench, including its three-stage evaluation pipeline, data flow between components, file formats, and external dependencies.

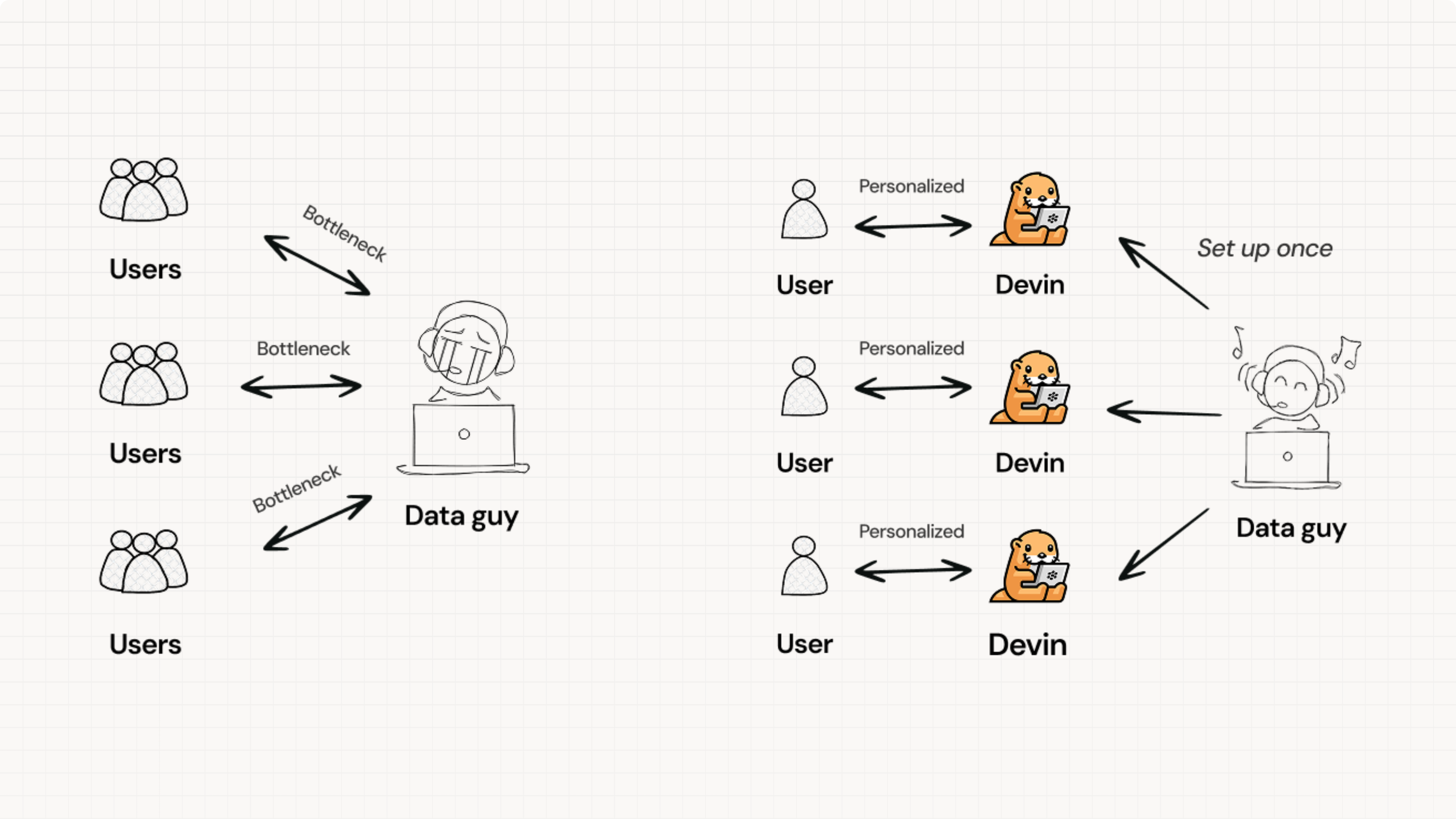

Cognition DeepWiki: AI Docs for Any Repo

SmartBench is the first benchmark designed specifically to evaluate the capabilities of on-device large language models (LLMs) in Chinese smartphone scenarios. By analyzing the features offered by smartphone manufacturers, it divides on-device LLM functionality into five categories comprising 20 specific tasks, covering practical application scenarios such as text summarization, text question answering, information extraction, content creation, and notification management.

We test SmartBench performance using both GPT-4 and Qwen-Max as evaluators. As can be seen, during the training process from LLM to multimodal models, MMLU performance improves due to the injection of new knowledge.

To address these gaps, we introduce SmartBench, the first benchmark designed to evaluate the capabilities of on-device LLMs in Chinese mobile contexts.

vivo-ai models: BlueLM-7B-Chat-4bits, BlueLM-7B-Chat-32K-GPTQ, BlueLM-7B-Chat-32K-AWQ, BlueLM-7B-Chat-32K, BlueLM-7B-Base-32K, BlueLM-7B-Chat.
