Understanding Ollama Models

This is a complete guide to managing Ollama models: pulling new models, listing installed ones, updating to the latest versions, customizing with Modelfiles, and cleaning up disk space. Ollama lets developers run pre-trained, open-weight language and multimodal models locally through a unified runtime and API. This eliminates the need to train models from scratch and reduces infrastructure complexity and compute costs, allowing rapid integration into applications.
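The management tasks listed above map onto a handful of `ollama` CLI subcommands (`pull`, `list`, `show`, `rm`, and `create -f`). The following is a minimal sketch, assuming the `ollama` binary is installed and on the PATH. The helper names `build_command`, `build_create_command`, and `run` are hypothetical wrappers introduced for illustration, and `gemma3` and `my-assistant` are example model names only.

```python
import subprocess

# Map of management tasks to `ollama` CLI subcommands.
# Updating a model is done by pulling it again.
_SUBCOMMANDS = {
    "pull": "pull",    # download a model, or refresh an installed one
    "list": "list",    # show installed models
    "show": "show",    # print details about one model
    "remove": "rm",    # delete a model to free disk space
}


def build_command(action, model=None):
    """Return the argv list for a model-management action (hypothetical helper)."""
    if action not in _SUBCOMMANDS:
        raise ValueError(f"unknown action: {action}")
    argv = ["ollama", _SUBCOMMANDS[action]]
    if action != "list":
        if not model:
            raise ValueError(f"action '{action}' needs a model name")
        argv.append(model)
    return argv


def build_create_command(name, modelfile_path="Modelfile"):
    """Return the argv list that builds a customized model from a Modelfile."""
    return ["ollama", "create", name, "-f", modelfile_path]


def run(argv):
    """Run the command and return its standard output (requires Ollama installed)."""
    result = subprocess.run(argv, check=True, capture_output=True, text=True)
    return result.stdout


if __name__ == "__main__":
    print(run(build_command("list")))
```

Building the argument list separately from running it keeps the command construction easy to inspect or log before anything touches the local model store.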

What Is Ollama: Features, Models, and How It Works in 2025

Thanks to Ollama, anyone with a modern computer can now run sophisticated AI models locally, whether you're coding on a plane at 35,000 feet, analyzing sensitive documents that can never touch the cloud, or simply experimenting with AI without watching your API bill climb. The hard part is no longer setup. It is choosing the right model for your task, hardware, and tolerance for quantization tradeoffs. We tested 12 models across coding, RAG, agent tasks, and general reasoning on real hardware (RTX 4090, RTX 3090, MacBook Pro M3 Max, and a 16 GB laptop GPU).

Choosing the right Ollama model requires careful consideration of your specific use case, hardware constraints, and performance requirements. This guide provides the foundation for making informed decisions about model selection, optimization, and deployment. If you're unsure which model to use, you can explore Ollama's model library, which provides detailed information about each model, including installation instructions, supported use cases, and customization options.
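The hardware and quantization tradeoff can be made concrete with a rough back-of-the-envelope estimate: weight memory is approximately the parameter count times the bits per weight, divided by 8. The sketch below is a rule of thumb, not a measurement from the tests mentioned above. The function names and the 1.2 overhead factor (a stand-in for KV cache and runtime buffers) are assumptions, and real quantization formats use slightly more than their nominal bit width.

```python
def estimate_weight_gb(params_billions, bits_per_weight):
    """Approximate size of the model weights in GB (10^9 bytes).

    params_billions * 1e9 weights * bits_per_weight / 8 bits per byte / 1e9.
    """
    return params_billions * bits_per_weight / 8


def fits_in_memory(params_billions, bits_per_weight, memory_gb, overhead=1.2):
    """Rough check of whether a model fits in the given memory.

    `overhead` is an assumed multiplier for KV cache and runtime buffers;
    the real value depends on context length and the runtime.
    """
    needed_gb = estimate_weight_gb(params_billions, bits_per_weight) * overhead
    return needed_gb <= memory_gb


if __name__ == "__main__":
    for params, bits, memory in [(8, 4, 16), (70, 4, 24), (7, 16, 16)]:
        size = estimate_weight_gb(params, bits)
        verdict = "fits" if fits_in_memory(params, bits, memory) else "does not fit"
        print(f"{params}B at {bits}-bit: ~{size:.1f} GB weights, {verdict} in {memory} GB")
```

Under these assumptions, a 7B model at 4 bits needs about 3.5 GB for weights, while a 70B model at 4 bits needs about 35 GB and would not fit on a single 24 GB GPU without offloading.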

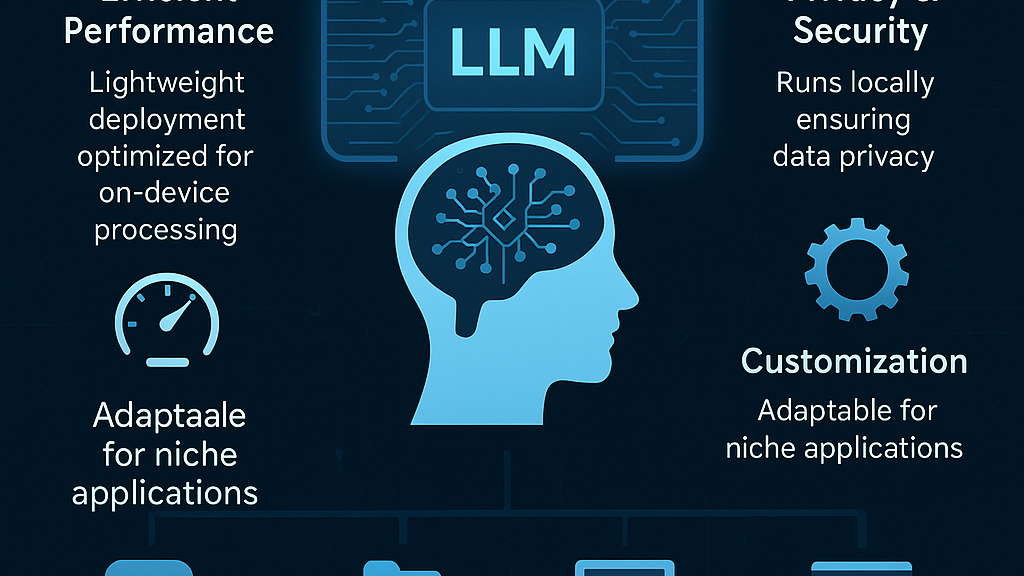

Ollama's New Engine for Multimodal Models (Ollama Blog)

Ollama is the easiest way to get up and running with large language models such as gpt-oss, Gemma 3, DeepSeek-R1, Qwen3, and more. The definitive guide to all 100 Ollama models compares Llama 3.3, DeepSeek-R1, Gemma 3, Qwen3, Mistral, and more, and includes hardware requirements, benchmarks, use cases, and recommendations for choosing the right local AI model. This newsletter explores the inner workings of Ollama, its core features, supported models, practical applications, and future potential.

What is Ollama? Ollama is a lightweight, extensible framework for building and running language models locally. It provides a simple API for creating, running, and managing models, as well as a library of pre-built models that can be easily used in various applications.
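As an illustration of that API, the sketch below sends a single prompt to a locally running Ollama server over its REST interface using only the Python standard library. It assumes the server is listening on its default address, `http://localhost:11434`, and that a model named `gemma3` has already been pulled. The helper names `build_generate_request` and `generate` are hypothetical.

```python
import json
import urllib.request

# Default address of a local Ollama server (an assumption; adjust if configured otherwise).
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(model, prompt, base_url=OLLAMA_URL):
    """Build a POST request for the /api/generate endpoint (hypothetical helper)."""
    payload = {
        "model": model,
        "prompt": prompt,
        "stream": False,  # ask for one JSON object instead of a stream of chunks
    }
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model, prompt):
    """Send the prompt and return the generated text (requires a running server)."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]


if __name__ == "__main__":
    print(generate("gemma3", "Explain quantization in one sentence."))
```

Setting `stream` to false keeps the example short; an interactive application would more likely leave streaming on and read the response chunk by chunk.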
