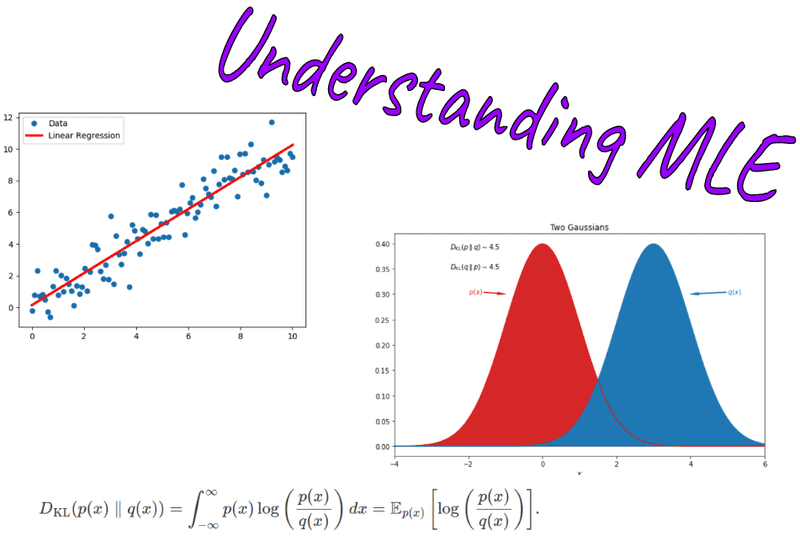

Understanding Maximum Likelihood Estimation

In statistics, maximum likelihood estimation (MLE) is a method of estimating the parameters of an assumed probability distribution, given some observed data. This is achieved by maximizing a likelihood function so that, under the assumed statistical model, the observed data are most probable. This article explains what MLE is, outlines its mathematical foundations, and shows how it can be implemented in Python.
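The definition above can be written compactly. The notation here is an assumption for illustration and does not come from the original text: the observations $x_1, \dots, x_n$ are taken to be independent and identically distributed with density (or mass function) $f(x; \theta)$.

$$
L(\theta) = \prod_{i=1}^{n} f(x_i; \theta), \qquad
\ell(\theta) = \log L(\theta) = \sum_{i=1}^{n} \log f(x_i; \theta), \qquad
\hat{\theta}_{\text{MLE}} = \arg\max_{\theta} \, \ell(\theta)
$$

Because the logarithm is strictly increasing, maximizing $\ell(\theta)$ gives the same $\hat{\theta}$ as maximizing $L(\theta)$.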

To use a maximum likelihood estimator, first write the log-likelihood of the data given your parameters, then choose the parameter values that maximize it. MLE is applied in fields such as econometrics, machine learning, finance, and biostatistics, and it can be implemented in Python and tried out on synthetic data.

Like OLS, MLE is a way to estimate the parameters of a model, given what we observe. MLE asks the question, "Given the data that we observe (our sample), what are the model parameters that maximize the likelihood of the observed data occurring?" The same likelihood framework also underlies some common hypothesis tests: the likelihood ratio test, the Wald test, and the score test.
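The two-step recipe (write the log-likelihood, then maximize it) can be sketched in a few lines of standard-library Python. This is a minimal illustration under stated assumptions, not a reference implementation: the exponential model, the synthetic data, the search interval, and the helper names `exponential_log_likelihood` and `golden_section_maximize` are all chosen here for the example.

```python
import math
import random


def exponential_log_likelihood(rate, data):
    """Log-likelihood of i.i.d. Exponential(rate) data: n*log(rate) - rate*sum(x)."""
    return len(data) * math.log(rate) - rate * sum(data)


def golden_section_maximize(f, lo, hi, tol=1e-8):
    """Maximize a unimodal function f on [lo, hi] by golden-section search."""
    inv_phi = (math.sqrt(5) - 1) / 2
    a, b = lo, hi
    c = b - inv_phi * (b - a)
    d = a + inv_phi * (b - a)
    while b - a > tol:
        if f(c) > f(d):
            b = d  # the maximum lies in [a, d]
        else:
            a = c  # the maximum lies in [c, b]
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
    return (a + b) / 2


# Synthetic data (assumption): 5000 draws from an exponential with rate 2.0.
random.seed(0)
true_rate = 2.0
data = [random.expovariate(true_rate) for _ in range(5000)]

# Step 1: the log-likelihood as a function of the parameter alone.
def objective(rate):
    return exponential_log_likelihood(rate, data)

# Step 2: maximize it numerically over an assumed search interval.
rate_numeric = golden_section_maximize(objective, 1e-6, 50.0)

# For this model the maximizer is also known in closed form: n / sum(x).
rate_closed_form = len(data) / sum(data)

print(f"numerical MLE:   {rate_numeric:.4f}")
print(f"closed-form MLE: {rate_closed_form:.4f}")
```

The exponential model is convenient because its log-likelihood is concave in the rate, so a simple one-dimensional search suffices and the answer can be checked against the closed form. Models with several parameters usually need a general-purpose optimizer instead.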

Every time you fit a logistic regression, train a neural network, or run a linear model, the engine underneath is very often maximum likelihood estimation (MLE). It is one of the most widely used methods for fitting statistical models, and it has an intuitive interpretation: find the parameter values that make the observed data as probable as possible.

In this article, we will delve into the fundamental principles of MLE and its properties, explore how likelihood functions are constructed, and discuss the practical advantages of working with their logarithmic forms.
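One practical advantage of the logarithmic form is numerical: a product of many small densities underflows in floating point, while the corresponding sum of logs stays well behaved. The sketch below illustrates this with a standard normal model. The sample size, the seed, and the helper names `normal_pdf` and `normal_log_pdf` are assumptions made for the example.

```python
import math
import random


def normal_pdf(x, mu, sigma):
    """Density of a Normal(mu, sigma^2) distribution at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2 * math.pi))


def normal_log_pdf(x, mu, sigma):
    """Log-density of a Normal(mu, sigma^2) distribution at x."""
    z = (x - mu) / sigma
    return -0.5 * z * z - math.log(sigma) - 0.5 * math.log(2 * math.pi)


# Synthetic data (assumption): 2000 draws from a standard normal.
random.seed(1)
sample = [random.gauss(0.0, 1.0) for _ in range(2000)]

# Raw likelihood: a product of 2000 factors, each below 0.4.
likelihood = 1.0
for x in sample:
    likelihood *= normal_pdf(x, 0.0, 1.0)

# Log-likelihood: a sum of 2000 moderately sized negative numbers.
log_likelihood = sum(normal_log_pdf(x, 0.0, 1.0) for x in sample)

print("raw likelihood:", likelihood)      # underflows to 0.0 in double precision
print("log-likelihood:", log_likelihood)  # finite and usable for optimization
```

The raw likelihood carries no usable information once it has collapsed to zero, whereas the log-likelihood can still be compared across parameter values. Sums are also easier to differentiate than products, which is why derivations typically work with the log form.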
