Underline Improving Embeddings Representations For Comparing Higher

We propose an approach for comparing curricula of study programs in higher education. Pre-trained word embeddings are fine-tuned in a study program classification task, where each curriculum is represented by the names and content of its courses.
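To make the idea of comparing curricula through course-level embeddings more concrete, here is a minimal sketch. It is not the paper's method: the fine-tuning step is omitted entirely, the 3-dimensional vectors are hypothetical stand-ins for fine-tuned course embeddings, and both the mean-pooling of courses into one curriculum vector and the use of cosine similarity are assumptions made for illustration.

```python
import math


def mean_vector(vectors):
    """Average a non-empty list of equal-length vectors component-wise."""
    n = len(vectors)
    return [sum(component) / n for component in zip(*vectors)]


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical course embeddings (one vector per course). In practice these
# would come from a pre-trained, fine-tuned model applied to course names
# and content; the numbers here are invented for illustration only.
program_a = [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1]]
program_b = [[0.7, 0.3, 0.1], [0.9, 0.2, 0.0]]
program_c = [[0.1, 0.9, 0.3], [0.2, 0.8, 0.4]]

# Assumption: a curriculum is summarized by the mean of its course vectors.
vec_a = mean_vector(program_a)
vec_b = mean_vector(program_b)
vec_c = mean_vector(program_c)

sim_a_b = cosine_similarity(vec_a, vec_b)
sim_a_c = cosine_similarity(vec_a, vec_c)

print(f"similarity(a, b) = {sim_a_b:.3f}")
print(f"similarity(a, c) = {sim_a_c:.3f}")
```

With these toy vectors, programs a and b score as more similar to each other than a and c, which is the kind of ranking a curriculum comparison would produce.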

Improving Embeddings Representations for Comparing Higher Education Curricula: A Use Case in Computing.

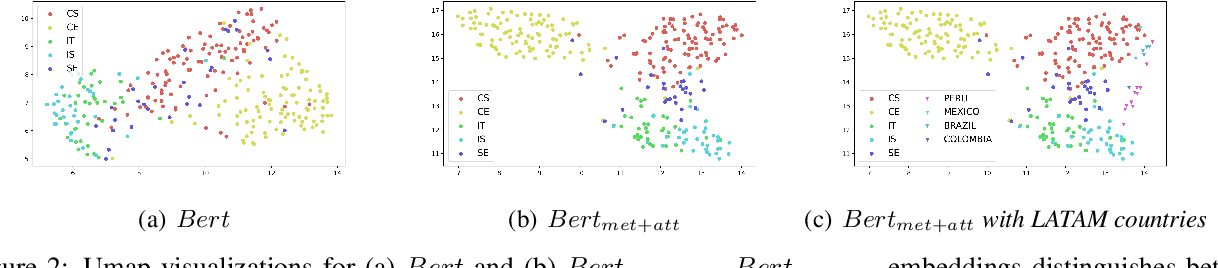

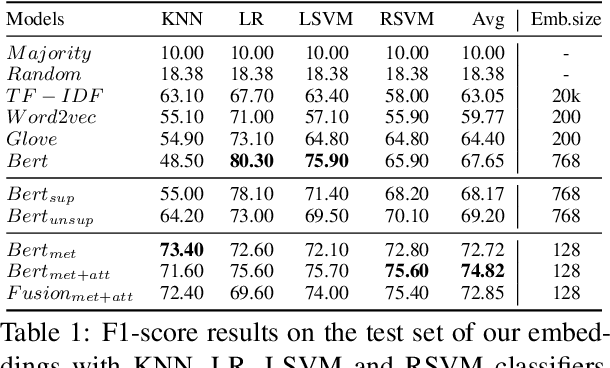

Figure 2 From Improving Embeddings Representations For Comparing Higher

This is the official repository of the EMNLP 2022 main conference paper "Improving Embeddings Representations for Comparing Higher Education Curricula: A Use Case in Computing". In this work, we follow the current trend in NLP applications and use pre-trained word embeddings, such as BERT (Devlin et al., 2019), to obtain better representations of textual curricula.

By focusing on higher-level relationships through shared class labels, our approach circumvents these limitations and offers a more flexible lens for comparing two scatter-based embedding visualizations.

Algorithm 1 describes how QED reduces high-dimensional embeddings into useful representations through a step-by-step process. It begins by taking the large embeddings generated from a feature and grouping values into simple bins via quantization (refer to Algorithm 1, line 1, and Theorem 1).
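The quantization step mentioned above, grouping embedding values into simple bins, can be illustrated with a small sketch. This is not QED's Algorithm 1, whose details are not given here. It assumes uniform-width bins over the observed value range, and the function name `quantize` and the bin count are hypothetical choices.

```python
def quantize(values, num_bins):
    """Map each value to an integer bin index in [0, num_bins - 1].

    Assumption: bins have equal width and span [min(values), max(values)].
    """
    lo, hi = min(values), max(values)
    if hi == lo:
        # All values are identical, so everything falls into a single bin.
        return [0] * len(values)
    width = (hi - lo) / num_bins
    # Clamp so that the maximum value lands in the last bin rather than
    # one past it.
    return [min(int((v - lo) / width), num_bins - 1) for v in values]


# Hypothetical embedding values, invented for illustration.
embedding = [-1.0, -0.5, 0.0, 0.5, 1.0]
bins = quantize(embedding, 4)
print(bins)
```

Each value is replaced by a small integer, so a high-dimensional floating-point vector becomes a compact sequence of bin indices.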

Table 1 From Improving Embeddings Representations For Comparing Higher

Underline Leveraging The Structure Of Pre Trained Embeddings To

Investigating Aspect Features In Contextualized Embeddings With
