Tutorial on Apache Airflow Architecture, Task Life Cycle, and Components

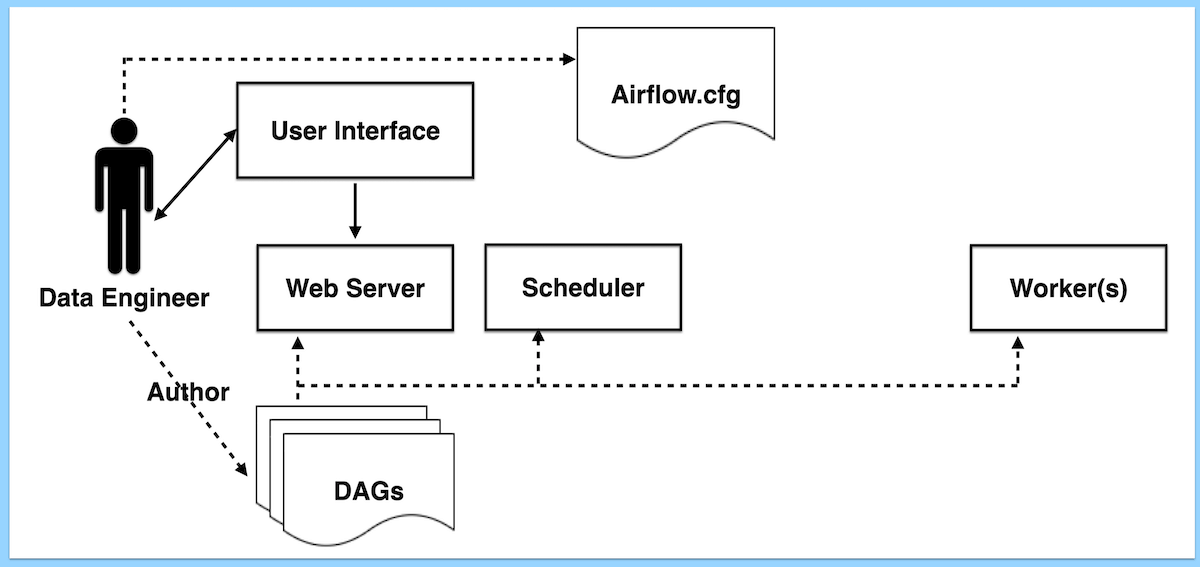

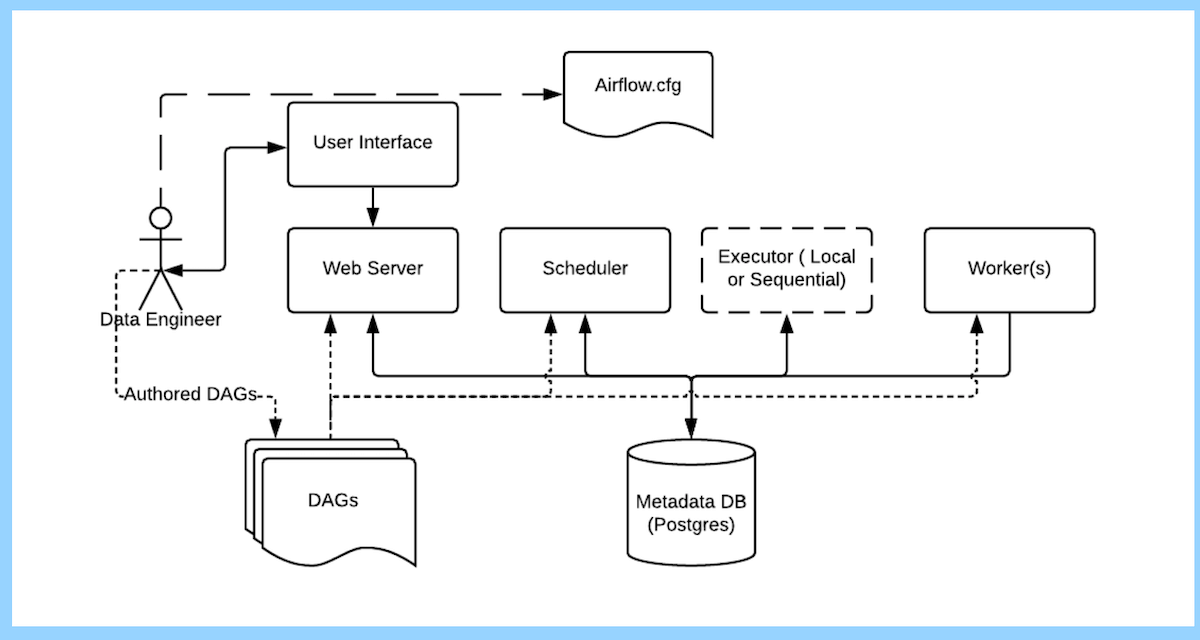

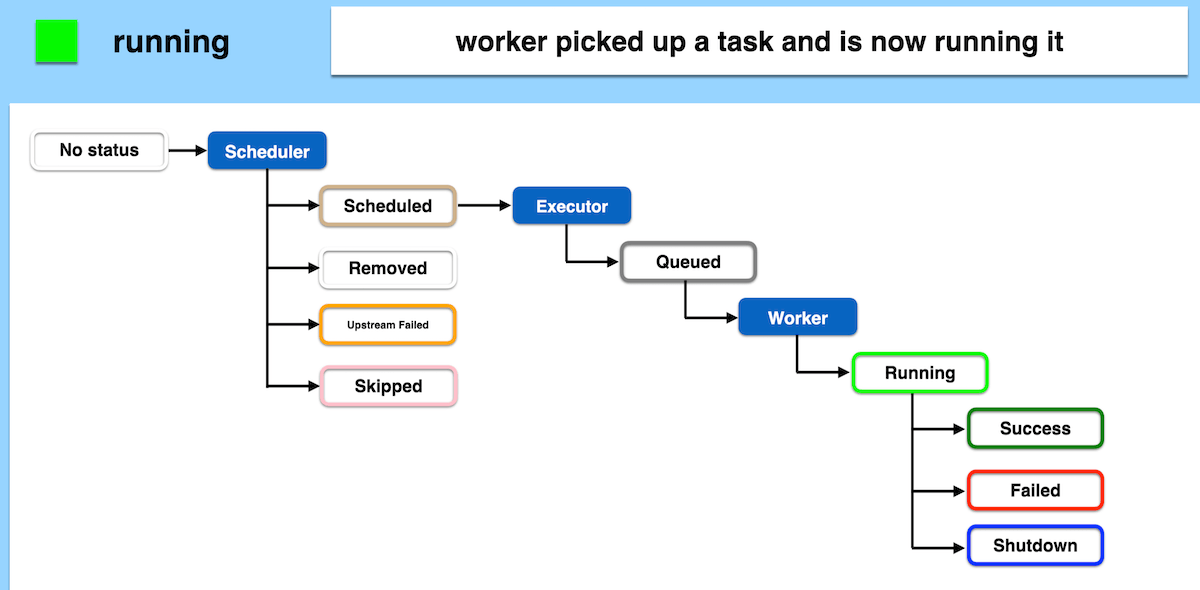

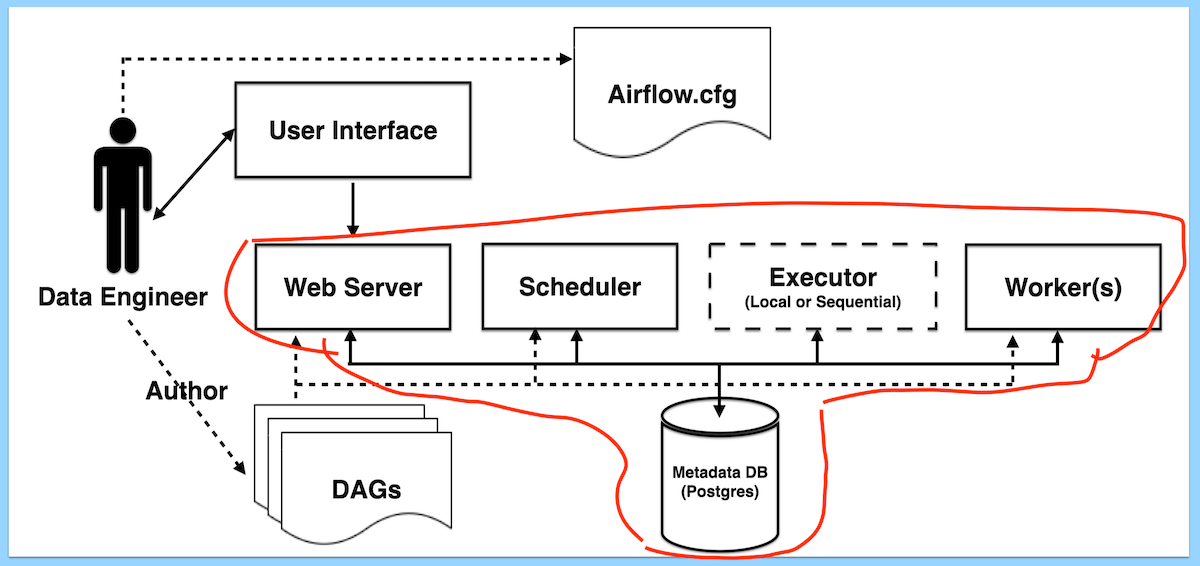

Airflow's architecture consists of multiple components. The following sections describe each component's function and whether it is required for a bare-minimum Airflow installation or is an optional component for better extensibility, performance, and scalability. In this tutorial, I will guide you through the task life cycle and basic architecture of Apache Airflow. By the end, you'll have a solid understanding of how tasks progress from initiation to completion and how the core components of Airflow collaborate.

This guide, hosted on SparkCodeHub, dives deep into the heart of Airflow's architecture: the scheduler, webserver, and executor, along with supporting elements like the metadata database. We'll explore how each piece functions, why it matters, and how they fit together to power your data processes.

Hi guys, in this video I am going to explain Apache Airflow's task life cycle and its basic architecture. By watching this video, you can understand what...

Apache Airflow's architecture plays a vital role in its ability to manage and automate complex data pipelines, so it helps to understand the key components and their interactions within Airflow.

What is Apache Airflow? Apache Airflow is a workflow orchestration platform that lets you define, schedule, and monitor complex data pipelines as code, using Python to define directed acyclic graphs (DAGs).
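To make the idea of a directed acyclic graph concrete, here is a minimal sketch that uses only the Python standard library (`graphlib`), not Airflow itself. The task names (`extract`, `transform`, `load`, `report`) and the `execution_order` helper are hypothetical and chosen purely for illustration; a real Airflow DAG is declared with Airflow's own classes and operators.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical pipeline: each task maps to the set of upstream tasks it depends on.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"transform"},
}


def execution_order(deps):
    """Return one valid run order in which every task follows its upstream tasks."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)

# A graph with a cycle is not a DAG, so no valid run order exists.
cyclic = {"a": {"b"}, "b": {"a"}}
try:
    execution_order(cyclic)
    cycle_detected = False
except CycleError:
    cycle_detected = True
print("cycle detected:", cycle_detected)
```

The "acyclic" part is what makes scheduling possible: because no task can depend on itself, directly or indirectly, there is always at least one order in which every task runs after the tasks it depends on.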

This document describes the high-level architecture of Apache Airflow, focusing on how the major subsystems interact to orchestrate and execute workflow tasks. It covers the core components (scheduler, DAG processor, triggerer, API server, executors, and task execution system), their responsibilities, and the communication patterns between them.

Apache Airflow is an open-source platform designed to programmatically author, schedule, and monitor workflows. Its architecture is built to be dynamic, extensible, and scalable, making it suitable for tasks ranging from simple batch jobs to complex data pipelines involving multiple dependencies. In this blog, we will explain Airflow architecture, including its main components and best practices for implementation. So let's dive right in!

The scheduler is a multithreaded Python process that uses the DAG object to decide which tasks need to run, when, and where. The task state is retrieved from and updated in the database accordingly.
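The scheduler behavior described above (deciding which tasks are ready, then reading and writing task state in a database) can be sketched as a toy model. This is not Airflow's implementation: the `task_instance` table layout, the helper names, and the three-task pipeline are assumptions made for illustration, and the state list is a simplified subset of the states a successful task passes through. In a real deployment the executor and workers run the tasks, and states such as `failed` or `up_for_retry` also exist.

```python
import sqlite3

# Simplified subset of task states, in the order a successful task passes through them.
LIFECYCLE = ["scheduled", "queued", "running", "success"]

# Hypothetical pipeline: task -> list of upstream tasks.
deps = {"extract": [], "transform": ["extract"], "load": ["transform"]}


def make_db(deps):
    """Create an in-memory stand-in for the metadata database."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE task_instance (task_id TEXT PRIMARY KEY, state TEXT)")
    conn.executemany(
        "INSERT INTO task_instance VALUES (?, 'none')", [(task,) for task in deps]
    )
    return conn


def get_states(conn):
    """Read the current state of every task from the database."""
    return dict(conn.execute("SELECT task_id, state FROM task_instance"))


def scheduler_pass(conn, deps):
    """One loop iteration: find tasks whose upstream tasks all succeeded, then advance them."""
    states = get_states(conn)
    ready = [
        task
        for task, upstream in deps.items()
        if states[task] == "none" and all(states[u] == "success" for u in upstream)
    ]
    for task in ready:
        # The toy model walks straight through the lifecycle; a real task does actual work
        # between "running" and "success" and may fail or be retried.
        for state in LIFECYCLE:
            conn.execute(
                "UPDATE task_instance SET state = ? WHERE task_id = ?", (state, task)
            )
    return ready


conn = make_db(deps)
history = []
while ready := scheduler_pass(conn, deps):
    history.append(ready)

print(history)
print(get_states(conn))
```

Each pass only looks at the state stored in the database, which is why the database acts as the shared source of truth between components: any process that can read it can tell which tasks are done and which are still waiting.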

