Training Mamba Model With Huggingface Transformers From Scratch Issue

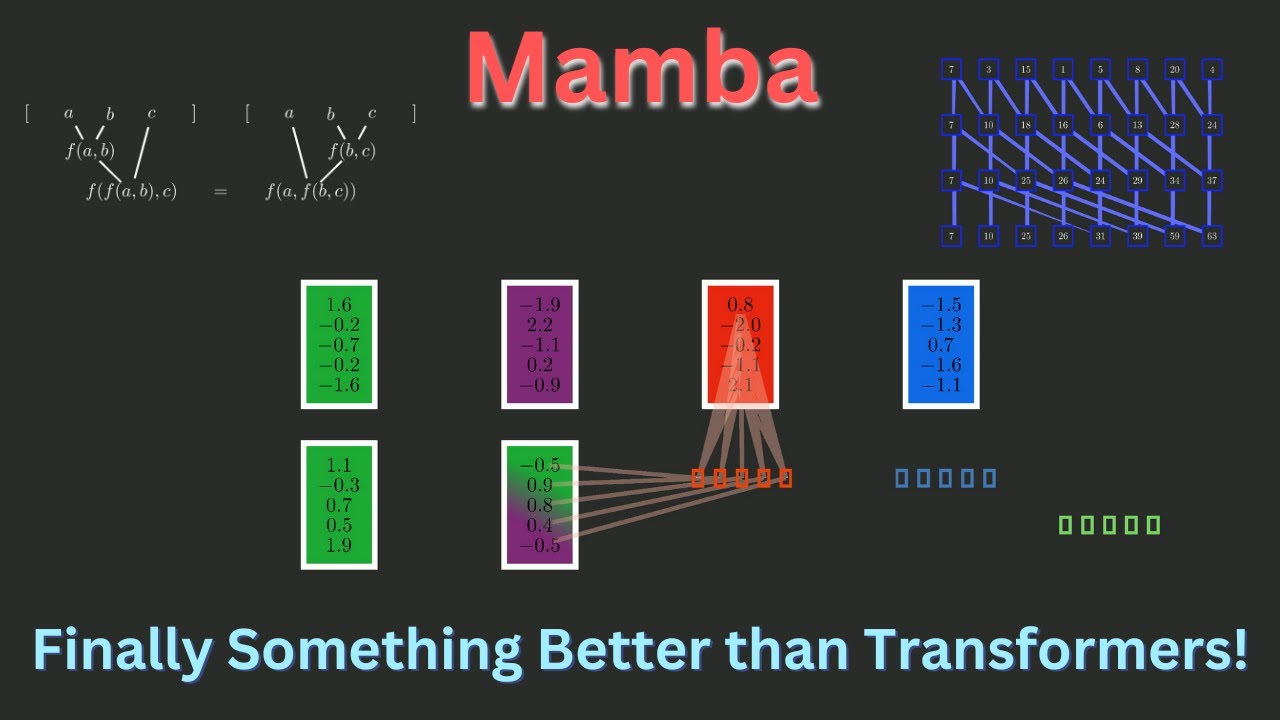

Mamba From Scratch Neural Nets Better And Faster Than Transformers

Hi everyone, I want to create a new Mamba model to use in my project for generating new sequences. I have my own dataset based on MIDI files, so I'm not keen on using a pretrained model, and I want to experiment with the number of parameters.

Mamba is a selective structured state space model (SSM) designed to work around the computational inefficiency of Transformers on long sequences. It is a completely attention-free architecture built from a single repeated unit, the Mamba block, which combines the H3 block with a gated MLP.
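To make the "selective state space" idea concrete, here is a minimal pure-Python sketch of the recurrence for a single channel. It is an illustration under stated assumptions, not the optimized kernel used by real implementations: the function name `selective_scan`, the diagonal state matrix `A`, and the simplified discretization (`exp(delta * A)` for the state, `delta * B` for the input) are choices made here for clarity. The "selective" part is that `delta`, `B`, and `C` change at every time step, since in Mamba they are computed from the input.

```python
import math

def selective_scan(x, delta, A, B, C, D=0.0):
    """Sequential selective-SSM recurrence for one channel (illustrative sketch).

    x:     list of T floats, the input sequence
    delta: list of T floats, per-step (input-dependent) step sizes
    A:     list of N floats, diagonal of the state matrix (typically negative)
    B, C:  lists of T lists of N floats, per-step input/output projections
    D:     float, skip connection from input to output
    """
    n = len(A)
    h = [0.0] * n                      # hidden state, fixed size regardless of T
    ys = []
    for t in range(len(x)):            # one pass over the sequence: O(T * N)
        for i in range(n):
            a_bar = math.exp(delta[t] * A[i])   # discretized state transition
            b_bar = delta[t] * B[t][i]          # simplified input discretization (assumption)
            h[i] = a_bar * h[i] + b_bar * x[t]
        ys.append(sum(C[t][i] * h[i] for i in range(n)) + D * x[t])
    return ys

# Example call with made-up numbers
x = [1.0, 0.5, -0.25]
delta = [0.1, 0.2, 0.1]
A = [-1.0, -2.0]
B = [[1.0, 0.5]] * 3
C = [[0.3, 0.7]] * 3
print(selective_scan(x, delta, A, B, C))
```

The state `h` has a fixed size, so the cost grows linearly with sequence length, in contrast to the pairwise comparisons of attention.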

Fine Tuning A Mamba Model Using Hugging Face Transformers

A step-by-step guide to training your own Transformer-based language model.

As we conclude our comprehensive code walkthrough of building Mamba from scratch, we’ve journeyed through the intricacies of its implementation, translating theory into practice.

In this paper, we aim to explore this question by developing a cross-architecture transfer paradigm that leverages existing Transformer-based pretrained models to facilitate the training of sub-quadratic models, such as Mamba, in a more computationally efficient and sustainable manner.

Discover how AI21 solved a critical vLLM state corruption bug in Mamba architectures: a deep dive into debugging vLLM's scheduler and memory management.

Mamba A New Approach That May Outperform Transformers

In this project guide, we will create a custom hybrid architecture by integrating insights from our previous work on Mamba and Transformer-based models.

This project focuses on implementing the Mamba model based on the research paper "Mamba: Linear-Time Sequence Modeling with Selective State Spaces". The Mamba architecture presents a linear-time alternative to Transformers, using selective state space models (SSMs) for efficient long-sequence modeling.

For this practical session, I want to see what sort of bang for our buck we can get with the smallest model, state-spaces/mamba-130m. The larger models in theory encode more information in their parameters, but they require a large GPU and are slower to train.

We decided to train our tokenizer from scratch with the Hugging Face tokenizers library to improve coverage of our training datasets and to have a larger vocabulary size.
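Model size and vocabulary size both feed directly into the parameter count, so a rough estimator helps when choosing a configuration before training from scratch. The sketch below is an assumption-laden back-of-the-envelope calculation, not an official API: the function name `mamba_param_count` is hypothetical, and the per-layer shapes (bias-free input and output projections, a depthwise convolution with bias, `dt_rank = ceil(d_model / 16)`, tied input and output embeddings) are assumed to follow the reference Mamba block layout. The 130M-style values in the example (vocabulary 50280, width 768, 24 layers) are likewise assumptions about that checkpoint.

```python
import math

def mamba_param_count(vocab_size, d_model, n_layer, d_state=16, d_conv=4,
                      expand=2, dt_rank=None, tie_embeddings=True):
    """Estimate the trainable parameters of a Mamba language model (sketch)."""
    d_inner = expand * d_model
    if dt_rank is None:
        dt_rank = math.ceil(d_model / 16)      # assumed default rule
    per_block = (
        d_model * 2 * d_inner                  # in_proj (no bias)
        + d_inner * d_conv + d_inner           # depthwise conv1d weight + bias
        + d_inner * (dt_rank + 2 * d_state)    # x_proj producing delta, B, C
        + dt_rank * d_inner + d_inner          # dt_proj weight + bias
        + d_inner * d_state                    # A_log
        + d_inner                              # D (skip connection)
        + d_inner * d_model                    # out_proj (no bias)
        + d_model                              # per-block norm weight
    )
    embedding = vocab_size * d_model
    lm_head = 0 if tie_embeddings else vocab_size * d_model
    final_norm = d_model
    return n_layer * per_block + embedding + lm_head + final_norm

# Assumed 130M-style configuration
print(mamba_param_count(vocab_size=50280, d_model=768, n_layer=24))

# Hypothetical small configuration for a compact symbolic-music vocabulary
print(mamba_param_count(vocab_size=512, d_model=256, n_layer=8))
```

With a small vocabulary, almost all parameters sit in the blocks, so `d_model` and `n_layer` are the main knobs. With a large vocabulary, the embedding table can account for a sizeable share of a small model.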

