TraceRL: RL for Diffusion LLMs

We propose TraceRL, a trajectory-aware reinforcement learning (RL) framework for diffusion language models (DLMs) that incorporates the preferred inference trajectory into post-training and is applicable across different architectures. According to the authors, it demonstrates the best performance among RL approaches for DLMs. We also introduce a diffusion-based value model that reduces variance and improves stability during optimization.

Based on their experimental results, the research team reports that TraceRL is effective across different RL tasks. They also demonstrated the advantages of TraceRL in ...

The core idea is to align the post-training objective of a DLM with its inference trajectory, so that the model is optimized on the same sequence of decoding steps it follows at inference time. The framework is used to derive the TraDo models, where TraDo-4B-Instruct outperforms 7B-scale autoregressive (AR) models on complex math reasoning tasks, with a reported 18.1% gain on MATH500.
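To make the notion of an inference trajectory concrete, the following is a minimal sketch of how a decoding trace could be recorded for a masked diffusion sampler. It is an illustration under simplifying assumptions, not the authors' implementation: the function names `record_trace` and `step_of_each_token` are hypothetical, and the per-position confidences are fixed up front, whereas a real sampler would recompute them with the model after every step.

```python
from typing import List


def record_trace(confidences: List[float], tokens_per_step: int) -> List[List[int]]:
    """Return a decoding trace: one list of unmasked positions per step.

    Toy stand-in for a masked diffusion sampler (hypothetical helper):
    at each step, the still-masked positions with the highest confidence
    are unmasked first.
    """
    if tokens_per_step < 1:
        raise ValueError("tokens_per_step must be at least 1")
    masked = set(range(len(confidences)))
    trace: List[List[int]] = []
    while masked:
        # Highest confidence first; ties are broken by position.
        ranked = sorted(masked, key=lambda i: (-confidences[i], i))
        chosen = sorted(ranked[:tokens_per_step])
        trace.append(chosen)
        masked.difference_update(chosen)
    return trace


def step_of_each_token(trace: List[List[int]], length: int) -> List[int]:
    """Map every position to the index of the step that unmasked it."""
    steps = [-1] * length
    for step_idx, positions in enumerate(trace):
        for pos in positions:
            steps[pos] = step_idx
    return steps


if __name__ == "__main__":
    conf = [0.9, 0.1, 0.8, 0.3, 0.5]
    trace = record_trace(conf, tokens_per_step=2)
    print(trace)
    print(step_of_each_token(trace, len(conf)))
```

A trajectory-aware objective would then score each token conditioned on the tokens unmasked at earlier steps of this trace, rather than under a randomly sampled mask.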

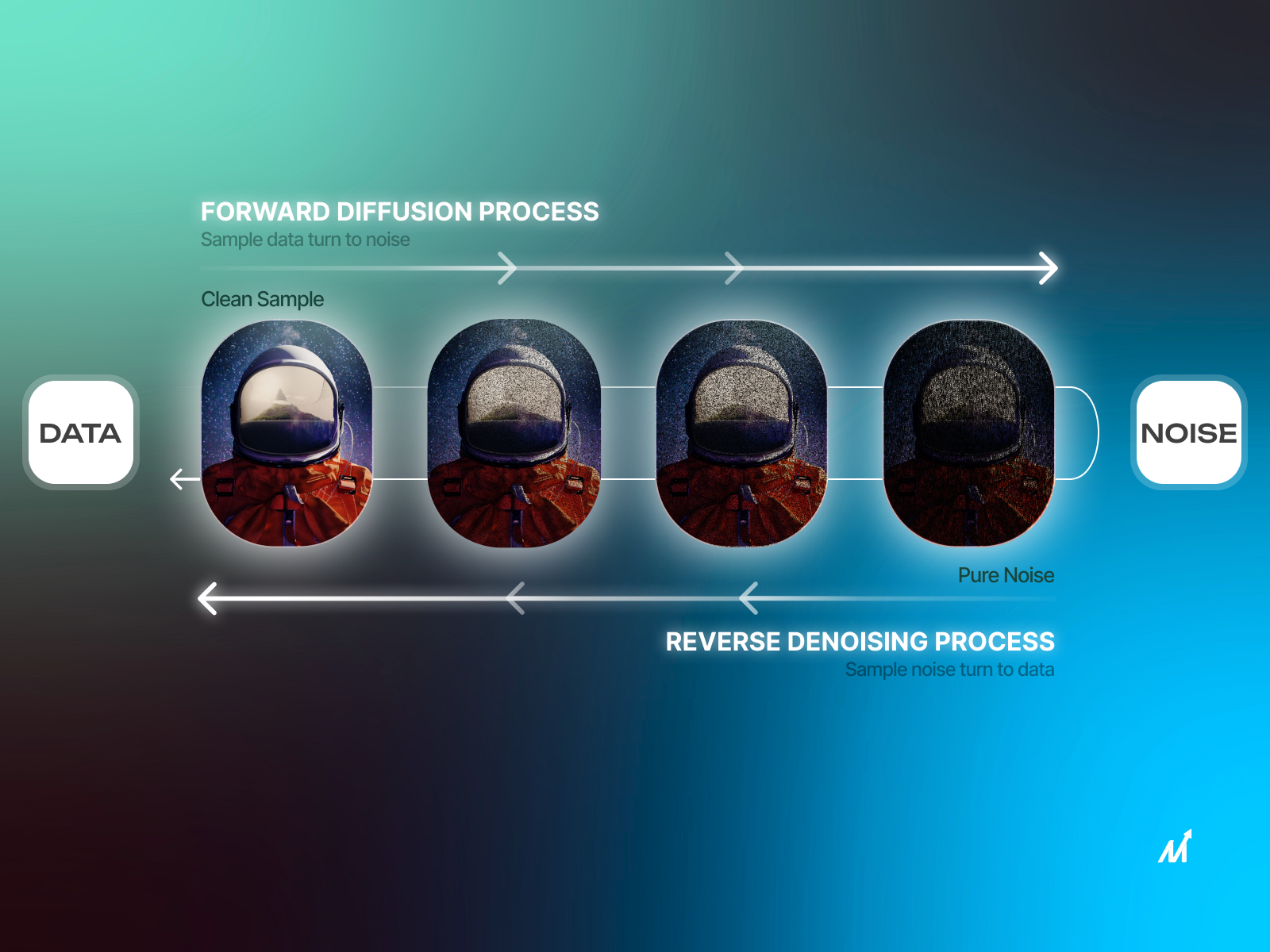

Reinforcement learning (RL) has been effective for post-training autoregressive (AR) language models, but extending these methods to diffusion language models (DLMs) is challenging because sequence-level likelihoods are intractable.

TraceRL addresses this by post-training and optimizing diffusion LLMs (DLMs) and masked diffusion LLMs (MDMs) on multi-step inference traces. It introduces grouped step optimization, a diffusion-based value model with step-wise generalized advantage estimation (GAE), and a PPO-like objective with KL control for stability. The method supports process-level and verifiable ...
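The step-wise GAE and the PPO-like objective with KL control can be sketched as follows. This is a generic illustration of the standard GAE recursion and the standard clipped surrogate, applied per decoding step; it is not taken from the TraceRL code. The function names, the default hyperparameters, and the simple log-ratio KL estimate against a reference policy are all assumptions made for the example.

```python
import math
from typing import List


def stepwise_gae(rewards: List[float], values: List[float],
                 gamma: float = 1.0, lam: float = 0.95) -> List[float]:
    """Generalized advantage estimation over the steps of one trace.

    rewards[t] and values[t] refer to decoding step t; the value after
    the final step is taken to be 0.
    """
    if len(rewards) != len(values):
        raise ValueError("rewards and values must have the same length")
    advantages = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        next_value = values[t + 1] if t + 1 < len(values) else 0.0
        delta = rewards[t] + gamma * next_value - values[t]  # TD residual
        running = delta + gamma * lam * running
        advantages[t] = running
    return advantages


def clipped_objective_with_kl(logp_new: List[float], logp_old: List[float],
                              logp_ref: List[float], advantages: List[float],
                              clip_eps: float = 0.2, kl_coef: float = 0.01) -> float:
    """Mean PPO-style clipped surrogate minus a KL penalty (to be maximized).

    The KL term uses the plain log-ratio estimate logp_new - logp_ref,
    which is an assumption of this sketch.
    """
    if not advantages:
        raise ValueError("need at least one step")
    total = 0.0
    for ln, lo, lr, adv in zip(logp_new, logp_old, logp_ref, advantages):
        ratio = math.exp(ln - lo)
        clipped = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
        surrogate = min(ratio * adv, clipped * adv)
        total += surrogate - kl_coef * (ln - lr)
    return total / len(advantages)


if __name__ == "__main__":
    adv = stepwise_gae([0.0, 0.0, 1.0], [0.5, 0.5, 0.5], gamma=1.0, lam=1.0)
    print(adv)
    print(clipped_objective_with_kl([-1.0] * 3, [-1.0] * 3, [-1.0] * 3, adv))
```

In this sketch a verifiable reward arrives only at the last step, and the value estimates act as a per-step baseline; with `lam=0.0` only the final step receives a non-zero advantage, while `lam=1.0` propagates it back to every step.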

