Tiling With Shared Memory | GPU Programming | Episode 7

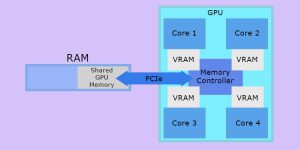

What Is Shared GPU Memory? How Is It Different From Dedicated VRAM?

Tiling with Shared Memory | GPU Programming | Episode 7, a video by Simon Oz.

Tiling and Shared Memory Kernel (scientific diagram)

The code initializes shared memory, computes the row and column of the output element, loads the tiles, and synchronizes so that every thread in the block has finished loading before any thread starts computing. In the end, the tiled matrix multiplication algorithm performs significantly better than the standard algorithm, and the CPU version is slower still, which shows the advantage of the GPU.

Optimizing CUDA matrix multiplication using tiling and shared memory, with detailed explanations of memory access patterns and performance improvements.

Hi, I'm studying the SGEMM algorithm on CUDA, but I couldn't figure out how the shared memory bandwidth bottleneck is alleviated. I tried to calculate the tile size needed to achieve the theoretical peak floating-point performance (ignoring memory latency and looking only at bandwidth).
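The kernel steps described above (initialize shared memory, compute the output row and column, load tiles, synchronize) can be sketched roughly as follows. This is a minimal illustration, not the code from the video or the cited pages. It assumes square row-major `n x n` matrices and a tile width of 16. The names `TILE`, `matmulTiled`, and `tiledMatmulMaxError` are hypothetical, and CUDA error checking is omitted for brevity.

```cuda
#include <cmath>
#include <vector>
#include <cuda_runtime.h>

constexpr int TILE = 16;  // assumed tile width; each block must be TILE x TILE threads

// Computes C = A * B for row-major n x n matrices (illustrative sketch).
__global__ void matmulTiled(const float* A, const float* B, float* C, int n) {
    // Shared memory: one tile of A and one tile of B per thread block.
    __shared__ float As[TILE][TILE];
    __shared__ float Bs[TILE][TILE];

    // Row and column of the output element owned by this thread.
    int row = blockIdx.y * TILE + threadIdx.y;
    int col = blockIdx.x * TILE + threadIdx.x;
    float acc = 0.0f;

    int numTiles = (n + TILE - 1) / TILE;
    for (int t = 0; t < numTiles; ++t) {
        int aCol = t * TILE + threadIdx.x;
        int bRow = t * TILE + threadIdx.y;
        // Cooperative load: each thread fetches one element of each tile.
        // Out-of-range entries are padded with zero so any n works.
        As[threadIdx.y][threadIdx.x] = (row < n && aCol < n) ? A[row * n + aCol] : 0.0f;
        Bs[threadIdx.y][threadIdx.x] = (bRow < n && col < n) ? B[bRow * n + col] : 0.0f;
        __syncthreads();  // both tiles are fully loaded before anyone reads them

        for (int k = 0; k < TILE; ++k)
            acc += As[threadIdx.y][k] * Bs[k][threadIdx.x];
        __syncthreads();  // everyone is done reading before the tiles are overwritten
    }
    if (row < n && col < n) C[row * n + col] = acc;
}

// Hypothetical host helper: runs the kernel on small test data and returns
// the maximum absolute difference from a plain CPU triple loop.
float tiledMatmulMaxError(int n) {
    std::vector<float> hA(n * n), hB(n * n), hC(n * n);
    for (int i = 0; i < n * n; ++i) {
        hA[i] = 0.5f * (i % 7);
        hB[i] = static_cast<float>(i % 5) - 2.0f;
    }
    size_t bytes = hA.size() * sizeof(float);
    float *dA, *dB, *dC;
    cudaMalloc(&dA, bytes);
    cudaMalloc(&dB, bytes);
    cudaMalloc(&dC, bytes);
    cudaMemcpy(dA, hA.data(), bytes, cudaMemcpyHostToDevice);
    cudaMemcpy(dB, hB.data(), bytes, cudaMemcpyHostToDevice);

    dim3 block(TILE, TILE);
    dim3 grid((n + TILE - 1) / TILE, (n + TILE - 1) / TILE);
    matmulTiled<<<grid, block>>>(dA, dB, dC, n);
    cudaMemcpy(hC.data(), dC, bytes, cudaMemcpyDeviceToHost);  // waits for the kernel
    cudaFree(dA);
    cudaFree(dB);
    cudaFree(dC);

    float maxErr = 0.0f;
    for (int i = 0; i < n; ++i)
        for (int j = 0; j < n; ++j) {
            float ref = 0.0f;
            for (int k = 0; k < n; ++k) ref += hA[i * n + k] * hB[k * n + j];
            maxErr = std::fmax(maxErr, std::fabs(ref - hC[i * n + j]));
        }
    return maxErr;
}
```

Calling `tiledMatmulMaxError(100)` from a `main` function and compiling with `nvcc` exercises the boundary padding, since 100 is not a multiple of 16. The second `__syncthreads()` matters as much as the first: without it, a fast thread could start loading the next tile while a slower one is still reading the current one.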

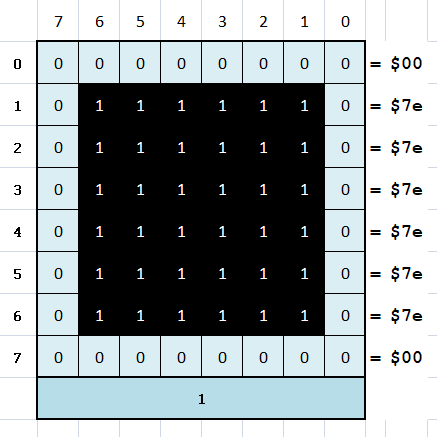

Tiling Basics: Retroprogramming by Spotlessmind1975

Tiling splits the computation into small tiles that fit in shared memory. Instead of each thread independently reading a full row and column from global memory, threads in a block cooperatively load a tile of A and a tile of B into shared memory, compute a partial result, then move to the next tile.

I'm trying to familiarize myself with CUDA programming, and having a pretty fun time of it. I'm currently looking at this PDF which deals with matrix multiplication, done with and without shared memory.

Implemented a naive and a shared-memory tiled blur kernel, validated correctness against a CPU reference, and measured performance on an NVIDIA T4. For larger kernels (7×7), shared memory delivered a meaningful speedup through better data reuse.

This page documents the tiling and shared memory strategy employed by the SGEMM optimized kernel to improve memory locality and reduce global memory traffic. It covers tile dimension selection, shared memory allocation, and cooperative loading patterns.
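As a rough back-of-the-envelope illustration of why tiling reduces global memory traffic, consider the following count. It assumes square $N \times N$ matrices, square $T \times T$ tiles with $T$ dividing $N$, 4-byte floats, and no help from hardware caches. The symbols $N$ and $T$ are introduced here for illustration and are not taken from the pages quoted above.

$$
\begin{aligned}
\text{naive global loads} &= N^2 \cdot 2N = 2N^3,\\
\text{tiled global loads} &= \left(\frac{N}{T}\right)^{2} \cdot \frac{N}{T} \cdot 2T^2 = \frac{2N^3}{T},\\
\text{arithmetic intensity (tiled)} &= \frac{2N^3\ \text{FLOP}}{4 \cdot 2N^3 / T\ \text{bytes}} = \frac{T}{4}\ \text{FLOP/byte}.
\end{aligned}
$$

Under these assumptions a $16 \times 16$ tile cuts global loads by a factor of 16 and yields about 4 FLOP per byte of global traffic. This step alone does not change shared memory traffic: each multiply-add in the inner loop still reads two values from shared memory. One common way to reduce that, not described in the snippets above, is to have each thread accumulate a small block of outputs in registers so that every value read from shared memory is reused several times.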

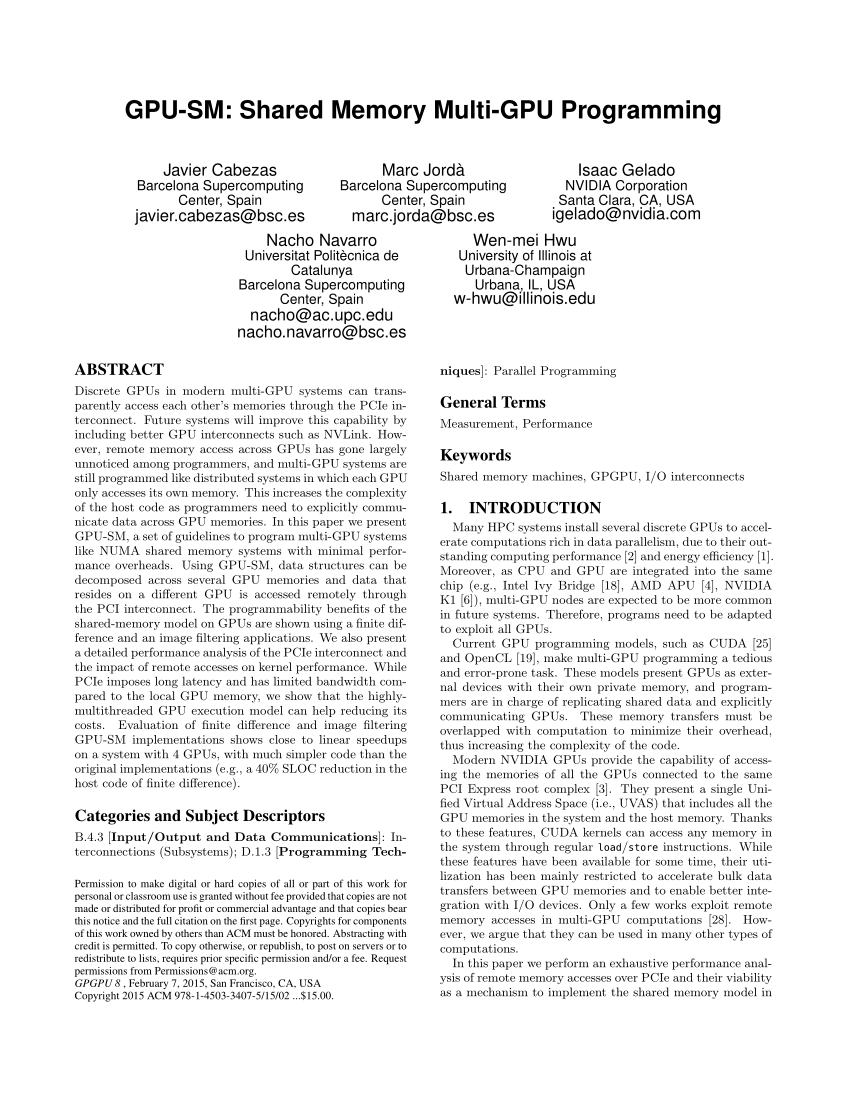

PDF: GPU SM Shared Memory, Multi GPU Programming
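For the shared-memory tiled blur mentioned earlier, a kernel might look roughly like the sketch below. The source does not show its code, so several things here are assumptions: a 7×7 box filter (uniform weights), clamp-to-edge handling at image borders, a single-channel float image, and 16×16 thread blocks. The names `BLOCK`, `RADIUS`, `SPAN`, `boxBlurTiled`, and `tiledBlurMaxError` are hypothetical, and error checking is omitted.

```cuda
#include <algorithm>
#include <cmath>
#include <vector>
#include <cuda_runtime.h>

constexpr int BLOCK = 16;                 // assumed block side; launch BLOCK x BLOCK threads
constexpr int RADIUS = 3;                 // 7x7 window
constexpr int SPAN = BLOCK + 2 * RADIUS;  // tile side including the halo

__global__ void boxBlurTiled(const float* in, float* out, int w, int h) {
    __shared__ float tile[SPAN][SPAN];

    // Global coordinates of the tile's top-left corner, halo included.
    int x0 = blockIdx.x * BLOCK - RADIUS;
    int y0 = blockIdx.y * BLOCK - RADIUS;

    // Cooperative load: the tile has more cells than the block has threads,
    // so each thread strides over the tile and loads several cells.
    int tid = threadIdx.y * BLOCK + threadIdx.x;
    for (int i = tid; i < SPAN * SPAN; i += BLOCK * BLOCK) {
        int ty = i / SPAN, tx = i % SPAN;
        int gx = min(max(x0 + tx, 0), w - 1);  // clamp to the image edge
        int gy = min(max(y0 + ty, 0), h - 1);
        tile[ty][tx] = in[gy * w + gx];
    }
    __syncthreads();  // the whole tile is loaded before any thread reads it

    int x = blockIdx.x * BLOCK + threadIdx.x;
    int y = blockIdx.y * BLOCK + threadIdx.y;
    if (x >= w || y >= h) return;

    // Each input pixel in the tile is reused by up to 49 neighbouring threads.
    float sum = 0.0f;
    for (int dy = 0; dy <= 2 * RADIUS; ++dy)
        for (int dx = 0; dx <= 2 * RADIUS; ++dx)
            sum += tile[threadIdx.y + dy][threadIdx.x + dx];
    out[y * w + x] = sum / ((2 * RADIUS + 1) * (2 * RADIUS + 1));
}

// Hypothetical host helper: returns the maximum absolute difference
// between the GPU result and a straightforward CPU reference.
float tiledBlurMaxError(int w, int h) {
    std::vector<float> hIn(w * h), hOut(w * h);
    for (int i = 0; i < w * h; ++i) hIn[i] = 0.25f * (i % 13);

    size_t bytes = hIn.size() * sizeof(float);
    float *dIn, *dOut;
    cudaMalloc(&dIn, bytes);
    cudaMalloc(&dOut, bytes);
    cudaMemcpy(dIn, hIn.data(), bytes, cudaMemcpyHostToDevice);

    dim3 block(BLOCK, BLOCK);
    dim3 grid((w + BLOCK - 1) / BLOCK, (h + BLOCK - 1) / BLOCK);
    boxBlurTiled<<<grid, block>>>(dIn, dOut, w, h);
    cudaMemcpy(hOut.data(), dOut, bytes, cudaMemcpyDeviceToHost);
    cudaFree(dIn);
    cudaFree(dOut);

    float maxErr = 0.0f;
    for (int y = 0; y < h; ++y)
        for (int x = 0; x < w; ++x) {
            float sum = 0.0f;
            for (int dy = -RADIUS; dy <= RADIUS; ++dy)
                for (int dx = -RADIUS; dx <= RADIUS; ++dx) {
                    int gx = std::min(std::max(x + dx, 0), w - 1);
                    int gy = std::min(std::max(y + dy, 0), h - 1);
                    sum += hIn[gy * w + gx];
                }
            float ref = sum / ((2 * RADIUS + 1) * (2 * RADIUS + 1));
            maxErr = std::fmax(maxErr, std::fabs(ref - hOut[y * w + x]));
        }
    return maxErr;
}
```

The halo is what distinguishes this from the matrix multiplication case: a 16×16 block of outputs needs a 22×22 patch of inputs, so the load loop cannot simply map one thread to one cell. The out-of-bounds threads return only after `__syncthreads()`, so every thread in the block reaches the barrier.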
