The Differences Between L1 And L2

When working with high-dimensional data, regularization is especially crucial because it lowers the likelihood of overfitting and keeps the model from becoming overly complicated. In this post, we'll look at regularization and the differences between L1 and L2 regularization. It turns out they have different but equally useful properties. From a practical standpoint, L1 tends to shrink some coefficients all the way to zero, whereas L2 tends to shrink all coefficients toward zero more evenly, without setting them exactly to zero. L1 is therefore useful for feature selection, as we can drop any variables whose coefficients go to zero.
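The contrast between the two kinds of shrinkage can be sketched in a deliberately simplified setting. The snippet below is only an illustration under stated assumptions: each coefficient is treated independently (as it would be with orthonormal features), `z` stands for the unregularized estimate, `alpha` is a hypothetical name for the penalty strength, and the numbers are made up.

```python
# Toy setting (an assumption for illustration): one coefficient at a time,
# with z the unregularized estimate and alpha >= 0 the penalty strength.

def l1_shrink(z, alpha):
    """Minimizer of 0.5 * (b - z)**2 + alpha * abs(b) (soft-thresholding)."""
    if z > alpha:
        return z - alpha
    if z < -alpha:
        return z + alpha
    return 0.0  # small coefficients are set exactly to zero


def l2_shrink(z, alpha):
    """Minimizer of 0.5 * (b - z)**2 + 0.5 * alpha * b**2."""
    return z / (1.0 + alpha)  # every coefficient is scaled by the same factor


unregularized = [3.0, 1.0, 0.2, -0.5]  # made-up coefficients
alpha = 1.0

l1_coefs = [l1_shrink(z, alpha) for z in unregularized]
l2_coefs = [l2_shrink(z, alpha) for z in unregularized]

print("L1:", l1_coefs)
print("L2:", l2_coefs)
```

In this sketch the L1 rule zeroes out every coefficient whose magnitude is at most `alpha`, while the L2 rule keeps all of them but makes each one smaller.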

If your dataset has thousands of features, many of which may be irrelevant, L1 helps you reduce the number of features. If features are correlated, L2 may give more stable, better predictions. In this article, we will focus on two regularization techniques, L1 and L2, explain their differences, and show how to apply them in Python. What is regularization and why is it important? In both L1 and L2 regularization, increasing the regularization parameter (α ≥ 0) causes the L1 norm or L2 norm of the coefficients to decrease. With L1, this forces some of the regression coefficients to exactly zero, which is why L1 models are used for feature selection and dimensionality reduction; with L2, the coefficients shrink toward zero but generally stay nonzero. In deep learning, the choice between L1 and L2 regularization (or their combination) depends on the specific problem, the data's characteristics, and the model's desired behaviour.
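One way to see why L2 can behave more stably with correlated features is an extreme toy case: two features that are exact copies of each other. The sketch below is an assumption-laden illustration, not a fitting routine; the data, the `alpha` value, and the function names are all hypothetical. It compares the penalized objective for three ways of splitting the same total weight between the two copies.

```python
# Extreme toy case (an assumption): feature 2 is an exact copy of feature 1,
# so the prediction depends only on b1 + b2.

def squared_error(b1, b2, xs, ys):
    return 0.5 * sum((y - (b1 + b2) * x) ** 2 for x, y in zip(xs, ys))


def l1_objective(b1, b2, xs, ys, alpha):
    return squared_error(b1, b2, xs, ys) + alpha * (abs(b1) + abs(b2))


def l2_objective(b1, b2, xs, ys, alpha):
    return squared_error(b1, b2, xs, ys) + 0.5 * alpha * (b1 ** 2 + b2 ** 2)


xs = [1.0, 2.0, 3.0]  # made-up feature values
ys = [2.0, 4.0, 6.0]  # made-up targets
alpha = 1.0

# Three ways to split the same total weight of 1.5 between the two copies.
splits = [(1.5, 0.0), (0.75, 0.75), (0.0, 1.5)]

l1_values = [l1_objective(b1, b2, xs, ys, alpha) for b1, b2 in splits]
l2_values = [l2_objective(b1, b2, xs, ys, alpha) for b1, b2 in splits]

print("L1 objective per split:", l1_values)
print("L2 objective per split:", l2_values)
```

Under the L1 penalty all three splits score the same, so nothing in the objective favors one copy over the other. Under the L2 penalty the even split scores strictly lower, which gives the model a single preferred way to share weight between correlated features.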

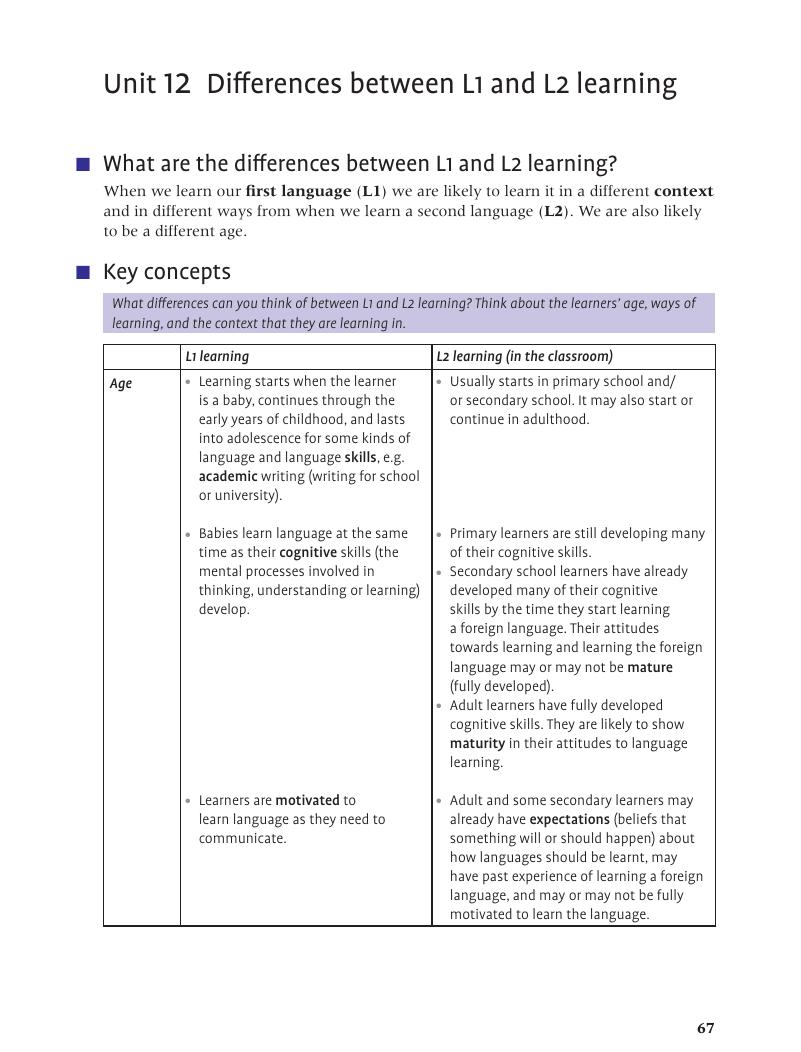

Two commonly used regularization techniques in sparse modeling are the L1 norm and the L2 norm, which penalize the size of the model's coefficients and encourage sparsity or smoothness, respectively. L1 regularization is also known as lasso and L2 regularization as ridge, and both help prevent overfitting.
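The combination of the two penalties mentioned earlier is commonly called elastic net. As a rough sketch, again assuming each coefficient can be treated independently, the combined penalty applies a soft threshold first and a uniform rescaling second. The names `alpha` and `l1_ratio` are illustrative choices here (they mirror the parameter names used by scikit-learn's `ElasticNet`), and the coefficients are made up.

```python
def elastic_net_shrink(z, alpha, l1_ratio):
    """Minimizer of
    0.5 * (b - z)**2 + alpha * (l1_ratio * abs(b) + 0.5 * (1 - l1_ratio) * b**2).
    """
    threshold = alpha * l1_ratio
    # L1 part: soft-threshold the unregularized estimate z.
    if z > threshold:
        soft = z - threshold
    elif z < -threshold:
        soft = z + threshold
    else:
        soft = 0.0
    # L2 part: rescale whatever survives the threshold.
    return soft / (1.0 + alpha * (1.0 - l1_ratio))


unregularized = [3.0, 1.0, 0.2, -0.5]  # made-up coefficients
alpha = 1.0

results = {}
for l1_ratio in (0.0, 0.5, 1.0):
    results[l1_ratio] = [elastic_net_shrink(z, alpha, l1_ratio) for z in unregularized]
    print(f"l1_ratio={l1_ratio}:", results[l1_ratio])
```

With `l1_ratio=1.0` the rule reduces to pure L1 shrinkage and with `l1_ratio=0.0` to pure L2 shrinkage; values in between zero out the smallest coefficients while also shrinking the rest.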
