Temporal Consistency Stable Diffusion Img2img

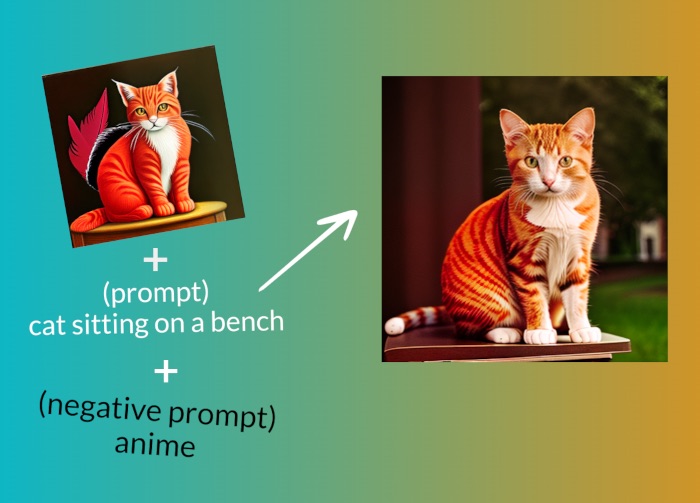

Achieving temporal consistency in AI-generated animations is difficult, and there is not yet a definitive, production-ready solution. However, several strategies can be combined to create a reasonably consistent animation.

Another test demonstrating my temporal consistency method with talking subjects using Stable Diffusion. Largely adapted from @thejabthejab and @bunnetsong .b.

This approach builds upon the pioneering work of EbSynth, a computer program designed for painting videos, and leverages Stable Diffusion's img2img module to enhance the results.

In this paper, we propose an elegant yet effective temporal-consistent video editing (TCVE) method to mitigate the temporal inconsistency challenge for robust text-guided video editing.

Diffusers' ethical guidelines evaluating diffusion models. We're on a journey to advance and democratize artificial intelligence through open source and open science.

The img2img and ControlNet input image dimensions are automatically resized to be divisible by 8, so if the inlaid tiles' dimensions aren't also divisible by 8, the tiles will warp and shift slightly out of their given area during the resize.
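To make the divisible-by-8 constraint concrete, here is a minimal sketch in plain Python. It assumes the automatic resize rounds each dimension down to the nearest multiple of 8; the source does not say which direction the rounding goes, so treat that as an assumption. The helper names `snap_down`, `is_aligned`, and `resize_scale` are hypothetical and do not belong to any Stable Diffusion or ControlNet API.

```python
def snap_down(value: int, multiple: int = 8) -> int:
    """Round a dimension down to a multiple (assumed rounding direction)."""
    return max(multiple, (value // multiple) * multiple)


def is_aligned(box: tuple, multiple: int = 8) -> bool:
    """True when every coordinate of a (left, top, right, bottom) tile box
    is a multiple of `multiple`."""
    return all(v % multiple == 0 for v in box)


def resize_scale(size: tuple, multiple: int = 8) -> tuple:
    """Scale factors (x, y) that the assumed resize applies to an image.
    A factor other than 1.0 means inlaid tiles get stretched or shifted."""
    width, height = size
    return snap_down(width, multiple) / width, snap_down(height, multiple) / height


if __name__ == "__main__":
    # A 515-pixel-wide sheet is not divisible by 8, so it would be rescaled.
    print(snap_down(515), resize_scale((515, 512)))
    # A tile box whose edges sit on multiples of 8 is unaffected.
    print(is_aligned((0, 0, 256, 256)), is_aligned((0, 0, 250, 256)))
```

Checking tile boxes with something like `is_aligned` before building the input sheet is one way to avoid the warping described above.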

Only a month ago, ControlNet revolutionized the AI image generation landscape with its groundbreaking control mechanisms for spatial consistency in Stable Diffusion images, paving the way.

In this article, we will explore two tricks that can greatly enhance the consistency and smoothness of Stable Diffusion animations. These tricks involve deleting half of the frames and using specific settings in Stable Diffusion.

This video-to-video method converts a video to a series of images and then uses Stable Diffusion img2img with ControlNet to transform each frame.

Learn how temporal consistency works, why it matters, and how techniques like optical flow, latent reuse, and diffusion-based models (including Stable Diffusion) maintain smooth, high-quality motion in both guided and blind video generation.
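The frame-deletion trick mentioned above can be sketched in a few lines of plain Python. The source does not say which half of the frames is deleted, so this sketch assumes every second frame is dropped and that the frame rate is halved to keep the clip's duration unchanged. The function name `drop_alternate_frames` and the file names are hypothetical.

```python
def drop_alternate_frames(frames: list, fps: float) -> tuple:
    """Keep frames 0, 2, 4, ... and halve the frame rate.

    Assumption: "deleting half of the frames" means removing every second
    frame; halving fps keeps the playback duration roughly the same.
    """
    kept = frames[::2]
    return kept, fps / 2


if __name__ == "__main__":
    # Hypothetical frame file names extracted from a 30 fps video.
    frames = [f"frame_{i:04d}.png" for i in range(6)]
    kept, new_fps = drop_alternate_frames(frames, fps=30)
    print(kept, new_fps)
```

Fewer frames means fewer independent img2img passes, which gives frame-to-frame flicker fewer chances to appear; the remaining frames can then be stylized one by one.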
