Tampere University Low Latency Edge Cloud Offloading Software Stack Aisa

To address this, CPC also collaborates with Ultra Video Group in researching low-latency compression methods suitable for edge offloading, aimed at computer-vision-only situations. The AISA (AI-based Situational Awareness) project strengthens the ability of Finnish industrial companies and research institutes to apply these technologies at the forefront.

Figure 2 From An Edge Cloud Collaborative Task Offloading Model With

Instead of performing heavy computation on a “local device”, can we implement a peer-to-peer compute resource marketplace? Meet you at the TAU demo booth! While reducing the complexity of such codecs is an active research area, computationally restricted devices can still lack the resources to encode the input with sufficiently low latency. To address these problems, we propose PoCL-R, a computing runtime that acts as a scalable, low-latency hardware abstraction layer for distributed heterogeneous compute devices and aims to answer these unique challenges. A novel hybrid hyper-heuristic algorithm has been developed to address large-scale task allocation challenges in heterogeneous edge-cloud environments, enabling flexible allocation of computational resources and performance optimization.

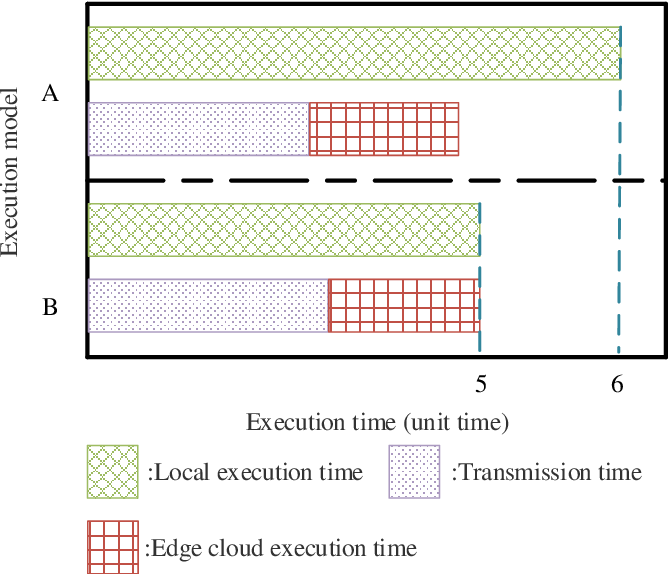

Cloud Architecture And Edge Computing Enhancing Latency And

This paper focuses on optimizing network resource allocation and reducing end-to-end (E2E) latency through the strategic decision of whether and where to offload user requests in a cloud-edge elastic optical network (CE-EON). Offloading the most demanding parts of applications to an edge GPU server cluster to save power or improve the result quality is a solution that becomes increasingly realistic with new. In this paper, we propose a two-stage task offloading framework with edge-cloud collaboration to assist end devices in processing latency-sensitive tasks either on the edge servers or in the cloud center. Edge computing and artificial intelligence (AI) algorithms integrate to decrease latency, increase mission efficiency, and conserve onboard resources. This system dynamically assigns.
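The "whether and where to offload" decision mentioned above can be illustrated with a minimal sketch. It is not the method of any of the works quoted here: it assumes a simple latency model (round-trip time plus upload time plus compute time), and every name in it (`Site`, `Task`, `estimated_latency`, `choose_site`) and every number is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    """A place where a task can run (hypothetical model)."""
    name: str
    compute_rate: float  # operations per second
    uplink_bps: float    # bits per second; float("inf") for the local device
    rtt_s: float         # network round-trip time in seconds


@dataclass(frozen=True)
class Task:
    ops: float         # amount of computation, in operations
    input_bits: float  # size of the input that must be uploaded


def estimated_latency(task: Task, site: Site) -> float:
    """Assumed model: round trip + input upload + compute time."""
    transfer = task.input_bits / site.uplink_bps  # 0.0 when uplink is infinite
    compute = task.ops / site.compute_rate
    return site.rtt_s + transfer + compute


def choose_site(task: Task, local: Site, remotes: list[Site]) -> Site:
    """Pick the best remote site ("where"), then offload only if it beats
    local execution ("whether")."""
    best_remote = min(remotes, key=lambda s: estimated_latency(task, s))
    if estimated_latency(task, local) <= estimated_latency(task, best_remote):
        return local
    return best_remote


# Illustrative numbers only, not measurements.
device = Site("device", compute_rate=1e9, uplink_bps=float("inf"), rtt_s=0.0)
edge = Site("edge", compute_rate=2e10, uplink_bps=1e8, rtt_s=0.005)
cloud = Site("cloud", compute_rate=1e11, uplink_bps=2e7, rtt_s=0.05)

light = Task(ops=1e7, input_bits=8e6)
heavy = Task(ops=5e9, input_bits=8e6)
huge = Task(ops=1e12, input_bits=8e5)

for task in (light, heavy, huge):
    print(choose_site(task, device, [edge, cloud]).name)
```

With these assumed figures, the light task stays on the device because the upload alone costs more than running it locally, the heavy task goes to the edge server, and the very large task goes to the cloud, where the higher compute rate outweighs the slower link. A real system would also have to account for queueing, energy, and changing network conditions, which this sketch ignores.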
