Table II from Trust AI Regulation Discerning Users Are Vital To Build

Paper Review: Trust AI Regulation Discerning Users Are Vital To Build Trust

Discerning users, namely those whose trust in the regulatory system is conditional on its perceived effectiveness, are essential for stable, long-lasting trust in the system, in which creators comply with regulations and regulators continue to enforce them. We show that creating trustworthy AI and user trust requires regulators to be incentivised to regulate effectively. We demonstrate the effectiveness of two mechanisms that can achieve this. The first is where governments can recognise and reward regulators that do a good job.

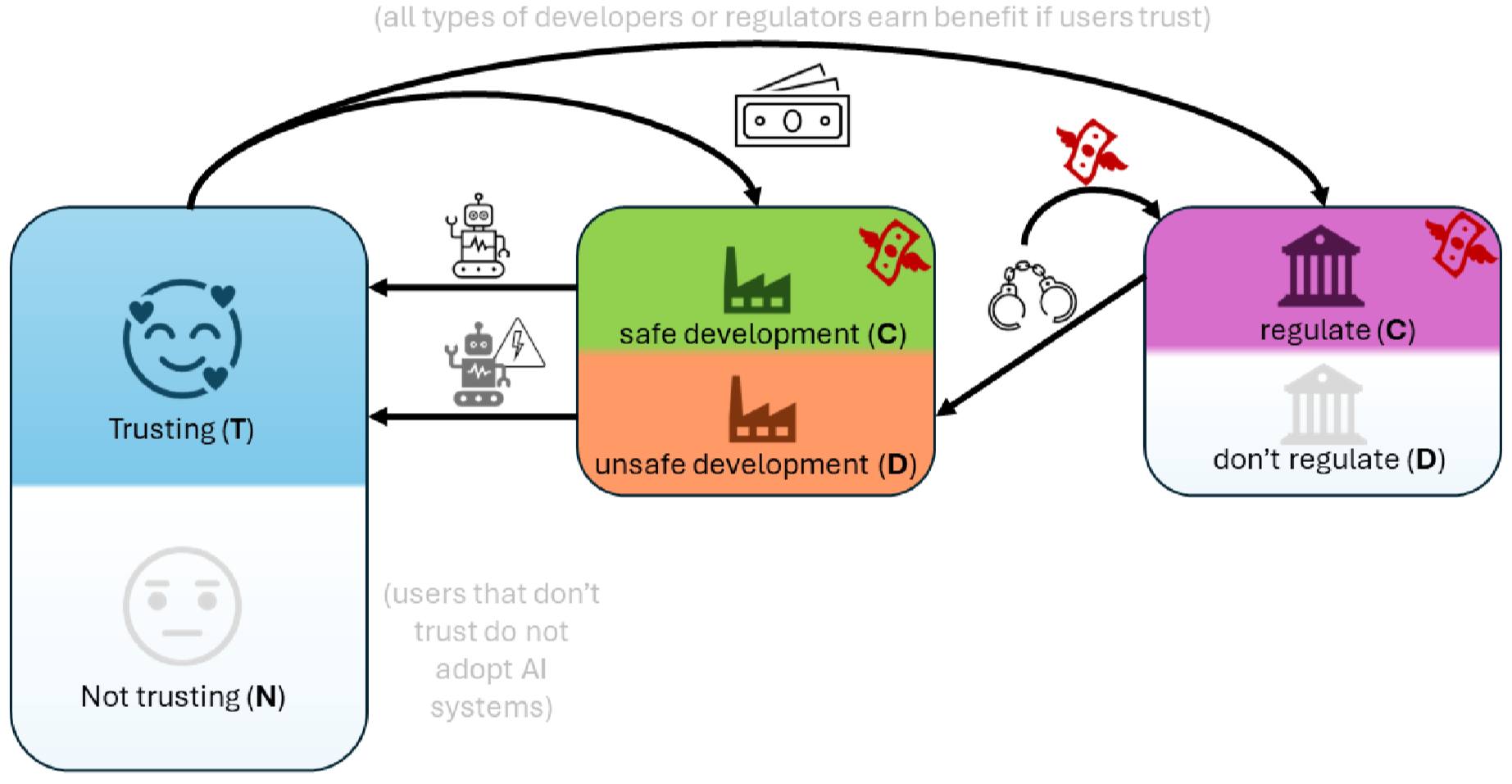

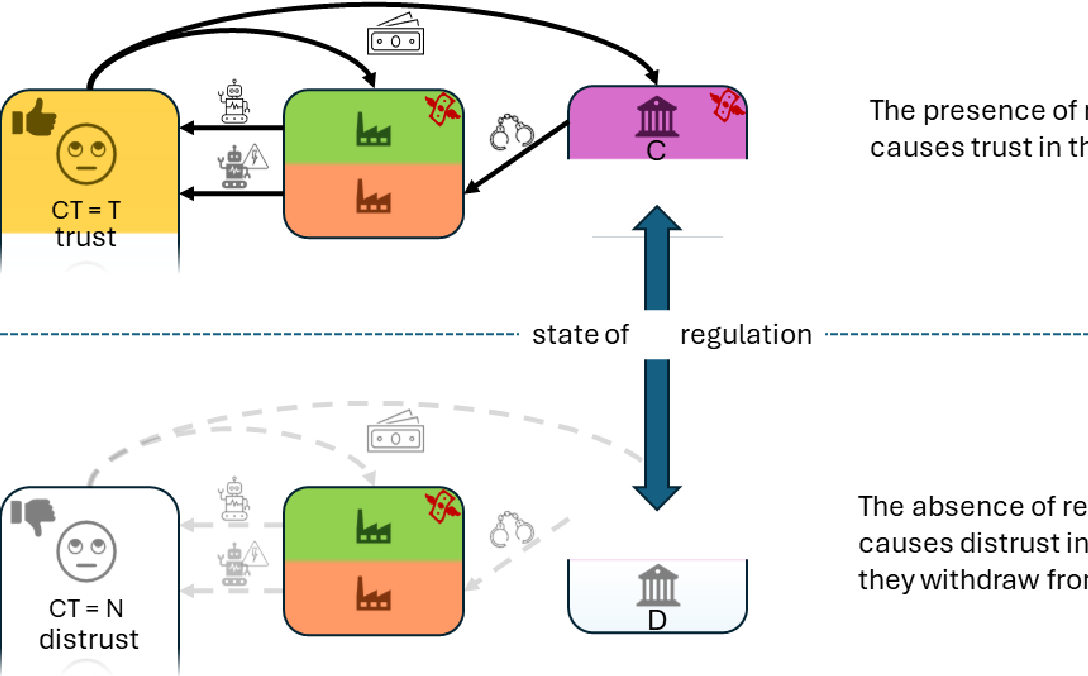

PDF: Trust AI Regulation Discerning Users Are Vital To Build Trust

The research explores two key mechanisms for achieving responsible governance, safe AI development, and adoption of safe AI: incentivising effective regulation through media reporting, and conditioning user trust on commentariats' recommendations. There is general agreement that some form of regulation is necessary both for AI creators to be incentivised to develop trustworthy systems and for users to actually trust those systems. Here, we propose that evolutionary game theory can be used to quantitatively model the dilemmas faced by users, AI creators, and regulators, and to provide insights into the possible effects of different regulatory regimes.
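To make the evolutionary game theory framing concrete, here is a minimal sketch of replicator dynamics over three populations (users, AI creators, regulators), each choosing between two strategies. It is not the paper's model: the `Payoffs` fields, their default values, and the payoff-difference formulas in `step` are all hypothetical assumptions chosen only to illustrate how regulator incentives can propagate to creator compliance and user trust.

```python
from dataclasses import dataclass


@dataclass
class Payoffs:
    # All values are hypothetical placeholders, not taken from the paper.
    user_benefit: float = 1.0     # gain to a trusting user when the creator complies
    user_risk: float = 2.0        # loss to a trusting user from an unsafe, unchecked system
    compliance_cost: float = 0.5  # cost to a creator of building a safe system
    fine: float = 2.0             # penalty an effective regulator imposes on unsafe creators
    regulation_cost: float = 0.5  # cost to a regulator of regulating effectively
    reward: float = 1.0           # government reward for effective regulation


def clamp(v: float) -> float:
    """Keep a population fraction inside [0, 1] despite rounding."""
    return min(1.0, max(0.0, v))


def step(x: float, y: float, z: float, p: Payoffs, dt: float = 0.01):
    """One Euler step of two-strategy replicator dynamics per population.

    x: fraction of users who trust
    y: fraction of creators who comply
    z: fraction of regulators who regulate effectively
    """
    # Assumed payoff advantages of trust / comply / regulate over the alternative.
    gain_trust = y * p.user_benefit - (1 - y) * (1 - z) * p.user_risk
    gain_comply = z * p.fine - p.compliance_cost
    gain_regulate = p.reward - p.regulation_cost

    # Replicator equation for two strategies: d(frac)/dt = frac * (1 - frac) * advantage.
    x_new = clamp(x + dt * x * (1 - x) * gain_trust)
    y_new = clamp(y + dt * y * (1 - y) * gain_comply)
    z_new = clamp(z + dt * z * (1 - z) * gain_regulate)
    return x_new, y_new, z_new


def simulate(p: Payoffs, x: float = 0.5, y: float = 0.5, z: float = 0.5,
             steps: int = 5000, dt: float = 0.01):
    """Iterate the dynamics from a mixed starting state and return the final fractions."""
    for _ in range(steps):
        x, y, z = step(x, y, z, p, dt)
    return x, y, z


if __name__ == "__main__":
    print("reward > regulation cost:", simulate(Payoffs(reward=1.0)))
    print("reward < regulation cost:", simulate(Payoffs(reward=0.2)))
```

Under these assumed payoffs, when the reward exceeds the cost of regulating, all three fractions drift toward 1; when it does not, effective regulation dies out and compliance and trust follow. This toy version omits the conditional-trust (discerning) user strategy and the media or commentariat mechanisms described above.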

Figure 1 from Trust AI Regulation Discerning Users Are Vital To Build

We focus on the policy implications of our main findings (iii) and (iv): high costs in providing effective regulation pose an obstacle to building trust in future AI systems, and it is vital to help users discern which systems have undergone effective regulation.
