Table 6 from A Robust Diffusion Modeling Framework for Radar Camera 3D

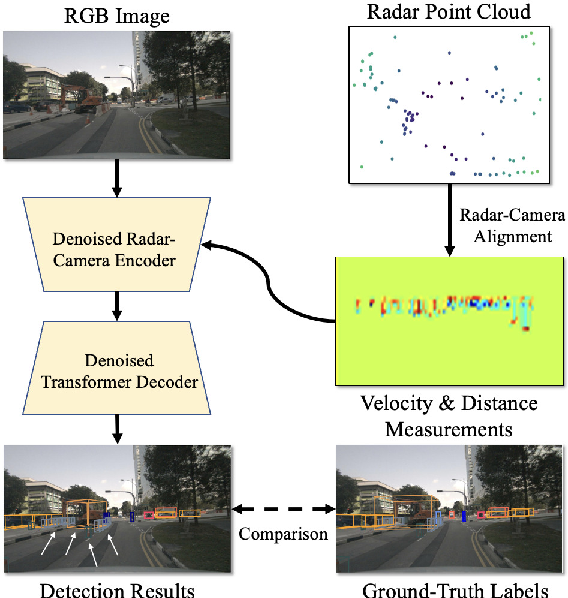

Radarcam Depth Radar Camera Fusion For Depth Estimation With Learned

Radar-camera 3D object detection combines radar signals with camera images to identify objects of interest and localize them in 3D. We develop an end-to-end differentiable framework for the robust learning of radar-camera 3D object detection based on probabilistic denoising diffusion. Our framework needs no LiDAR point clouds for either training or inference.
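The forward (noising) half of a denoising diffusion process, as mentioned above, can be sketched in a few lines. This is a generic illustration under stated assumptions, not the framework's actual implementation: the linear noise schedule, the step count, the function names, and the radar feature values are all hypothetical. It uses the standard closed form $x_t = \sqrt{\bar\alpha_t}\,x_0 + \sqrt{1-\bar\alpha_t}\,\epsilon$ with $\epsilon \sim \mathcal{N}(0, I)$.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Hypothetical linear noise schedule (a common default, not from the source)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_alphas(betas):
    """Return alpha_bar_t = prod_{s<=t} (1 - beta_s) for every step t."""
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out

def forward_diffuse(x0, t, alpha_bars, rng):
    """Sample x_t from q(x_t | x_0) and return it with the noise that was used."""
    a = alpha_bars[t]
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, eps)]
    return x_t, eps

rng = random.Random(0)
alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
radar_feature = [12.5, -3.0, 0.8]  # made-up feature vector for illustration
noisy, eps = forward_diffuse(radar_feature, 500, alpha_bars, rng)
```

A denoising network would then be trained to recover either `eps` or the clean feature from `noisy`; that part depends on the detector and is left out here.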

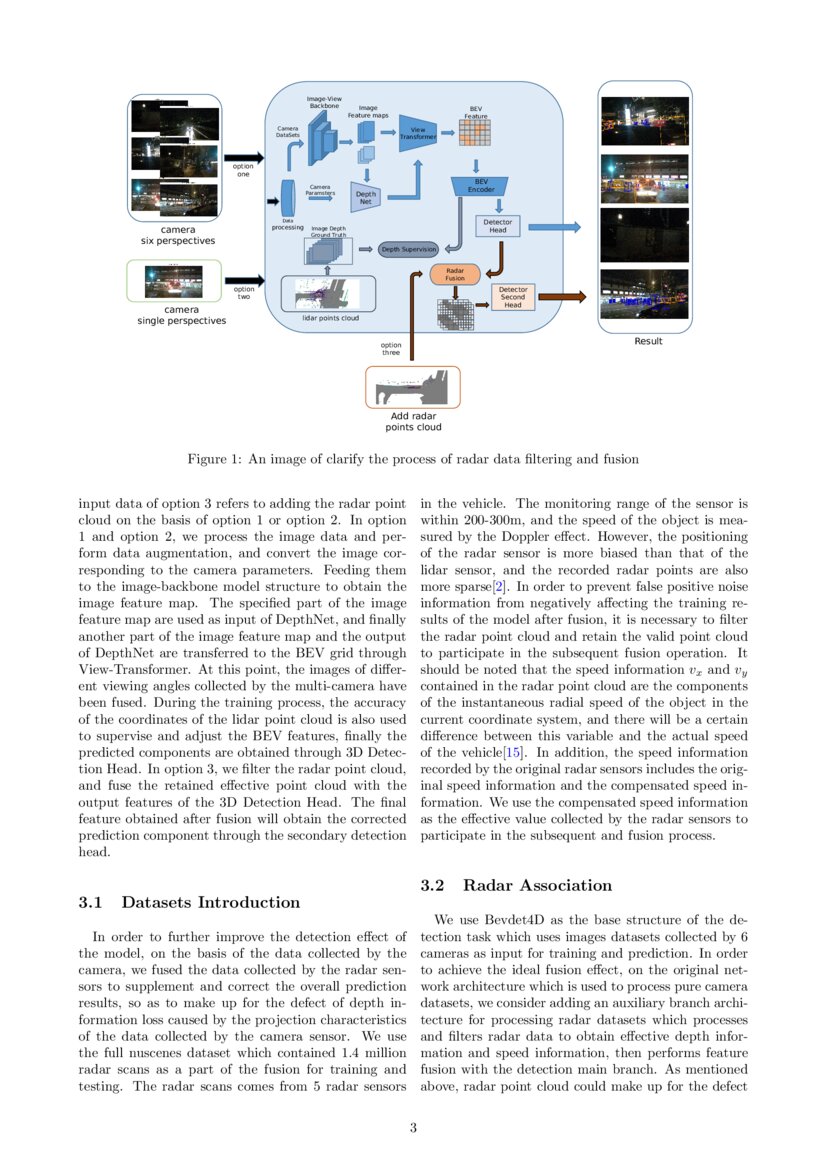

HVDetFusion: A Simple and Robust Camera-Radar Fusion Framework (DeepAI)

This paper proposes Camera Radar Net (CRN), a novel camera-radar fusion framework that generates a semantically rich and spatially accurate bird's-eye-view (BEV) feature map for various tasks. CRN transforms perspective-view image features to BEV with the help of sparse but accurate radar points.

Here we propose a novel proposal-level early fusion approach that effectively exploits both the spatial and contextual properties of camera and radar for 3D object detection.

We design our framework to be easily implementable on different multi-view 3D detectors, without requiring LiDAR point clouds during either training or inference.

This paper proposes a middle-fusion approach that exploits both radar and camera data for 3D object detection and solves the key data association problem using a novel frustum-based method.
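To make the idea of frustum-based association concrete, here is a deliberately simplified sketch. It is not the method from the excerpt above: it assumes a pinhole camera, radar points already expressed in the camera frame (x right, y down, z forward), a 2D detection box, and a depth interval that bounds the frustum. The function names, the intrinsics, and the "pick the nearest point" rule are all illustrative assumptions.

```python
def project_to_image(point_cam, fx, fy, cx, cy):
    """Pinhole projection of a camera-frame point; None if it is behind the camera."""
    x, y, z = point_cam
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)

def associate_in_frustum(points_cam, box, depth_range, intrinsics):
    """Return the index of the nearest radar point inside the box frustum, or None."""
    u_min, v_min, u_max, v_max = box
    d_min, d_max = depth_range
    best = None
    for idx, point in enumerate(points_cam):
        uv = project_to_image(point, *intrinsics)
        if uv is None:
            continue
        u, v = uv
        inside_box = u_min <= u <= u_max and v_min <= v <= v_max
        inside_depth = d_min <= point[2] <= d_max
        if inside_box and inside_depth:
            # Assumed tie-break: keep the point closest to the camera.
            if best is None or point[2] < points_cam[best][2]:
                best = idx
    return best

# Made-up values for illustration only.
intrinsics = (1000.0, 1000.0, 800.0, 450.0)  # fx, fy, cx, cy
radar_points = [(0.5, 0.0, 20.0), (5.0, 0.0, 20.0), (0.4, 0.1, 35.0), (0.0, 0.0, -5.0)]
box = (780.0, 400.0, 860.0, 500.0)           # u_min, v_min, u_max, v_max

match_near = associate_in_frustum(radar_points, box, (15.0, 40.0), intrinsics)
match_far = associate_in_frustum(radar_points, box, (30.0, 40.0), intrinsics)
```

Narrowing the depth interval changes which radar point is matched, which is the reason a frustum (box plus depth range) filters clutter better than a 2D box test alone.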

Figure 1 from A Robust Diffusion Modeling Framework for Radar Camera 3D

The article "A Robust Diffusion Modeling Framework for Radar Camera 3D Object Detection" is indexed in J-GLOBAL, an information service managed by the Japan Science and Technology Agency (JST). Radar-camera fusion in autonomous driving is also covered by the awesome radar camera fusion repository on GitHub.

Analysis of the DDM with semantic embedding: if the input to the DDM is changed from the original radar features to noised radar features, performance drops somewhat. This reflects the inherent ambiguity of the radar sensor.

Analysis of radar features: using the radar's range and velocity information benefits 3D detection, but additionally including radar cross-section (RCS) information does not improve performance.
