Table 1 From Adaptivity And Modularity For Efficient Generalization

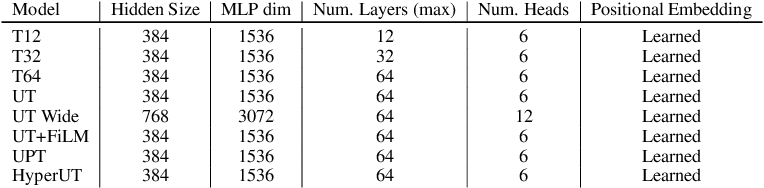

Table 1: Architectural details of transformer variants trained on C-PVR (modulus) from scratch. Source: "Adaptivity and Modularity for Efficient Generalization Over Task Complexity". The authors introduce a new task tailored to assess generalization over different complexities and present results indicating that standard transformers face challenges in solving these tasks.

Adaptivity and Modularity for Efficient Generalization Over Task Complexity, by Samira Abnar, Omid Saremi, Laurent Dinh, Shantel Wilson, Miguel Angel Bautista, Chen Huang, Vimal Thilak, Etai Littwin, Jiatao Gu, Josh Susskind, and Samy Bengio. The authors explore the interplay between an adaptive depth mechanism and modularity, and how the two can synergize for efficient generalization in the context of example complexity.

A separate work on spiking neural networks (SNNs) lists among its main contributions novel SNN designs that explicitly incorporate modularity and community structures to enhance training efficiency and generalization. That work informs the design of more biologically plausible, energy-efficient, and high-performance SNN architectures.
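To make the idea of an adaptive depth mechanism concrete, here is a minimal sketch in plain Python. It is not the architecture from the paper: the function `adaptive_depth` and its parameters `step`, `halt_score`, `threshold`, and `max_steps` are hypothetical names chosen for illustration, and the hand-written halting rule stands in for what would be a learned halting signal in a real model. The sketch only shows the general pattern of reusing one shared step and letting each example stop after a different number of applications.

```python
from typing import Callable, List, Tuple


def adaptive_depth(
    states: List[float],
    step: Callable[[float], float],
    halt_score: Callable[[float], float],
    threshold: float = 0.5,
    max_steps: int = 8,
) -> Tuple[List[float], List[int]]:
    """Apply one shared `step` repeatedly, halting each example on its own.

    All names here are illustrative assumptions, not the paper's API.
    """
    outputs: List[float] = []
    depths: List[int] = []
    for state in states:
        used = 0
        # Keep applying the same (weight-shared) step until the halting
        # score reaches the threshold or the step budget runs out.
        while used < max_steps and halt_score(state) < threshold:
            state = step(state)
            used += 1
        outputs.append(state)
        depths.append(used)
    return outputs, depths


# Toy usage: "harder" inputs (larger numbers) need more halving steps
# before the hand-written halting rule (value below 1.0) fires.
outs, depths = adaptive_depth(
    [0.5, 3.0, 20.0],
    step=lambda x: x / 2,
    halt_score=lambda x: 1.0 if x < 1.0 else 0.0,
)
print(depths)  # number of steps each example used
```

In this toy, the per-example step count plays the role of depth: easy inputs exit early and harder ones consume more of the budget, which is the intuition behind compute that scales with example complexity.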

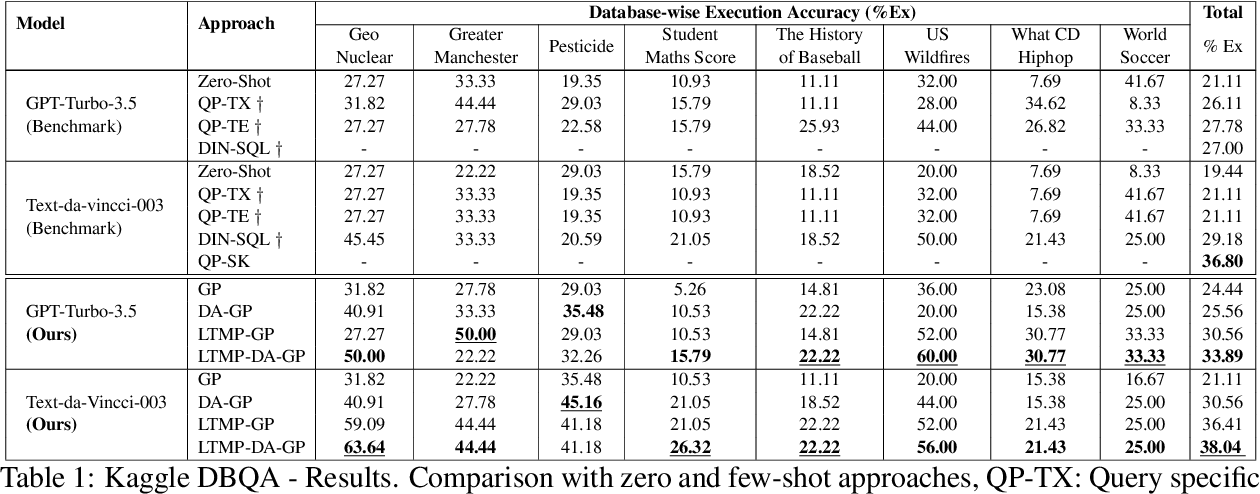

Table 1 From Adapt And Decompose Efficient Generalization Of Text To

Hyper-UT shows improved accuracy and computational efficiency when generalizing to more reasoning steps. The benefits extend to image classification, where Hyper-UT matches ViT accuracy on ImageNet with 70% less compute.

To systematize the relationship between WM constructs and AI affordances, Table 1 presents a conceptual framework mapping the three WM constructs (capacity, utilization, and training plasticity) to four key AI affordances (adaptivity, multimodality, generative support, and feedback timing).

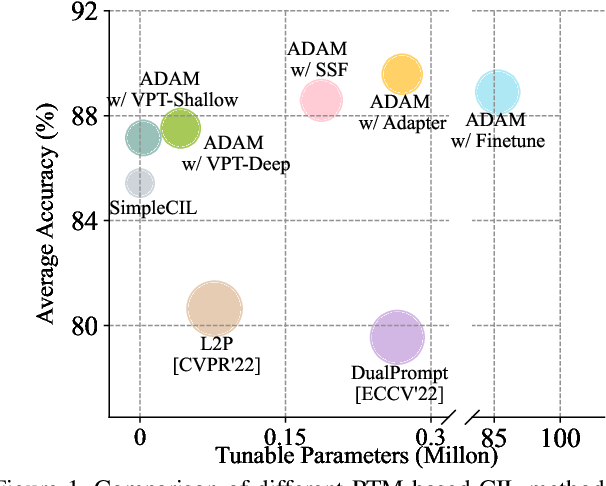

Revisiting Class Incremental Learning With Pre Trained Models
