Table 1 From A Vector Quantized Masked Autoencoder For Audiovisual

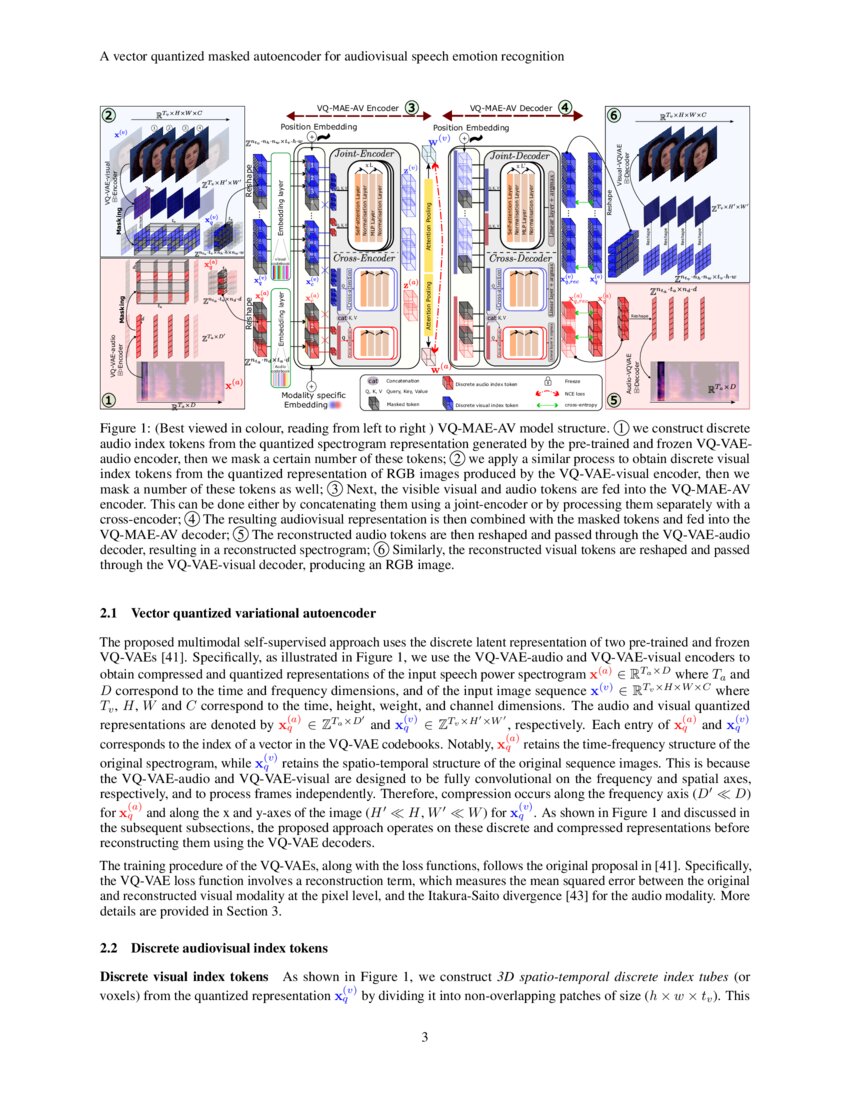

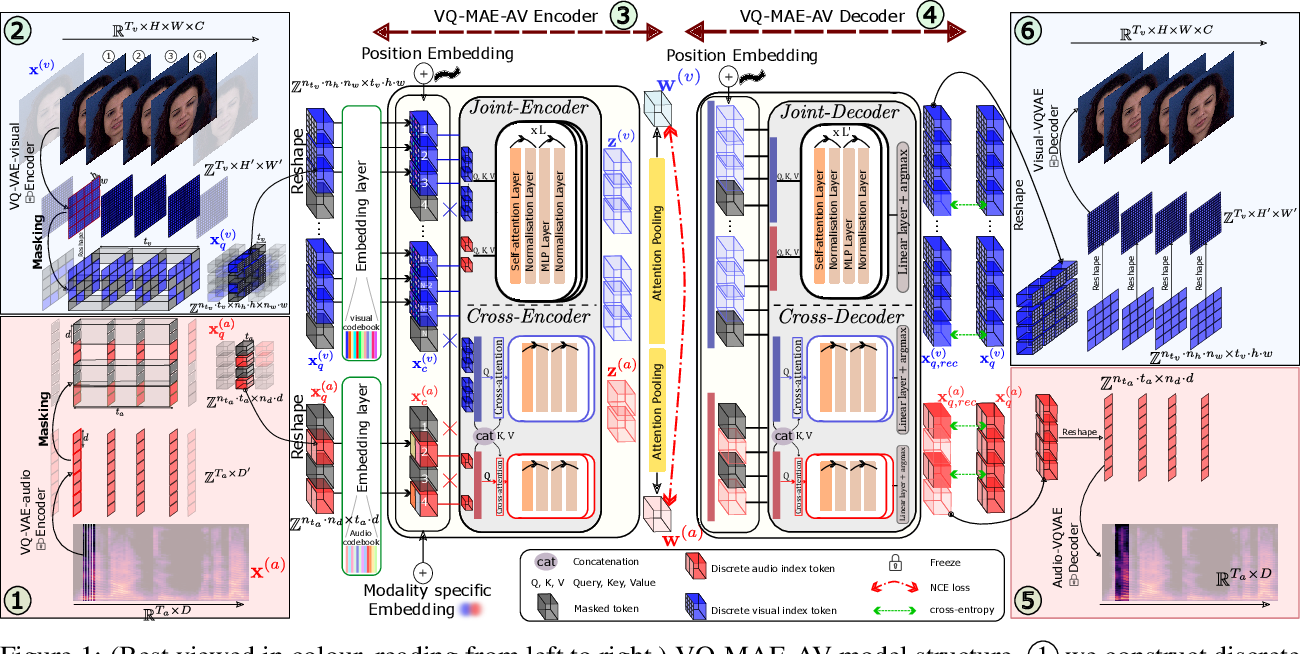

A Vector Quantized Masked Autoencoder For Audiovisual Speech Emotion

The paper proposes VQ-MAE-AV, a vector quantized (VQ) masked autoencoder (MAE) designed for audiovisual (AV) speech representation learning and applied to emotion recognition. It is a self-supervised multimodal model that uses masked autoencoding to learn representations of audiovisual speech without labels.

The authors report experimental results in which VQ-MAE-AV, pre-trained in a self-supervised manner and then applied to speech emotion recognition (SER), outperforms state-of-the-art audiovisual SER methods.

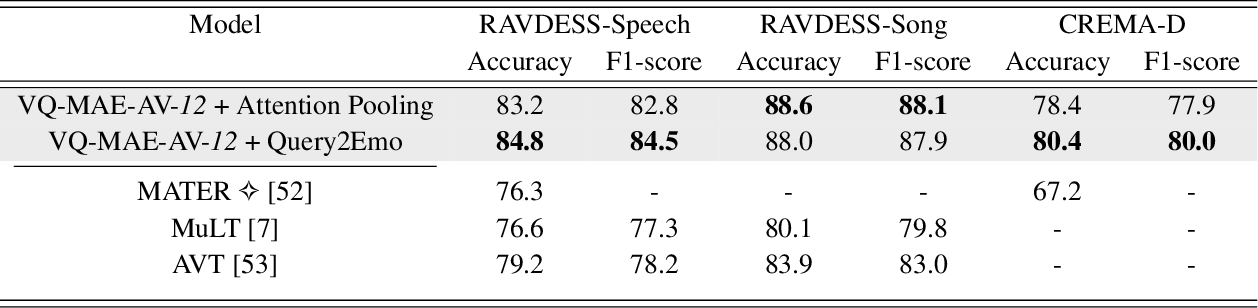

Table 1 compares the emotion recognition performance (accuracy and F1 score) of the proposed VQ-MAE-AV model, using the cross-attention fusion strategy for both the encoder and decoder, with that of several state-of-the-art audiovisual methods. During self-supervised pre-training, VQ-MAE-AV is trained on a large-scale unlabeled dataset of audiovisual speech to reconstruct randomly masked audiovisual speech tokens, combined with a contrastive learning strategy.
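The masking step of the pre-training task can be illustrated with a small sketch. It is not the authors' implementation: the sentinel `MASK_ID`, the helper `random_mask`, the codebook size of 256, the sequence lengths, and the 50% mask ratio are all assumptions chosen for illustration, and the contrastive objective is left out. The sketch only shows how discrete audio and visual token sequences might be randomly masked so that a model can be trained to predict the hidden tokens.

```python
import random

MASK_ID = -1  # hypothetical sentinel standing in for a masked token


def random_mask(tokens, mask_ratio, rng):
    """Hide a random subset of tokens.

    Returns the masked sequence and the sorted list of masked positions,
    which are the positions a reconstruction loss would be computed on.
    """
    n = len(tokens)
    n_masked = round(mask_ratio * n)
    masked_positions = set(rng.sample(range(n), n_masked))
    masked = [MASK_ID if i in masked_positions else t
              for i, t in enumerate(tokens)]
    return masked, sorted(masked_positions)


rng = random.Random(0)

# Assumed toy inputs: codebook indices in [0, 256) for each modality.
audio_tokens = [rng.randrange(256) for _ in range(20)]
visual_tokens = [rng.randrange(256) for _ in range(12)]

masked_audio, audio_positions = random_mask(audio_tokens, 0.5, rng)
masked_visual, visual_positions = random_mask(visual_tokens, 0.5, rng)

# Reconstruction targets are the original tokens at the masked positions.
audio_targets = [audio_tokens[i] for i in audio_positions]
visual_targets = [visual_tokens[i] for i in visual_positions]
```

In a full model, the visible tokens of both modalities would be encoded and fused, and the decoder would be trained to predict `audio_targets` and `visual_targets`.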

A Vector Quantized Masked Autoencoder For Speech Emotion Recognition
