Swe Delivery GitHub

Swe delivery has 3 repositories available. Follow their code on GitHub.

SWE-bench-Live is a live benchmark for issue resolving, designed to evaluate an AI system's ability to complete real-world software engineering tasks. Thanks to an automated dataset curation pipeline, its maintainers plan to update SWE-bench-Live on a monthly basis, giving the community up-to-date task instances and supporting rigorous, contamination-free evaluation.

Live leaderboard ranking 220 AI models on SWE-bench Pro, SWE-rebench, LiveCodeBench, HumanEval, SWE-bench Verified, flteval, and React Native evals. See which LLM writes the best code (updated March 2026).

SWE-bench Verified is a human-filtered subset of 500 instances. SWE-bench Multilingual features 300 tasks across 9 programming languages.

SWE-bench is a benchmark that tests whether language models can solve real software engineering problems. Each task is a GitHub issue from a popular open-source Python project, paired with the human-written pull request that fixed it. To "resolve" a task, an AI agent must produce a code patch that passes the project's test suite, including the specific tests added by the original fix.

SWE-bench is the most widely cited benchmark for AI coding agents. It measures whether a model can resolve real GitHub issues by generating working patches. This guide covers the full SWE-bench family, the 2026 leaderboard, and the other benchmarks that matter.
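The resolution criterion described above can be sketched in a few lines of Python. This is a minimal illustration, not the official evaluation harness: the function name `is_resolved`, the parameter names `fail_to_pass` and `pass_to_pass`, and the shape of the `results` dictionary are assumptions made for this example. The idea is that a patch counts as resolving a task only if the tests added by the original fix now pass and the tests that already passed still pass.

```python
from typing import Dict, Iterable


def is_resolved(
    fail_to_pass: Iterable[str],
    pass_to_pass: Iterable[str],
    results: Dict[str, bool],
) -> bool:
    """Return True only if every required test passed after applying the patch.

    fail_to_pass: tests added by the original fix (assumed to fail before the patch).
    pass_to_pass: existing tests that must keep passing.
    results: hypothetical mapping of test name -> True if the test passed.
    """
    required = list(fail_to_pass) + list(pass_to_pass)
    # A test that is missing from the results is treated as a failure.
    return all(results.get(name, False) for name in required)


if __name__ == "__main__":
    # Hypothetical test names, for illustration only.
    results = {"test_fix_issue": True, "test_existing_behavior": True}
    print(is_resolved(["test_fix_issue"], ["test_existing_behavior"], results))
```

Treating a missing test as a failure is a deliberately strict choice in this sketch: a patch that breaks test collection should not be counted as a success.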

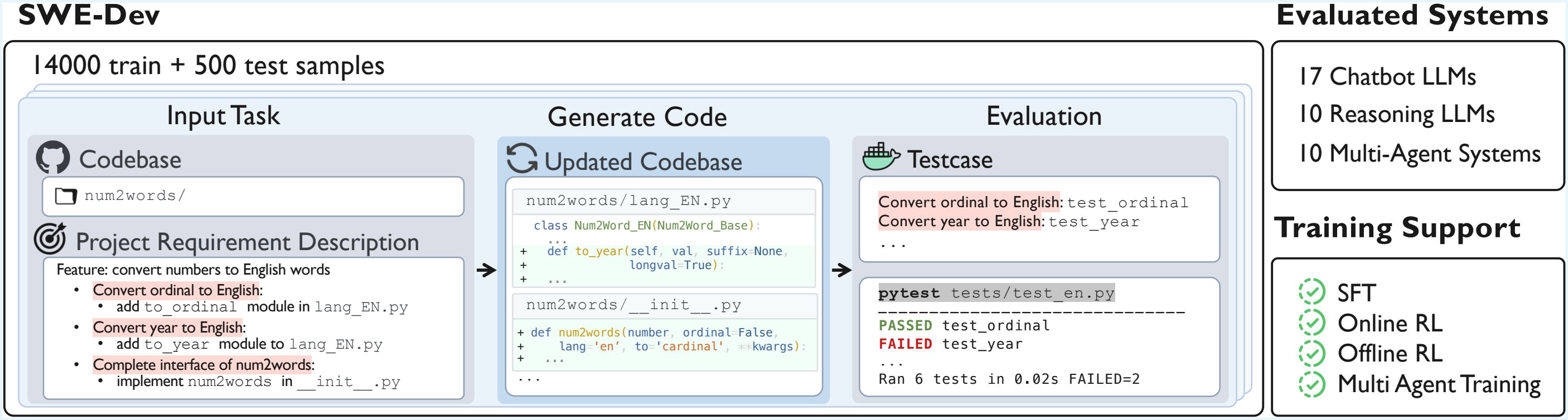

SWE-bench Pro is a substantially more challenging benchmark that builds on the best practices of SWE-bench but is explicitly designed to capture realistic, complex, enterprise-level problems beyond the scope of SWE-bench. It contains 1,865 problems sourced from a diverse set of 41 actively maintained repositories, including business applications and B2B services.

The SWE-* family of projects includes SWE-bench, a benchmark for evaluating AI systems on real-world GitHub issues; SWE-agent, a system that automatically solves GitHub issues using an LM agent; and SWE-smith, a toolkit for generating SWE training data at scale. Supporting infrastructure for working with SWE-* projects is also available.

Beyond Toy Problems: How SWE-bench Measures Real AI. SWE-bench is a benchmark for evaluating large language models on real-world software issues collected from GitHub. Given a codebase and an issue, a language model is tasked with generating a patch that resolves the described problem.
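Leaderboards for benchmarks of this kind typically report the share of task instances a system resolves. The sketch below shows one way that score could be computed; `TaskOutcome`, `resolve_rate`, and the instance identifiers are hypothetical names chosen for this example and are not taken from any SWE-bench tooling.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TaskOutcome:
    instance_id: str  # hypothetical identifier for one issue-resolving task
    resolved: bool    # True if the generated patch passed the required tests


def resolve_rate(outcomes: List[TaskOutcome]) -> float:
    """Return the percentage of task instances that were resolved."""
    if not outcomes:
        raise ValueError("no outcomes to score")
    resolved_count = sum(1 for outcome in outcomes if outcome.resolved)
    return 100.0 * resolved_count / len(outcomes)


if __name__ == "__main__":
    # Made-up outcomes for illustration; these are not real benchmark results.
    outcomes = [
        TaskOutcome("example-1", True),
        TaskOutcome("example-2", False),
        TaskOutcome("example-3", True),
        TaskOutcome("example-4", True),
    ]
    print(f"{resolve_rate(outcomes):.1f}%")  # 3 of 4 resolved -> 75.0%
```

Because the score is a simple proportion, results on subsets of different sizes (for example, 500 instances versus 300 tasks) are not directly comparable without also considering task difficulty.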
