StyleGAN2 Training Process Sample

The next section deals with training your own models. It shows how to prepare a dataset, begin training StyleGAN, and resume from existing checkpoints. This page showcases examples of images generated using the StyleGAN2 PyTorch implementation and provides technical documentation on how to generate your own samples.

A simple PyTorch implementation of StyleGAN2, based on arxiv.org/abs/1912.04958, that can be trained entirely from the command line, no coding needed. Below are some flowers that do not exist.

Implement the primary building blocks of the StyleGAN generator, such as its mapping network and style-based generator, using PyTorch. Practical guidance helps you develop these components.

We trained this on the CelebA-HQ dataset. You can find the download instructions in this discussion on fast.ai. Save the images inside the data/stylegan folder. Training samples z randomly and gets w from the mapping network. We also sometimes apply style mixing, where we generate two latent variables z1 and z2 and get the corresponding w1 and w2.

To train a StyleGAN model from scratch, you need a large dataset of high-quality images. You can follow the training script in the StyleGAN2 PyTorch repository.
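The latent sampling and style mixing step described above can be sketched in plain Python. This is only an illustration under stated assumptions: `toy_mapping_network` is a hypothetical stand-in that merely normalizes z (a trained mapping network is a learned MLP), and `D_LATENT`, `N_LAYERS`, and `mixing_prob` are illustrative values, not settings taken from any of the implementations mentioned here.

```python
import math
import random

D_LATENT = 8    # illustrative latent size (assumption; real models use far more)
N_LAYERS = 14   # illustrative number of generator layers that receive a style


def sample_z(rng):
    """Draw a latent vector z from a standard normal distribution."""
    return [rng.gauss(0.0, 1.0) for _ in range(D_LATENT)]


def toy_mapping_network(z):
    """Hypothetical stand-in for the learned mapping network (z -> w).

    It only rescales z to unit root-mean-square so the example runs
    without a trained model.
    """
    rms = math.sqrt(sum(v * v for v in z) / len(z) + 1e-8)
    return [v / rms for v in z]


def get_styles(rng, mixing_prob=0.9):
    """Return one w per generator layer, applying style mixing sometimes."""
    if rng.random() < mixing_prob:
        # Style mixing: two latents, two styles, one crossover point.
        w1 = toy_mapping_network(sample_z(rng))
        w2 = toy_mapping_network(sample_z(rng))
        cross = rng.randint(1, N_LAYERS - 1)  # both styles are used at least once
        return [w1] * cross + [w2] * (N_LAYERS - cross)
    # No mixing: the same w feeds every layer.
    w = toy_mapping_network(sample_z(rng))
    return [w] * N_LAYERS


rng = random.Random(0)
styles = get_styles(rng)
print(len(styles), "style vectors of length", len(styles[0]))
```

Layers before the crossover point receive w1 and the remaining layers receive w2, so coarse and fine features come from different latents.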

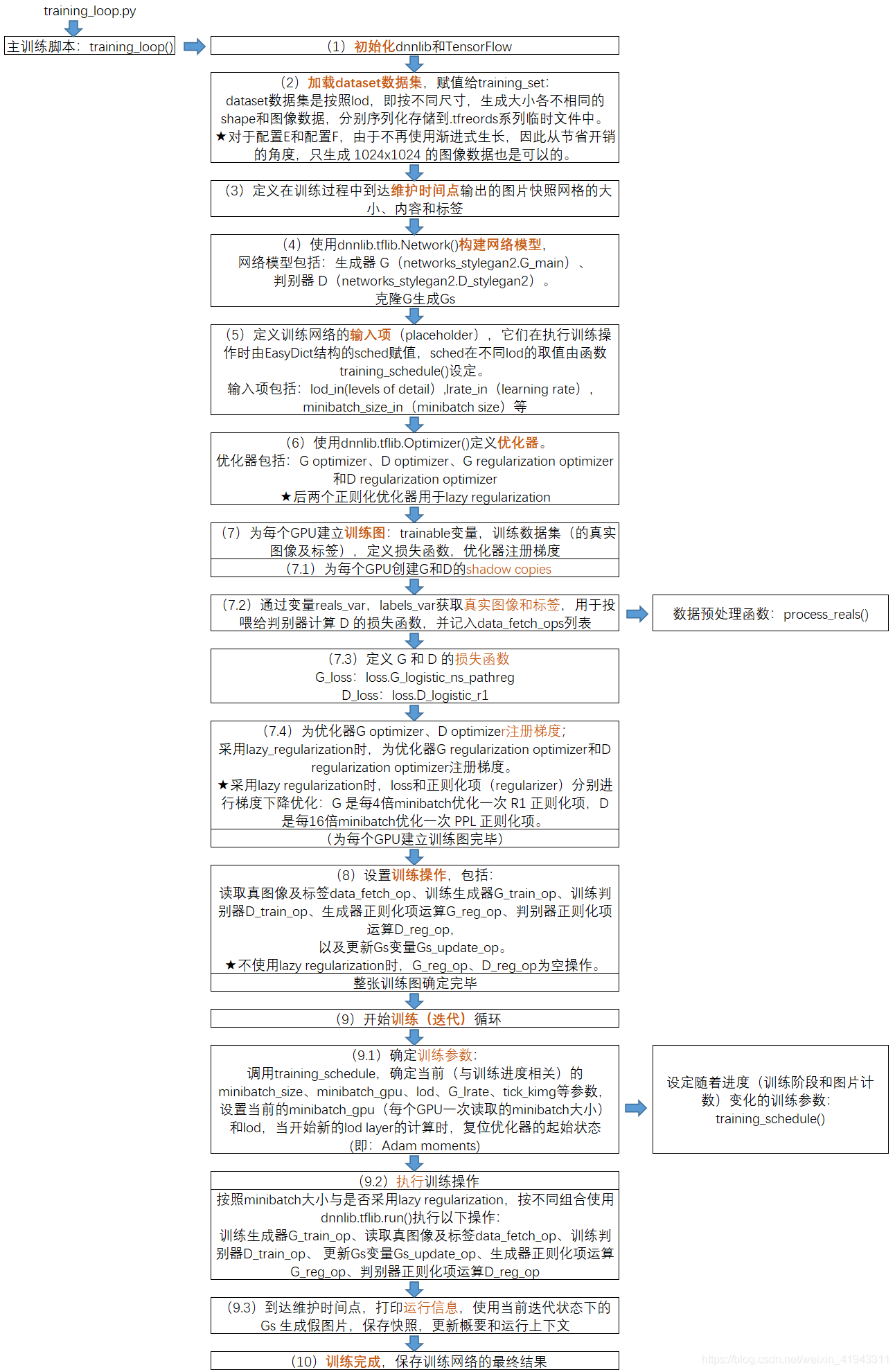

The article provides a guide on how to train StyleGAN2-ADA, a popular generative model by NVIDIA, on a custom dataset. The author explains the concept of StyleGAN and its popularity.

As shown in Figure 1, the training process trains a StyleGAN2 model on images of a specific design style. Based on a random latent vector and noise, the generator can generate fake images.

In this post we implement StyleGAN, and in the third and final post we will implement StyleGAN2. You can find the StyleGAN paper here. Note: if I refer to "the authors", I am referring to Karras et al., the authors of the StyleGAN paper.

Processing 1 kimg requires roughly 1 min of computation time, so the total training time for 25 Mimg (25,000 kimg) should be about 2.5 weeks of computation. NB: to ensure your Colab session stays connected, follow: ...
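The training-time estimate above is plain arithmetic and can be checked with a short script. The function name is made up for illustration; the only inputs are the two figures quoted in the text (1 min per kimg, 25 Mimg in total).

```python
MINUTES_PER_KIMG = 1.0   # throughput quoted in the text
TOTAL_KIMG = 25_000      # 25 Mimg expressed in kimg


def training_time_weeks(total_kimg, minutes_per_kimg):
    """Convert a kimg budget and a per-kimg cost into weeks of compute."""
    total_minutes = total_kimg * minutes_per_kimg
    minutes_per_week = 60 * 24 * 7
    return total_minutes / minutes_per_week


weeks = training_time_weeks(TOTAL_KIMG, MINUTES_PER_KIMG)
print(f"{weeks:.2f} weeks")  # prints "2.48 weeks"
```

The same function gives a quick estimate for other budgets or hardware by changing the two inputs.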
