Stable Diffusion Img2img ControlNet Animation Test

This repository contains example Jupyter notebooks demonstrating the use of diffusion models for image enhancement and style transfer using ControlNet and Stable Diffusion's img2img pipelines.

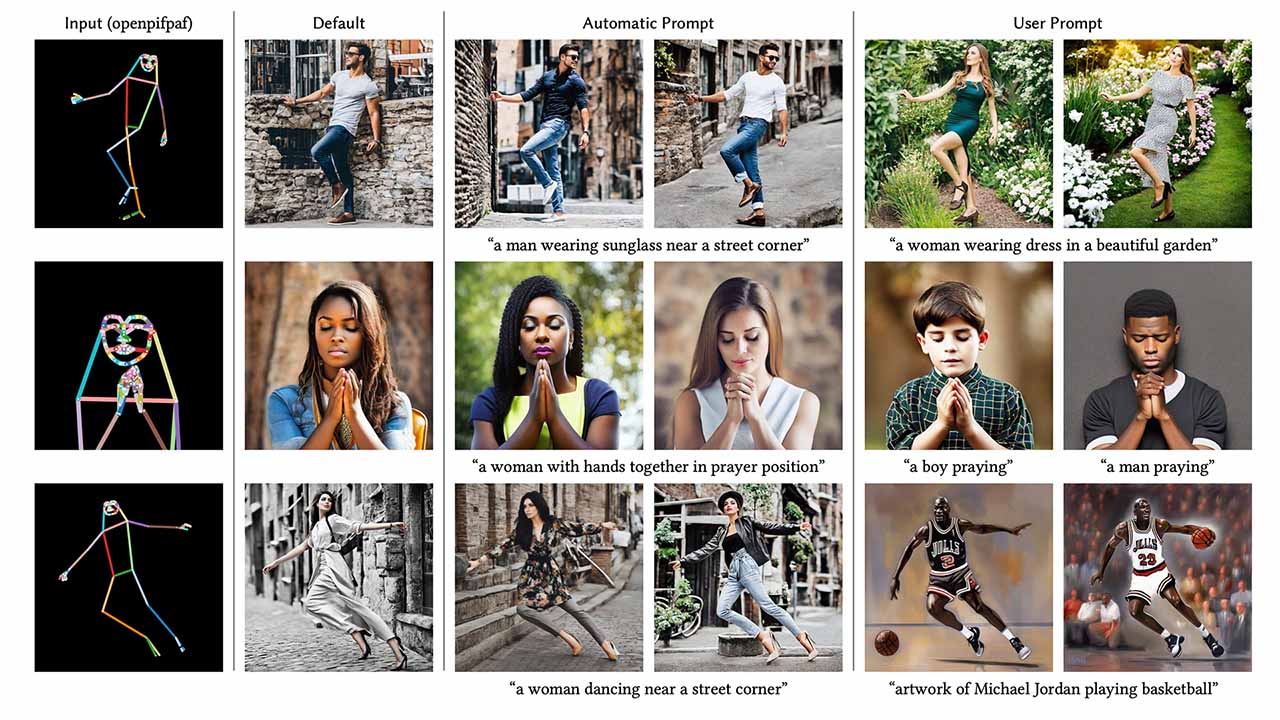

ControlNet is a neural network that controls image generation in Stable Diffusion by adding extra conditions. Details can be found in the article "Adding Conditional Control to Text-to-Image Diffusion Models" by Lvmin Zhang and coworkers. ControlNet is a major milestone towards developing highly configurable AI tools for creators, rather than the "prompt and pray" Stable Diffusion we know today. So how can you begin to control your image generations? Think of ControlNet as img2img with a powerful boost: it gives you finer control over image composition in both txt2img and img2img.
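
To make "adding extra conditions" concrete, here is a toy sketch of the wiring described in the paper cited above: a frozen block, a trainable copy that also sees the condition, and "zero convolutions" that start at zero so the untrained ControlNet leaves the base model's output unchanged. The scalar `tanh` blocks, the `ZeroConv` class, and all numbers are illustrative assumptions, not the real architecture or weights.

```python
import math
from typing import Callable, List

Vector = List[float]


def make_block(weight: float, bias: float) -> Callable[[Vector], Vector]:
    """Toy stand-in for a network block: elementwise tanh(w * x + b)."""
    return lambda x: [math.tanh(weight * v + bias) for v in x]


class ZeroConv:
    """Toy 'zero convolution': elementwise scale and bias, both initialised to zero."""

    def __init__(self) -> None:
        self.weight = 0.0
        self.bias = 0.0

    def __call__(self, x: Vector) -> Vector:
        return [self.weight * v + self.bias for v in x]


def controlled_block(
    x: Vector,
    condition: Vector,
    frozen: Callable[[Vector], Vector],
    trainable_copy: Callable[[Vector], Vector],
    zero_in: ZeroConv,
    zero_out: ZeroConv,
) -> Vector:
    """Compute y = F(x) + Z_out(F_copy(x + Z_in(c)))."""
    injected = [a + b for a, b in zip(x, zero_in(condition))]
    residual = zero_out(trainable_copy(injected))
    return [a + b for a, b in zip(frozen(x), residual)]


# Hypothetical inputs: a feature vector and a condition (e.g. an edge map).
frozen = make_block(0.5, 0.1)
copy_block = make_block(0.5, 0.1)  # starts as a copy of the frozen block
z_in, z_out = ZeroConv(), ZeroConv()
x = [0.2, -1.0, 3.0]
edges = [1.0, 0.0, 1.0]

baseline = frozen(x)
untrained = controlled_block(x, edges, frozen, copy_block, z_in, z_out)

# Pretend training has moved the zero convolutions away from zero.
z_in.weight, z_out.weight = 0.5, 0.3
steered = controlled_block(x, edges, frozen, copy_block, z_in, z_out)
```

Before training, `untrained` matches `baseline`, which is why the base model's behaviour is preserved at the start. Once the zero convolutions have non-zero weights, the condition begins to shift the output.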

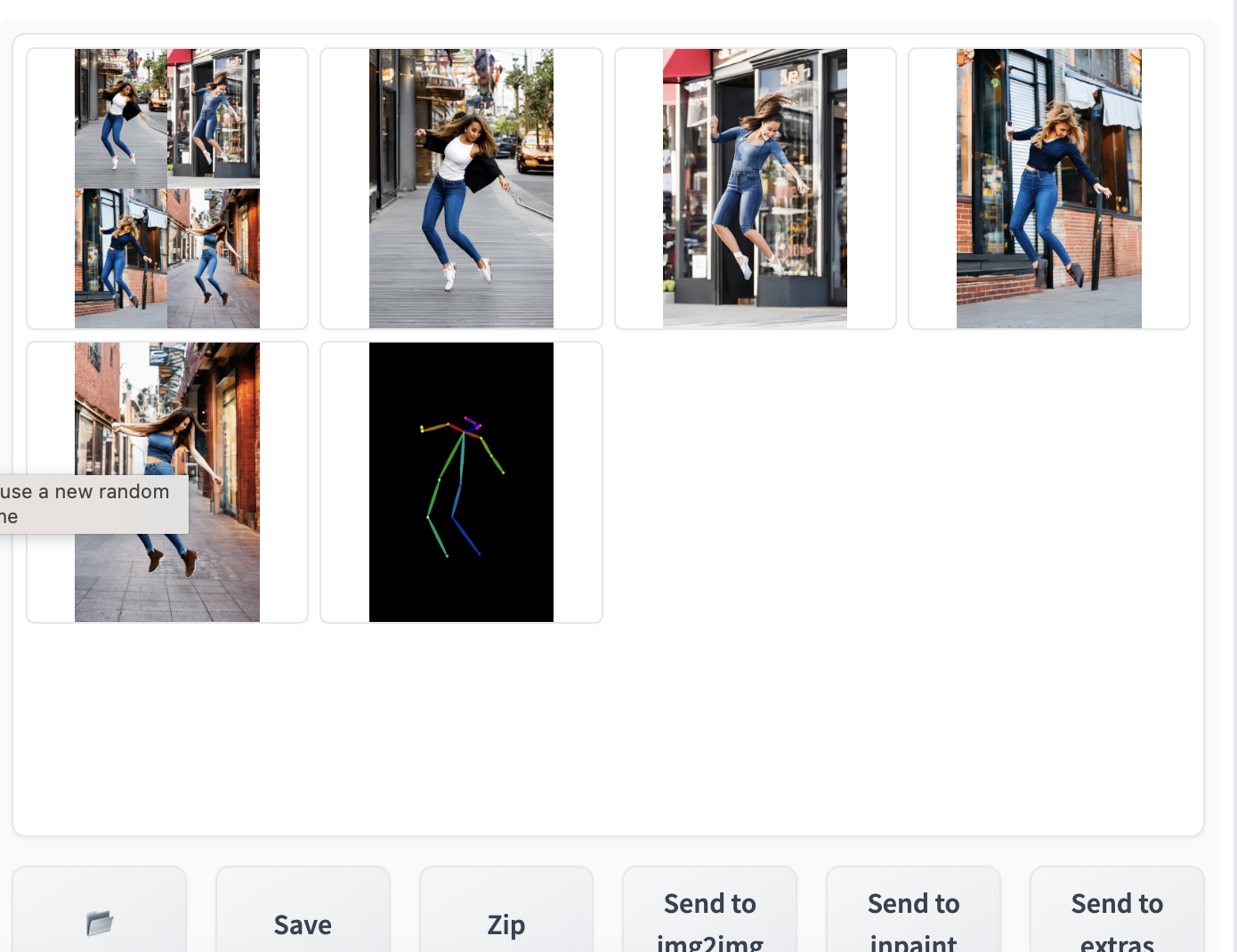

A test fragment for img2img with ControlNet shows how a ControlNet unit is passed in a request. `test_img2img_simple_performed` only asserts that the request returns an OK status. `test_img2img_default_params` first sets `self.simple_img2img["alwayson_scripts"]["controlnet"]["args"]` to a list holding one dictionary with an `"image"` entry (loaded with `utils.readimage` from a basic img2img test file, whose path is garbled in the source) and a `"model"` entry (from `utils.get_model()`), then asserts the OK status. The fragment ends partway through a `run(conf: dict) -> None` helper that starts building `init_images = [encode…`; the rest is cut off in the source.

Related guides cover using OpenPose with ControlNet in Stable Diffusion to turn detected poses into images, a step-by-step img2img workflow in the Stable Diffusion web UI for generating animation from video, and techniques for smooth, flicker-free animation with Stable Diffusion, EbSynth, and ControlNet: frame extraction, keyframe selection, image processing, and compositing.
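
The test fragment above nests a ControlNet unit under `alwayson_scripts` → `controlnet` → `args`. A minimal sketch of building such a request body might look like the following. The `"image"` and `"model"` keys and `init_images` come from the fragment. The helper names, the `prompt` and `denoising_strength` fields, and the model name are assumptions for illustration. No request is sent.

```python
import base64
import json
from typing import Any, Dict


def encode_image(raw_bytes: bytes) -> str:
    """Base64-encode raw image bytes so they can travel inside a JSON body."""
    return base64.b64encode(raw_bytes).decode("ascii")


def build_img2img_payload(
    init_image: bytes,
    control_image: bytes,
    prompt: str,
    controlnet_model: str,
    denoising_strength: float = 0.5,
) -> Dict[str, Any]:
    """Assemble an img2img request body with a single ControlNet unit."""
    return {
        "prompt": prompt,  # assumed field name
        "init_images": [encode_image(init_image)],
        "denoising_strength": denoising_strength,  # assumed field name
        "alwayson_scripts": {
            "controlnet": {
                "args": [
                    {
                        "image": encode_image(control_image),
                        "model": controlnet_model,
                    }
                ]
            }
        },
    }


# Placeholder bytes stand in for real image files; the model name is hypothetical.
payload = build_img2img_payload(
    init_image=b"fake-frame-bytes",
    control_image=b"fake-control-bytes",
    prompt="a watercolor portrait",
    controlnet_model="example_controlnet_model",
)
body = json.dumps(payload)  # what would be sent as the request body
```

Keeping payload construction in a pure function makes it easy to check the structure before wiring it to an actual server.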

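
For the keyframe selection step mentioned above, one simple strategy is to take every Nth extracted frame and always include the last one, so that in-between frames have a keyframe on both sides. This is a sketch under that assumption, not the method of any particular guide. The function names and the interval are hypothetical.

```python
from typing import List


def select_keyframes(frame_count: int, interval: int) -> List[int]:
    """Return indices of every `interval`-th frame, always including the last frame."""
    if interval <= 0:
        raise ValueError("interval must be positive")
    if frame_count <= 0:
        return []
    keyframes = list(range(0, frame_count, interval))
    if keyframes[-1] != frame_count - 1:
        keyframes.append(frame_count - 1)
    return keyframes


def nearest_keyframe(frame_index: int, keyframes: List[int]) -> int:
    """Pick the keyframe closest to a frame (the earlier one wins a tie)."""
    return min(keyframes, key=lambda k: abs(k - frame_index))


# Example: a 10-frame clip with a keyframe every 4 frames.
keys = select_keyframes(10, 4)
source_for_frame_5 = nearest_keyframe(5, keys)
```

Only the selected frames would then go through img2img with ControlNet. The remaining frames can be filled in by a propagation tool such as EbSynth, which is where most of the flicker reduction comes from.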
