Stable Diffusion Captioning & Viral Clips: BLIP-2, Recognize Anything, Vizard

Using the BLIP-2 Model for Image Captioning

Stable Diffusion Captioning & Viral Clips: BLIP-2, Recognize Anything, Vizard. From Stable Diffusion tagging to automated short-form publishing, this video shows my ...

Key takeaway: This guide turns a hands-on captioning and training workflow into a repeatable pipeline that ends with automated distribution.

Claim: The approach is grounded in practical tests with RAM++, Tag2Text, BLIP-2, Cosmos 2, and an integrated clipping and scheduling flow.

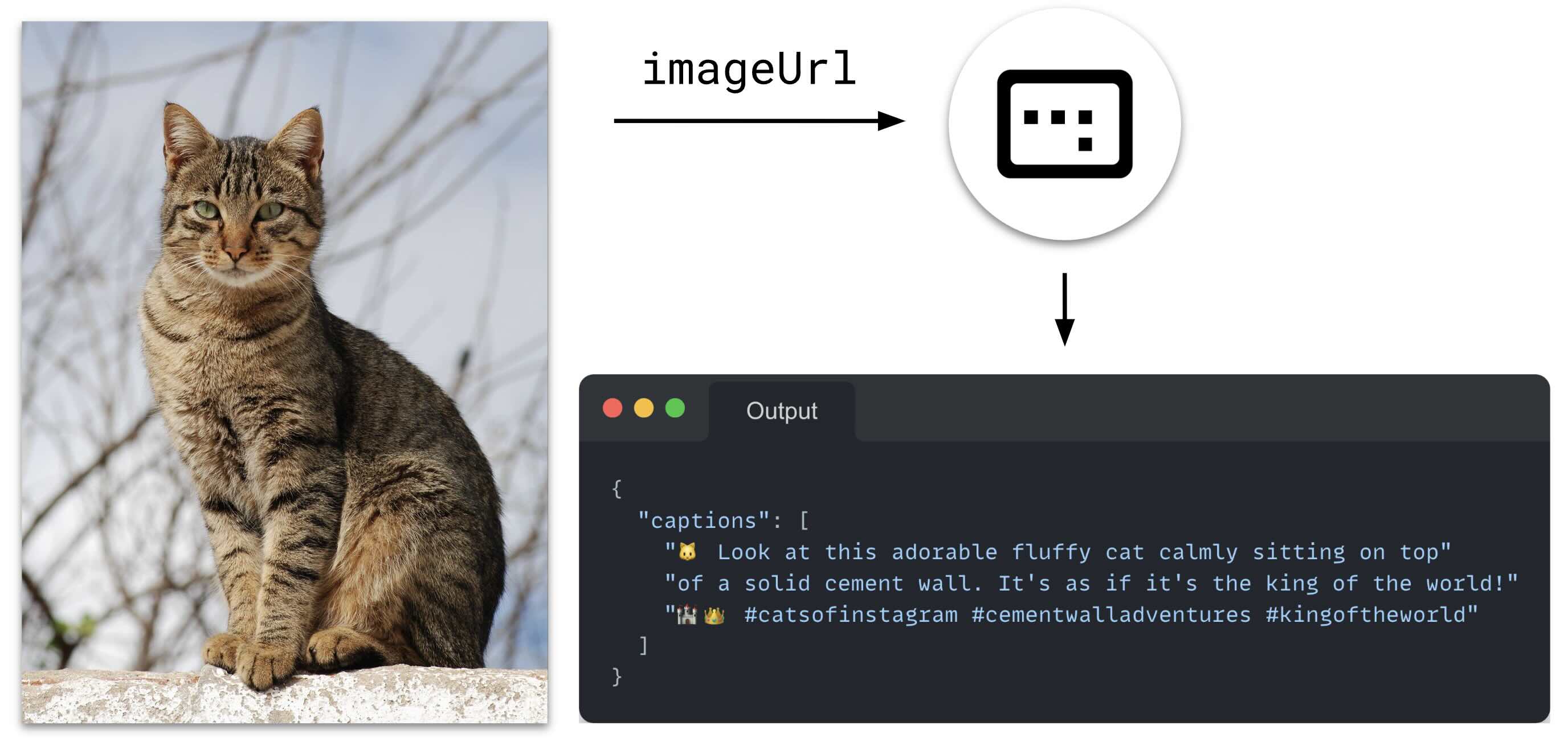

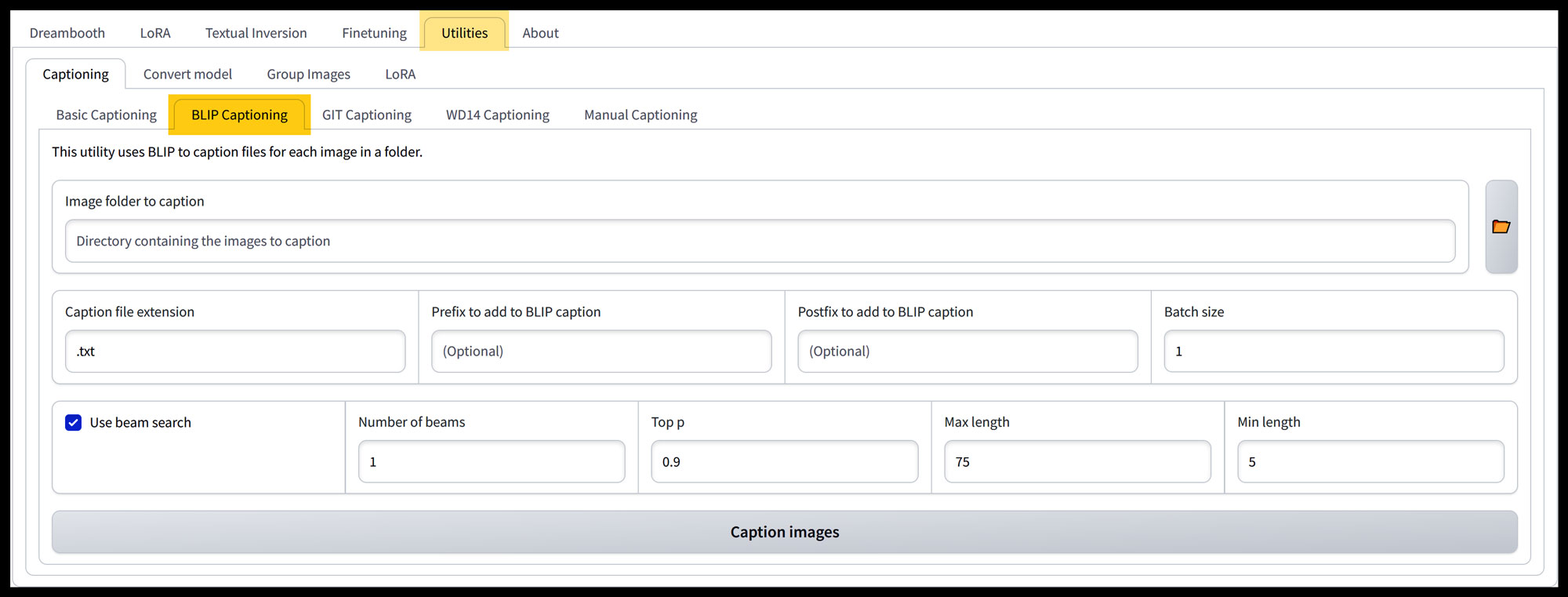

GitHub: p1atdev/stable-diffusion-webui-blip2-captioner (BLIP-2)

From tweak-and-regenerate chaos to a clean, reproducible pipeline: in this video I revisit Stable Diffusion fine-tuning, scale up image captioning with modern vision-language models, and then ...

The captioner's generation settings include a value that may degrade caption accuracy if set very large, the minimum length of the caption to be generated, and the cumulative probability for nucleus sampling.

#machinelearning #imagecaptioning #ai Today I'm taking a look at some multimodal large language models that can be used for automated image captioning. Rich captions can be used for training.

Let's find out if BLIP-2 can caption a New Yorker cartoon in a zero-shot manner. To caption an image, we do not have to provide any text prompt to the model, only the preprocessed input image.
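The zero-shot step described above, where only the preprocessed image is passed and no text prompt, can be sketched with the Hugging Face `transformers` library. This is a minimal sketch under stated assumptions, not the extension's own code: the checkpoint name `Salesforce/blip2-opt-2.7b`, the helper names `build_generation_kwargs` and `caption_image`, and all default values are chosen here for illustration. The two settings mentioned in the text map onto the standard `generate` arguments `min_length` and `top_p`.

```python
from typing import Any, Dict


def build_generation_kwargs(min_length: int = 5,
                            max_new_tokens: int = 40,
                            top_p: float = 0.9,
                            use_nucleus_sampling: bool = False) -> Dict[str, Any]:
    """Collect keyword arguments for `model.generate` (illustrative defaults)."""
    if min_length < 0 or max_new_tokens <= 0:
        raise ValueError("lengths must be non-negative / positive")
    if not 0.0 < top_p <= 1.0:
        raise ValueError("top_p is a cumulative probability in (0, 1]")

    kwargs: Dict[str, Any] = {
        "min_length": min_length,          # minimum length of the caption
        "max_new_tokens": max_new_tokens,  # upper bound on generated tokens
    }
    if use_nucleus_sampling:
        # Nucleus sampling: sample from the smallest token set whose
        # cumulative probability reaches top_p.
        kwargs.update(do_sample=True, top_p=top_p)
    else:
        # Deterministic beam search; the beam count is an assumed default.
        kwargs.update(do_sample=False, num_beams=3)
    return kwargs


def caption_image(image_path: str,
                  model_name: str = "Salesforce/blip2-opt-2.7b",
                  **generation_overrides: Any) -> str:
    """Caption one image with BLIP-2 without supplying a text prompt."""
    # Heavy imports are deferred so the helper above works without them.
    from PIL import Image
    from transformers import Blip2ForConditionalGeneration, Blip2Processor

    processor = Blip2Processor.from_pretrained(model_name)
    model = Blip2ForConditionalGeneration.from_pretrained(model_name)

    image = Image.open(image_path).convert("RGB")
    # Only the preprocessed image is passed; no text prompt is provided.
    inputs = processor(images=image, return_tensors="pt")
    generated_ids = model.generate(
        **inputs, **build_generation_kwargs(**generation_overrides)
    )
    return processor.batch_decode(generated_ids, skip_special_tokens=True)[0].strip()
```

Calling `caption_image("cartoon.png", use_nucleus_sampling=True, top_p=0.8)` would download the checkpoint on first use; the file name here is hypothetical. For captioning many images, the processor and model would normally be loaded once and reused rather than reloaded per call.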

BLIP Captioning: A Guide for Creating Captions and Datasets for Stable ...

Turn long videos into high-performing shorts and reels with Vizard. In this video, I show my end-to-end workflow: auto-finding viral moments, transcript-first trimming, fast captioning, AI ...

By bypassing complex, specialized video input designs, our experiments demonstrate that BLIP-2, straightforwardly derived from image captioning, can be effectively optimized to deliver competitive video captioning performance.

Join Jonathan Dinu and Pearson for an in-depth discussion in this video, Generating automatic captions with BLIP-2, part of Programming Generative AI: From Variational Autoencoders to ...

Enhance visual understanding through automated image descriptions. Generate high-quality images from text descriptions. Support multilingual captioning and speech synthesis for accessibility.
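For turning generated captions into a training dataset, one common layout stores each caption in a `.txt` file beside its image. The source does not specify a layout, so this is an assumption, as are the helper name `write_caption_sidecars` and the list of image suffixes. The `captioner` argument is any function that maps an image path to a caption string, so the sketch does not depend on a particular model.

```python
from pathlib import Path
from typing import Callable, List, Union

# Assumed set of image extensions; adjust to the dataset at hand.
IMAGE_SUFFIXES = {".png", ".jpg", ".jpeg", ".webp"}


def write_caption_sidecars(image_dir: Union[str, Path],
                           captioner: Callable[[Path], str],
                           overwrite: bool = False) -> List[Path]:
    """Write `<image stem>.txt` next to every image in `image_dir`.

    Returns the caption files written during this call.
    """
    written: List[Path] = []
    # sorted() materialises the listing before any new files are created.
    for image_path in sorted(Path(image_dir).iterdir()):
        if image_path.suffix.lower() not in IMAGE_SUFFIXES:
            continue
        caption_path = image_path.with_suffix(".txt")
        if caption_path.exists() and not overwrite:
            # Keep hand-edited captions unless explicitly told to replace them.
            continue
        # Collapse whitespace so each caption is a single clean line.
        caption = " ".join(captioner(image_path).split())
        caption_path.write_text(caption + "\n", encoding="utf-8")
        written.append(caption_path)
    return written
```

Skipping existing caption files by default makes the step safe to rerun after manual corrections, which suits a pipeline meant to be repeatable.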

