Speech Recognition Using Transformers In Python The Python Code

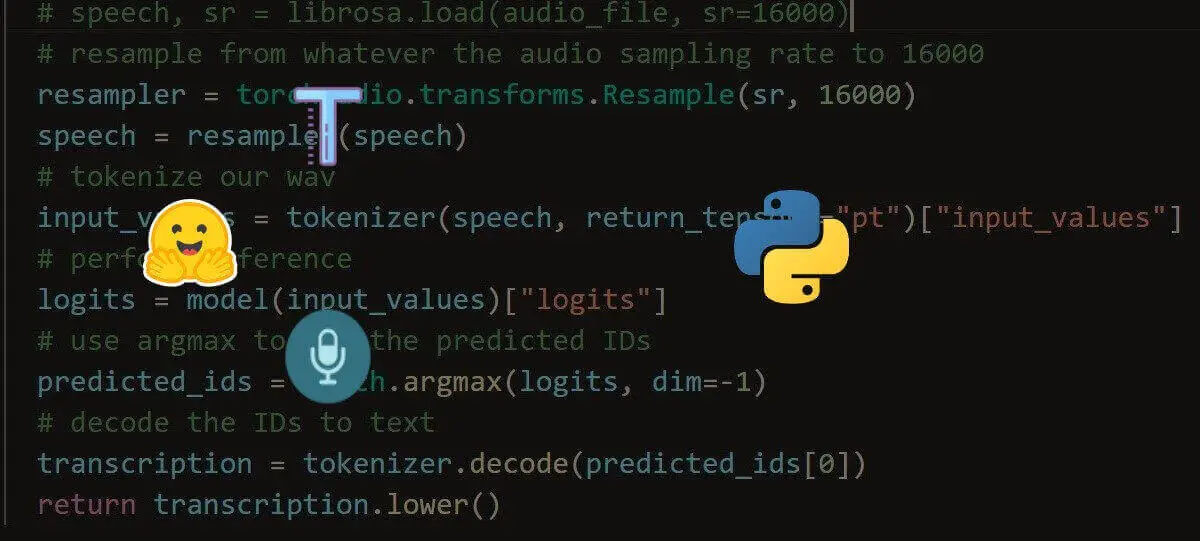

Learn how to perform speech recognition using the Wav2Vec2 and Whisper transformer models with the help of the Hugging Face Transformers library in Python.

Args: inputs (`np.ndarray` or `bytes` or `str` or `dict`): the input can be a `str`, which is either the filename of a local audio file or a public URL from which to download the audio file. The file will be read at the correct sampling rate to get the waveform using *ffmpeg*.
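Besides a filename or URL, the `inputs` types listed above include `np.ndarray` and `dict`. The sketch below shows one way to prepare in-memory audio for the `dict` form, which carries `"raw"` samples plus their `"sampling_rate"`. It assumes you already hold decoded samples as a NumPy array, such as int16 PCM or a `(frames, channels)` recording. The helper names `to_mono_float32`, `make_pipeline_input`, and `transcribe` are hypothetical, and so is the choice of the `openai/whisper-small` checkpoint. `transcribe` imports Transformers lazily and is only defined here, never called, so the snippet runs without downloading a model.

```python
import numpy as np


def to_mono_float32(samples: np.ndarray) -> np.ndarray:
    """Return 1-D float32 audio: scale int16 PCM to [-1, 1] and downmix channels."""
    audio = np.asarray(samples)
    if audio.dtype == np.int16:
        # 16-bit PCM: map the integer range onto [-1.0, 1.0)
        audio = audio.astype(np.float32) / 32768.0
    else:
        audio = audio.astype(np.float32)
    if audio.ndim == 2:
        # Average channels; assumes shape (frames, channels)
        audio = audio.mean(axis=1)
    return audio


def make_pipeline_input(samples: np.ndarray, sampling_rate: int) -> dict:
    """Build the {"raw", "sampling_rate"} dict form accepted by the ASR pipeline."""
    return {"raw": to_mono_float32(samples), "sampling_rate": int(sampling_rate)}


def transcribe(inputs, model_name: str = "openai/whisper-small") -> str:
    """Run the Transformers ASR pipeline on a filename, URL, array, or dict.

    Transformers is imported here so the module loads without it;
    the first call downloads the checkpoint.
    """
    from transformers import pipeline

    asr = pipeline("automatic-speech-recognition", model=model_name)
    return asr(inputs)["text"]
```

Usage would look like `transcribe("speech.wav")` or `transcribe(make_pipeline_input(recording, 48000))`. The dict form exists so that audio recorded at an arbitrary rate can be handed to the pipeline, which resamples it to the model's rate. A bare `np.ndarray`, by contrast, is taken to be at the correct rate already.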

Automatic speech recognition (ASR), also known as speech-to-text or voice recognition, is the process of converting spoken language into text. It involves analyzing audio signals that contain human speech and transcribing the spoken words into written text. ASR can be treated as a sequence-to-sequence problem, where the audio is represented as a sequence of feature vectors and the text as a sequence of characters, words, or subword tokens.

This example demonstrates how to record audio live from your microphone using Python and transcribe it on the fly using OpenAI Whisper. It uses the sounddevice library to capture audio and runs the transcription in memory, so no audio file is saved. In this Python tutorial, I'm going to show you how to do speech-to-text in just three lines of code using the Hugging Face Transformers pipeline with OpenAI Whisper.
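To make the sequence-to-sequence view concrete, here is a minimal NumPy sketch of the "sequence of feature vectors" side. It slices a waveform into overlapping frames and turns each frame into a log-magnitude spectrum. The 400-sample window and 160-sample hop (25 ms and 10 ms at 16 kHz) are common conventions assumed here, not values taken from this article. Whisper actually feeds log-Mel features to its encoder, and Wav2Vec2 learns its features from the raw waveform with a convolutional encoder. Read this as an illustration of the idea, not as either model's front end.

```python
import numpy as np


def frame_signal(waveform: np.ndarray, frame_length: int = 400, hop_length: int = 160) -> np.ndarray:
    """Slice a 1-D waveform into overlapping frames of frame_length samples."""
    if len(waveform) < frame_length:
        # Zero-pad short clips so they still yield one frame
        waveform = np.pad(waveform, (0, frame_length - len(waveform)))
    num_frames = 1 + (len(waveform) - frame_length) // hop_length
    starts = np.arange(num_frames) * hop_length
    # Row i holds the sample indices belonging to frame i
    idx = starts[:, None] + np.arange(frame_length)[None, :]
    return waveform[idx]


def log_spectrogram(waveform: np.ndarray, frame_length: int = 400, hop_length: int = 160) -> np.ndarray:
    """Return a (num_frames, frame_length // 2 + 1) sequence of log-magnitude feature vectors."""
    frames = frame_signal(waveform, frame_length, hop_length)
    window = np.hanning(frame_length)  # taper frame edges to reduce spectral leakage
    magnitudes = np.abs(np.fft.rfft(frames * window, axis=1))
    # Log compression; the epsilon avoids log(0) on silent frames
    return np.log(magnitudes + 1e-10)
```

With these settings, one second of 16 kHz audio becomes 98 feature vectors of 201 values each. That is the input sequence. On the output side, the model's tokenizer supplies a sequence of character or subword token IDs.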

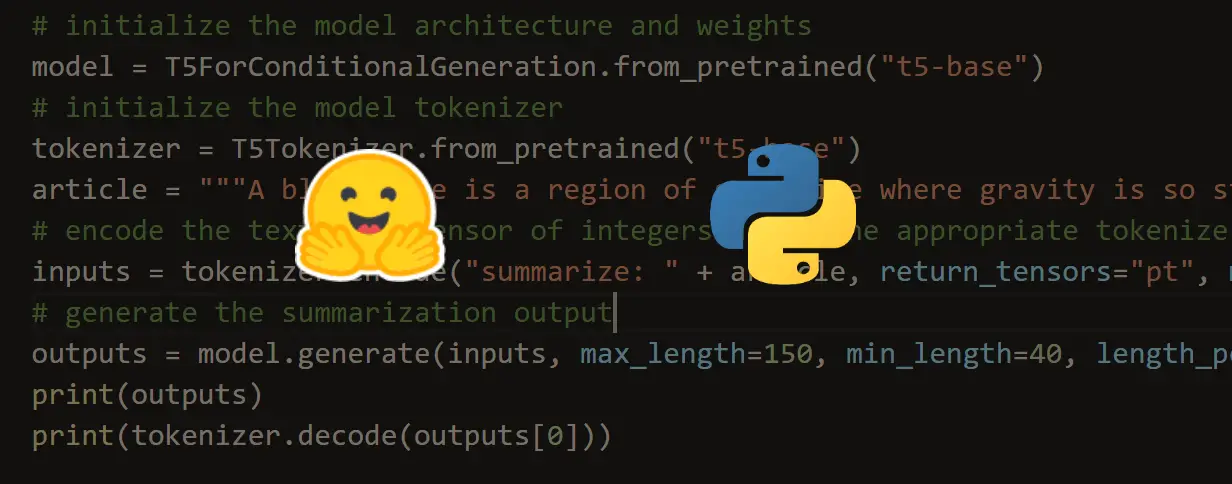

This notebook shows how to fine-tune multilingual pretrained speech models for automatic speech recognition. It is built to run on the TIMIT dataset with any speech model.

Fine-tune Wav2Vec2 on the MInDS-14 dataset to transcribe audio to text, then use your fine-tuned model for inference. To see all architectures and checkpoints compatible with this task, we recommend checking the task page. Before you begin, make sure you have all the necessary libraries installed.

In recent years, advances in deep learning have significantly improved the efficiency and accuracy of these systems. In this article, we'll focus on building a speech-to-text system using the PyTorch library and transformer architectures. Learn how to do automatic speech recognition (ASR) using APIs and/or by running Whisper inference directly with Transformers in Python.
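Wav2Vec2 models fine-tuned for transcription, such as `Wav2Vec2ForCTC`, are trained with a CTC (connectionist temporal classification) objective. At inference time they predict one token ID per audio frame, and those IDs must then be collapsed into text. The pure-Python sketch below shows greedy CTC decoding. It assumes Wav2Vec2's character-tokenizer conventions: the padding token acts as the CTC blank, and `|` marks word boundaries. The blank ID of 0 and the toy vocabulary in the test are hypothetical. With Transformers itself, `processor.batch_decode(torch.argmax(logits, dim=-1))` normally performs this step for you.

```python
from itertools import groupby
from typing import Sequence


def ctc_greedy_decode(
    frame_ids: Sequence[int],
    vocab: Sequence[str],
    blank_id: int = 0,
    word_delimiter: str = "|",
) -> str:
    """Collapse per-frame argmax IDs into text using the CTC rules.

    1. Merge consecutive repeats of the same ID.
    2. Drop the blank token.
    3. Map IDs to characters and turn the word delimiter into spaces.
    """
    collapsed = [token_id for token_id, _ in groupby(frame_ids)]
    chars = [vocab[token_id] for token_id in collapsed if token_id != blank_id]
    return "".join(chars).replace(word_delimiter, " ").strip()
```

Merging repeats before removing blanks is the key design choice. A blank between two identical IDs keeps them as separate characters, which is how CTC can emit double letters such as the "ll" in "hello".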
