Spark on AWS Lambda: An Apache Spark Runtime for AWS Lambda

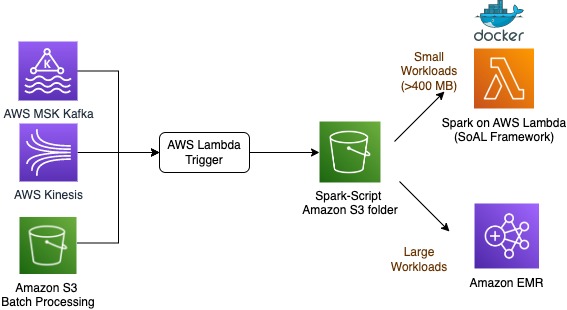

Spark on AWS Lambda (SoAL) is a framework that runs Apache Spark workloads on AWS Lambda. It is designed for both batch and event-based workloads, handling data payload sizes from 10 KB to 400 MB. SoAL is a standalone installation of Spark: Spark is packaged in a Docker container, and AWS Lambda is used to execute the Spark image.
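
The arrangement described above, a Lambda function that executes a Spark image, can be sketched as a handler that shells out to `spark-submit` in local mode. This is a minimal illustration under stated assumptions, not the SoAL implementation: the `SPARK_SCRIPT_PATH` environment variable, the default script path, and both function names are hypothetical, and `spark-submit` is assumed to be on the container's `PATH`.

```python
import json
import os
import subprocess

# Hypothetical default; the real location depends on how the image is built.
DEFAULT_SCRIPT = "/var/task/job.py"


def build_spark_submit_command(script_path, script_args=()):
    """Return the argv for running a PySpark script in local mode."""
    return [
        "spark-submit",
        "--master", "local[*]",  # local mode: no cluster manager involved
        script_path,
        *script_args,            # everything after the script goes to the script
    ]


def lambda_handler(event, context):
    """Lambda entry point: run the PySpark script and fail loudly on error."""
    script = os.environ.get("SPARK_SCRIPT_PATH", DEFAULT_SCRIPT)
    # Forward the triggering event to the script as a JSON string (an assumption).
    command = build_spark_submit_command(script, ["--event", json.dumps(event)])
    result = subprocess.run(command, capture_output=True, text=True)
    if result.returncode != 0:
        # Surface the tail of stderr so the failure shows up in the Lambda logs.
        raise RuntimeError(f"spark-submit failed: {result.stderr[-2000:]}")
    return {"status": "ok", "returncode": result.returncode}
```

Keeping the command construction in its own function makes it possible to check the arguments without having Spark installed.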

SoAL provides a scalable, cost-effective serverless option for running Apache Spark analytics workloads on AWS Lambda. The Spark image pulls in a PySpark script. This makes it a good fit for event-driven pipelines with smaller files, where heavier engines such as Amazon EMR or AWS Glue incur overhead costs and operate more slowly. The Spark on Lambda engine provides a containerized Apache Spark execution environment running within AWS Lambda. It enables real-time data processing for datasets under 500 MB through a Docker container that packages PySpark with configurable data lake frameworks.
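
One way to act on the size guidance above is to check the incoming object size before deciding where to run a job. The sketch below assumes the pipeline is triggered by Amazon S3 event notifications (which report each object's size under `Records[].s3.object.size`); the 500 MB limit comes from the text, while its interpretation as 500 × 1024 × 1024 bytes, the function names, and the returned labels are assumptions made for illustration.

```python
# "Under 500 MB" per the text; interpreting MB as 1024 * 1024 bytes is an assumption.
LAMBDA_MAX_BYTES = 500 * 1024 * 1024


def total_size_from_s3_event(event):
    """Sum the object sizes reported in an S3 event notification."""
    return sum(
        record["s3"]["object"].get("size", 0)
        for record in event.get("Records", [])
    )


def choose_engine(size_bytes, limit=LAMBDA_MAX_BYTES):
    """Route small inputs to Spark on Lambda, larger ones to a heavier engine."""
    if size_bytes < 0:
        raise ValueError("size_bytes must be non-negative")
    return "spark-on-lambda" if size_bytes < limit else "emr-or-glue"
```

For example, an event describing a single 1 KB object would be routed to the Lambda path, while a 600 MB object would be sent to the heavier engine.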

The Spark on AWS Lambda (SoAL) GitHub repository contains the Spark runtime Docker image. The framework provides a standalone, or local-mode, Apache Spark installation within a Docker container, and AWS Lambda runs that container together with PySpark scripts. Upon successful command execution, an AWS Lambda function is created using the Spark container as its runtime. To test the AWS Lambda functions, use the PySpark scripts found in ...

We can package the Spark application in a Docker container and use AWS Lambda to execute the Spark image. In this blog, we will see how to run a PySpark application on AWS Lambda.

I want to create a Lambda function using PySpark to ingest a small amount of data into a data lake, in order to avoid the (costly) overhead of Glue jobs. I created a custom Docker image. Even though the image works locally, I fail to execute it within AWS.
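
For the small-ingest use case described above, a PySpark script running in local mode might look like the following sketch. It is not taken from the SoAL repository, and it is not offered as a diagnosis of the failure mentioned in the question. The function names, the CSV-to-Parquet flow, and the chosen settings are assumptions; the one environmental fact relied on is that Lambda's filesystem is writable only under `/tmp`, which is why Spark's scratch directory is pointed there. `pyspark` is imported lazily so the configuration helper can be inspected without Spark installed.

```python
def local_spark_conf(tmp_dir="/tmp/spark"):
    """Settings for a single-node Spark session inside a Lambda container."""
    return {
        "spark.master": "local[*]",               # local mode, no cluster
        "spark.driver.bindAddress": "127.0.0.1",  # stay on the loopback interface
        "spark.local.dir": tmp_dir,               # scratch space under writable /tmp
    }


def build_spark_session(app_name="small-ingest", conf=None):
    """Create a SparkSession from the given settings (requires pyspark)."""
    from pyspark.sql import SparkSession  # lazy import, see lead-in

    builder = SparkSession.builder.appName(app_name)
    for key, value in (conf or local_spark_conf()).items():
        builder = builder.config(key, value)
    return builder.getOrCreate()


def ingest(spark, source_path, target_path):
    """Read a CSV file with a header row and append it as Parquet."""
    df = spark.read.option("header", "true").csv(source_path)
    df.write.mode("append").parquet(target_path)
    return df.count()
```

A script in the container would call `build_spark_session()` once and then `ingest(spark, source, target)` with the paths it receives; reading from or writing to Amazon S3 additionally needs the appropriate Hadoop S3 connector on the classpath, which this sketch does not configure.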

