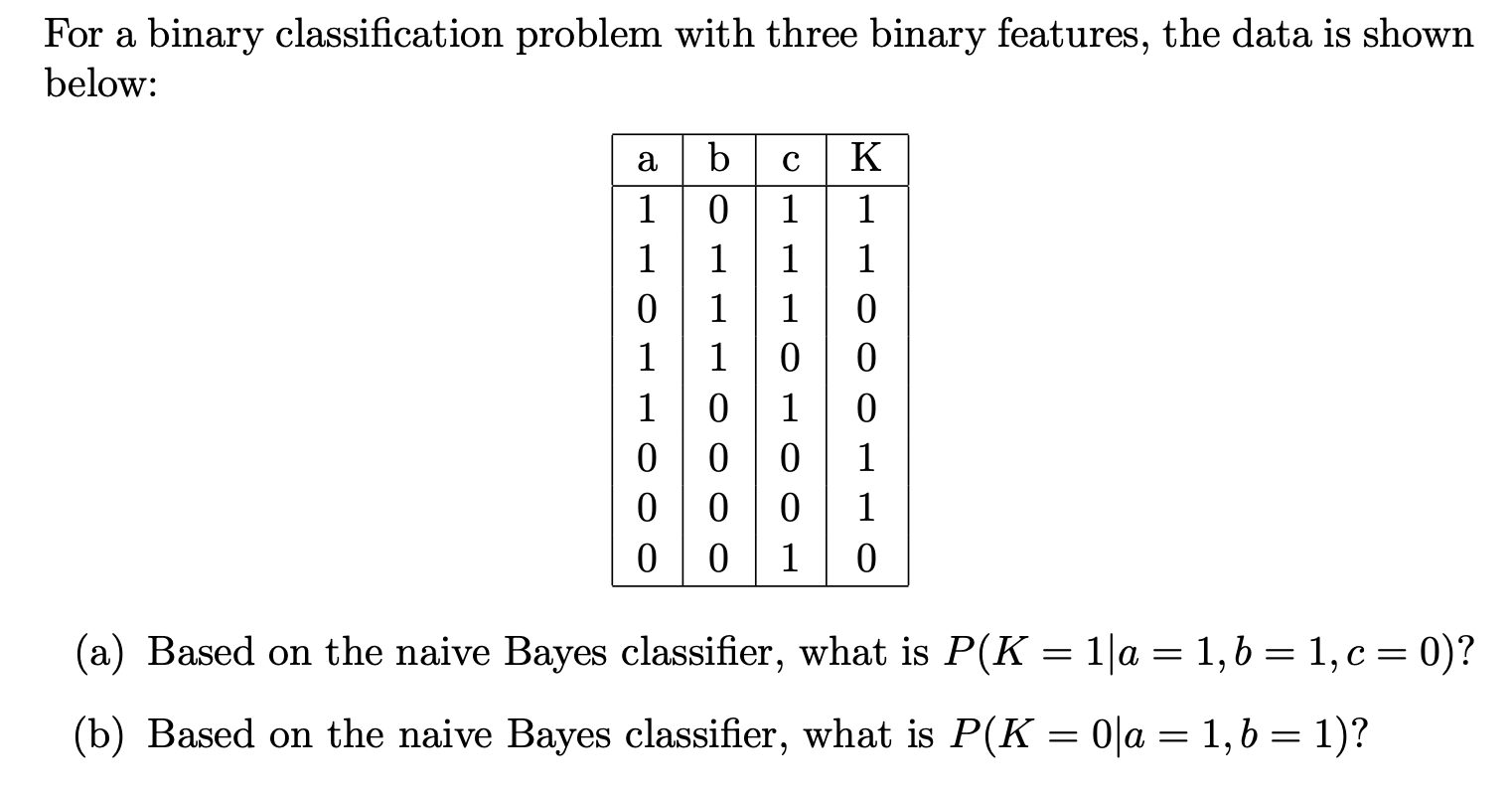

Solved For A Binary Classification Problem With Three Binary Chegg


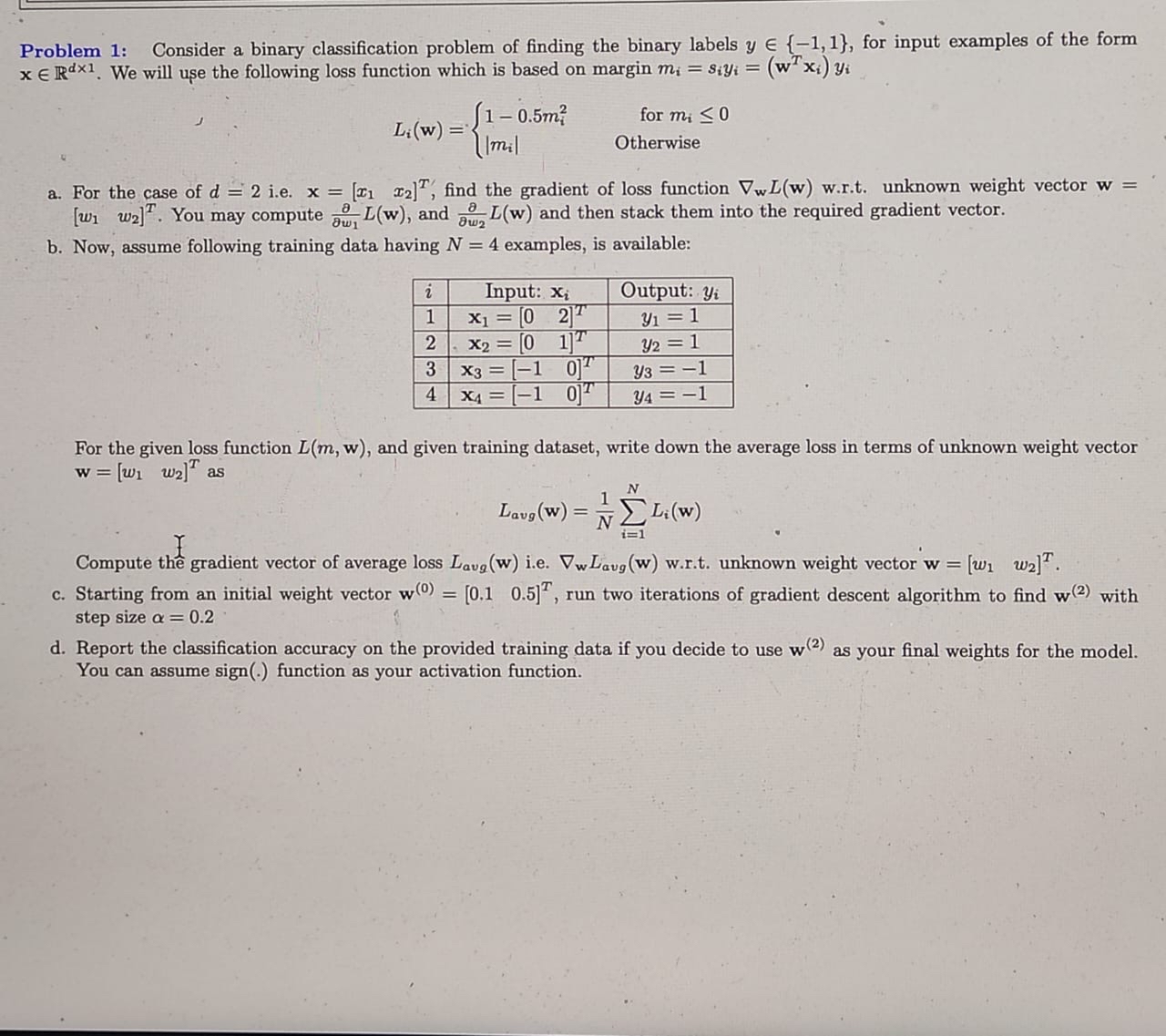

Solved Problem 1 Consider A Binary Classification Problem Chegg

Question: The linear discriminant function for a binary classification problem with three features is the plane defined by the equation 3x1 2x2 4x3 = 18, where the features are x1, x2, and x3.

For a binary classification problem, your task is to classify 10 instances using a combination of 3 base classifiers, each of which has an accuracy of 70%.

A logistic regression model is fitted for a binary classification problem with three (3) input features. The optimal parameters are: fo = 2 of = 1.5 ez = 2 f = 5 z = z. According to the model, the probability that a particular triple (an, a, as).
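For the question about combining 3 base classifiers that are each 70% accurate, the ensemble accuracy under majority voting can be worked out with the binomial distribution. The sketch below assumes the classifiers make their errors independently, which the question does not state; the function name `majority_vote_accuracy` is made up for illustration.

```python
from math import comb


def majority_vote_accuracy(n_classifiers: int, p: float) -> float:
    """Probability that a strict majority of n classifiers is correct.

    Assumes each classifier is correct with probability p and that the
    classifiers err independently (an assumption, not given in the question).
    """
    k_min = n_classifiers // 2 + 1  # smallest number of correct votes that wins
    return sum(
        comb(n_classifiers, k) * p**k * (1 - p) ** (n_classifiers - k)
        for k in range(k_min, n_classifiers + 1)
    )


acc = majority_vote_accuracy(3, 0.7)
print(round(acc, 3))       # 0.784
print(round(10 * acc, 2))  # expected number of correct instances out of 10: 7.84
```

Under the independence assumption, the ensemble is right when at least two of the three classifiers are right: 3 × 0.7² × 0.3 + 0.7³ = 0.784. If the three classifiers were perfectly correlated instead, the vote would gain nothing and accuracy would stay at 0.70.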

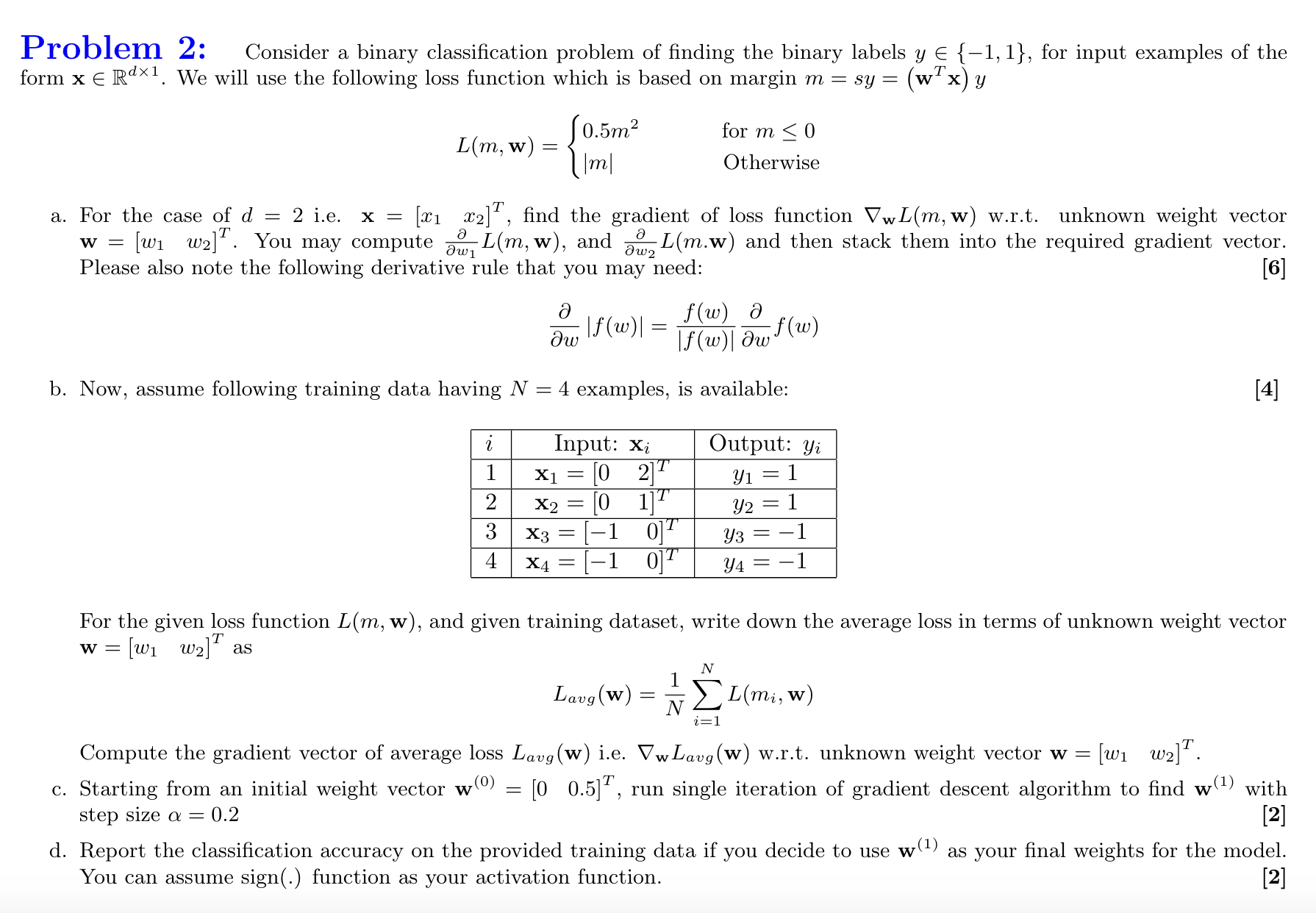

Solved Problem 2 Consider A Binary Classification Problem Chegg

First, let's define the kernel functions: (a) linear kernel: $k(x, x') = x \cdot x'$; (b) polynomial kernel with degree 3: $k(x, x') = (x \cdot x' + 1)^3$. Now, let's process the examples one by one using the kernelized perceptron algorithm.

Luckily, there's a solution for this problem: stratified sampling. Stratifying our data ensures that the ratio of distinct values in the given column remains the same in our training and test sets.

With all three estimators agreeing on the same 70% of the data, max voting will correctly classify that 70%. However, there's a possibility that on the remaining 30% of the data, at least two of the three estimators might agree on the correct classification.

In this post, you discovered the use of PyTorch to build a binary classification model. You learned how you can work through a binary classification problem step by step with PyTorch.
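Returning to the kernelized perceptron mentioned above, the following is a minimal sketch of how the two kernels could be plugged into a mistake-driven perceptron. The training examples from the original problem are not available here, so the XOR-style data, the ±1 label convention, and the helper names (`kernel_perceptron`, `predict`) are illustrative assumptions. The polynomial kernel is assumed to have the standard form $(x \cdot x' + 1)^3$.

```python
def linear_kernel(x, z):
    """k(x, x') = x . x'"""
    return sum(a * b for a, b in zip(x, z))


def poly3_kernel(x, z):
    """k(x, x') = (x . x' + 1)^3 (assumed standard form)."""
    return (linear_kernel(x, z) + 1) ** 3


def decision_score(X, y, alpha, kernel, x):
    """Weighted sum of kernel evaluations against all training points."""
    return sum(a * yj * kernel(xj, x) for a, xj, yj in zip(alpha, X, y))


def kernel_perceptron(X, y, kernel, epochs=20):
    """Process examples one by one; count a mistake when y_i * score <= 0."""
    alpha = [0] * len(X)  # alpha[i] = number of mistakes made on example i
    for _ in range(epochs):
        mistakes = 0
        for i, (xi, yi) in enumerate(zip(X, y)):
            if yi * decision_score(X, y, alpha, kernel, xi) <= 0:
                alpha[i] += 1
                mistakes += 1
        if mistakes == 0:  # a full pass without errors: stop early
            break
    return alpha


def predict(X, y, alpha, kernel, x):
    return 1 if decision_score(X, y, alpha, kernel, x) > 0 else -1


# Hypothetical XOR-style data with labels in {-1, +1}
X = [(0, 0), (0, 1), (1, 0), (1, 1)]
y = [-1, 1, 1, -1]

alpha = kernel_perceptron(X, y, poly3_kernel)
print([predict(X, y, alpha, poly3_kernel, x) for x in X])  # [-1, 1, 1, -1]
```

On this toy data the degree-3 polynomial kernel separates the classes, while the linear kernel cannot, because no linear function of the two features reproduces the XOR labeling.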
