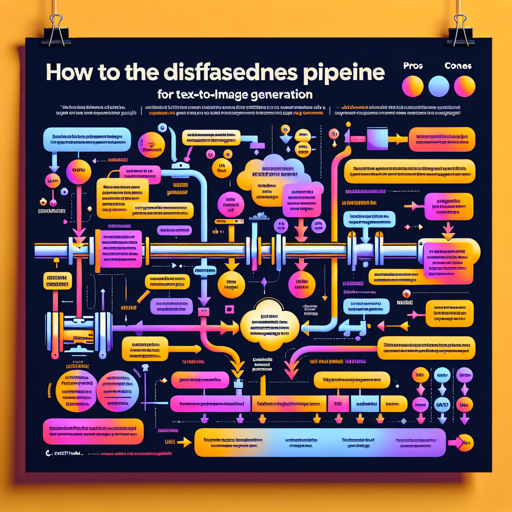

Simple Image Generation Pipeline With Diffusers

Use ErnieImagePipeline to generate images from text prompts. The pipeline enables a prompt enhancer (PE) by default, which rewrites the user’s raw prompt to improve output quality, though it may reduce instruction-following accuracy. You’ll learn the theory behind diffusion models and how to use the Diffusers library to generate images, fine-tune your own models, and more.

This tutorial shows a practical, production-minded workflow for image generation using Hugging Face Diffusers. Start by resolving dependency conflicts and pinning runtime libraries to avoid runtime errors in image processing. The article implements a text-to-image application with the Diffusers library and demonstrates two pipelines built on two different pretrained Stable Diffusion models. With an unconditional pipeline, generating an image is even simpler: you run the pipeline without giving it any input, and it draws a random initial noise sample and then iterates the diffusion process. Once the environment is stable, the workflow generates high-quality images from text prompts using Stable Diffusion with an optimized scheduler.
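The text-to-image step with a swapped-in scheduler can be sketched roughly as follows. This is a minimal sketch, not the original article's code: the GenerationSettings class, the generate function, the model ID, and the choice of DPMSolverMultistepScheduler as the "optimized scheduler" are all assumptions made for illustration. The heavy imports sit inside generate so that the settings helper can be used without a GPU or a model download.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class GenerationSettings:
    """Hypothetical container for the arguments passed to the pipeline call."""
    prompt: str
    negative_prompt: str = ""
    num_inference_steps: int = 25
    guidance_scale: float = 7.5
    height: int = 512
    width: int = 512

    def call_kwargs(self) -> dict:
        # Field names match keyword arguments of StableDiffusionPipeline.__call__.
        return asdict(self)


def generate(settings: GenerationSettings,
             model_id: str = "stable-diffusion-v1-5/stable-diffusion-v1-5",
             seed: int = 0):
    """Load a Stable Diffusion checkpoint, swap its scheduler, return one PIL image.

    The default model_id is an assumption; substitute whichever checkpoint you use.
    """
    # Imported lazily: these require torch and diffusers to be installed.
    import torch
    from diffusers import StableDiffusionPipeline, DPMSolverMultistepScheduler

    device = "cuda" if torch.cuda.is_available() else "cpu"
    dtype = torch.float16 if device == "cuda" else torch.float32

    pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=dtype)
    # Reuse the checkpoint's scheduler config with a multistep solver,
    # which typically needs fewer denoising steps than the default.
    pipe.scheduler = DPMSolverMultistepScheduler.from_config(pipe.scheduler.config)
    pipe = pipe.to(device)

    # A seeded generator makes the initial noise, and so the image, reproducible.
    generator = torch.Generator(device=device).manual_seed(seed)
    return pipe(**settings.call_kwargs(), generator=generator).images[0]


if __name__ == "__main__":
    image = generate(GenerationSettings(prompt="a lighthouse at dusk, oil painting"))
    image.save("lighthouse.png")
```

Keeping the call arguments in one frozen object makes it easier to log exactly which settings produced a given image, which helps when comparing two checkpoints on the same prompt.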

Text-to-image generation with the Diffusers library builds on diffusion models, DDPM pipelines, and a few practical steps in Python. One tutorial focuses on unconditional image generation, whose main purpose is to create novel and unique images without any specific ... Another looks at a simple and flexible pipeline for training diffusion models for unconditional image generation, primarily using the Diffusers and Accelerate libraries built by Hugging Face.

All pipelines in the Stable Diffusion family use the same core components: a VAE for latent encoding and decoding, a UNet2DConditionModel for denoising, a CLIP text encoder for prompt conditioning, and a scheduler for the diffusion process.
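To make the unconditional sampling loop concrete (start from random noise, then iterate the reverse diffusion process), here is a toy sketch in plain Python. It is not the Diffusers implementation: the "image" is a single number, the function names linear_betas, sample, and predict_noise are hypothetical, the linear beta range is an assumed schedule, and the trained denoiser is replaced by a closed-form noise prediction that is only valid when every training sample equals target.

```python
import math
import random


def linear_betas(num_steps: int, beta_start: float = 1e-4,
                 beta_end: float = 0.02) -> list[float]:
    """Linearly spaced noise variances, one per diffusion step (assumed schedule)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def sample(num_steps: int = 50, target: float = 0.7, seed: int = 0) -> float:
    """Run DDPM-style ancestral sampling on a one-number 'image'."""
    rng = random.Random(seed)
    betas = linear_betas(num_steps)
    alphas = [1.0 - b for b in betas]

    # Cumulative products: alpha_bars[t] = alphas[0] * ... * alphas[t].
    alpha_bars = []
    running = 1.0
    for a in alphas:
        running *= a
        alpha_bars.append(running)

    def predict_noise(x_t: float, t: int) -> float:
        # Stand-in for a trained denoising network. If all training data
        # equals `target`, the ideal noise estimate has this closed form.
        return (x_t - math.sqrt(alpha_bars[t]) * target) / math.sqrt(1.0 - alpha_bars[t])

    x = rng.gauss(0.0, 1.0)  # random initial noise sample
    for t in reversed(range(num_steps)):
        eps = predict_noise(x, t)
        # Posterior mean of x_{t-1} given x_t and the predicted noise.
        mean = (x - betas[t] / math.sqrt(1.0 - alpha_bars[t]) * eps) / math.sqrt(alphas[t])
        # Fresh noise is added at every step except the last one.
        noise = rng.gauss(0.0, 1.0) if t > 0 else 0.0
        x = mean + math.sqrt(betas[t]) * noise
    return x


if __name__ == "__main__":
    print(sample())
```

Because the stand-in predictor is exact for this one-point dataset, the final step lands on target up to floating-point rounding, whatever the seed. In a real pipeline the same loop runs over image tensors, with a trained UNet supplying the noise estimate and a scheduler object performing the update.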
