Shared Memory Communications Over RDMA

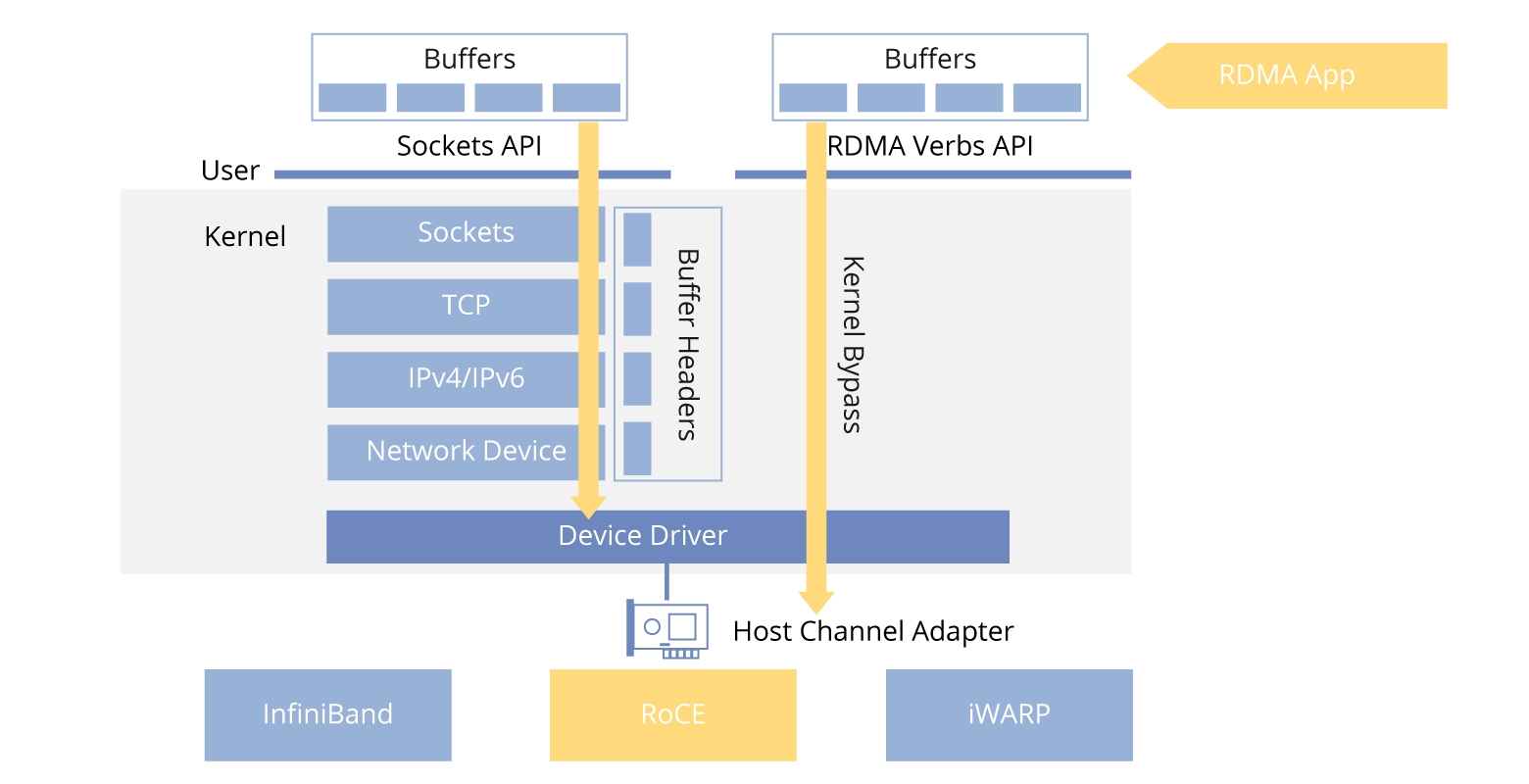

The Shared Memory Communications over Remote Direct Memory Access (SMC-R) protocol provides direct, high-speed, low-latency, memory-to-memory (peer-to-peer) communications. This document describes IBM's SMC-R protocol, which provides RDMA communications to TCP endpoints in a manner that is transparent to socket applications. It further provides for dynamic discovery of partner RDMA capabilities and dynamic setup of RDMA.
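"Transparent to socket applications" means that the application keeps using the ordinary stream socket API and contains no RDMA-specific calls. The sketch below illustrates that point with a plain TCP echo round trip on loopback. It does not itself use RDMA or SMC-R; whether an SMC-R path is ever taken for such a connection depends on operating system and network configuration outside the program. The function name `echo_roundtrip` is hypothetical and chosen only for this illustration.

```python
import socket
import threading


def echo_roundtrip(payload: bytes) -> bytes:
    """Send payload to a loopback echo server over a plain TCP socket.

    Illustrative only: this is ordinary socket code with no RDMA calls,
    which is the kind of application a transparent protocol leaves unchanged.
    """
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
    server.listen(1)
    port = server.getsockname()[1]

    def serve_once() -> None:
        # Accept a single connection and echo everything until EOF.
        conn, _ = server.accept()
        with conn:
            while True:
                chunk = conn.recv(4096)
                if not chunk:
                    break
                conn.sendall(chunk)

    worker = threading.Thread(target=serve_once, daemon=True)
    worker.start()

    received = bytearray()
    with socket.create_connection(("127.0.0.1", port)) as client:
        client.sendall(payload)
        client.shutdown(socket.SHUT_WR)  # tell the server the request is complete
        while True:
            chunk = client.recv(4096)
            if not chunk:
                break
            received.extend(chunk)

    worker.join(timeout=5)
    server.close()
    return bytes(received)


if __name__ == "__main__":
    print(echo_roundtrip(b"hello over a plain TCP socket"))
```

The application logic is the same regardless of what transport optimisation sits underneath the socket layer, which is the property the protocol description emphasises.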

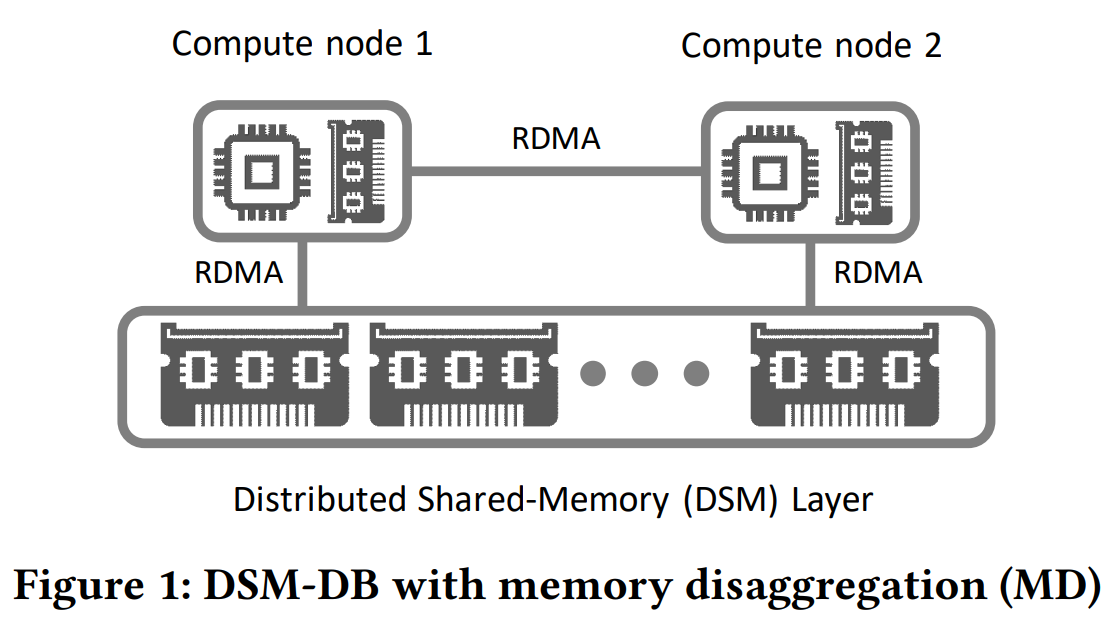

Configuring Shared Memory Communications over RDMA Version 2

RDMA is a fundamental communication infrastructure in high-performance computing (HPC). However, as the number of RDMA connections increases, system performance rapidly declines and memory consumption increases sharply. Previous research demonstrates that sharing RDMA connections among processes is necessary and effective to address the scalability problem. Unfortunately, previous work shares ...

While hardware and software improvements have greatly accelerated modern database systems' internal operations, the decades-old, stream-based socket API for external communication is still unchanged. We show experimentally that, for modern high-performance systems, networking has become a performance bottleneck. Therefore, we argue that the communication stack needs to be redesigned to fully exploit ...

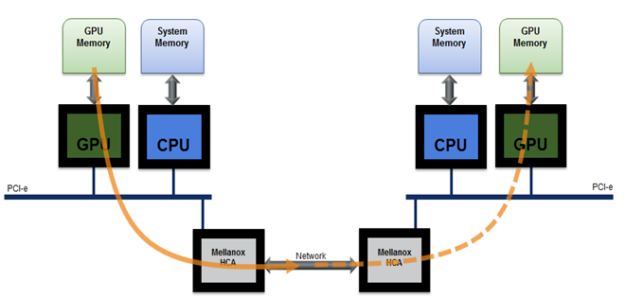

Remote Direct Memory Access (RDMA)

SMC-R is designed to provide highly efficient, low-latency data transfer between z/OS systems over RoCE in a manner transparent to TCP socket applications.

We propose L5, a high-performance communication layer for database systems. L5 rethinks the flow of data in and out of the database system and is based on direct memory access techniques for intra-datacenter (RDMA) and intra-machine communication (shared memory).

To enable GPUDirect RDMA with vLLM, configure the following settings:

- IPC_LOCK security context: add the IPC_LOCK capability to the container's security context to lock memory pages and prevent swapping to disk.
- Shared memory with /dev/shm: mount /dev/shm in the pod spec to provide shared memory for interprocess communication (IPC).
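The two settings above can be sketched as a Kubernetes pod manifest built as a Python dictionary. This is a minimal illustration under stated assumptions, not a complete or authoritative vLLM deployment: the pod name, container name, volume name, image string, and `shm_size` default are placeholders invented for this example, and a real deployment would also need GPU and RDMA device resources that the text does not specify. The helper `build_pod_spec` is hypothetical.

```python
import json


def build_pod_spec(image: str, shm_size: str = "2Gi") -> dict:
    """Build a pod manifest with IPC_LOCK and a memory-backed /dev/shm.

    All names and sizes here are illustrative placeholders.
    """
    container = {
        "name": "vllm",  # placeholder container name
        "image": image,
        # Allow the container to lock memory pages so they are not swapped out.
        "securityContext": {"capabilities": {"add": ["IPC_LOCK"]}},
        # Mount the shared-memory volume at /dev/shm for interprocess communication.
        "volumeMounts": [{"name": "dshm", "mountPath": "/dev/shm"}],
    }
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": "vllm-rdma-example"},  # placeholder pod name
        "spec": {
            "containers": [container],
            "volumes": [
                # emptyDir with medium "Memory" is backed by tmpfs.
                {"name": "dshm", "emptyDir": {"medium": "Memory", "sizeLimit": shm_size}}
            ],
        },
    }


if __name__ == "__main__":
    # The image reference below is a placeholder, not a real registry path.
    print(json.dumps(build_pod_spec("example.invalid/vllm:placeholder"), indent=2))
```

Building the manifest as data keeps the two required settings visible in one place; the resulting dictionary can be serialized to JSON or YAML by whatever tooling the cluster workflow already uses.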
