Shared And Distributed Memory Architectures

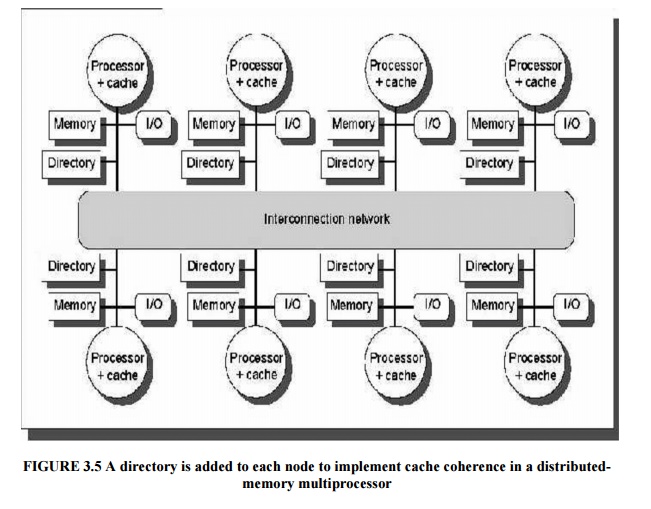

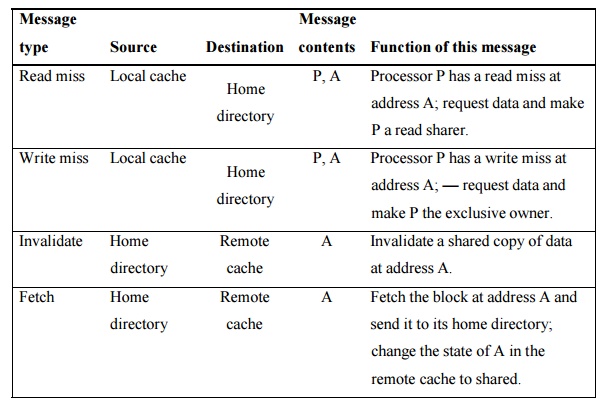

Distributed Shared Memory Architectures

Explore the landscape of parallel programming, shared memory vs. distributed memory, and uncover their strengths, weaknesses, and optimal use cases for faster, more efficient computing. In a distributed shared memory (DSM) system, a layer of code, implemented either in the operating system kernel or as a runtime routine, manages the mapping between shared memory addresses and physical memory locations. Each node's physical memory holds pages of the shared virtual address space, so a process on any node can address the whole shared space even though its pages are physically spread across the nodes.
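
The address mapping described above can be sketched in a few lines. This is a minimal, single-process simulation under stated assumptions, not how any particular DSM system is implemented. The names `DsmLayer` and `Node`, the 4096-byte `PAGE_SIZE`, the round-robin initial page placement, and the single-owner, migrate-on-access policy are all hypothetical choices made for illustration.

```python
PAGE_SIZE = 4096  # hypothetical page size in bytes


class Node:
    """One machine in the cluster; its physical memory holds some shared pages."""

    def __init__(self, node_id):
        self.node_id = node_id
        self.frames = {}  # page number -> bytearray standing in for a physical frame


class DsmLayer:
    """Hypothetical runtime layer mapping shared virtual addresses to node memory.

    Assumed policy: every page has exactly one owner, and an access from any
    other node migrates the page to that node (no replication, no invalidation).
    """

    def __init__(self, num_nodes, num_pages):
        self.nodes = [Node(i) for i in range(num_nodes)]
        self.owner = {}  # page number -> id of the node currently holding it
        self.migrations = 0
        for page in range(num_pages):
            home = page % num_nodes  # round-robin initial placement
            self.owner[page] = home
            self.nodes[home].frames[page] = bytearray(PAGE_SIZE)

    def _translate(self, addr):
        # Split a shared virtual address into (page number, offset within the page).
        return divmod(addr, PAGE_SIZE)

    def _fault_in(self, node_id, page):
        # Simulated page fault: if another node owns the page, move its frame here.
        owner = self.owner[page]
        if owner != node_id:
            frame = self.nodes[owner].frames.pop(page)
            self.nodes[node_id].frames[page] = frame
            self.owner[page] = node_id
            self.migrations += 1
        return self.nodes[node_id].frames[page]

    def read(self, node_id, addr):
        page, offset = self._translate(addr)
        return self._fault_in(node_id, page)[offset]

    def write(self, node_id, addr, value):
        page, offset = self._translate(addr)
        self._fault_in(node_id, page)[offset] = value  # value must fit in one byte
```

For example, with `dsm = DsmLayer(num_nodes=2, num_pages=4)`, calling `dsm.write(0, 5000, 42)` touches page 1, which starts on node 1, and migrates it to node 0. A later `dsm.read(1, 5000)` returns 42 after migrating the page back, leaving `dsm.migrations == 2`. Many DSM designs reduce this back-and-forth by replicating read-only copies of a page and invalidating them on writes, which this sketch deliberately omits.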

Memory architecture significantly impacts parallel computing performance and scalability. In terms of hardware architecture, shared memory and distributed memory have been the most commonly deployed architectures since the inception of parallel computers, and this lesson examines these two dominant models, focusing on their key differences, advantages, disadvantages, and practical limitations. In a shared memory system, all processors have access to all of a vector's elements, and any modification is readily visible to the other processors. In a distributed memory system, the vector's elements are instead decomposed into blocks, each stored in and updated by a single processor's local memory (data parallelism).
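
The contrast between the two models for a single vector can be sketched in Python. This is an illustrative simulation under assumptions: `block_range`, `scale_shared`, and `scale_distributed` are hypothetical names, threads stand in for processors sharing one address space, and the "distributed" version runs sequentially, with list slices standing in for each node's private memory rather than real separate machines.

```python
import threading


def block_range(n, num_procs, rank):
    """Return the [start, stop) block of an n-element vector owned by `rank`.

    The first n % num_procs ranks get one extra element, so block sizes
    differ by at most one.
    """
    base, extra = divmod(n, num_procs)
    start = rank * base + min(rank, extra)
    stop = start + base + (1 if rank < extra else 0)
    return start, stop


def scale_shared(vec, alpha, num_threads):
    """Shared memory: every thread writes directly into the same list object."""

    def worker(rank):
        start, stop = block_range(len(vec), num_threads, rank)
        for i in range(start, stop):
            vec[i] *= alpha  # disjoint slices per thread, so no lock is needed

    threads = [threading.Thread(target=worker, args=(r,)) for r in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return vec  # the caller's list was modified in place


def scale_distributed(vec, alpha, num_procs):
    """Distributed memory (simulated): each rank works on a private copy of its block."""
    local_blocks = []
    for rank in range(num_procs):
        start, stop = block_range(len(vec), num_procs, rank)
        local = vec[start:stop]  # a slice is a copy, like data shipped to a node
        local_blocks.append([x * alpha for x in local])
    # The scaled values exist only in the private blocks until explicitly gathered.
    return [x for block in local_blocks for x in block]
```

`block_range(10, 3, r)` yields `(0, 4)`, `(4, 7)`, and `(7, 10)` for ranks 0, 1, and 2, so the first rank takes the leftover element. Both `scale_shared([1, 2, 3, 4, 5], 2, 2)` and `scale_distributed([1, 2, 3, 4, 5], 2, 2)` return `[2, 4, 6, 8, 10]`. The difference is that the shared version mutates the caller's list in place, while the distributed one only produces a full result after the private blocks are gathered.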

Understanding Symmetric And Distributed Shared Memory Architectures

Learn how to compare shared memory and distributed memory architectures based on their features, performance, applications, and future trends. This post delves into the various types of machines and programming styles used in HPC, focusing in particular on shared memory and distributed memory architectures. Shared memory architectures allow multiple processors to access a common memory space, enabling direct communication and data sharing. This approach simplifies programming but introduces challenges in maintaining data consistency and in scaling to many processors. Compared to shared memory systems, distributed memory (or message passing) systems can accommodate a larger number of computing nodes, and this scalability was expected to increase the use of message passing architectures.
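
The two trade-offs above, keeping shared data consistent versus communicating explicitly between nodes, can be sketched with a global sum. This is a simplified, hypothetical illustration: `sum_shared` and `sum_message_passing` are names chosen here, Python threads stand in for processors, and a `queue.Queue` stands in for the network link to a root node. In a real cluster that role is commonly played by a message-passing library such as MPI.

```python
import queue
import threading


def sum_shared(vec, num_threads):
    """Shared memory: all threads add into one shared total, guarded by a lock."""
    total = [0]  # shared state, visible to every thread
    lock = threading.Lock()  # without it, concurrent read-modify-write updates could be lost

    def worker(rank):
        partial = sum(vec[rank::num_threads])  # cyclic decomposition, for brevity
        with lock:
            total[0] += partial

    threads = [threading.Thread(target=worker, args=(r,)) for r in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return total[0]


def sum_message_passing(vec, num_nodes):
    """Distributed memory (simulated): nodes share no data and talk only via messages."""
    inbox = queue.Queue()  # stand-in for the interconnect leading to the root node
    # Scatter: each node gets its own private copy of its share of the vector.
    blocks = [vec[r::num_nodes] for r in range(num_nodes)]

    def node(local_block):
        inbox.put(sum(local_block))  # send the local partial sum to the root

    threads = [threading.Thread(target=node, args=(b,)) for b in blocks]
    for t in threads:
        t.start()
    # Root: receive exactly one message per node and combine them (a reduction).
    result = sum(inbox.get() for _ in range(num_nodes))
    for t in threads:
        t.join()
    return result
```

Both functions return 4950 for `list(range(100))` with four workers. The lock in `sum_shared` is the kind of coordination needed to keep shared data consistent. `sum_message_passing` needs no lock on the vector data because each node only touches its private block, at the cost of explicitly sending every partial result to the root.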
