Shader Intermediates: Normal Mapping (GPU Shader Tutorial)

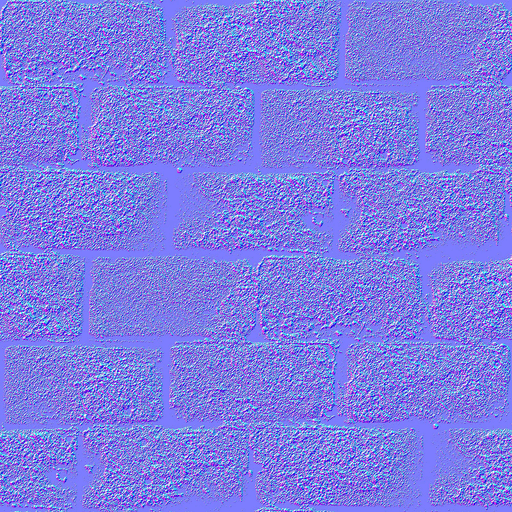

Similar to color mapping, normal mapping assigns normals to the fragments inside a polygon to add more detail to a surface. Normal maps give the appearance of roughness or small bumps on the surface of objects. Today we will talk about normal mapping. Since Tutorial 8: Basic Shading, you know how to get decent shading using triangle normals. One caveat is that, until now, we only had one normal per vertex: inside each triangle the normals vary smoothly, in contrast to the colour, which is sampled from a texture.
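To make the idea of a per-fragment normal stored in a texture concrete, here is a minimal Python sketch of what a fragment shader would typically do with one normal-map texel. It assumes the common convention that each 8-bit channel stores a component remapped from [-1, 1] to [0, 255]; the function names `decode_normal` and `lambert` are hypothetical and not taken from the tutorial.

```python
import math

def decode_normal(rgb):
    """Map an 8-bit RGB texel (0..255 per channel) to a unit vector.

    Assumes the usual encoding: component = (channel / 255) * 2 - 1.
    """
    v = [c / 255.0 * 2.0 - 1.0 for c in rgb]
    length = math.sqrt(sum(c * c for c in v))
    return [c / length for c in v]

def lambert(normal, light_dir):
    """Diffuse term: clamped dot product of two unit vectors."""
    return max(0.0, sum(n * l for n, l in zip(normal, light_dir)))

# The typical bluish texel of a flat area decodes to roughly (0, 0, 1).
flat = decode_normal((128, 128, 255))
# Light shining straight down the tangent-space z axis.
intensity = lambert(flat, [0.0, 0.0, 1.0])
```

Because the decoded normal changes from texel to texel, the diffuse term changes inside a triangle even though the geometry stays flat.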

Since the normal map is, by default, in tangent space, we need to transform all the other variables used in the lighting calculation to tangent space as well. To do that, we construct the tangent matrix in the vertex shader. You can use the 'b' key to toggle normal mapping on and off and see the effect. The normal map is bound to texture unit 2, which is now the standard texture unit for that purpose (0 is the color map and 1 is the shadow map).

In this example, we render a plane, map a texture onto it, and light it using the normal obtained from the normal map. Instead of passing just one texture to the GPU, we send two: the texture itself and its normal map.

In this chapter we start by analyzing how the traditional ray-cast-based normal map projection technique can be implemented on a graphics processor. We also address common problems such as memory limitations and antialiasing.
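The tangent-space transform mentioned above can be sketched on the CPU in Python. This is only an illustration under stated assumptions: the tangent is derived from one triangle's edges and UV deltas (a common approach, not necessarily the one the tutorial uses), the bitangent is taken as the cross product of normal and tangent, and all helper names are hypothetical. In a real renderer this logic would live in the vertex shader, or be precomputed per vertex.

```python
import math

def sub(a, b):
    return [a[i] - b[i] for i in range(3)]

def dot(a, b):
    return sum(a[i] * b[i] for i in range(3))

def cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def normalize(v):
    length = math.sqrt(dot(v, v))
    return [c / length for c in v]

def triangle_tangent(p0, p1, p2, uv0, uv1, uv2):
    """Tangent: the direction in which the u coordinate increases."""
    e1, e2 = sub(p1, p0), sub(p2, p0)
    du1, dv1 = uv1[0] - uv0[0], uv1[1] - uv0[1]
    du2, dv2 = uv2[0] - uv0[0], uv2[1] - uv0[1]
    r = 1.0 / (du1 * dv2 - du2 * dv1)  # assumes non-degenerate UVs
    return normalize([(e1[i] * dv2 - e2[i] * dv1) * r for i in range(3)])

def tbn_basis(tangent, normal):
    """Orthonormal tangent, bitangent, normal (Gram-Schmidt on the tangent)."""
    n = normalize(normal)
    t = normalize(sub(tangent, [c * dot(n, tangent) for c in n]))
    b = cross(n, t)
    return t, b, n

def to_tangent_space(v, t, b, n):
    """Multiply v by the transposed TBN matrix (rows t, b, n)."""
    return [dot(v, t), dot(v, b), dot(v, n)]

# A triangle lying in the XY plane with UVs aligned to x and y.
t = triangle_tangent([0, 0, 0], [1, 0, 0], [0, 1, 0], (0, 0), (1, 0), (0, 1))
t, b, n = tbn_basis(t, [0, 0, 1])
light_ts = to_tangent_space([0.0, 0.0, 1.0], t, b, n)
```

Transforming the light and view vectors once per vertex keeps the per-fragment work down to a texture lookup and a dot product, which is the usual motivation for working in tangent space.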

A texture can be overlaid onto an object through the process of UV mapping, where each vertex of the object is mapped to a coordinate in the texture. By passing the UV coordinates as part of the vertex data, the fragments inside the polygons can have their UV coordinates interpolated by the GPU.

Normal mapping allows you to add surface details without adding any geometry. Typically, in a modeling program like Blender, you create a high-poly and a low-poly version of your mesh. You take the vertex normals from the high-poly mesh and bake them into a texture. This texture is the normal map.
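The UV interpolation described above can be illustrated with a small Python sketch. It assumes plain barycentric weights (ignoring the perspective correction a GPU applies), UV coordinates in [0, 1], nearest-texel sampling, and a texture stored as a list of rows with row 0 at v = 0; the names `interpolate_uv` and `sample_nearest` are hypothetical.

```python
def interpolate_uv(weights, uv0, uv1, uv2):
    """Blend three vertex UVs with barycentric weights that sum to 1."""
    w0, w1, w2 = weights
    u = w0 * uv0[0] + w1 * uv1[0] + w2 * uv2[0]
    v = w0 * uv0[1] + w1 * uv1[1] + w2 * uv2[1]
    return (u, v)

def sample_nearest(texture, uv):
    """Return the texel whose cell contains uv (no filtering)."""
    height, width = len(texture), len(texture[0])
    x = min(int(uv[0] * width), width - 1)
    y = min(int(uv[1] * height), height - 1)
    return texture[y][x]

# A 2x2 stand-in "normal map" holding encoded RGB normals.
normal_map = [[(128, 128, 255), (255, 128, 128)],
              [(128, 255, 128), (128, 128, 255)]]

# A fragment halfway along the edge between the first two vertices.
uv = interpolate_uv((0.5, 0.5, 0.0), (0.0, 0.0), (1.0, 0.0), (0.0, 1.0))
texel = sample_nearest(normal_map, uv)
```

The same interpolated UV would be used to fetch both the color texture and the normal map, so the two stay aligned on the surface.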
