Session 8: Supervised Text Classification and Word2vec

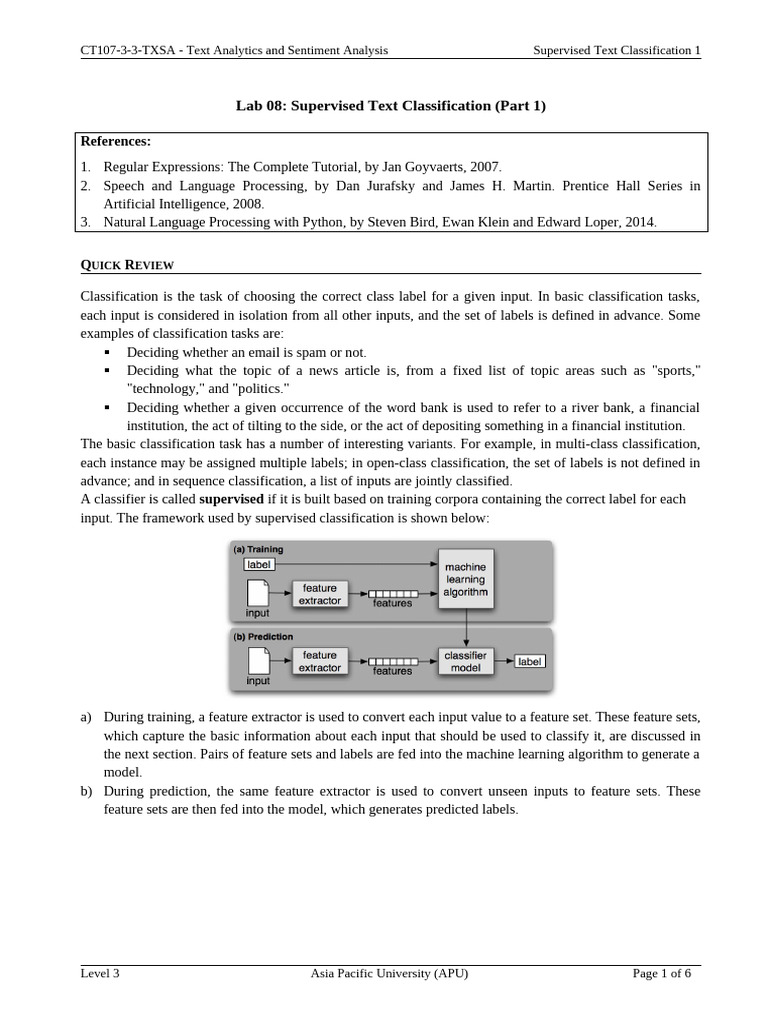

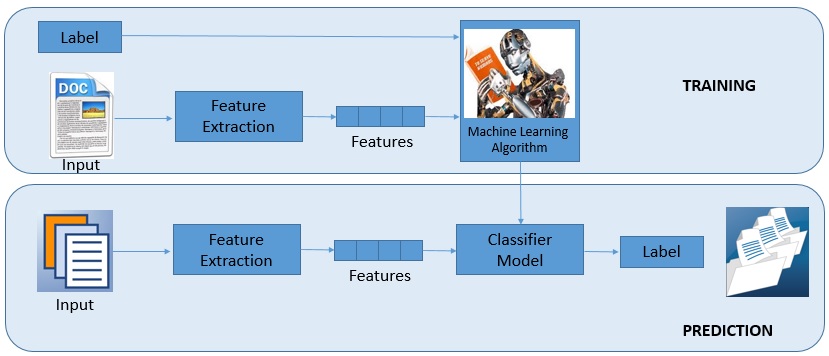

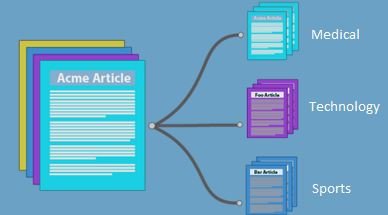

In Session 8, we explore two essential NLP techniques used in modern machine learning: *text classification* and **word2vec**. So far, we have used unsupervised and semi-structured approaches to text analysis (frequency, TF-IDF, similarity, co-occurrence, and word2vec). During these two weeks, we make a key transition to supervised methods.

In this comprehensive guide, we'll explore how to use word2vec for text classification with a practical example that you can implement today. In Part 2 (word2vec), we train a word2vec model to explore how words relate to each other in vector space. You'll see how similar words cluster together and how embeddings capture context and meaning.

Word2vec is a popular algorithm for learning word embeddings, which can then serve as input features for text classification. Learn when to use it over TF-IDF and how to implement it in Python with a CNN. In this case study, I will use the same dataset and show how you can take the numeric representations of words from word2vec and build a classification model on top of them.
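To make the idea of "numeric representations of words as classifier input" concrete, here is a minimal sketch using only the Python standard library. It averages word vectors into one document vector and assigns the class whose centroid is closest by cosine similarity. The three-dimensional vectors in `TOY_VECTORS` are hand-written assumptions for illustration, not output from a trained word2vec model, and the nearest-centroid rule stands in for whatever classifier (a CNN, logistic regression, and so on) a real pipeline would use.

```python
import math

# Hypothetical 3-dimensional "embeddings", hand-written for illustration only.
# A trained word2vec model would supply learned vectors, typically 100+ dimensions.
TOY_VECTORS = {
    "great": [0.9, 0.1, 0.0],
    "love": [0.8, 0.2, 0.1],
    "excellent": [0.9, 0.0, 0.1],
    "terrible": [0.1, 0.9, 0.0],
    "hate": [0.2, 0.8, 0.1],
    "awful": [0.0, 0.9, 0.1],
    "movie": [0.5, 0.5, 0.9],
    "film": [0.5, 0.5, 0.8],
}


def document_vector(text, vectors=TOY_VECTORS):
    """Average the vectors of known tokens; unknown tokens are skipped."""
    known = [vectors[tok] for tok in text.lower().split() if tok in vectors]
    if not known:
        dim = len(next(iter(vectors.values())))
        return [0.0] * dim
    return [sum(col) / len(known) for col in zip(*known)]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def fit_centroids(texts, labels):
    """Compute one mean document vector per class label."""
    grouped = {}
    for text, label in zip(texts, labels):
        grouped.setdefault(label, []).append(document_vector(text))
    return {
        label: [sum(col) / len(vecs) for col in zip(*vecs)]
        for label, vecs in grouped.items()
    }


def predict(text, centroids):
    """Return the label whose centroid is most similar to the document vector."""
    vec = document_vector(text)
    return max(centroids, key=lambda label: cosine(vec, centroids[label]))


# Tiny labelled training set (also hypothetical).
train_texts = ["great movie", "love film", "terrible movie", "hate film"]
train_labels = ["pos", "pos", "neg", "neg"]
centroids = fit_centroids(train_texts, train_labels)

print(predict("excellent film", centroids))
print(predict("awful movie", centroids))
```

Averaging discards word order, which is one reason sequence models such as CNNs are often applied to the individual word vectors instead. It is still a common baseline because it turns documents of any length into fixed-size feature vectors.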

In the next episode, we'll train a word2vec model using both training methods (CBOW and skip-gram) and empirically evaluate the performance of each. We'll also see how training word2vec models from scratch (rather than using a pretrained model) can be beneficial in some circumstances.

A machine learning pipeline for binary text classification using word2vec embeddings and multiple classifiers, with detailed performance evaluation and visualization.

To continue training, you'll need the full `Word2Vec` object state, as stored by `save()`, not just the `KeyedVectors`. You can perform various NLP tasks with a trained model. Some of the operations are already built in; see `gensim.models.keyedvectors`.

This tutorial has shown you how to implement a skip-gram word2vec model with negative sampling from scratch and visualize the obtained word embeddings. To learn more about word vectors and ...
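As a rough illustration of what "skip-gram with negative sampling" means at the data level, the sketch below builds the training examples such a model would see: each (center, context) pair inside a window is a positive example, and a few randomly drawn words are attached as negatives. The function names, the toy sentence, and the parameters `window`, `k`, and `seed` are assumptions made for this example. Negatives are drawn uniformly here for simplicity, whereas the original word2vec implementation samples them from a smoothed unigram distribution.

```python
import random


def skipgram_pairs(tokens, window=2):
    """Return (center, context) pairs for tokens within `window` positions."""
    pairs = []
    for i, center in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:
                pairs.append((center, tokens[j]))
    return pairs


def with_negative_samples(pairs, vocab, k=2, seed=0):
    """Label each true pair 1 and add up to k random negatives labelled 0.

    Simplification: negatives are sampled uniformly from the vocabulary,
    excluding the center and the true context word.
    """
    rng = random.Random(seed)
    examples = []
    for center, context in pairs:
        examples.append((center, context, 1))
        candidates = [w for w in vocab if w != center and w != context]
        for negative in rng.sample(candidates, min(k, len(candidates))):
            examples.append((center, negative, 0))
    return examples


tokens = "the cat sat on the mat".split()
pairs = skipgram_pairs(tokens, window=1)
examples = with_negative_samples(pairs, sorted(set(tokens)), k=2)

for example in examples[:6]:
    print(example)
```

A skip-gram model is then trained to score the positive pairs high and the negative pairs low, which avoids computing a softmax over the whole vocabulary at every step.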
