Sequence AI GitHub

Sequenceai has 4 repositories available. Follow their code on GitHub. GitHub is where sequence.ai builds software.

Sequenceai GitHub

Sequence AI Labs has one repository available. Follow their code on GitHub. Contribute to zhimingdeng5 sequence ai development by creating an account on GitHub. This project develops a high-performance AI agent for the board game Sequence, combining reinforcement learning, heuristic search, and strategic planning to operate in a stochastic, adversarial environment. Contribute to ron12777 sequence ai development by creating an account on GitHub.

Sequencemodel GitHub

In this notebook we'll be building a machine learning model to go from one sequence to another, using PyTorch and torchtext. An attention mechanism will help your model understand where it should focus its attention given a sequence of inputs. This week, you will also learn about speech recognition and how to deal with audio data. Transformers offers a vast collection of pre-trained models for various NLP tasks, including sequence classification, question answering, and named entity recognition. You can fine-tune the pre-trained models on your own datasets to adapt them to specific tasks or domains. For training your model in Google Colab, I've provided the steps in my GitHub repo.
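To make the idea of "where to focus attention" concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It is not taken from any of the repositories or notebooks mentioned above; the function names (`softmax`, `attend`) and the toy vectors are assumptions chosen only for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector.

    Returns the attention weights (one per input position) and the
    context vector, which is the weighted average of the values.
    """
    dim = len(query)
    # Similarity of the query to each key, scaled by sqrt(dim).
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
        for key in keys
    ]
    weights = softmax(scores)
    # Weighted sum of the value vectors, one output component at a time.
    context = [
        sum(w * value[i] for w, value in zip(weights, values))
        for i in range(len(values[0]))
    ]
    return weights, context


# Toy input sequence of three positions (hypothetical numbers).
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [1.0, 0.0]

weights, context = attend(query, keys, values)
print("weights:", weights)
print("context:", context)
```

The query lines up with the first and third keys, so those positions receive larger weights than the second, and the context vector leans toward their values. Real sequence models learn the query, key, and value vectors during training rather than fixing them by hand.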

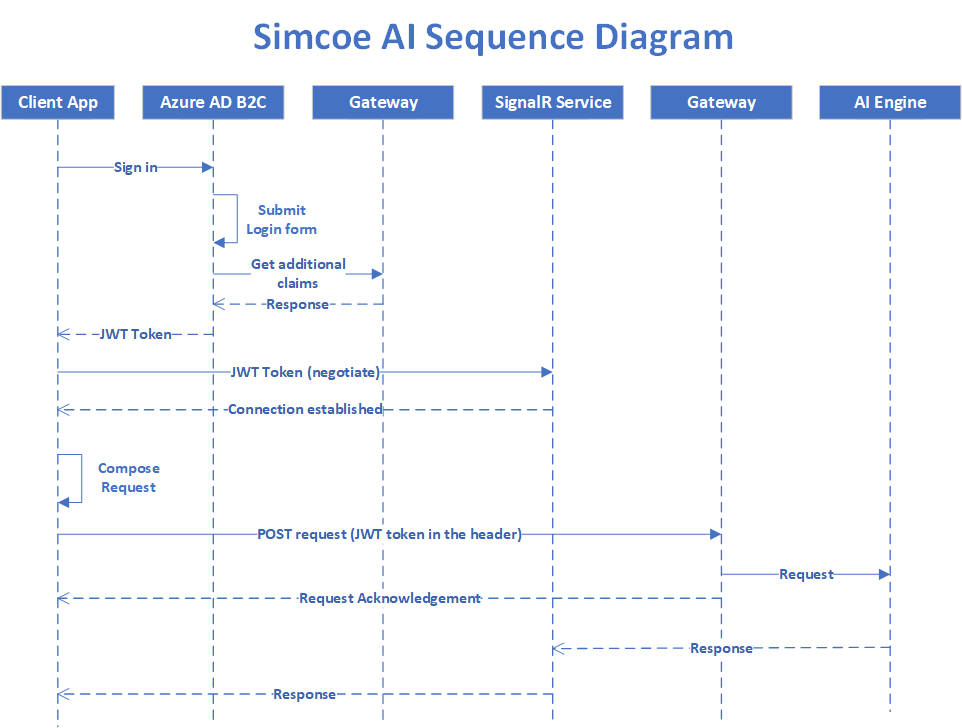

