Scaling Knowledge Graph Embedding Models For Link Prediction

Scaling Knowledge Graph Embedding Models (DeepAI): We propose a new method for scaling training of knowledge graph embedding models for link prediction to address these challenges. Towards this end, we propose the following algorithmic strategies: self-sufficient partitions, constraint-based negative sampling, and edge mini-batch training.
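To make two of these strategies concrete, here is a minimal Python sketch of edge mini-batch training and constraint-based negative sampling. It is an illustration under stated assumptions, not the authors' implementation: it assumes that "constraint-based" means negative tails are drawn only from entities already present in the local partition, and the names `edge_mini_batches`, `constrained_negatives`, and `local_entities` are hypothetical.

```python
import random
from typing import Iterator, List, Sequence, Set, Tuple

Triple = Tuple[int, int, int]  # (head, relation, tail)


def edge_mini_batches(
    edges: List[Triple], batch_size: int, rng: random.Random
) -> Iterator[List[Triple]]:
    """Yield shuffled batches of edges instead of processing the whole partition at once."""
    order = list(range(len(edges)))
    rng.shuffle(order)
    for start in range(0, len(order), batch_size):
        yield [edges[i] for i in order[start:start + batch_size]]


def constrained_negatives(
    batch: List[Triple],
    local_entities: Sequence[int],
    known: Set[Triple],
    num_neg: int,
    rng: random.Random,
) -> List[Triple]:
    """Corrupt the tail of each positive triple using only partition-local entities.

    Assumption: the constraint is "sample from the local partition", so no
    embedding has to be fetched from another worker.
    """
    negatives: List[Triple] = []
    for h, r, t in batch:
        # Exclude the true tail and any corruption that is itself a known triple.
        candidates = [e for e in local_entities if e != t and (h, r, e) not in known]
        for e in rng.sample(candidates, min(num_neg, len(candidates))):
            negatives.append((h, r, e))
    return negatives


# Toy partition with four entities and four edges (made-up data).
partition_edges = [(0, 0, 1), (1, 0, 2), (2, 1, 3), (3, 1, 0)]
local_entities = [0, 1, 2, 3]
known = set(partition_edges)
rng = random.Random(0)

batches = list(edge_mini_batches(partition_edges, batch_size=2, rng=rng))
negatives = constrained_negatives(batches[0], local_entities, known, num_neg=2, rng=rng)
```

In a real training loop each batch and its negatives would be scored by the embedding model and fed to a loss; that part is omitted here because the text does not specify it.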

Enhancing Knowledge Graph Embedding Models With Semantic Driven Loss: To the best of our knowledge, we propose the first architecture for distributed GNN-based knowledge graph embedding model training for link prediction. We also introduce edge mini-batch training, which allows us to train on large partitions. In this paper, the authors provide a framework that incorporates domain-oriented regularizations into graph neural networks (GNNs) to increase link prediction performance. Due to the growing size and computational complexity of KGs, distributed KG embedding training has recently attracted considerable attention in the research community. In this article, an entity-relation-level graph attention network model is proposed to fully learn the information of entities and relationships in the graph.
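The snippets above do not define how a partition is built for distributed GNN training. One plausible reading of a "self-sufficient" partition is that each worker holds its own core edges plus every edge within a fixed number of hops that message passing would need, so no neighbor has to be fetched from another worker. The sketch below illustrates that reading only; the function name, the choice to follow incoming edges, and the `num_hops` parameter are assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple

Triple = Tuple[int, int, int]  # (head, relation, tail)


def self_sufficient_partition(
    all_edges: Iterable[Triple], core_edges: Iterable[Triple], num_hops: int
) -> Set[Triple]:
    """Return the core edges plus the incoming edges within num_hops of their endpoints.

    Assumption: messages flow head -> tail, so computing a node's representation
    requires the edges that point at it.
    """
    incoming: Dict[int, List[Triple]] = defaultdict(list)
    for h, r, t in all_edges:
        incoming[t].append((h, r, t))

    partition: Set[Triple] = set(core_edges)
    frontier: Set[int] = {node for h, _, t in partition for node in (h, t)}
    seen: Set[int] = set(frontier)

    for _ in range(num_hops):
        next_frontier: Set[int] = set()
        for node in frontier:
            for edge in incoming[node]:
                partition.add(edge)
                source = edge[0]
                if source not in seen:
                    seen.add(source)
                    next_frontier.add(source)
        frontier = next_frontier
    return partition


# Toy chain graph 0 -> 1 -> 2 -> 3 -> 4 with one core edge (made-up data).
chain = [(0, 0, 1), (1, 0, 2), (2, 0, 3), (3, 0, 4)]
one_hop = self_sufficient_partition(chain, [(3, 0, 4)], num_hops=1)
two_hop = self_sufficient_partition(chain, [(3, 0, 4)], num_hops=2)
```

The trade-off in this reading is redundancy: support edges are replicated across partitions in exchange for avoiding cross-worker communication during message passing.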

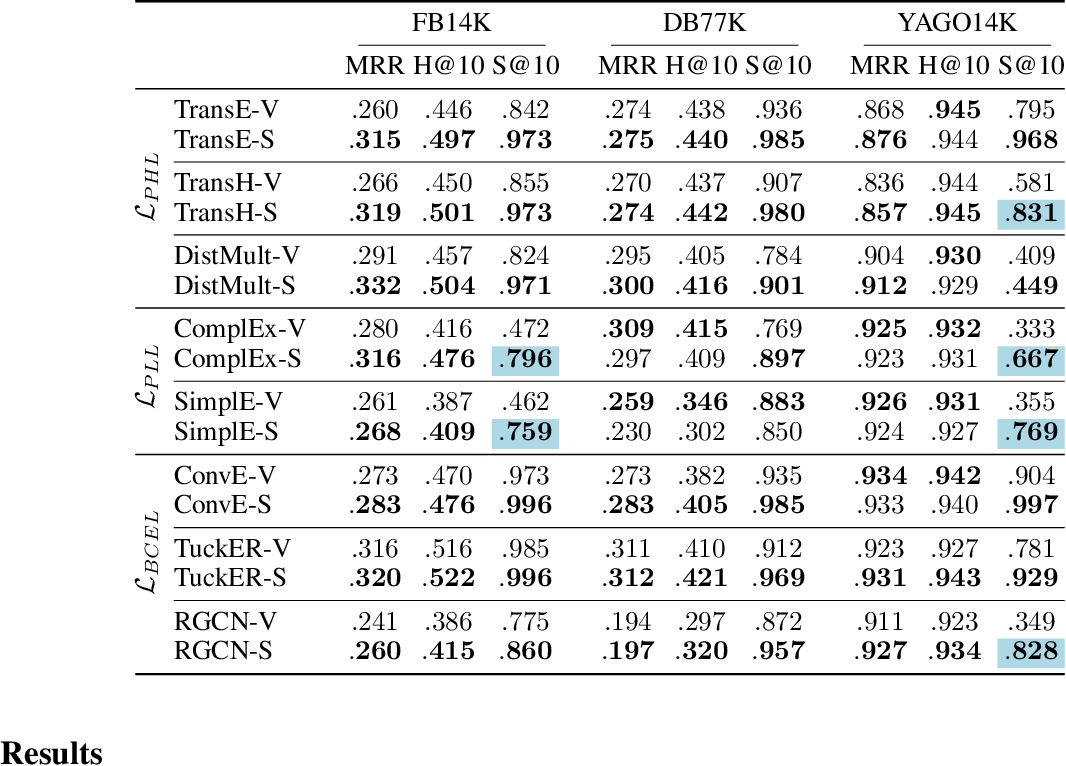

Knowledge Graph Embeddings For Link Prediction: Beware Of Semantics (PDF): The knowledge graph embedding (KGE) repository is an implementation of state-of-the-art techniques related to statistical relational learning (SRL) to solve link prediction problems. Abstract: [...] for the task of link prediction, which aims to infer missing triples by learning representations for entities and relations. While KGE models excel at ranking-based link prediction, the critical issue of probability calibration has been largely overlooked, resul.
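As a rough illustration of what ranking-based link prediction means, the sketch below scores every candidate tail for a query (head, relation, ?) and reports the rank of the true tail. The scoring function is a TransE-style negative distance chosen purely for illustration; the snippets above do not name a specific model, and the embeddings are made-up toy values.

```python
import math
from typing import Dict, Sequence


def transe_score(h: Sequence[float], r: Sequence[float], t: Sequence[float]) -> float:
    """TransE-style plausibility: higher (closer to zero) means h + r is nearer to t."""
    return -math.sqrt(sum((hi + ri - ti) ** 2 for hi, ri, ti in zip(h, r, t)))


def rank_of_true_tail(
    head: int,
    rel: int,
    true_tail: int,
    entity_emb: Dict[int, Sequence[float]],
    rel_emb: Dict[int, Sequence[float]],
) -> int:
    """Raw (unfiltered) rank of the true tail among all entities; 1 is best."""
    h, r = entity_emb[head], rel_emb[rel]
    true_score = transe_score(h, r, entity_emb[true_tail])
    better = sum(
        1
        for entity, vec in entity_emb.items()
        if entity != true_tail and transe_score(h, r, vec) > true_score
    )
    return 1 + better


# Toy embeddings (assumed values, not learned).
entity_emb = {0: [0.0, 0.0], 1: [1.0, 0.0], 2: [5.0, 5.0]}
rel_emb = {0: [1.0, 0.0]}

good_rank = rank_of_true_tail(0, 0, 1, entity_emb, rel_emb)
bad_rank = rank_of_true_tail(0, 0, 2, entity_emb, rel_emb)
```

A rank says only how a triple compares with its corruptions. It does not say how likely the triple is to be true, which is why calibration of the scores into probabilities is a separate question.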

