Run Python Job Hopsworks Documentation

In this guide you learned how to create and run a Python job. This is the official documentation for Hopsworks and its feature store, an open source, data-intensive AI platform used for the development and operation of machine learning models at scale.

Python mode is intended for data science jobs that explore the features available in the feature store, generate training datasets, and feed them into a training pipeline. Python mode requires just a Python interpreter and can be used in Hopsworks from Python jobs or Jupyter kernels, as well as from Amazon SageMaker or Kubeflow.
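To make the Python mode description concrete, here is a minimal sketch of reading a feature group from Python. It assumes the `hopsworks` client package, where `hopsworks.login()` returns a project object exposing `get_feature_store()`, and the feature store exposes `get_feature_group(name, version=...)` with a `read()` method. The helper name `read_feature_group` and the feature group name in the usage comment are hypothetical.

```python
def read_feature_group(project, name, version=1):
    """Read one feature group from the project's feature store.

    `project` is assumed to be the object returned by `hopsworks.login()`.
    """
    # Get a handle to the feature store that belongs to the project.
    fs = project.get_feature_store()
    # Look up the feature group by name and version.
    fg = fs.get_feature_group(name, version=version)
    # Read the feature data (a DataFrame in a real Hopsworks session).
    return fg.read()


# Usage, assuming the hopsworks package is installed and credentials are set:
#   import hopsworks
#   project = hopsworks.login()
#   df = read_feature_group(project, "my_features", version=1)  # hypothetical name
```

Passing the project in as an argument keeps the helper independent of how the session was opened, so the same function can be called from a Python job or a Jupyter kernel.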

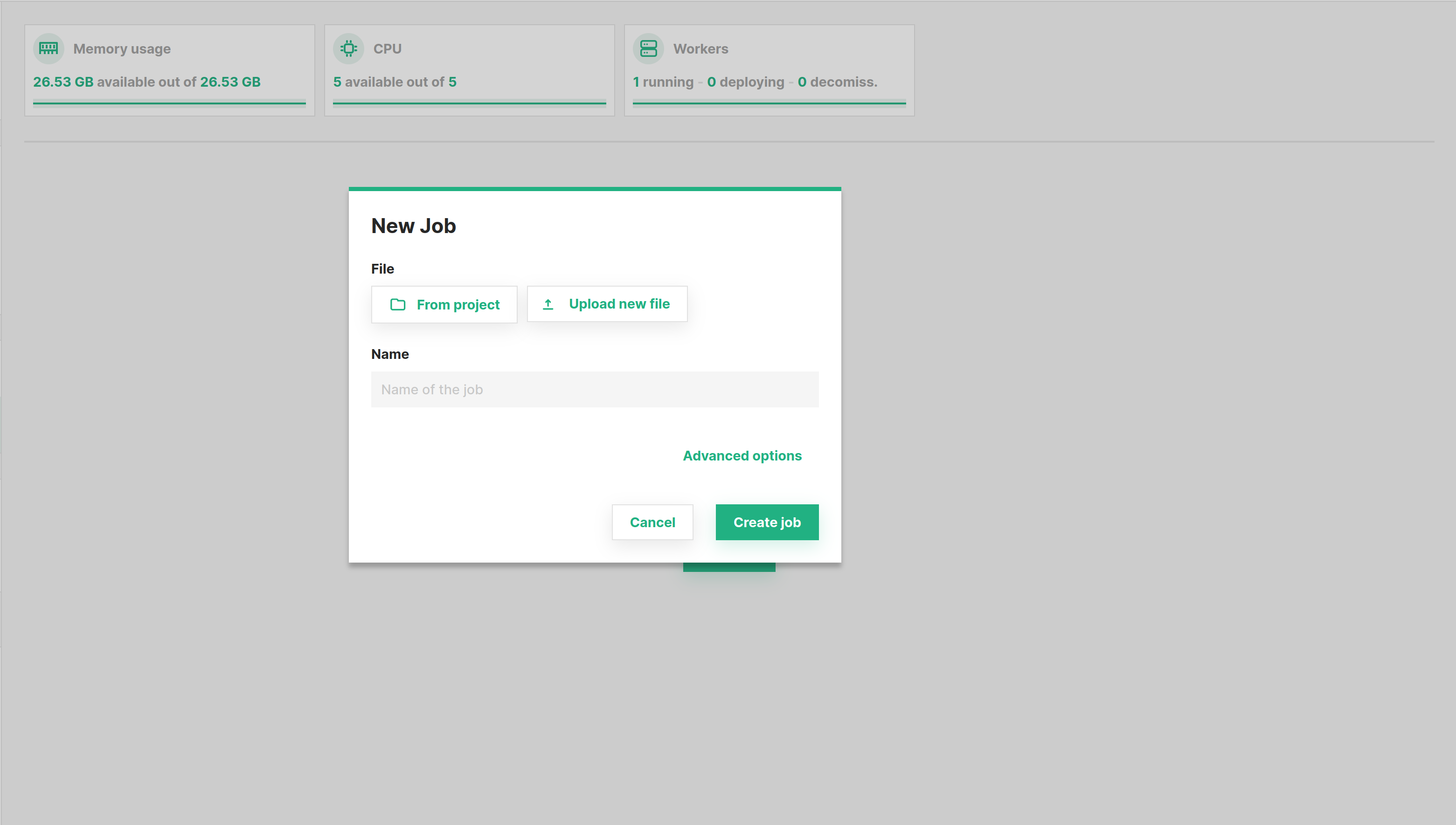

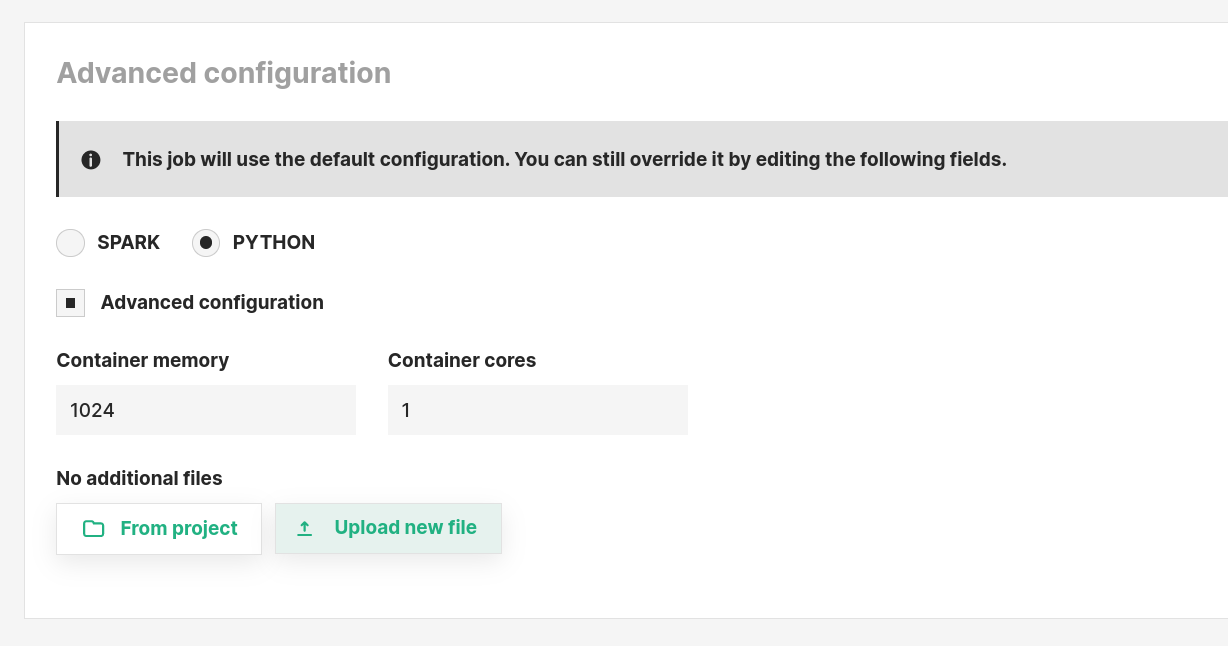

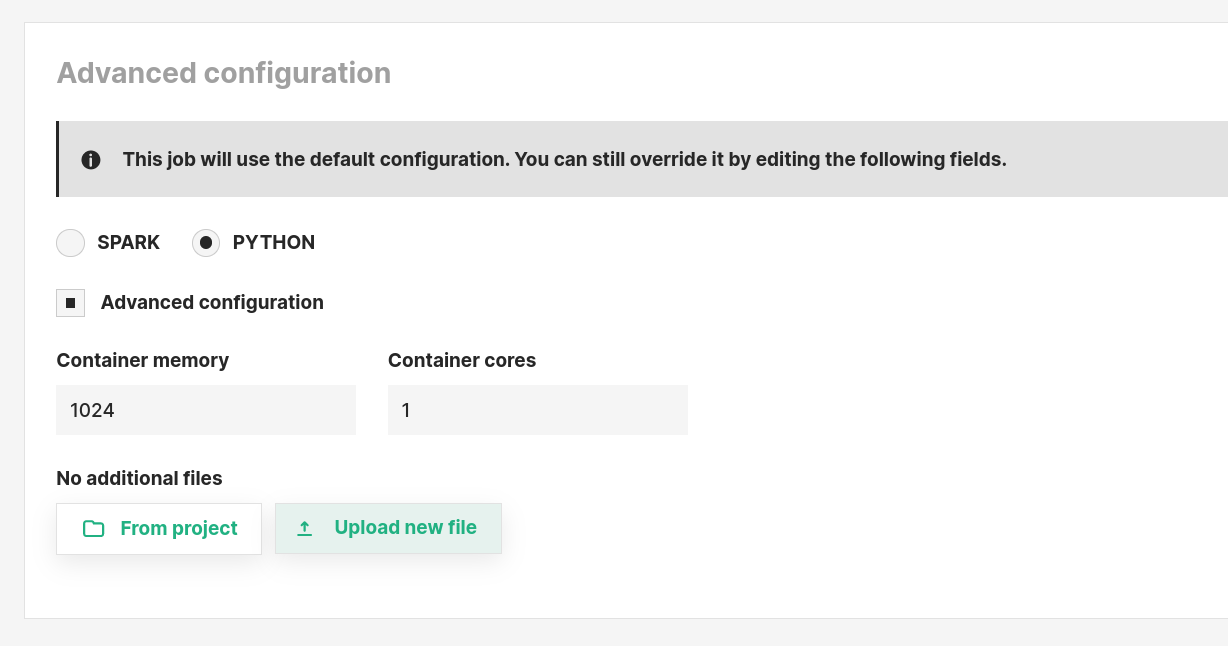

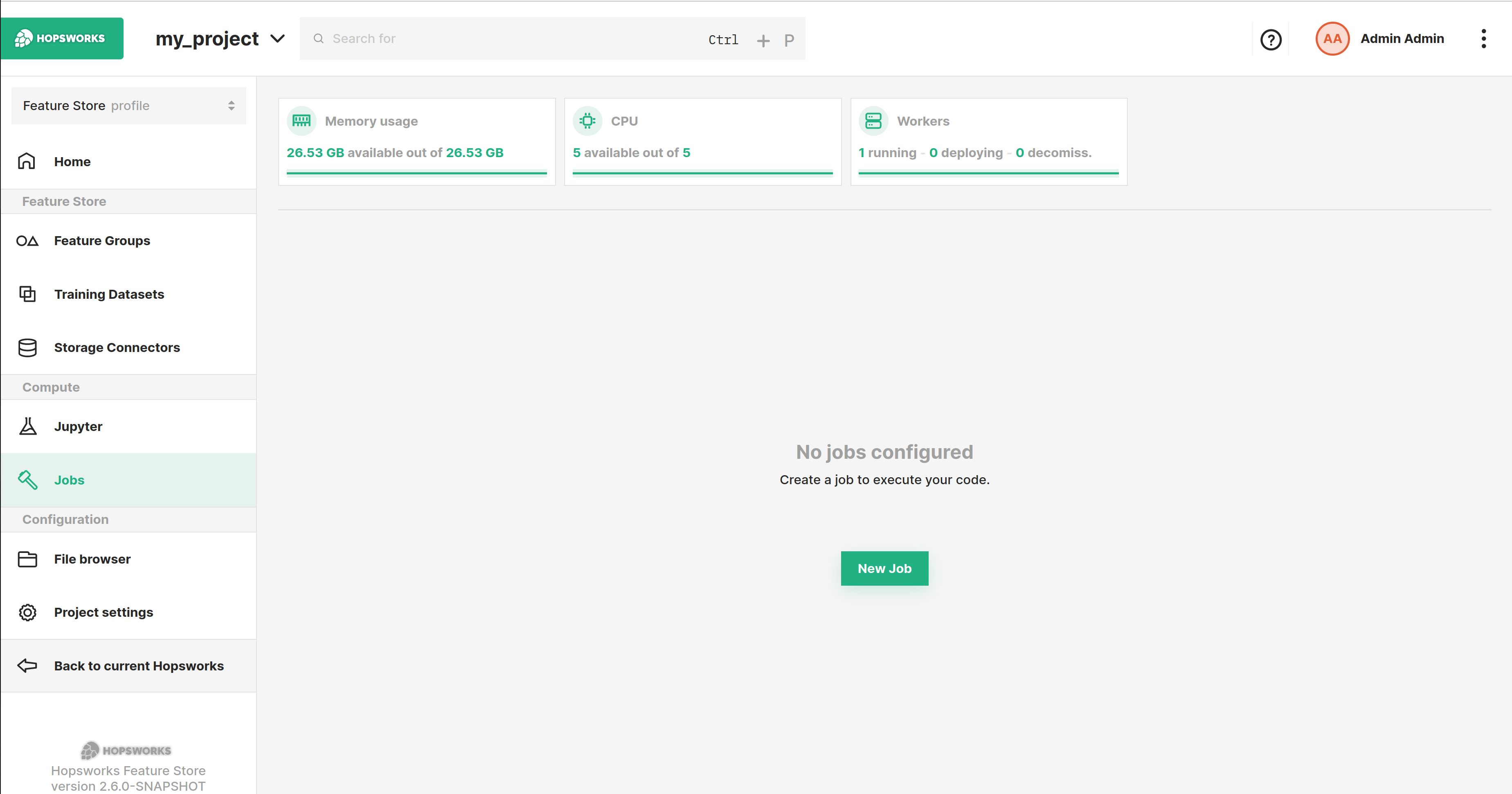

A library installed in the project's Anaconda environment can be used in any job or Jupyter notebook (Python or PySpark) run in the project. In the Python UI, libraries can be installed in or uninstalled from the project's Anaconda environment.

Scikit-learn and XGBoost models are deployed as Python models, in which case you need to provide a Predict class that implements the predict() method, which invokes the model.

This documentation covers how to configure and execute a Python job on Hopsworks. When running a job, Hopsworks automatically converts the PySpark/Python notebook to a .py file and runs it. The notebook is converted every time the job runs, which means changes in the notebook are picked up by the job without having to update it.
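The Predict class mentioned above can be sketched roughly as follows. This is an illustration under stated assumptions, not the exact Hopsworks template: `SumModel` is a hypothetical stand-in for a trained scikit-learn or XGBoost model, and the optional `model` argument exists only so the sketch runs without model files. A real predictor would typically load the model from disk in `__init__`.

```python
class SumModel:
    """Hypothetical stand-in for a trained model with a predict() method."""

    def predict(self, rows):
        # Pretend prediction: the sum of each input row.
        return [sum(row) for row in rows]


class Predict:
    def __init__(self, model=None):
        # Assumption: a real deployment would load the trained model here.
        # The stand-in is used when no model is passed in.
        self.model = model if model is not None else SumModel()

    def predict(self, inputs):
        # Invoke the model on the inputs.
        result = self.model.predict(inputs)
        # NumPy arrays expose tolist(); other sequences are copied to a list.
        return result.tolist() if hasattr(result, "tolist") else list(result)


predictor = Predict()
print(predictor.predict([[1, 2], [3, 4]]))  # prints [3, 7]
```

Returning a plain list keeps the output easy to serialize, whatever array type the underlying model produces.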

Each part of the pipeline is defined in a Hopsworks job, which corresponds to a Jupyter notebook, a Python script, or a JAR. The production pipelines are then orchestrated with Airflow, which is bundled in Hopsworks. Separate documentation covers how to configure and execute a Jupyter notebook job on Hopsworks.

Your feature, training, and batch inference pipelines can be written and scheduled as Python programs that write to and read from the Hopsworks feature store.
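As a rough sketch of how a job wrapping a Python script might be configured and run from code, the helper below assumes the jobs API of the `hopsworks` client: `project.get_jobs_api()`, `get_configuration("PYTHON")` returning a dict-like default configuration with an `appPath` key, `create_job(name, config)`, and `job.run()`. The helper name, job name, and script path are hypothetical; check the client version you use before relying on these calls.

```python
def create_and_run_python_job(project, job_name, app_path):
    """Create a Python job for the script at `app_path` and start one run.

    `project` is assumed to be the object returned by `hopsworks.login()`.
    """
    jobs_api = project.get_jobs_api()
    # Start from the default configuration for Python jobs.
    config = jobs_api.get_configuration("PYTHON")
    # Point the job at the script (or notebook) to execute.
    config["appPath"] = app_path
    job = jobs_api.create_job(job_name, config)
    # Start an execution and hand it back to the caller.
    return job.run()


# Usage, assuming the script was already uploaded to the project:
#   import hopsworks
#   project = hopsworks.login()
#   execution = create_and_run_python_job(
#       project, "my_py_job", "Resources/my_script.py")  # hypothetical values
```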
