Run AI Locally: The $0 Setup That Replaced My $200 Monthly Bill (Ollama AI)

How Personalized AI Assistant Works (Perfect Memory AI)

Tired of expensive cloud API bills? This video shows how to build a powerful local AI setup that cuts your monthly costs from hundreds of dollars to zero. I've been running AI locally for 6 months, processing thousands of coding tasks without spending a cent on cloud services. Here's how you can do the same in under 10 minutes.

How to Run AI Models Locally on Windows Without Internet

Thanks to tools like Ollama, you can now run world-class AI models on the hardware you already own. In this guide, we show you how to take back control of your intelligence, achieving true data sovereignty and digital independence. Discover how to run powerful AI models like Llama 3.3 locally on your computer using Ollama in 2026. This complete guide shows privacy-conscious users how to set up free, private AI without cloud dependency in under 15 minutes. It is a step-by-step guide to installing Ollama on Linux, macOS, or Windows, pulling your first model, and accessing the REST API, and it includes GPU setup and troubleshooting. Run LLMs on local hardware for privacy, lower costs, and faster inference; this guide covers Ollama, llama.cpp, hardware, quantization, and deployment tips.
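As a rough illustration of the "access the REST API" step, here is a minimal Python sketch that sends one prompt to a locally running Ollama server. It assumes the server is listening on its default address (`http://localhost:11434`) and that a model has already been pulled; the helper names `build_request` and `generate`, and the model tag `llama3`, are placeholders chosen for this example rather than anything the guide prescribes.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port (11434).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming POST request for the /api/generate endpoint."""
    # "stream": False asks for one JSON object instead of a stream of chunks.
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # The generated text is returned under the "response" key.
    return body["response"]
```

With the server running and a model pulled (for example via `ollama pull llama3`), calling `generate("llama3", "Explain quantization in one sentence.")` should return the model's reply as a string. Nothing leaves the machine, since the request only goes to `localhost`.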

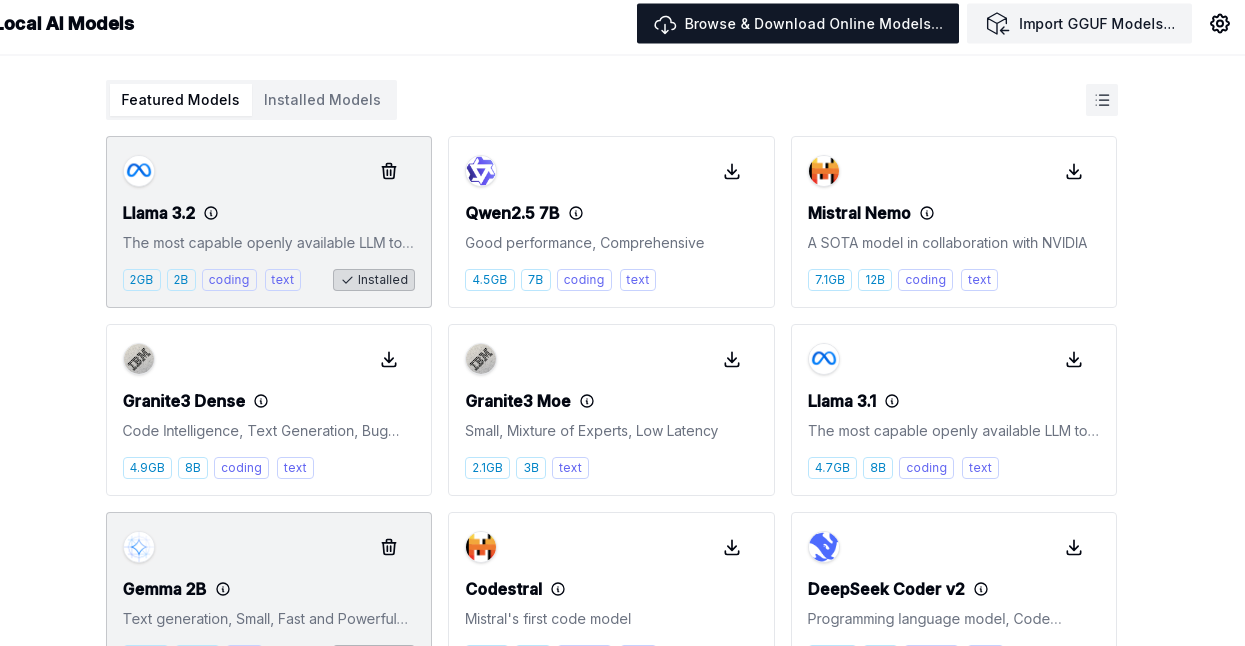

Run AI Models Locally and Securely with Ollama, No Internet Needed

Running large language models locally is no longer limited to researchers or high-end machines. With Ollama and Modelfiles, you can download capable models, run them on your own device, and tailor their behavior to fit your workflow. Learn how to run AI models locally on your GPU in 2026: a complete hardware guide, step-by-step setup with Ollama and LM Studio, the best models to use, and real cost savings. This guide walks you through setting up both Ollama (CLI-first) and LM Studio (GUI-first), choosing the right models, and integrating local AI into your development workflow. Step-by-step tutorial: install Ollama, then run Llama 3, Mistral, and 50 AI models locally for free. Replace ChatGPT in 5 minutes. Works on Mac, Windows, and Linux.
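To make the Modelfile idea more concrete, the sketch below renders a small Modelfile from Python using the `FROM`, `PARAMETER`, and `SYSTEM` instructions. The base model tag, the system prompt, the temperature value, and the function names are all assumptions made for illustration; adjust them to whatever model you have pulled.

```python
from pathlib import Path


def render_modelfile(base_model: str, system_prompt: str, temperature: float = 0.2) -> str:
    """Return Modelfile text built from FROM, PARAMETER and SYSTEM instructions."""
    # Triple quotes let the system prompt span several lines inside a Modelfile.
    return (
        f"FROM {base_model}\n"
        f"PARAMETER temperature {temperature}\n"
        f'SYSTEM """{system_prompt}"""\n'
    )


def write_modelfile(path: Path, text: str) -> Path:
    """Write the rendered Modelfile to disk and return its path."""
    path.write_text(text, encoding="utf-8")
    return path
```

For example, `write_modelfile(Path("Modelfile"), render_modelfile("llama3", "You are a terse code reviewer."))` produces a file that can then be registered with `ollama create code-reviewer -f Modelfile` and started with `ollama run code-reviewer`, where `code-reviewer` is a hypothetical name for the customized model.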

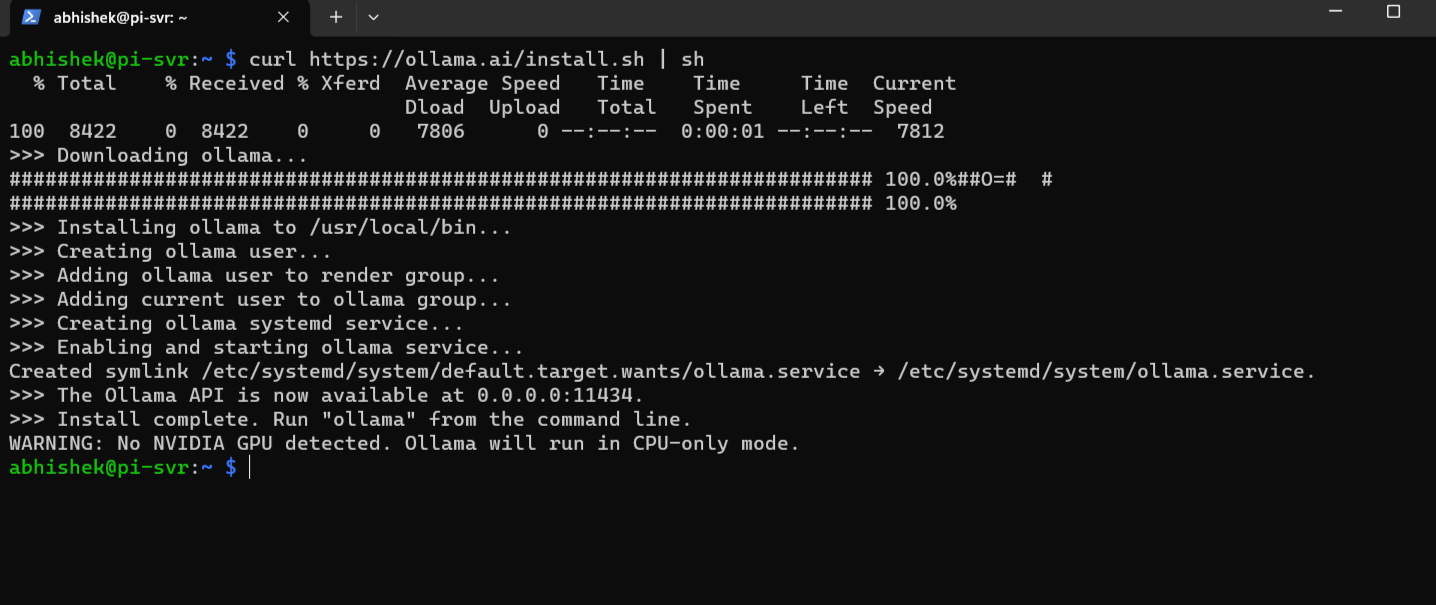

How to Run LLMs Locally on Raspberry Pi Using Ollama AI

Running AI Locally Without Spending All Day on Setup (Hackaday)
