RNNs Made Simple: Understanding Recurrent Neural Networks In Deep

Recurrent neural networks (RNNs) are a class of neural networks designed to process sequential data by retaining information from previous steps. They are especially effective for tasks where context and order matter. As deep learning models, they can process variable-length sequential data such as text, speech, and time series by using an internal memory.

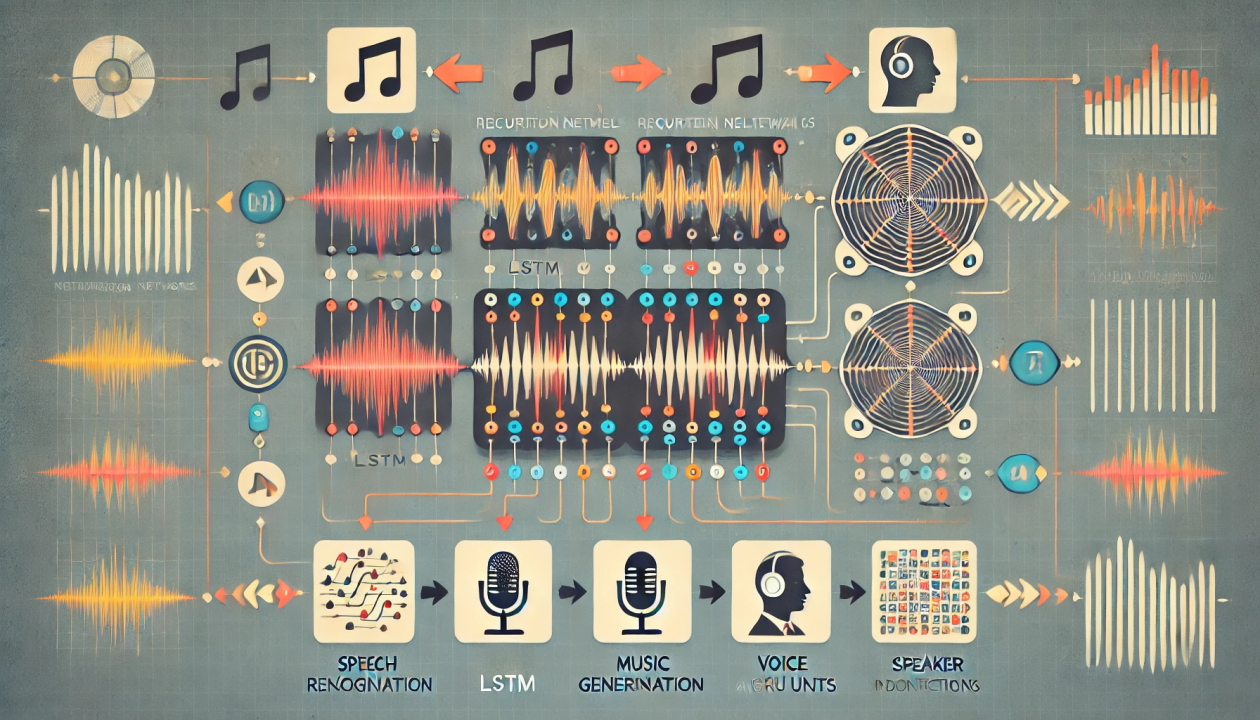

Understanding Recurrent Neural Networks (RNNs) In Deep Learning

What are recurrent neural networks (RNNs)? RNNs are a type of neural network specifically designed to handle sequential data. Unlike feedforward neural networks, which process data in a single pass, RNNs have a recurrent connection that allows them to maintain a "memory" of past inputs. Before diving into the details of what a recurrent neural network is, let's take a glimpse at the kinds of tasks one can achieve with such networks.

A comprehensive guide to recurrent neural networks (RNNs), from basic architecture to LSTM and GRU innovations, covering history, applications, and modern context.

Unlike feedforward neural networks, which process inputs independently, RNNs utilize recurrent connections, where the output of a neuron at one time step is fed back as input to the network at the next time step. This enables RNNs to capture temporal dependencies and patterns within sequences.
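The recurrent connection described above is often written as a single update rule. The version below is a sketch in the common Elman-style notation. The symbols are assumptions introduced here for illustration and do not come from the original text: $x_t$ is the input at time step $t$, $h_t$ is the hidden state (the "memory"), $y_t$ is the output, and the $W$ and $b$ terms are learned weights and biases.

$$
h_t = \tanh\left(W_{xh}\, x_t + W_{hh}\, h_{t-1} + b_h\right), \qquad y_t = W_{hy}\, h_t + b_y
$$

The term $W_{hh}\, h_{t-1}$ is the feedback path: it carries information from the previous time step into the current one, which is what lets the network capture dependencies across a sequence.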

Deep Recurrent Neural Networks With Keras (Paperspace Blog)

Recurrent neural networks (RNNs) are a type of artificial neural network designed to process sequences of data. They work especially well for tasks that involve sequences, such as time series, voice, and natural language.

Unlike traditional neural networks that see the world one snapshot at a time, RNNs remember the past, making them uniquely suited to understanding time, language, and structure in data. In this article, we'll dive into how they work, where they shine, and why they're both brilliant and flawed.

It turns out an RNN is not only quite different but also more versatile and more powerful. But before we add it to our forecasting toolkit, we should do our best to develop an intuitive understanding of how it works, starting with how an RNN is able to remember the past. Let's find out.

RNNs are a specific type of neural network (NN) that is especially relevant for sequential data like time series, text, or audio. Traditional neural networks process each input independently, meaning they cannot retain information about previous inputs.
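To make the contrast between independent processing and remembering the past concrete, here is a minimal sketch in plain Python. It is not taken from any of the articles quoted here: the function names (`rnn_step`, `run_rnn`, `feedforward`), the scalar weights, and the toy sequences are all hypothetical values chosen for illustration, and a real RNN would use learned weight matrices rather than fixed scalars.

```python
import math

def rnn_step(x, h_prev, w_x=0.5, w_h=0.8, b=0.0):
    """One recurrent step: mix the current input with the previous hidden state.
    The weights are arbitrary illustrative constants, not trained values."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(sequence, h0=0.0):
    """Feed a sequence of any length through the cell, one step at a time,
    and return the hidden state after every step."""
    h = h0
    states = []
    for x in sequence:
        h = rnn_step(x, h)  # h carries information forward to the next step
        states.append(h)
    return states

def feedforward(x, w=0.5, b=0.0):
    """A stateless layer: the output depends only on the current input."""
    return math.tanh(w * x + b)

# Two sequences that differ only in their first element.
seq_a = [1.0, 0.0, 1.0]
seq_b = [0.0, 0.0, 1.0]

ff_a = [feedforward(x) for x in seq_a]
ff_b = [feedforward(x) for x in seq_b]
rnn_a = run_rnn(seq_a)
rnn_b = run_rnn(seq_b)

# The last input is identical, so the stateless layer cannot tell them apart.
print(ff_a[-1] == ff_b[-1])    # True
# The recurrent cell still "remembers" the differing first input.
print(rnn_a[-1] == rnn_b[-1])  # False
```

Because `run_rnn` simply loops over whatever it is given, the same cell handles sequences of different lengths without any change, which is the property that makes this family of models a natural fit for text, speech, and time series.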
