Replication Databurst Data Engineering Wiki

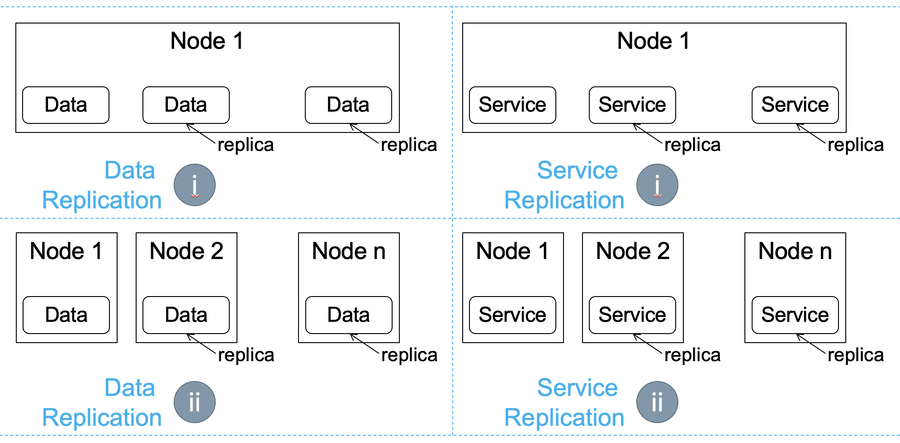

Replication in distributed systems is a fundamental strategy for ensuring data availability, durability, and fault tolerance. It involves maintaining copies of data on multiple machines (replicas) to prevent data loss in case of hardware failure or other issues. This is the official wiki built and maintained by the data engineering community. The data engineering wiki is an open-source living document that contains a constantly evolving collection of topics related to data engineering.

Replication can extend across a computer network, so that the disks can be located in physically distant locations, and the primary-replica database replication model is usually applied.

The Databurst wiki is a key resource designed to complement the data engineering roadmaps. It provides a brief overview of each topic covered in the roadmaps and introduces free resources to help you dive deeper into each subject.

🗺️ The data engineering roadmap: dive into topics tailored for both newcomers and seasoned experts.
💡 Databurst products and services: discover how our solutions transform data operations and business intelligence.

The Databurst data engineering wiki roadmap proceeds step by step through: programming language, operating system, networking, general software engineering skills, web frameworks and API development, version control system, version control system hosting, distributed systems concepts, SQL fundamentals, databases, data modeling, data management architecture, data integration, and storage.
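To make the primary-replica model mentioned above more concrete, here is a minimal in-memory sketch. It assumes a single primary that copies every write synchronously to its replicas; the names `Replica` and `PrimaryReplicaStore` are hypothetical and chosen only for illustration, and real databases add networking, replication lag handling, and failover logic that this toy omits.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    """A hypothetical in-memory copy of the data set."""
    name: str
    data: dict = field(default_factory=dict)
    alive: bool = True

    def apply(self, key, value):
        self.data[key] = value


class PrimaryReplicaStore:
    """Toy store: writes go to the primary, then to every live replica."""

    def __init__(self, primary, replicas):
        self.primary = primary
        self.replicas = list(replicas)

    def write(self, key, value):
        self.primary.apply(key, value)
        for replica in self.replicas:
            if replica.alive:
                # Synchronous copy, an assumption made to keep the sketch short.
                replica.apply(key, value)

    def read(self, key):
        # Prefer the primary; fall back to the first live replica.
        for node in [self.primary, *self.replicas]:
            if node.alive:
                return node.data.get(key)
        raise RuntimeError("no live node holds the data")


store = PrimaryReplicaStore(
    Replica("primary"),
    [Replica("replica-1"), Replica("replica-2")],
)
store.write("user:1", "Ada")
store.primary.alive = False        # simulate a hardware failure on the primary
print(store.read("user:1"))        # the value is still served, now by replica-1
```

Because each write is copied before the simulated failure, the read still succeeds after the primary goes down, which is the fault-tolerance property replication is meant to provide.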

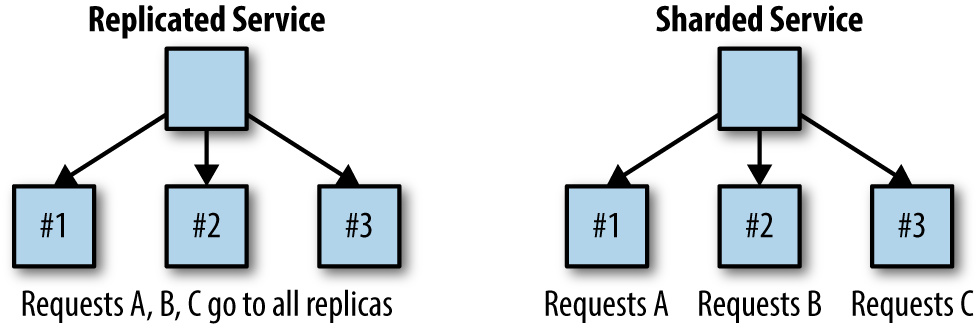

We are a group of dedicated data engineers at Databurst, excited to share a comprehensive roadmap tailored for data engineering professionals at all levels, from those just starting out to the most seasoned experts. Databay automatically connects all your business data sources and keeps them clean, reliable, and updated in real time within your data warehouse. Get instant insights from trustworthy data while we handle all the complexity behind the scenes, with no technical setup or coding required.

By capturing only the changes rather than the entire data set, change data capture (CDC) makes data replication, synchronization, and integration more efficient, especially for applications like data warehousing, ETL processes, and real-time analytics. Data replication boosts data durability, provides more processing and computation power, and enables extensive data sharing among systems. It can also divide the network burden among multiple sites, making data accessible on several hosts or data centers.
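The idea of capturing only the changes can be sketched as follows. This is a simplified illustration, assuming the source keeps an append-only change log and the target remembers how far it has read; `SourceTable`, `sync`, and the `"upsert"`/`"delete"` event names are hypothetical and do not correspond to any particular CDC tool.

```python
class SourceTable:
    """Hypothetical source that records every modification in a change log."""

    def __init__(self):
        self.rows = {}
        self.change_log = []  # append-only list of (op, key, value) events

    def upsert(self, key, value):
        self.rows[key] = value
        self.change_log.append(("upsert", key, value))

    def delete(self, key):
        self.rows.pop(key, None)
        self.change_log.append(("delete", key, None))


def sync(source, target, offset):
    """Apply only the events recorded after `offset`; return the new offset."""
    for op, key, value in source.change_log[offset:]:
        if op == "upsert":
            target[key] = value
        else:
            target.pop(key, None)
    return len(source.change_log)


source = SourceTable()
warehouse = {}  # stands in for the replicated copy, e.g. a warehouse table

source.upsert(1, "alice")
source.upsert(2, "bob")
offset = sync(source, warehouse, 0)        # ships the first two events

source.upsert(2, "bobby")
source.delete(1)
offset = sync(source, warehouse, offset)   # ships only the two new events

print(warehouse)
```

The second call to `sync` never re-reads the rows it already copied; it processes only the log entries past the stored offset, which is why this approach scales better than re-copying the full data set on every run.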
