Releases · aws-samples/lidar-3d-point-cloud-labeling-example · GitHub

Through sensor fusion, workers can adjust labels in the 3D scene and in 2D images, and an adjustment made in one view is mirrored in the other. The dataset used was provided by Velodyne. In this post, we demonstrate how to label 3D point cloud data generated by Velodyne LiDAR sensors using Amazon SageMaker Ground Truth. We break down the process of sending data for annotation so that you can obtain precise, high-quality results. The code for this example is available on GitHub.
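To make the idea of mirroring a 3D label into a 2D image concrete, here is a minimal sketch of a pinhole camera projection. It is not Ground Truth's implementation: it assumes the cuboid corners are already expressed in the camera's coordinate frame (z pointing forward), it ignores lens distortion, and the function names and intrinsic values (`fx`, `fy`, `cx`, `cy`) are illustrative.

```python
from typing import Iterable, Optional, Tuple

Point3D = Tuple[float, float, float]


def project_point(point_cam: Point3D, fx: float, fy: float,
                  cx: float, cy: float) -> Optional[Tuple[float, float]]:
    """Project a 3D point in camera coordinates to pixel coordinates.

    Returns None when the point lies behind the camera (z <= 0).
    """
    x, y, z = point_cam
    if z <= 0:
        return None
    u = fx * x / z + cx
    v = fy * y / z + cy
    return (u, v)


def project_cuboid(corners: Iterable[Point3D], fx: float, fy: float,
                   cx: float, cy: float):
    """Return the 2D rectangle (u_min, v_min, u_max, v_max) enclosing the
    projected cuboid corners, or None if any corner is behind the camera."""
    pixels = []
    for corner in corners:
        pixel = project_point(corner, fx, fy, cx, cy)
        if pixel is None:
            return None
        pixels.append(pixel)
    us = [p[0] for p in pixels]
    vs = [p[1] for p in pixels]
    return (min(us), min(vs), max(us), max(vs))


# Hypothetical cuboid: 2 m cube centered 10 m in front of the camera.
corners = [(sx, sy, 10.0 + sz)
           for sx in (-1.0, 1.0) for sy in (-1.0, 1.0) for sz in (-1.0, 1.0)]
rect = project_cuboid(corners, fx=1000.0, fy=1000.0, cx=640.0, cy=360.0)
print(rect)
```

In a real pipeline the cuboid would first be moved from the LiDAR or world frame into the camera frame using the camera's extrinsic pose, which is the information sensor fusion relies on.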

GitHub Venkatnarayanan11 Lidar Pointcloud Processing Data Set

In this demo, you'll start by inspecting the input data and manifest files used in the demo. Then you will specify the resources needed to create a labeling job. In this step, you have the option to make yourself a worker on the private work team that receives the labeling job tasks.
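As a rough illustration of inspecting a manifest file, the sketch below parses an input manifest as JSON Lines (one JSON object per line) and lists what each entry points to. The bucket and file names are hypothetical, and the only field it relies on is `source-ref`; check the manifest files shipped with the demo for the exact fields they contain.

```python
import json
from typing import Dict, List

# Hypothetical manifest content: each line references one sequence file.
SAMPLE_MANIFEST = """\
{"source-ref": "s3://example-bucket/sequences/seq1.json"}
{"source-ref": "s3://example-bucket/sequences/seq2.json"}
"""


def parse_manifest(text: str) -> List[Dict]:
    """Parse a JSON Lines manifest, skipping blank lines."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


def list_sources(entries: List[Dict]) -> List[str]:
    """Return the 'source-ref' of every entry, failing loudly if one is missing."""
    sources = []
    for index, entry in enumerate(entries):
        if "source-ref" not in entry:
            raise ValueError(f"manifest line {index + 1} has no 'source-ref'")
        sources.append(entry["source-ref"])
    return sources


entries = parse_manifest(SAMPLE_MANIFEST)
sources = list_sources(entries)
for uri in sources:
    print(uri)
```

Validating the manifest locally like this is cheap compared with discovering a malformed line after the labeling job has been submitted.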

GitHub Roburishabh Lidar Point Cloud Based 3D Object Detection The

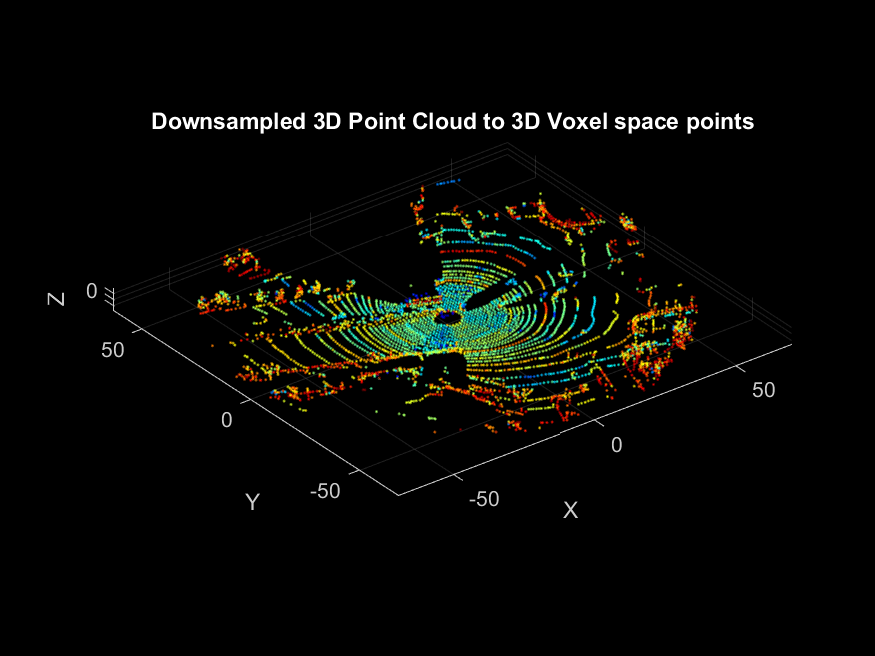

Create a 3D point cloud labeling job to have workers label objects in 3D point clouds generated from 3D sensors such as light detection and ranging (LiDAR) sensors and depth cameras, or generated from 3D reconstruction by stitching together images captured by an agent such as a drone. This notebook demonstrates how you can preprocess your 3D point cloud input data to create an object tracking labeling job and include sensor and camera data for sensor fusion.

Create a 3D point cloud labeling job with Amazon SageMaker Ground Truth

This sample notebook takes you through an end-to-end workflow to demonstrate the functionality of the SageMaker Ground Truth 3D point cloud built-in task types.
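One preprocessing step that object tracking commonly needs is moving each frame's points from the sensor's local frame into a shared world frame, so that stationary objects stay put across frames. The sketch below is a generic illustration, not the notebook's code: it assumes the vehicle pose for each frame is available as a translation plus a unit quaternion `(qx, qy, qz, qw)`, and the function names are hypothetical.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Quat = Tuple[float, float, float, float]  # (qx, qy, qz, qw), assumed unit length


def rotate(v: Vec3, q: Quat) -> Vec3:
    """Rotate vector v by unit quaternion q using v' = v + qw*t + q_vec x t,
    where t = 2 * (q_vec x v)."""
    qx, qy, qz, qw = q
    x, y, z = v
    tx = 2.0 * (qy * z - qz * y)
    ty = 2.0 * (qz * x - qx * z)
    tz = 2.0 * (qx * y - qy * x)
    return (
        x + qw * tx + (qy * tz - qz * ty),
        y + qw * ty + (qz * tx - qx * tz),
        z + qw * tz + (qx * ty - qy * tx),
    )


def to_world(points: List[Tuple[float, float, float, float]],
             position: Vec3, heading: Quat):
    """Transform (x, y, z, intensity) points from the sensor frame to the
    world frame: rotate by the heading, then translate by the position.
    Intensity is carried through unchanged."""
    world = []
    for x, y, z, intensity in points:
        rx, ry, rz = rotate((x, y, z), heading)
        world.append((rx + position[0], ry + position[1], rz + position[2],
                      intensity))
    return world


# Hypothetical pose: vehicle at (10, 20, 0), yawed 90 degrees about the z axis.
half = math.pi / 4.0
pose_heading = (0.0, 0.0, math.sin(half), math.cos(half))
frame = [(1.0, 0.0, 0.0, 0.5)]
world_points = to_world(frame, (10.0, 20.0, 0.0), pose_heading)
print(world_points)
```

With this pose, a point one meter ahead of the sensor ends up one meter along the world's y axis from the vehicle position, which is what a 90 degree yaw should produce.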
