Reducing Latency With Fog Computing

Fog Computing: Examples, Architecture, Working, And Challenges

This review explores the crucial role of fog computing in meeting the growing demands of Internet of Things (IoT) and cloud environments. It focuses on fog computing's ability to reduce latency, manage large data volumes, and optimize bandwidth by bringing computational resources closer to data sources. Among fog computing's contributions, the review notes that processing data near the network edge reduces latency by up to 40%, which is critical for real-time applications. Fog computing also supports large-scale sensor networks, a growing necessity as IoT devices proliferate.
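To make the latency argument concrete, here is a minimal sketch that models one request's response time as network round-trip time plus upload transmission time plus processing time, and compares a nearby fog node with a distant cloud. It is a simplified model that ignores queuing, retries, and the size of the response, and the class `ProcessingSite`, the function `response_time_ms`, and every number in it are hypothetical illustrations, not measurements from the review.

```python
from dataclasses import dataclass


@dataclass
class ProcessingSite:
    """A place where a request can be processed (hypothetical model)."""
    name: str
    rtt_ms: float       # network round-trip time to the site, in milliseconds
    uplink_mbps: float  # uplink bandwidth towards the site, in megabits per second
    compute_ms: float   # processing time at the site, in milliseconds


def response_time_ms(payload_kb: float, site: ProcessingSite) -> float:
    """Estimate response time = round trip + upload transmission + processing.

    Uses decimal units: 1 KB = 8 kilobits and 1 Mbps = 1 kilobit per millisecond,
    so kilobits divided by Mbps gives milliseconds.
    """
    transmission_ms = (payload_kb * 8) / site.uplink_mbps
    return site.rtt_ms + transmission_ms + site.compute_ms


# Illustrative numbers only: the fog node is close but slower at computing,
# the cloud is far away but processes the request faster.
fog = ProcessingSite("fog", rtt_ms=10.0, uplink_mbps=50.0, compute_ms=15.0)
cloud = ProcessingSite("cloud", rtt_ms=40.0, uplink_mbps=50.0, compute_ms=5.0)

if __name__ == "__main__":
    for site in (fog, cloud):
        print(f"{site.name}: {response_time_ms(50.0, site):.1f} ms")
```

With these made-up inputs a 50 KB request takes 33.0 ms via the fog node and 53.0 ms via the cloud. A shorter WAN round trip or a much slower fog processor would narrow or reverse the gap, so any real placement decision should rest on measured values rather than on this model.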

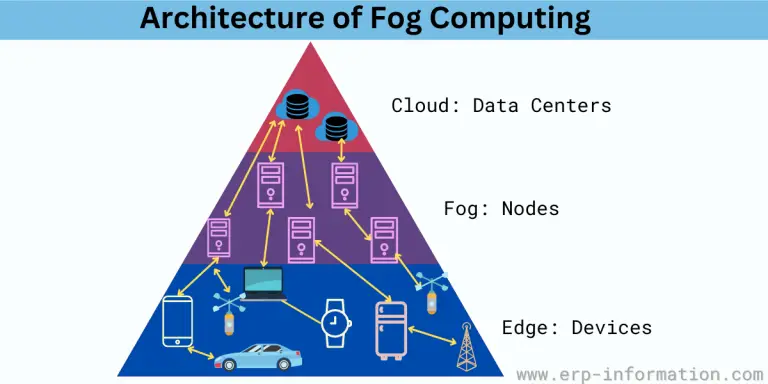

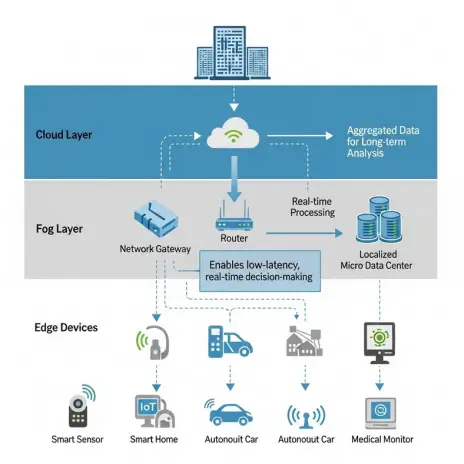

Fog Computing For Low-Latency Applications

This research proposes a novel smart fog gateway architecture augmented with machine learning (ML) algorithms to significantly reduce latency in fog-based IoT environments. This decentralized approach reduces latency and improves resource utilization, making it well suited to latency-sensitive applications. Fog computing processes data at or near the source where it is generated, which reduces the load on centralized cloud servers and improves overall system efficiency.

A related study examines the impact of fog computing on latency in smart city IoT architectures. It sets out to analyze case studies in which fog nodes have been implemented to improve the performance of latency-sensitive applications, and its findings are intended to provide insight into the potential benefits of integrating fog computing into urban infrastructure, highlighting its r…

Fog architecture is a distributed computing paradigm that deploys processing at intermediate network layers to reduce latency and conserve bandwidth in IoT and cyber-physical systems. It integrates layered models that combine IoT devices, edge gateways, and centralized clouds to enable real-time analytics, mobility support, and secure data processing. Key design challenges include managing…
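The layered idea (devices below, a fog layer in the middle, the cloud above) can be sketched as a fog node that handles urgent events locally and forwards only periodic summaries upstream. This is a minimal illustration of the two goals named above, lower latency and conserved bandwidth, and not the ML-augmented gateway the research proposes; the class `FogNode`, its threshold-based alert rule, and the windowed summary format are all hypothetical assumptions.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import List, Tuple


@dataclass
class FogNode:
    """Hypothetical fog node between IoT sensors (below) and the cloud (above)."""
    alert_threshold: float  # assumed limit that triggers an immediate local action
    window: int = 10        # number of raw readings summarized per cloud upload
    local_alerts: List[Tuple[str, float]] = field(default_factory=list)
    cloud_uploads: List[dict] = field(default_factory=list)
    _buffer: List[float] = field(default_factory=list, repr=False)

    def ingest(self, sensor_id: str, value: float) -> None:
        # Latency-sensitive path: act at the fog layer, with no cloud round trip.
        if value > self.alert_threshold:
            self.local_alerts.append((sensor_id, value))

        # Bandwidth-saving path: buffer raw readings and forward only a summary.
        self._buffer.append(value)
        if len(self._buffer) == self.window:
            self.cloud_uploads.append({
                "count": len(self._buffer),
                "mean": mean(self._buffer),
                "max": max(self._buffer),
            })
            self._buffer.clear()


if __name__ == "__main__":
    node = FogNode(alert_threshold=80.0, window=5)
    readings = [20.0, 22.0, 85.0, 21.0, 23.0, 24.0, 90.0, 22.0, 20.0, 21.0]
    for i, value in enumerate(readings):
        node.ingest(f"sensor-{i}", value)
    print(node.local_alerts)
    print(node.cloud_uploads)
```

In this run, ten raw readings become two summary records sent upstream, while the two readings above the threshold raise alerts at the fog layer without waiting for the cloud. A real gateway would replace the fixed threshold with whatever decision logic (for example, a trained model) the deployment calls for.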

An IoT-Based Fog Computing Model

Fog computing enhances cloud capabilities by analyzing data closer to where it is generated, which reduces latency and increases efficiency for Internet of Things (IoT) applications. In fog computing, computation is offloaded to edge devices, and characteristics such as low latency allow data to be processed locally without depending on a cloud server [7]. However, because the environment is decentralized and untrusted, fog computing faces limitations and challenges in privacy, security, and trust [8].

The goal of this research is to evaluate how well fog computing performs by measuring latency, energy consumption, network utilization, RAM usage, and data transfer rate, in order to guide application resource optimization and performance enhancement. Fog computing has emerged as a promising paradigm for bringing the capabilities of cloud computing closer to the network edge. It tries to overcome the limits of traditional cloud designs by…
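Several of the metrics listed in the evaluation can be derived from a simple log of request/response exchanges. The sketch below computes latency, data transfer rate, and network utilization over a measurement window; energy consumption and RAM usage need platform-specific instrumentation and are left out. The `Transfer` record and the `summarize` function are hypothetical, and defining utilization as achieved bit rate divided by nominal link capacity is an assumption, not necessarily the definition the research uses.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class Transfer:
    """One request/response exchange observed during an experiment (hypothetical record)."""
    sent_s: float      # time the request left the device, in seconds
    received_s: float  # time the response arrived back, in seconds
    size_bytes: int    # bytes carried over the link for this exchange


def summarize(transfers: List[Transfer], window_s: float,
              link_capacity_bps: float) -> Dict[str, float]:
    """Compute latency, data transfer rate, and link utilization over a time window."""
    if not transfers or window_s <= 0:
        raise ValueError("need at least one transfer and a positive window")
    latencies_ms = [(t.received_s - t.sent_s) * 1000.0 for t in transfers]
    bits = 8 * sum(t.size_bytes for t in transfers)
    rate_bps = bits / window_s
    return {
        "mean_latency_ms": mean(latencies_ms),
        "max_latency_ms": max(latencies_ms),
        "transfer_rate_kbps": rate_bps / 1000.0,
        # Assumed definition: achieved bit rate divided by nominal link capacity.
        "network_utilization": rate_bps / link_capacity_bps,
    }


if __name__ == "__main__":
    log = [Transfer(0.0, 0.020, 1000), Transfer(0.5, 0.540, 3000)]
    print(summarize(log, window_s=1.0, link_capacity_bps=1_000_000))
```

Running the same log format against a fog deployment and a cloud-only deployment gives directly comparable numbers; the sample log here is invented purely to exercise the function.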
