Random Forests Ben Lau

Random forests are a combination of tree predictors such that each tree depends on the values of a random vector sampled independently and with the same distribution for all trees in the forest. Here m is the number of variables considered as candidates at each split. With m = 1, the split variable is chosen completely at random, so all variables get a chance. This decorrelates the trees the most, but it can introduce bias, somewhat similar to that in ridge regression.
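To make the role of m concrete, here is a minimal sketch of how the candidate variables for a single split could be drawn. The function name `candidate_features` and the choice of 4 features are assumptions made for illustration; they do not come from any particular library.

```python
import random

def candidate_features(n_features, m, rng):
    """Pick m distinct feature indices to consider at one split (hypothetical helper)."""
    return rng.sample(range(n_features), m)

rng = random.Random(0)

# With m = 1, every split looks at exactly one randomly chosen feature.
draws = [candidate_features(4, 1, rng)[0] for _ in range(200)]

# Collect which of the 4 features were ever chosen across the 200 splits.
seen = sorted(set(draws))
print(seen)
```

Over many splits, every feature is expected to be picked at some point, which is the sense in which all variables get a chance when m = 1.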

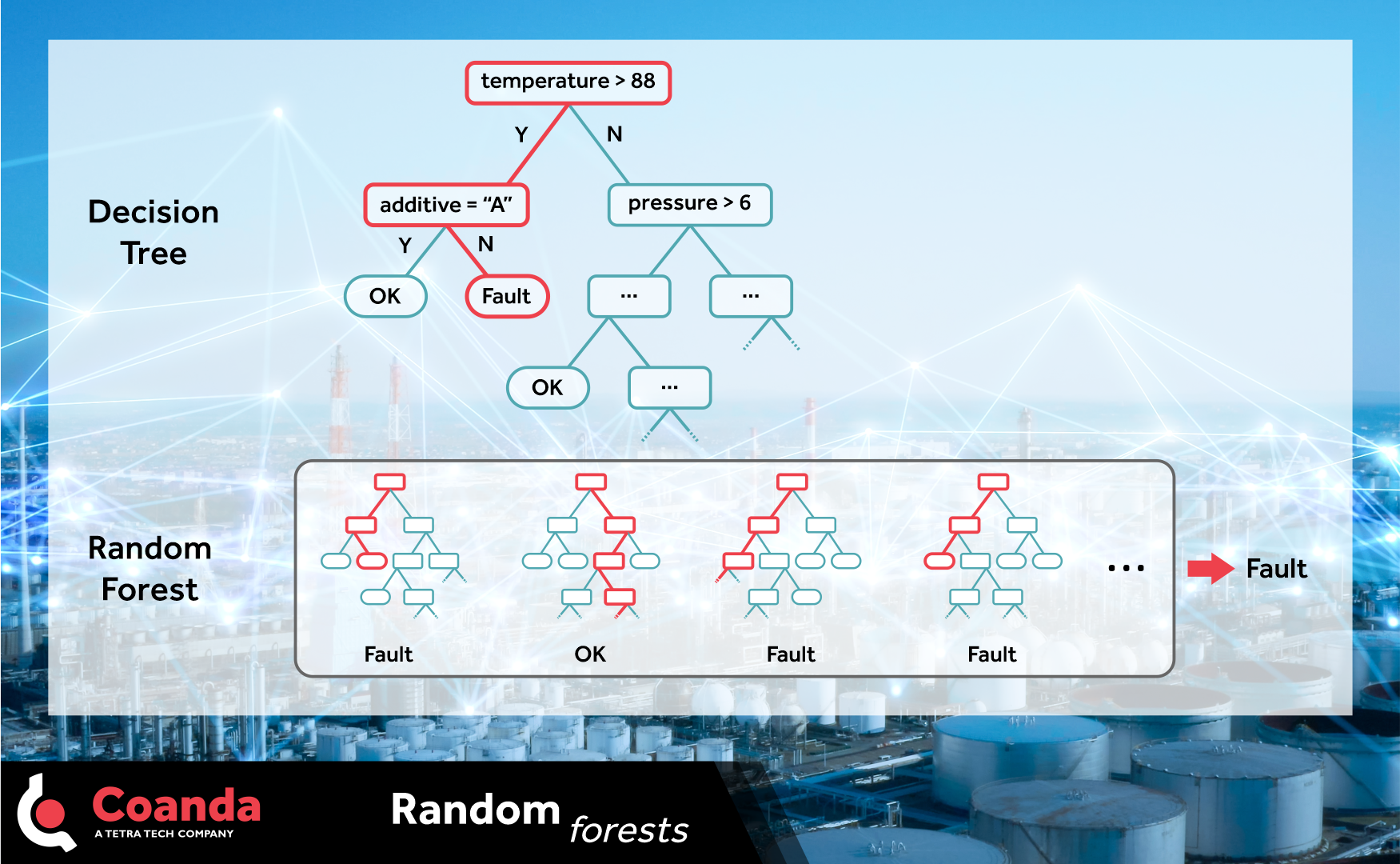

Random forest is a machine learning algorithm that uses many decision trees to make better predictions. Each tree looks at a different random part of the data, and the trees' results are combined by voting for classification or averaging for regression, which makes it an ensemble learning technique. Random forests, or random decision forests, are an ensemble learning method for classification, regression, and other tasks that works by creating a multitude of decision trees during training. Because the tree learning algorithm is randomized, we can not only build many more trees, but these trees will also be less correlated. For these reasons, random forests tend to have excellent performance.
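The two ways of combining tree outputs described above can be sketched in a few lines. The helper names `majority_vote` and `average`, and the example predictions, are made up for illustration; a real forest would obtain them from its fitted trees.

```python
from collections import Counter
from statistics import fmean

def majority_vote(labels):
    """Classification: return the label predicted by the most trees.

    Ties are broken in favor of the label that appears first in the list.
    """
    return Counter(labels).most_common(1)[0][0]

def average(values):
    """Regression: return the mean of the trees' numeric predictions."""
    return fmean(values)

# Hypothetical predictions from three trees for a single input.
print(majority_vote(["play", "no play", "play"]))
print(average([1.0, 2.0, 3.0]))
```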

In the vast forest of machine learning algorithms, one algorithm stands tall like a sturdy tree: random forest. It is an ensemble learning method that is both powerful and flexible, widely used for classification and regression tasks. The random forest algorithm is a supervised learning model; it uses labeled data to "learn" how to classify unlabeled data. Random forest is a bagging (bootstrap aggregating) algorithm because it builds each tree on a different random part of the data and combines the trees' answers. Throughout this article, we'll focus on the classic golf dataset as an example for classification. Random forest suits this kind of task because it is applicable to both classification and regression problems. In this guide, we will discuss how the random forest algorithm works, its advantages, and its applications.
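As a rough illustration of the "different random part of the data" used in bagging, the sketch below draws a bootstrap sample: row indices sampled with replacement, with as many draws as there are rows. The function name `bootstrap_indices` and the row count of 10 are assumptions for the example, not values from the golf dataset.

```python
import random

def bootstrap_indices(n_rows, rng):
    """Draw n_rows row indices with replacement (one bootstrap sample)."""
    return [rng.randrange(n_rows) for _ in range(n_rows)]

rng = random.Random(42)

# Each tree would be trained on its own bootstrap sample of the rows.
samples_per_tree = [bootstrap_indices(10, rng) for _ in range(3)]
for tree_id, indices in enumerate(samples_per_tree):
    print(tree_id, indices)
```

Because sampling is done with replacement, a given sample typically repeats some rows and omits others, so each tree sees a slightly different version of the training data.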
