Quantization Boosts AI Efficiency

Quantization Struggles With AI Model Efficiency Limits

We introduce a set of advanced, theoretically grounded quantization algorithms that enable massive compression for large language models and vector search engines. The NVIDIA TensorRT and Model Optimizer tools simplify the quantization process, maintaining model accuracy while improving efficiency. This blog series is designed to demystify quantization for developers new to AI research, with a focus on practical implementation.

Rethinking AI Quantization: The Missing Piece In Model Efficiency

Quantization is a model optimization technique that reduces the precision of the numerical values in a model, such as weights and activations, by mapping them onto a smaller set of discrete values, for example from high-bit floating-point numbers (e.g., FP32) to low-bit integers (e.g., INT8). This lowers memory usage, model size, and computational cost, which shrinks LLMs and accelerates inference while maintaining almost the same level of accuracy. In this article, we'll explore why everything is numbers under the hood, how precision impacts performance, and how quantization techniques unlock new levels of efficiency for deploying AI.
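
To make the FP32-to-INT8 idea concrete, here is a minimal sketch in plain Python. It assumes symmetric, per-tensor quantization with a single scale factor, which is only one of several common schemes; the function names `quantize_int8` and `dequantize` and the sample weights are illustrative and do not come from any particular library.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to int8 codes (illustrative)."""
    max_abs = max(abs(v) for v in values)
    # The scale maps the largest magnitude onto 127; guard against all-zero input.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the symmetric int8 range.
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map int8 codes back to approximate float values."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only for demonstration.
weights = [0.12, -0.5, 0.33, 1.27, -1.0]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

# Rounding to the nearest step keeps the error within about half a step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, scale, max_error)
```

Each value is stored as one 8-bit integer plus a shared scale, instead of a 32-bit float. The price is a rounding error of at most about half a quantization step per value.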

Unpacking AI Quantization Limits: Efficiency Vs Accuracy

Reducing the precision of the numerical values used in a model can dramatically boost computational efficiency and lower the resource requirements for running AI applications. Exploring novel quantization schemes, optimizing for specific hardware, and integrating quantization with other model compression techniques will further enhance the efficiency and accessibility of large language models, paving the way for their widespread adoption across diverse applications. This guide discusses the benefits and limitations of model quantization with a hands-on example. To make models smaller and more efficient, developers employ quantization techniques to run them at lower precision.
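
The efficiency versus accuracy trade-off can be illustrated with a small experiment. The sketch below assumes symmetric per-tensor quantization and uses randomly generated Gaussian values as a stand-in for real model weights, so the numbers it produces are only indicative. The helper names `quantize_dequantize` and `mean_abs_error` are hypothetical.

```python
import random


def quantize_dequantize(values, bits):
    """Round-trip values through a symmetric signed integer grid of the given width."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    return [max(-qmax, min(qmax, round(v / scale))) * scale for v in values]


def mean_abs_error(original, restored):
    """Average absolute difference between original and restored values."""
    return sum(abs(x - y) for x, y in zip(original, restored)) / len(original)


random.seed(0)
# Synthetic stand-in for a weight tensor (an assumption, not real model data).
weights = [random.gauss(0.0, 1.0) for _ in range(10_000)]

fp32_bytes = len(weights) * 4  # 32 bits per value
report = {}
for bits in (8, 4):
    restored = quantize_dequantize(weights, bits)
    report[bits] = {
        "bytes": len(weights) * bits / 8,  # ideal packed storage, ignoring the scale
        "mean_abs_error": mean_abs_error(weights, restored),
    }

for bits, stats in report.items():
    print(bits, stats["bytes"], fp32_bytes / stats["bytes"], stats["mean_abs_error"])
```

Under these assumptions, going from 8 bits to 4 bits halves the storage again but leaves far fewer representable levels, so the reconstruction error grows. Practical schemes often reduce that error with per-channel or per-group scales.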

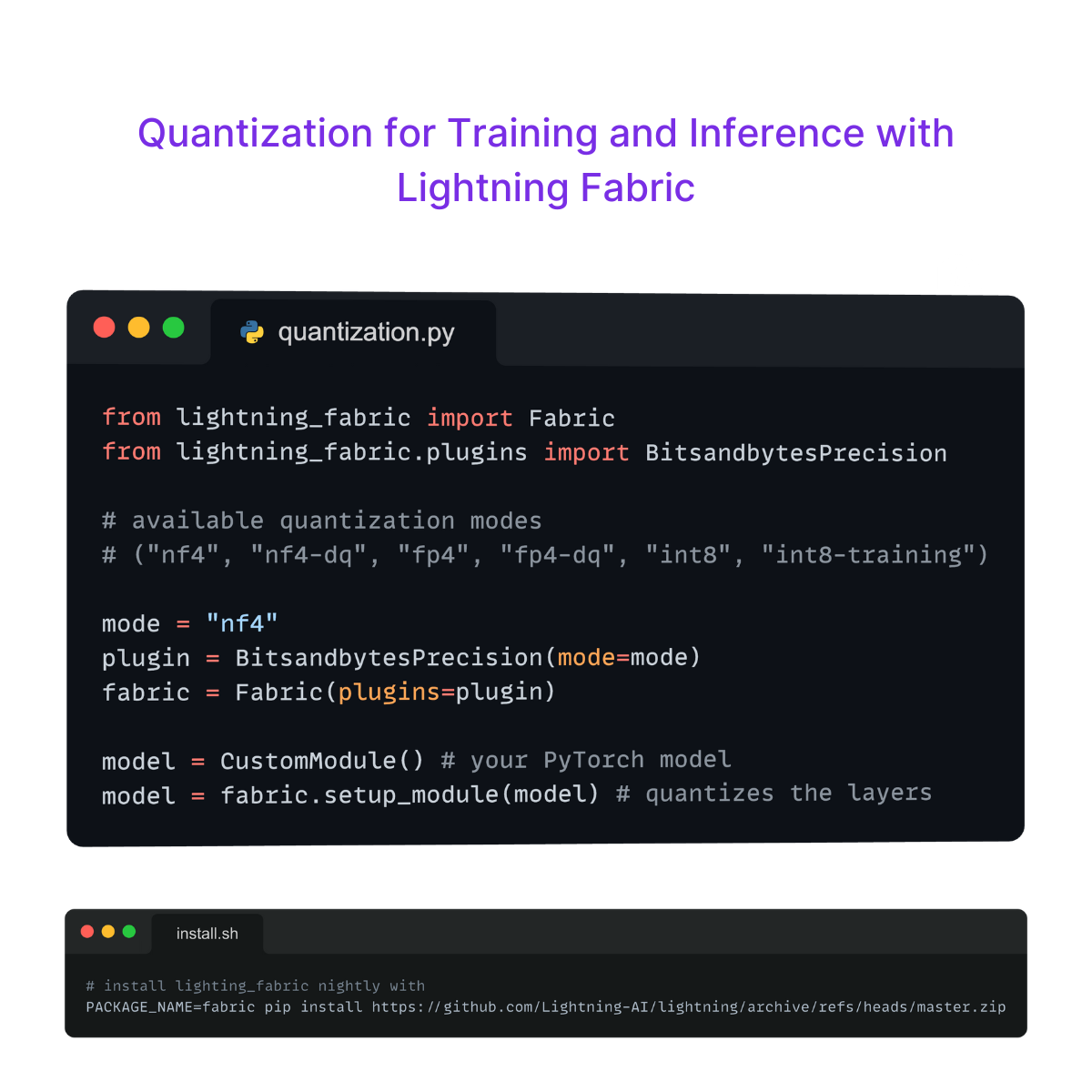

Trends In Model Quantization And Efficiency Optimization Shaping The
