PyTorch Transfer Learning Project: Fine-Tuning vs. Feature Extraction with ResNet18

In this end-to-end tutorial, you’ll learn transfer learning the way it’s actually used in industry: with clear context, fair comparisons, and practical trade-offs. We will apply transfer learning with PyTorch to classify 102 flower species using a ResNet18 pre-trained on ImageNet. We will compare two approaches, feature extraction (85.7% accuracy) and fine-tuning (92.5% accuracy), and examine when to use each one and why the difference is so significant.

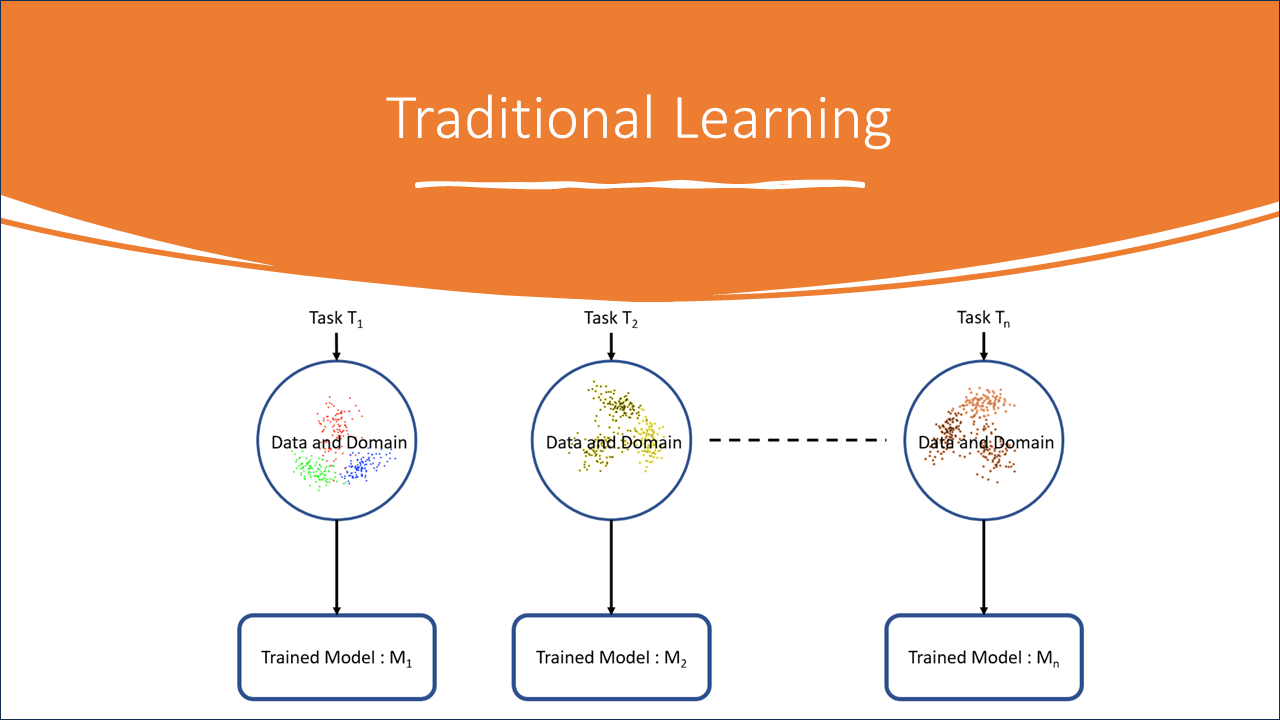

In this tutorial, you will learn how to train a convolutional neural network for image classification using transfer learning. You can read more about transfer learning in the CS231n notes. There are two different transfer learning techniques: fine-tuning and feature extraction. This article describes and analyzes both. Specifically, we will use the 18-layer ResNet architecture (ResNet18) and set up the environment for fine-tuning it using timm, starting with the libraries we'll use. In short, transfer learning means reusing pre-trained CNNs such as ResNet for custom tasks with limited data, through either feature extraction or fine-tuning.

The project implements transfer-learning-based image classification using PyTorch and ResNet18. It covers feature extraction with a frozen backbone and fine-tuning of the final residual block, and it tracks training versus validation accuracy to analyze convergence and generalization on a real-world cats vs. dogs dataset.

Feature extraction: we use the pre-trained model as a fixed feature extractor and train only a new classifier on top of it. Fine-tuning: we train the new classifier and also update the weights of the pre-trained model to adapt it to the new task. Understanding the differences between these two strategies is crucial for applying them effectively in different scenarios.

Transfer learning in PyTorch offers a powerful way to leverage pre-trained models for new tasks, reducing training time and improving performance. By understanding the key features, implementation steps, and practical tips, you can effectively apply transfer learning to a variety of projects.
