Properties Of Maximum Likelihood Estimation

Maximum likelihood estimation (MLE) is a widely used statistical estimation method. In this lecture, we will study its properties: efficiency, consistency and asymptotic normality. Learn the theory of maximum likelihood estimation and discover the assumptions needed to prove properties such as consistency and asymptotic normality.
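For reference, the three properties named above are usually stated along the following lines. The notation is an assumption introduced here, not taken from the lecture: $\theta_0$ is the true parameter, $\hat{\theta}_n$ is the MLE computed from $n$ i.i.d. observations, and $I(\theta_0)$ is the Fisher information of a single observation. Under standard regularity conditions:

$$
\begin{aligned}
\text{Consistency:} \quad & \hat{\theta}_n \xrightarrow{\;p\;} \theta_0 \quad \text{as } n \to \infty, \\
\text{Asymptotic normality:} \quad & \sqrt{n}\,\bigl(\hat{\theta}_n - \theta_0\bigr) \xrightarrow{\;d\;} \mathcal{N}\bigl(0,\; I(\theta_0)^{-1}\bigr), \\
\text{Efficiency:} \quad & \text{the limiting variance } I(\theta_0)^{-1} \text{ equals the Cramér-Rao lower bound.}
\end{aligned}
$$

This is a summary sketch only. The precise regularity conditions vary between textbooks and are not listed here.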

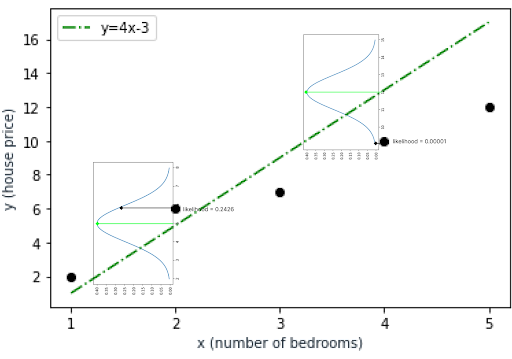

In statistics, maximum likelihood estimation (MLE) is a method of estimating the parameters of an assumed probability distribution, given some observed data. This is achieved by maximizing a likelihood function so that, under the assumed statistical model, the observed data is most probable. The parameter estimation story so far: if you are provided with a model and all the necessary probabilities, you can make predictions. But how do we infer the probabilities for a given model? Learn what maximum likelihood estimation (MLE) is, understand its mathematical foundations, see practical examples, and discover how to implement MLE in Python. The properties of MLE, including consistency, efficiency, and asymptotic normality, are examined to highlight its theoretical strengths.
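As a concrete illustration of "maximizing a likelihood function", here is a minimal Python sketch. It assumes an exponential model with an unknown rate, which is a choice made for this example and not something the text specifies. The helper names (`exp_log_likelihood`, `golden_section_maximize`) are hypothetical. The sketch maximizes the log-likelihood numerically and compares the result with the known closed-form MLE for this model, which is one over the sample mean.

```python
import math
import random


def exp_log_likelihood(rate, data):
    """Log-likelihood of i.i.d. Exponential(rate) observations:
    n * log(rate) - rate * sum(data)."""
    return len(data) * math.log(rate) - rate * sum(data)


def golden_section_maximize(f, lo, hi, tol=1e-8):
    """Maximize a unimodal function f on [lo, hi] by golden-section search."""
    inv_phi = (math.sqrt(5) - 1) / 2
    a, b = lo, hi
    c = b - inv_phi * (b - a)
    d = a + inv_phi * (b - a)
    while b - a > tol:
        if f(c) > f(d):
            b = d  # the maximum lies in [a, d]
        else:
            a = c  # the maximum lies in [c, b]
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
    return (a + b) / 2


# Simulated data from an assumed true rate (illustrative values only).
random.seed(0)
true_rate = 2.0
data = [random.expovariate(true_rate) for _ in range(1000)]

# Numerical MLE: the exponential log-likelihood is concave in the rate,
# so a one-dimensional unimodal search is sufficient here.
rate_numeric = golden_section_maximize(
    lambda r: exp_log_likelihood(r, data), 1e-6, 50.0
)

# Closed-form MLE for the exponential model: 1 / sample mean.
rate_closed_form = len(data) / sum(data)

print(f"numerical MLE:   {rate_numeric:.6f}")
print(f"closed-form MLE: {rate_closed_form:.6f}")
```

The two estimates should agree to several decimal places. For models without a closed-form solution, the numerical route is the only one available, and a general-purpose optimizer would typically replace the hand-written search used here.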

Learn the principles of MLE, its properties, and how to apply it to estimate parameters in various statistical models. “The maximum likelihood estimation is a method that determines parameter values in such a way that they maximise the likelihood that the process described by the model produced the data that were actually observed.” Maximum likelihood estimators (MLEs) have key properties that make them reliable as sample sizes grow, including consistency, asymptotic normality, and efficiency. Under standard regularity conditions, MLEs converge to the true parameter values and become approximately normally distributed in large samples. They are also asymptotically efficient, attaining the Cramér-Rao lower bound as the sample size grows. We’re going to use all of the principles from maximum likelihood estimation, but first we need to point out a subtle difference that can cause some confusion both here and when we get to more complicated probabilistic models later.
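The large-sample properties described above can be checked by simulation. The sketch below again assumes an exponential model with rate 2.0, an illustrative choice not taken from the text, and uses hypothetical helper names. For this model the Fisher information per observation is 1/rate², so the Cramér-Rao bound suggests that n times the variance of the MLE should approach rate² = 4 as n grows, while the average estimate should approach the true rate.

```python
import random
import statistics


def exp_rate_mle(sample):
    """Closed-form MLE of the exponential rate: 1 / sample mean."""
    return len(sample) / sum(sample)


def simulate(n, true_rate, reps, rng):
    """Draw `reps` samples of size n and return
    (mean of the MLEs, n * variance of the MLEs)."""
    estimates = [
        exp_rate_mle([rng.expovariate(true_rate) for _ in range(n)])
        for _ in range(reps)
    ]
    return statistics.fmean(estimates), n * statistics.pvariance(estimates)


rng = random.Random(42)
true_rate = 2.0  # assumed true parameter for the simulation

results = {}
for n in (10, 100, 1000):
    results[n] = simulate(n, true_rate, reps=2000, rng=rng)
    mean_est, scaled_var = results[n]
    # Consistency: mean_est should approach true_rate.
    # Efficiency: scaled_var should approach true_rate ** 2.
    print(f"n={n:5d}  mean of estimates={mean_est:.3f}  n*variance={scaled_var:.3f}")
```

At small n the estimator is visibly biased upward, which is expected: the MLE is guaranteed to be well behaved only asymptotically. A histogram of the estimates at n = 1000 would look close to a normal curve centred on the true rate.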
