Prompt Engineering for Optimal LLM Performance: Catalog

In this in-depth masterclass, data and AI specialist Valentina Alto unveils the art and science behind crafting effective prompts. You'll learn proven techniques to optimize prompts, control model behavior, reduce risks such as hallucination, and overcome model limitations. Optimizing LLM performance is no longer a “nice-to-have” afterthought; it is a core engineering discipline that directly influences cost, latency, and user satisfaction.
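To make the cost side of that claim concrete, here is a minimal Python sketch of per-request and monthly cost. It assumes a provider that bills input and output tokens separately at flat per-1K-token rates; the `Pricing` class, the function names, and the prices used in the example are hypothetical placeholders, not any provider's actual rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical per-1K-token prices in USD; replace with your provider's real rates."""
    input_per_1k: float
    output_per_1k: float


def request_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Cost of one call, with input (prompt) and output (completion) tokens billed separately."""
    return (input_tokens / 1000) * pricing.input_per_1k + (output_tokens / 1000) * pricing.output_per_1k


def monthly_cost(requests_per_day: int, input_tokens: int, output_tokens: int,
                 pricing: Pricing, days: int = 30) -> float:
    """Cost grows linearly with both traffic and tokens per request."""
    return requests_per_day * days * request_cost(input_tokens, output_tokens, pricing)


if __name__ == "__main__":
    pricing = Pricing(input_per_1k=0.001, output_per_1k=0.002)  # placeholder prices
    before = monthly_cost(10_000, input_tokens=1_200, output_tokens=300, pricing=pricing)
    after = monthly_cost(10_000, input_tokens=800, output_tokens=300, pricing=pricing)
    print(f"before ${before:.2f}, after ${after:.2f}, saved ${before - after:.2f}")
```

With these placeholder numbers, trimming the prompt from 1,200 to 800 input tokens lowers the monthly estimate from $540 to $420. Output tokens are unchanged, so only the input share of the bill shrinks.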

Prompt engineering is key to harnessing the immense capabilities of large language models (LLMs). An AI/ML engineering pack can optimize LLM prompts to reduce token usage, lower costs, and improve output quality by identifying redundancies, simplifying instructions, and restructuring prompts for clarity. Prompt engineering has emerged as an indispensable technique for extending the capabilities of LLMs and vision-language models (VLMs). It relies on task-specific instructions, known as prompts, to improve model efficacy without modifying the core model parameters.
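The redundancy-and-simplification idea can be illustrated with a minimal Python sketch. It assumes a small, hypothetical table of verbose phrases (`REWRITES`) and uses a crude word-and-punctuation count as a stand-in for a real tokenizer, so the token numbers are only a proxy; the function names are illustrative and do not come from any particular tool.

```python
import re

# Hypothetical verbose phrases and their shorter equivalents (illustrative, not exhaustive).
REWRITES = [
    (r"\bplease note that\s+", ""),
    (r"\bin order to\b", "to"),
    (r"\bit is important that you\s+", ""),
]


def approx_tokens(text: str) -> int:
    """Crude token proxy: words plus punctuation marks. Real tokenizers count differently."""
    return len(re.findall(r"\w+|[^\w\s]", text))


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def simplify(sentence: str) -> str:
    """Apply the phrase rewrites, collapse whitespace, and re-capitalize the first letter."""
    for pattern, replacement in REWRITES:
        sentence = re.sub(pattern, replacement, sentence, flags=re.IGNORECASE)
    sentence = re.sub(r"\s+", " ", sentence).strip()
    return sentence[:1].upper() + sentence[1:]


def compress_prompt(prompt: str) -> str:
    """Simplify each sentence, then drop case-insensitive repeats, keeping first occurrences."""
    seen: set[str] = set()
    kept: list[str] = []
    for sentence in split_sentences(prompt):
        short = simplify(sentence)
        key = short.lower()
        if key in seen:
            continue  # redundant instruction already stated earlier
        seen.add(key)
        kept.append(short)
    return " ".join(kept)


if __name__ == "__main__":
    prompt = (
        "You are a helpful assistant. Please note that answers must be concise. "
        "In order to help the user, cite the source document. "
        "You are a helpful assistant. Answers must be concise."
    )
    short = compress_prompt(prompt)
    print(short)
    print(approx_tokens(prompt), "->", approx_tokens(short))
```

Here the repeated role line and the restated conciseness rule are dropped, and "In order to" becomes "To". Exact-match deduplication after normalization is deliberately conservative: it never merges sentences that merely look similar, so it cannot silently change an instruction's meaning.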

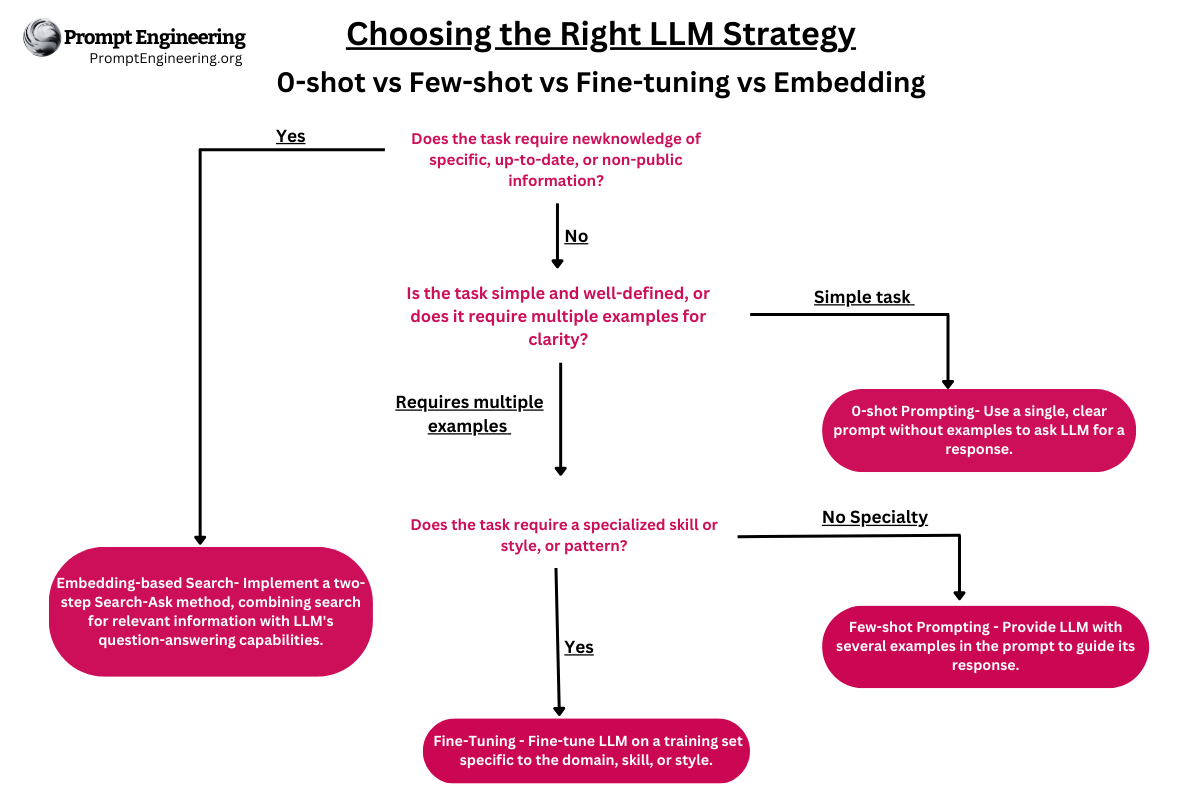

Master Prompt Engineering: LLM Embedding and Fine-Tuning

Recent research reviews emerging trends in AI-driven prompt enhancement, along with mixed prompting techniques and specialized frameworks for prompt optimization that shape how users interact with LLMs. A telescopic approach lets you test a single prompt against multiple intents, identify performance gaps, and refine the prompt until it reaches the desired performance.

One proposed taxonomy divides prompt engineering into four distinct aspects: profile and instruction, knowledge, reasoning and planning, and reliability. By providing a structured framework for understanding these dimensions, its authors aim to facilitate the systematic design of prompts. Their contribution advances the understanding of how prompt engineering affects LLM performance and provides actionable guidelines for practitioners seeking to maximize model utility across diverse applications.
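The telescopic testing loop can be sketched as a small evaluation harness: run one prompt template over test cases grouped by intent, compute a pass rate per intent, and flag intents below a target as gaps to refine. This is a minimal Python sketch under stated assumptions: `stub_model` is a hypothetical, rule-based stand-in for a real LLM call so the example runs offline, and the substring pass criterion, the 0.9 threshold, and all names (`EvalCase`, `evaluate`, `performance_gaps`) are illustrative choices, not part of any published method.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    intent: str        # the user goal this case exercises, e.g. "refund" or "shipping"
    user_input: str
    must_contain: str  # simple pass criterion; real evaluations would use richer checks


PROMPT = "You are a support agent. Classify and answer the request: {user_input}"


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call so the sketch runs offline."""
    text = prompt.lower()
    if "refund" in text:
        return "Intent: refund. Your refund is being processed."
    if "where is my order" in text:
        return "Intent: shipping. Your order is on the way."
    return "Intent: unknown."


def evaluate(template: str, cases: list[EvalCase],
             model: Callable[[str], str]) -> dict[str, float]:
    """Run one prompt template over every case and return the pass rate per intent."""
    passed: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for case in cases:
        output = model(template.format(user_input=case.user_input))
        total[case.intent] += 1
        if case.must_contain.lower() in output.lower():
            passed[case.intent] += 1
    return {intent: passed[intent] / total[intent] for intent in total}


def performance_gaps(scores: dict[str, float], threshold: float = 0.9) -> list[str]:
    """Intents whose pass rate falls below the target: candidates for prompt refinement."""
    return sorted(intent for intent, score in scores.items() if score < threshold)


if __name__ == "__main__":
    cases = [
        EvalCase("refund", "I want a refund for my order", "refund"),
        EvalCase("refund", "Can I get my money back?", "refund"),
        EvalCase("shipping", "Where is my order?", "shipping"),
    ]
    scores = evaluate(PROMPT, cases, stub_model)
    print(scores, performance_gaps(scores))
```

In this toy run, the paraphrased refund request ("money back") is misclassified, so the refund intent scores 0.5 while shipping scores 1.0, and `performance_gaps` returns `['refund']`. The refine step would then edit the template and rerun `evaluate` on the same cases, so every revision is measured against a fixed benchmark.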

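The four aspects of the taxonomy (profile and instruction, knowledge, reasoning and planning, reliability) can be mapped onto a reusable prompt template, which also shows how behavior is steered purely through the prompt rather than by changing model parameters. The sketch below is one assumed way to do this in Python, not the taxonomy authors' method; the class name `PromptSpec`, its field names, the section titles, and the example content are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """One field per taxonomy aspect (hypothetical field names)."""
    profile_and_instruction: str                          # role plus task instructions
    knowledge: list[str] = field(default_factory=list)   # facts or retrieved passages to ground answers
    reasoning_and_planning: str = ""                      # how the model should work through the task
    reliability: str = ""                                 # guardrails, e.g. abstain when context is insufficient

    def render(self, question: str) -> str:
        """Assemble the non-empty aspects into labeled sections, ending with the question."""
        sections = [("Role and instructions", self.profile_and_instruction)]
        if self.knowledge:
            sections.append(("Context", "\n".join(f"- {fact}" for fact in self.knowledge)))
        if self.reasoning_and_planning:
            sections.append(("Approach", self.reasoning_and_planning))
        if self.reliability:
            sections.append(("Constraints", self.reliability))
        sections.append(("Question", question))
        return "\n\n".join(f"## {title}\n{body}" for title, body in sections)


if __name__ == "__main__":
    spec = PromptSpec(
        profile_and_instruction="You are a careful technical assistant. Answer in at most three sentences.",
        knowledge=["HTTP status 429 means Too Many Requests."],
        reasoning_and_planning="First identify the relevant context, then answer.",
        reliability="Use only the context above; if it is insufficient, say you do not know.",
    )
    print(spec.render("What does an HTTP 429 response mean?"))
```

Keeping each aspect in its own field makes it easy to vary one dimension at a time (for example, toggling the reliability constraints) while holding the others fixed during evaluation.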