Prompt Compression in Large Language Models (LLMs): Making Every Token Count

In the world of large language models (LLMs), every token counts, literally. Whether you're crafting prompts for chatbots, generating code snippets, or working with data, long prompts drive up computation, latency, and cost. To mitigate these challenges, multiple efficient methods have been proposed, and prompt compression has attracted significant research interest. This survey provides an overview of prompt compression techniques, categorized into hard prompt methods, which keep the compressed prompt as natural-language tokens, and soft prompt methods, which encode it into continuous representations.
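To make the hard/soft distinction concrete, here is a minimal Python sketch. It is not taken from the survey: `hard_compress` and `soft_compress` are hypothetical helpers, the keep mask is picked by hand rather than scored by a model, and the mean-pooling in `soft_compress` is only a placeholder for the learned encoder a real soft prompt method would use. What matters is the output type: hard compression yields fewer ordinary tokens, while soft compression yields fewer continuous vectors.

```python
from typing import List


def hard_compress(tokens: List[str], keep: List[bool]) -> List[str]:
    """Hard prompt compression: the result is still a sequence of ordinary
    tokens (a subset of the input), so any LLM can read it as text."""
    return [tok for tok, k in zip(tokens, keep) if k]


def soft_compress(embeddings: List[List[float]], group: int) -> List[List[float]]:
    """Toy soft prompt compression: every `group` consecutive token embeddings
    are mean-pooled into one continuous vector. Real soft prompt methods learn
    this mapping with an encoder model; mean-pooling is only a placeholder."""
    pooled = []
    for start in range(0, len(embeddings), group):
        chunk = embeddings[start:start + group]
        dim = len(chunk[0])
        pooled.append([sum(vec[d] for vec in chunk) / len(chunk) for d in range(dim)])
    return pooled


tokens = ["Please", "kindly", "summarize", "the", "following", "report"]
print(hard_compress(tokens, [False, False, True, False, False, True]))
# ['summarize', 'report']

emb = [[float(i), float(i) * 2] for i in range(6)]  # 6 toy 2-d embeddings
print(soft_compress(emb, group=3))
# [[1.0, 2.0], [4.0, 8.0]]
```

Because the hard result is still text, it can be sent to any LLM; the soft result only makes sense to a model trained to consume those vectors.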

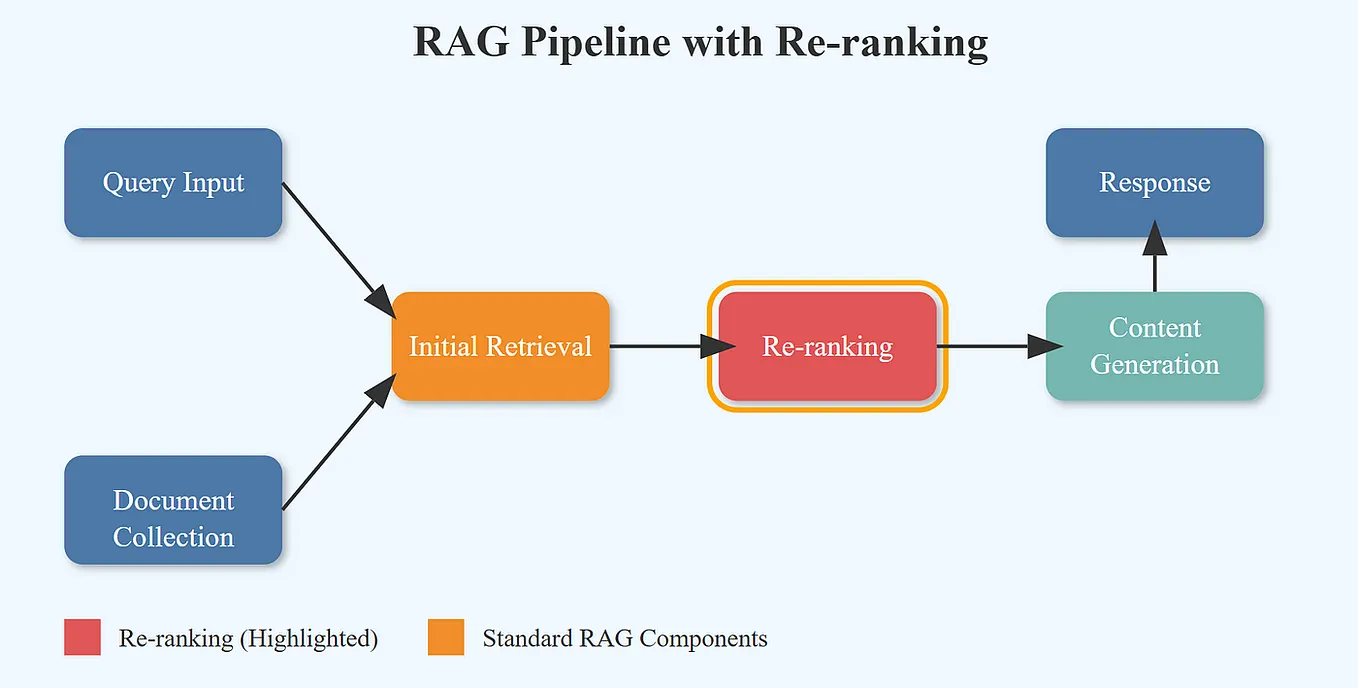

Prompt compression reduces LLM input tokens while preserving task accuracy. This guide covers 8 techniques (including LLMLingua, Selective Context, RECOMP, and verbatim compaction), real benchmark results, compression-versus-performance curves, and why code requires different compression than prose.

In the accompanying figure, tokens with diagonal stripes represent the output tokens processed by the language models. Unlike hard prompt methods, soft prompt methods have the bottom LLMs process the input tokens, and their outputs (the tokens with diagonal stripes) serve as input to the LLMs above.

In this tutorial, we'll look at how to use LLMLingua to optimize your prompts, making them more efficient and cheaper to run. When an LLM processes a prompt, every token counts toward your cost and toward the model's context-window limit. In this article, you will learn five practical prompt compression techniques that reduce tokens and speed up LLM generation without sacrificing task quality.
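Selective Context scores lexical units by their self-information, -log p(token | context), under a causal language model and drops the least informative ones. The sketch below follows that idea under simplifying assumptions: whitespace tokenization, and a unigram probability estimated from the prompt itself instead of a language model's conditional probabilities. `self_information` and `selective_compress` are hypothetical names, not part of any library.

```python
import math
from collections import Counter
from typing import List


def self_information(tokens: List[str]) -> List[float]:
    """Return -log2 p(token) for each token, where p is a unigram estimate
    from the prompt itself (a stand-in for a causal LM's conditional
    probabilities, which Selective Context actually uses)."""
    counts = Counter(tok.lower() for tok in tokens)
    total = len(tokens)
    return [-math.log2(counts[tok.lower()] / total) for tok in tokens]


def selective_compress(text: str, keep_ratio: float) -> str:
    """Keep roughly `keep_ratio` of the whitespace tokens, choosing those with
    the highest self-information and preserving their original order."""
    tokens = text.split()
    if not tokens:
        return ""
    scores = self_information(tokens)
    n_keep = max(1, round(len(tokens) * keep_ratio))
    # Rank by descending score; ties go to the earlier token.
    ranked = sorted(range(len(tokens)), key=lambda i: (-scores[i], i))
    kept = sorted(ranked[:n_keep])  # restore original order
    return " ".join(tokens[i] for i in kept)


prompt = "the report says the revenue grew and the costs fell"
print(selective_compress(prompt, keep_ratio=0.7))
# report says revenue grew and costs fell
```

Repeated function words such as "the" receive the lowest scores and are dropped first; the LM-based method relies on the same intuition but with far better probability estimates.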

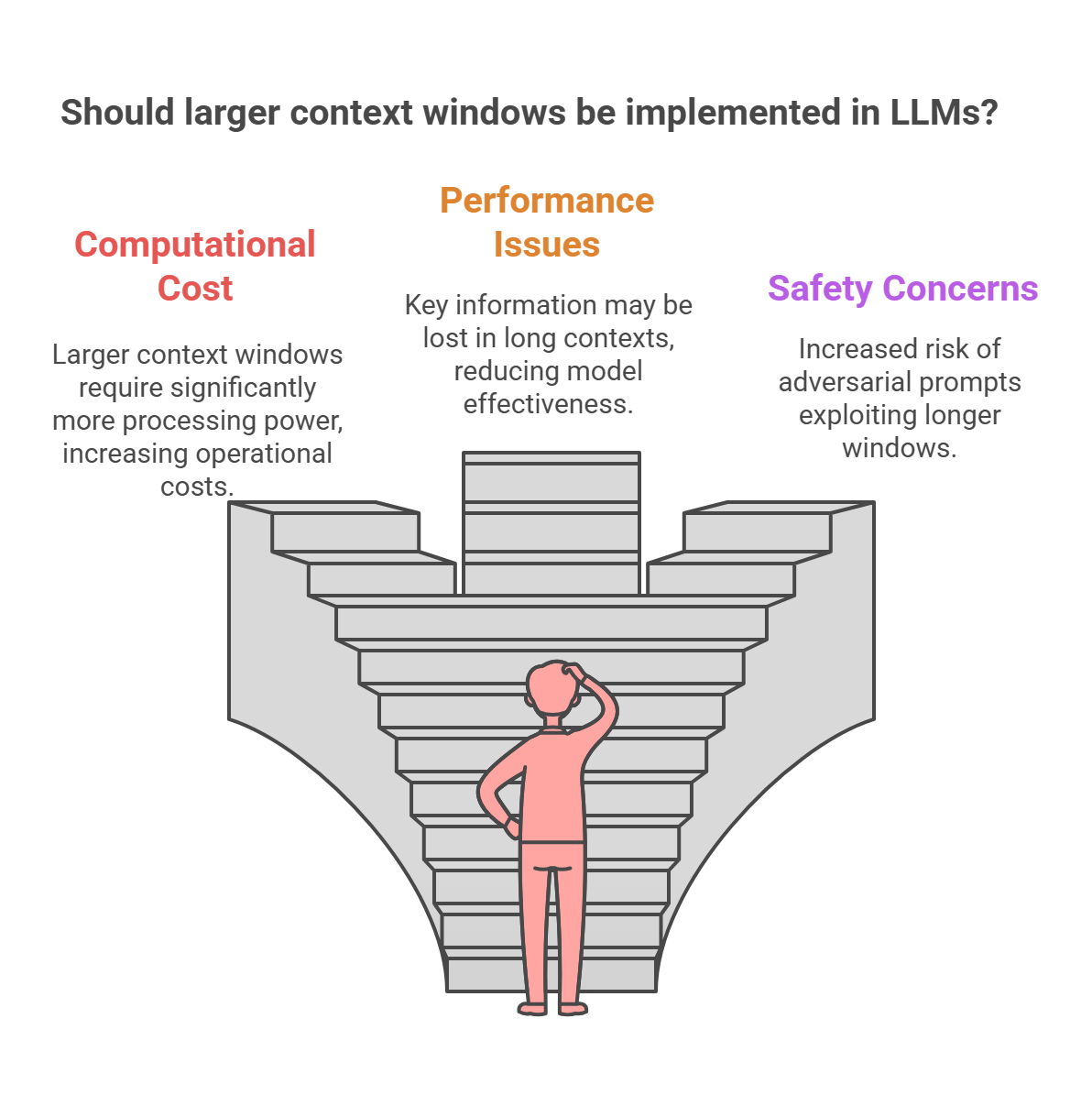

Tools like LLMLingua (by Microsoft) use language models to compress prompts by learning which parts can be dropped while preserving meaning. This approach is powerful, but it relies on another LLM to optimize prompts for the target LLM.

An empirical study of prompt compression methods for language models examines six distinct methods using three popular LLMs across 13 datasets.

Prompt compression is a class of algorithmic strategies designed to reduce the length of input prompts for LLMs while retaining the information necessary for accurate downstream behavior. This reduction addresses the computational, latency, and cost overhead of long prompts, especially in settings where LLMs handle complex tasks requiring large or multi-document contexts. In this deep dive, we'll explore what prompt compression is, how it works, the common methods and models used to achieve it, and why it has become essential for anyone building with LLMs.
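Since the goal of compression is to cut cost and latency, it helps to measure what a given compression actually buys. The sketch below computes the compression ratio (original tokens divided by compressed tokens, so 4.0 means 4x) and the input cost avoided on the target model. The `CompressionReport` class, its field names, and the per-1K-token price are illustrative assumptions; substitute your provider's real pricing. Because tools like LLMLingua run a second model to do the compressing, the sketch also subtracts an optional compressor cost.

```python
from dataclasses import dataclass


@dataclass
class CompressionReport:
    """Hypothetical helper for summarizing one compression run."""
    original_tokens: int
    compressed_tokens: int
    price_per_1k_tokens: float  # assumed input price of the target LLM, in dollars
    compressor_cost: float = 0.0  # assumed cost of running the compressor model

    def __post_init__(self) -> None:
        if self.original_tokens <= 0 or self.compressed_tokens <= 0:
            raise ValueError("token counts must be positive")

    @property
    def ratio(self) -> float:
        # e.g. 2000 -> 500 tokens gives 4.0 (a "4x" compression)
        return self.original_tokens / self.compressed_tokens

    @property
    def tokens_saved(self) -> int:
        return self.original_tokens - self.compressed_tokens

    @property
    def net_saving(self) -> float:
        # Input cost avoided on the target LLM, minus what compression itself cost.
        return self.tokens_saved / 1000 * self.price_per_1k_tokens - self.compressor_cost


report = CompressionReport(original_tokens=2000, compressed_tokens=500,
                           price_per_1k_tokens=0.5)
print(report.ratio)         # 4.0
print(report.tokens_saved)  # 1500
print(report.net_saving)    # 0.75
```

If `net_saving` comes out negative, running the compressor costs more than the tokens it removes, which can happen when prompts are already short.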

