Probabilistic Inference Scaling

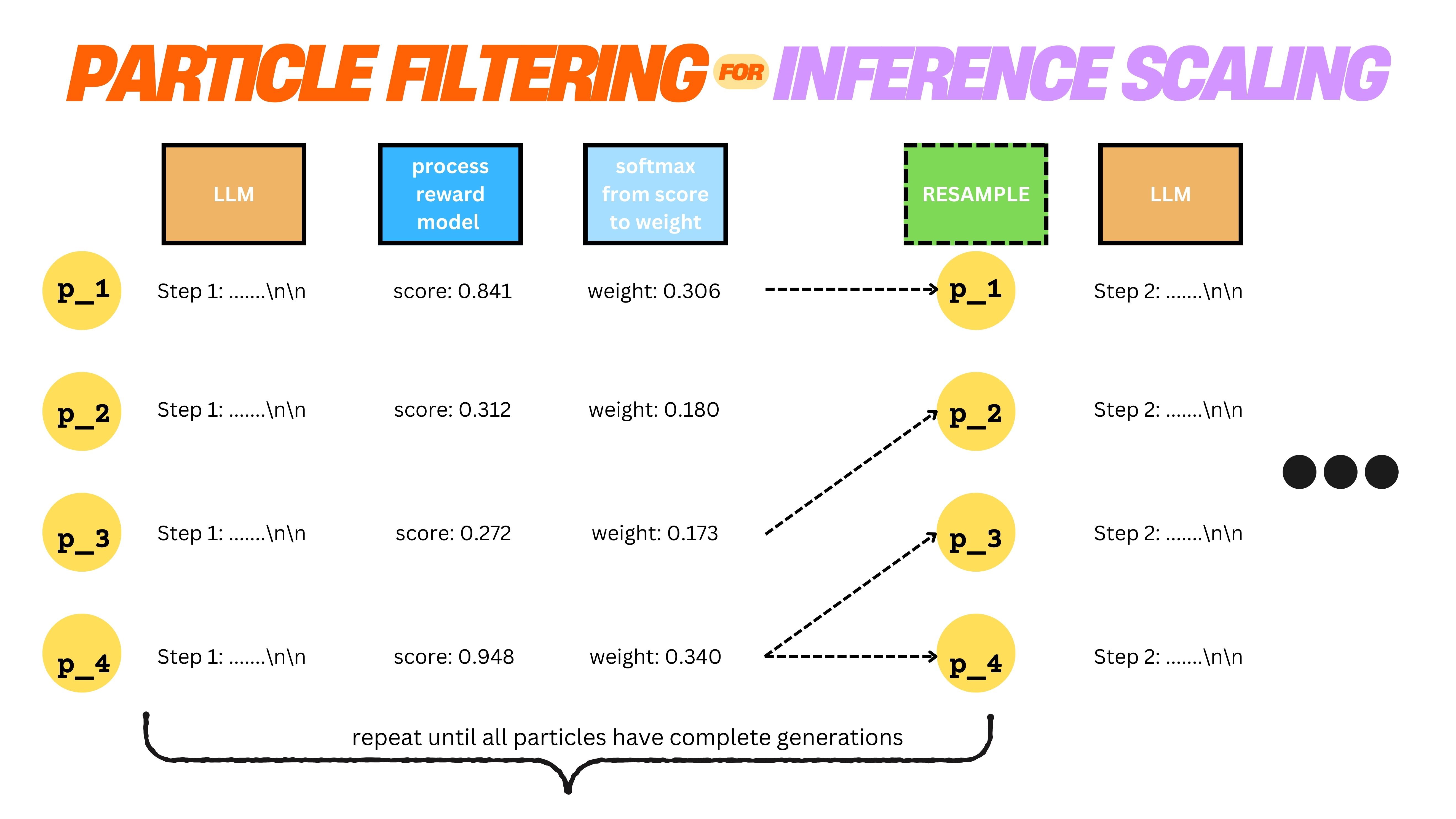

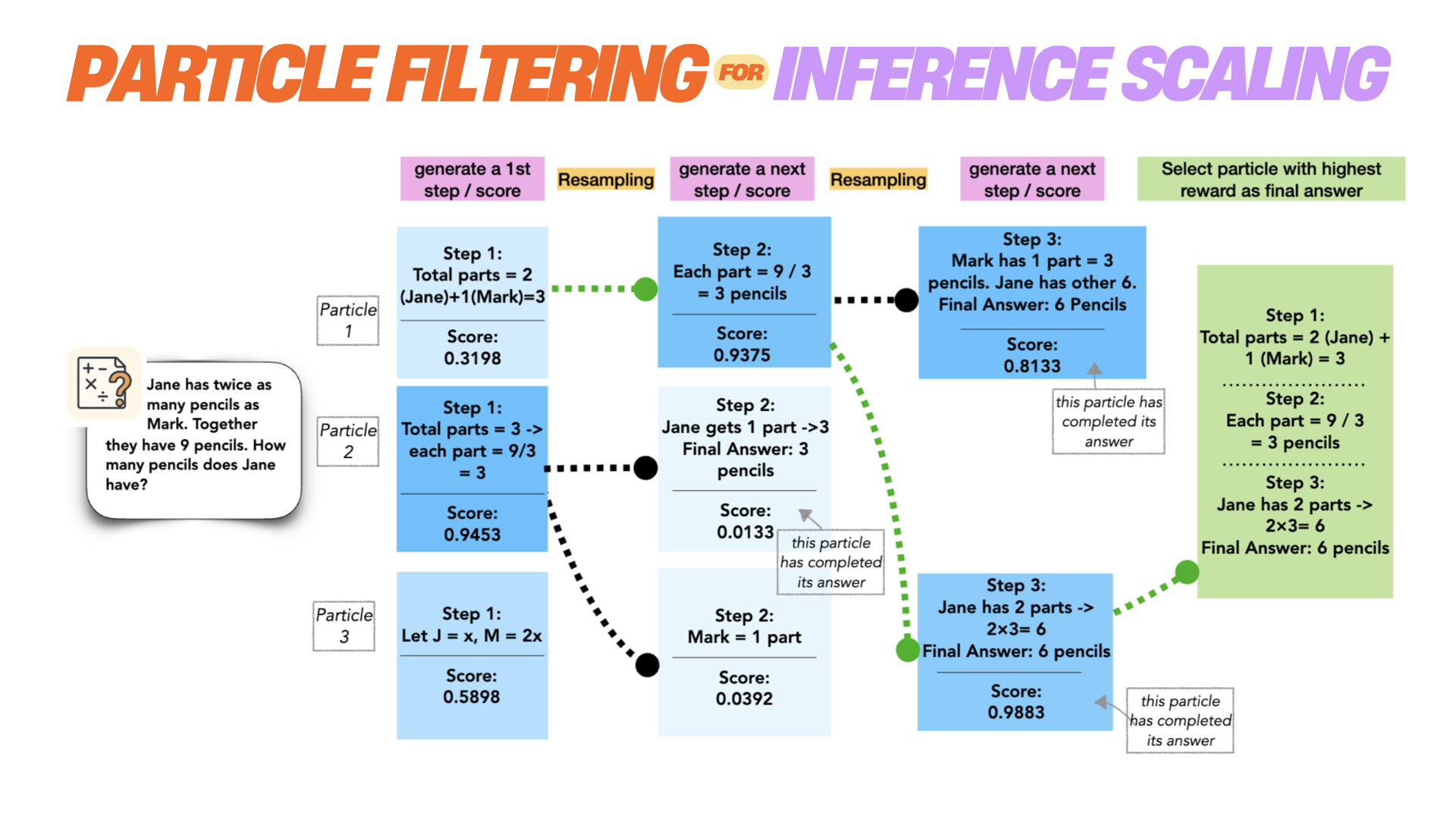

In this paper, we instead cast inference-time scaling as a probabilistic inference task and leverage sampling-based techniques to explore the typical set of the state distribution of a state space model with an approximate likelihood, rather than optimizing for its mode directly.

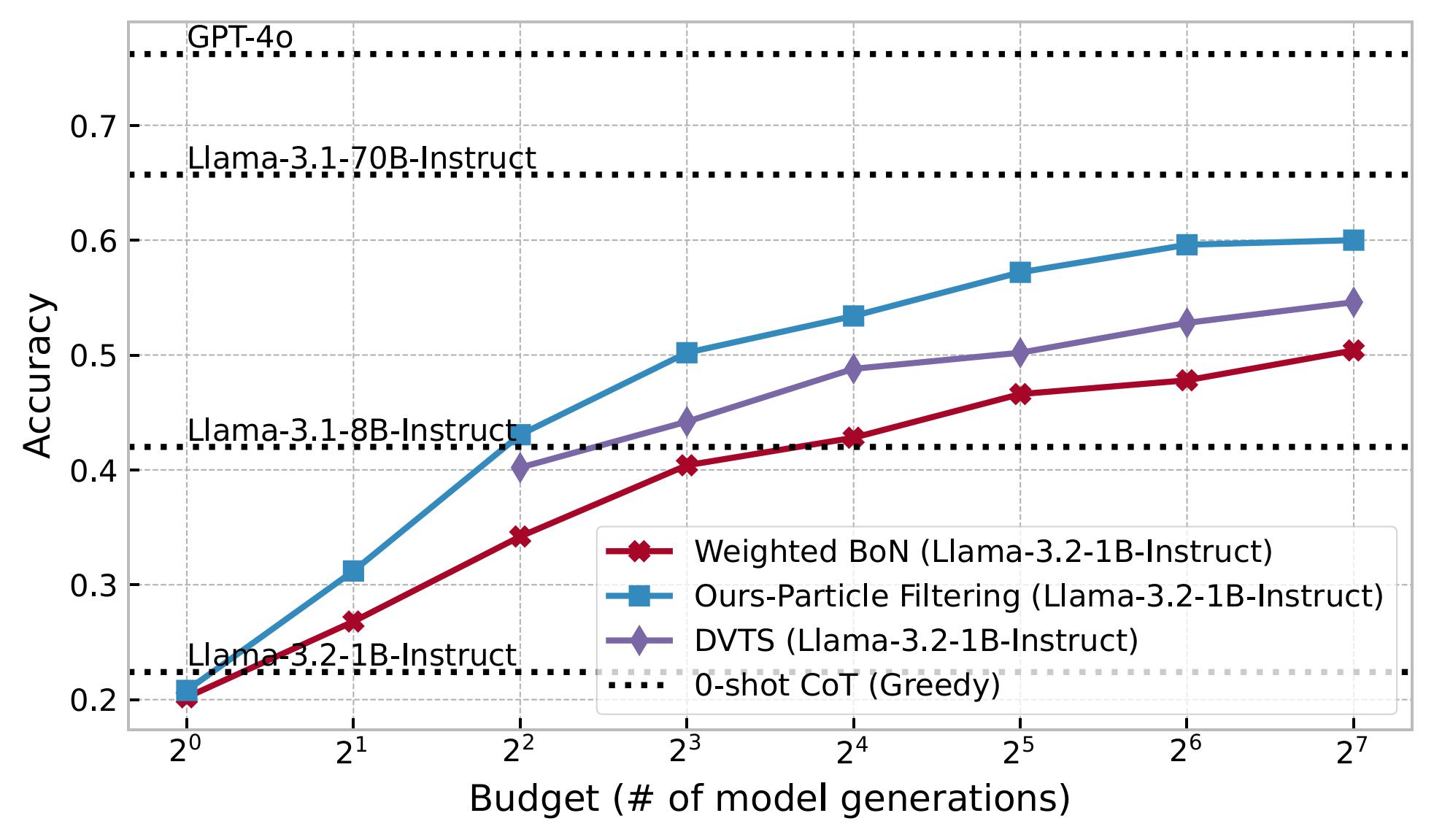

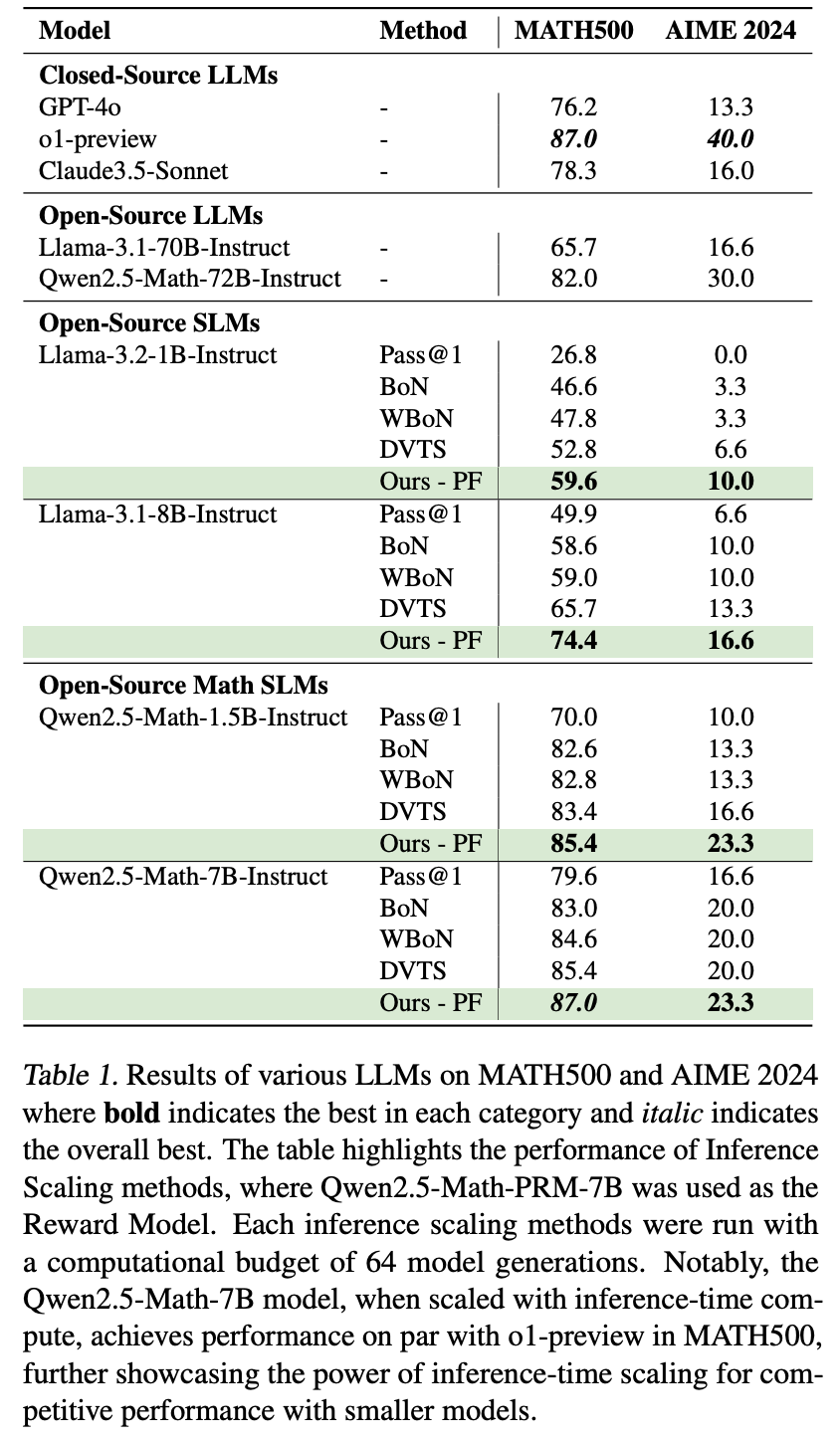

Building on this principle, we introduce a novel approach to inference-time scaling by adapting particle-based Monte Carlo algorithms from probabilistic inference. Our work not only presents an effective method for inference-time scaling, but also connects the rich literature on probabilistic inference with inference-time scaling of LLMs, so that more robust algorithms can be developed in future work.

For multi-round self-correction, we propose a probabilistic theory to model the dynamics of accuracy change and explain the performance improvements observed across rounds. In this section, we introduce this inference scaling theory to model and explain how accuracy changes in multi-round self-correction. First, we formally define the multi-round self-correction process and provide mathematical notation in §2.1.
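To make the idea of "dynamics of accuracy change" concrete, here is a minimal sketch under a simplifying assumption that is not taken from the source: each self-correction round keeps a correct answer correct with probability `p_keep` and turns a wrong answer into a correct one with probability `p_fix`. The function names and both parameters are hypothetical. Under that assumption, accuracy follows a linear recurrence that converges geometrically to a fixed upper bound.

```python
def accuracy_limit(p_keep, p_fix):
    """Fixed point of acc = acc * p_keep + (1 - acc) * p_fix (assumed model)."""
    denom = 1.0 - p_keep + p_fix
    if denom == 0.0:
        # p_keep == 1 and p_fix == 0: accuracy never changes, no unique limit.
        raise ValueError("accuracy is constant; the limit equals the initial accuracy")
    return p_fix / denom


def accuracy_curve(acc0, p_keep, p_fix, rounds):
    """Accuracy after 0..rounds self-correction rounds under the assumed model."""
    accs = [acc0]
    for _ in range(rounds):
        acc = accs[-1]
        # Correct answers survive with p_keep; wrong answers are repaired with p_fix.
        accs.append(acc * p_keep + (1.0 - acc) * p_fix)
    return accs


def accuracy_closed_form(acc0, p_keep, p_fix, t):
    """Closed form: the gap to the limit shrinks by (p_keep - p_fix) each round."""
    upp = accuracy_limit(p_keep, p_fix)
    alpha = p_keep - p_fix
    return upp - (alpha ** t) * (upp - acc0)


if __name__ == "__main__":
    # Hypothetical numbers chosen only for illustration.
    for t, acc in enumerate(accuracy_curve(0.5, 0.9, 0.3, 5)):
        print(t, round(acc, 4))
```

In this toy model the gap between the current accuracy and the limit shrinks by a constant factor each round, which is one simple way accuracy could improve quickly at first and then plateau. The theory in the paper may use different quantities and assumptions.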

A Probabilistic Inference Scaling Theory for LLM Self-Correction. Anonymous ACL submission. Abstract: Large language models (LLMs) have demonstrated the capability to refine their generated answers through self-correction, enabling continuous performance improvement over multiple rounds. However, the mechanisms under…

The computational challenge of probabilistic inference remains the primary roadblock for applying probabilistic programming languages (PPLs) in practice. Inference is fundamentally hard, so there is no one-size-fits-all solution. We propose a novel inference-time scaling approach by adapting particle-based Monte Carlo methods. Our method maintains a diverse set of candidates and robustly balances exploration and exploitation. Our work offers both a theoretical foundation and a practical solution for principled inference-time scaling, addressing a critical gap in the efficient deployment of LLMs for complex reasoning.
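The particle-based idea described above can be illustrated with a small, generic particle filter. This is a sketch under stated assumptions, not the authors' implementation: `propose_step` stands in for sampling the next reasoning step from a language model, `score` stands in for an approximate likelihood such as a reward model, and the softmax weighting is one common choice rather than something the source specifies. The toy functions at the bottom are hypothetical and exist only so the example runs.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax that turns scores into resampling weights."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def particle_filter(propose_step, score, n_particles, n_steps, rng):
    """Propagate, weight, and resample a population of partial sequences."""
    particles = [[] for _ in range(n_particles)]
    for _ in range(n_steps):
        # Propagate: extend every particle by one sampled step.
        particles = [p + [propose_step(p, rng)] for p in particles]
        # Weight: score each partial sequence with the approximate likelihood.
        weights = softmax([score(p) for p in particles])
        # Resample with replacement: promising particles are duplicated,
        # weak ones tend to disappear, but sampling keeps some diversity.
        chosen = rng.choices(particles, weights=weights, k=n_particles)
        particles = [list(p) for p in chosen]
    return particles


# Hypothetical stand-ins for an LLM step sampler and a reward model.
def toy_propose(prefix, rng):
    return rng.randint(0, 9)


def toy_score(prefix):
    # Prefers sequences whose latest digit is close to 7.
    return -abs(prefix[-1] - 7)


if __name__ == "__main__":
    rng = random.Random(0)
    for particle in particle_filter(toy_propose, toy_score, 8, 5, rng):
        print(particle)
```

Because resampling is stochastic rather than a greedy top-k selection, lower-scoring candidates can survive a round. That is how this kind of method keeps a diverse candidate set while still concentrating compute on promising ones.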

