Principled Approaches For Learning Latent Variable Models

Principled Approaches For Learning Latent Variable Models (Microsoft)

We show that higher-order relationships among observed variables have a low-rank representation under natural statistical constraints such as conditional independence relationships. We also present efficient computational methods for finding these low-rank representations.

Explore what latent variable modeling is, how it can benefit you, and how to choose the right model based on your research question and data types.
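The low-rank claim can be illustrated in its simplest, second-order form. The sketch below is not the method from the work quoted above; it assumes a hypothetical hidden variable with two states and two observed variables with three values each that are conditionally independent given the hidden state. All probabilities are made-up illustration values. Under that assumption the 3x3 joint probability matrix is a sum of two rank-one terms, so its rank is at most 2 and its determinant vanishes.

```python
def joint_matrix(prior, cond_a, cond_b):
    """P[i][j] = sum_h prior[h] * cond_a[h][i] * cond_b[h][j]."""
    n_hidden = len(prior)
    n_a, n_b = len(cond_a[0]), len(cond_b[0])
    return [
        [sum(prior[h] * cond_a[h][i] * cond_b[h][j] for h in range(n_hidden))
         for j in range(n_b)]
        for i in range(n_a)
    ]

def det3(m):
    """Determinant of a 3x3 matrix by cofactor expansion."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

# Hypothetical parameters: 2 hidden states, 3 values per observed variable.
prior = [0.4, 0.6]                            # P(h)
cond_a = [[0.7, 0.2, 0.1], [0.1, 0.3, 0.6]]   # P(a | h)
cond_b = [[0.5, 0.4, 0.1], [0.2, 0.2, 0.6]]   # P(b | h)

P = joint_matrix(prior, cond_a, cond_b)

# Rank <= 2 means the full 3x3 determinant is zero (up to rounding),
# while a 2x2 minor can still be nonzero.
full_det = det3(P)
minor = P[0][0] * P[1][1] - P[0][1] * P[1][0]
print(f"det = {full_det:.2e}, 2x2 minor = {minor:.6f}")
```

The same factorization idea extends to third-order and higher co-occurrence tables, which is where the higher-order relationships mentioned above come in.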

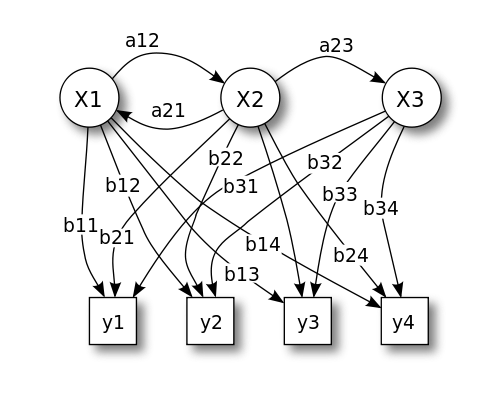

Latent Variable Models

So far we have focused mostly on supervised learning. We now cover an important class of unsupervised (or sometimes semi-supervised) approaches: latent variable models. In fact, the unobserved variables make learning much more difficult; in this chapter, we will look at how to use and how to learn models that involve latent variables.

To address this gap in the literature, we introduce a Bayesian active learning framework for discrete latent variable models. We develop methods based on both MCMC sampling and variational inference to efficiently compute information gain and select informative inputs in adaptive experiments.

Latent variables are simply random variables that we posit to exist underlying our data. We could also refer to such models as doubly stochastic, because they involve two stages of noise: noise in the latent variable and then noise in the mapping from latent variable to observed variable.
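The two stages of noise can be made concrete with a minimal sampling sketch. It assumes a Gaussian mixture as the example model, which the text above does not specify; the function name `sample_mixture` and all parameter values are hypothetical. A discrete latent variable `z` is drawn first, then the observation `x` is drawn from a Gaussian whose parameters depend on `z`.

```python
import random

def sample_mixture(n, weights, means, stds, seed=0):
    """Ancestral sampling: draw the latent z, then draw x given z."""
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        # Stage 1: noise in the latent variable.
        z = rng.choices(range(len(weights)), weights=weights)[0]
        # Stage 2: noise in the mapping from latent to observed variable.
        x = rng.gauss(means[z], stds[z])
        samples.append((z, x))
    return samples

# Hypothetical two-component model.
samples = sample_mixture(5000, weights=[0.3, 0.7], means=[-2.0, 3.0], stds=[0.5, 1.0])

# A learner only sees the x values; the z values stay hidden.
observed = [x for _, x in samples]
```

Discarding `z` and keeping only `observed` is what makes learning harder: the component each point came from has to be inferred rather than read off.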

Latent Variable Modeling Using R: A Step By Step Guide

This thesis develops techniques for fitting latent variable models where the dependencies between variables are parameterized by nonlinear functions such as deep neural networks or nonlinear differential equations.

This is part 1 of a two-part series of articles about latent variable models. Part 1 covers the expectation maximization (EM) algorithm and its application to Gaussian mixture models.

These results suggest that a modernized, scale-appropriate VAE remains competitive for molecular generation when paired with principled conditioning and parameter-efficient fine-tuning.

I will present a broad framework for unsupervised learning of latent variable models, addressing both statistical and computational concerns.
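As a rough illustration of the expectation maximization (EM) algorithm applied to a Gaussian mixture model, here is a minimal one-dimensional sketch. It is not taken from the article series mentioned above; the function names, the synthetic data, and the starting values are all assumptions chosen for illustration. The E-step computes each component's posterior responsibility for each point, and the M-step re-estimates weights, means, and standard deviations from those responsibilities.

```python
import math
import random

def normal_pdf(x, mean, std):
    """Density of a univariate Gaussian."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))

def em_gmm_1d(data, weights, means, stds, n_iter=50):
    """EM for a 1-D Gaussian mixture; returns updated copies of the parameters."""
    weights, means, stds = list(weights), list(means), list(stds)
    k = len(means)
    for _ in range(n_iter):
        # E-step: posterior responsibility of each component for each point.
        resp = []
        for x in data:
            joint = [weights[j] * normal_pdf(x, means[j], stds[j]) for j in range(k)]
            total = sum(joint)
            resp.append([p / total for p in joint])
        # M-step: weighted maximum-likelihood updates.
        for j in range(k):
            nj = sum(r[j] for r in resp)
            weights[j] = nj / len(data)
            means[j] = sum(r[j] * x for r, x in zip(resp, data)) / nj
            var = sum(r[j] * (x - means[j]) ** 2 for r, x in zip(resp, data)) / nj
            stds[j] = math.sqrt(max(var, 1e-6))  # floor avoids a collapsed component
    return weights, means, stds

# Synthetic data from a hypothetical two-component mixture.
rng = random.Random(0)
data = [rng.gauss(-2.0, 0.5) if rng.random() < 0.3 else rng.gauss(3.0, 1.0)
        for _ in range(2000)]

# Deliberately rough starting values.
fit_weights, fit_means, fit_stds = em_gmm_1d(
    data, weights=[0.5, 0.5], means=[-1.0, 1.0], stds=[1.0, 1.0]
)
print(fit_weights, fit_means, fit_stds)
```

This sketch skips convergence checks and log-space arithmetic, both of which a practical implementation would likely want, and EM in general only finds a local optimum that depends on the starting values.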

