Preprocessing 1 Feature Normalisation And Scaling

Normalization and scaling are two fundamental preprocessing techniques in data analysis and machine learning. They rescale, standardize, or normalize feature values by adjusting the scale and distribution of the existing data, which helps machine learning models perform better and more accurately. In machine learning, data preprocessing is often the make-or-break factor that determines model performance. Among the most critical preprocessing techniques are feature scaling and normalization: two approaches that, while related, serve distinct purposes and are often confused with one another.

Common feature scaling techniques include normalization and standardization. In data preprocessing, normalization scales data to a specific range, typically between 0 and 1, whereas standardization rescales data to have zero mean and unit variance. Normalization and scaling are both essential techniques in data preprocessing, but they have distinct characteristics and applications. Normalization is more suitable for algorithms that rely on distance calculations and require features to be on a similar scale.

In general, many learning algorithms, such as linear models, benefit from standardization of the data set (see Importance of Feature Scaling in the scikit-learn documentation). If some outliers are present in the set, robust scalers or other transformers can be more appropriate. In machine learning preprocessing, feature scaling is the process of transforming numerical features so they share a similar scale. This prevents models from being biased toward features with larger numerical ranges and lets each feature carry comparable weight during training.
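To make the difference between the two techniques concrete, here is a minimal sketch in plain Python. The function names `min_max_normalize` and `standardize`, the income figures, and the choice to map a constant feature to 0.0 are all assumptions made for illustration, not part of any library.

```python
from statistics import fmean, pstdev


def min_max_normalize(values):
    """Rescale values linearly into the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # Assumption: a constant feature has no spread, so map it to 0.0.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def standardize(values):
    """Shift to zero mean and rescale to unit (population) standard deviation."""
    mean = fmean(values)
    std = pstdev(values)
    if std == 0:
        # Assumption: same convention as above for a constant feature.
        return [0.0 for _ in values]
    return [(v - mean) / std for v in values]


# Made-up illustrative data: annual incomes on a much larger scale than, say, ages.
incomes = [20_000, 35_000, 50_000, 80_000, 120_000]

normalized = min_max_normalize(incomes)   # smallest value maps to 0, largest to 1
standardized = standardize(incomes)       # mean 0, standard deviation 1

print(normalized)
print(standardized)
```

Normalization pins the output to a fixed range, while standardization leaves the range open and fixes the mean and spread instead.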

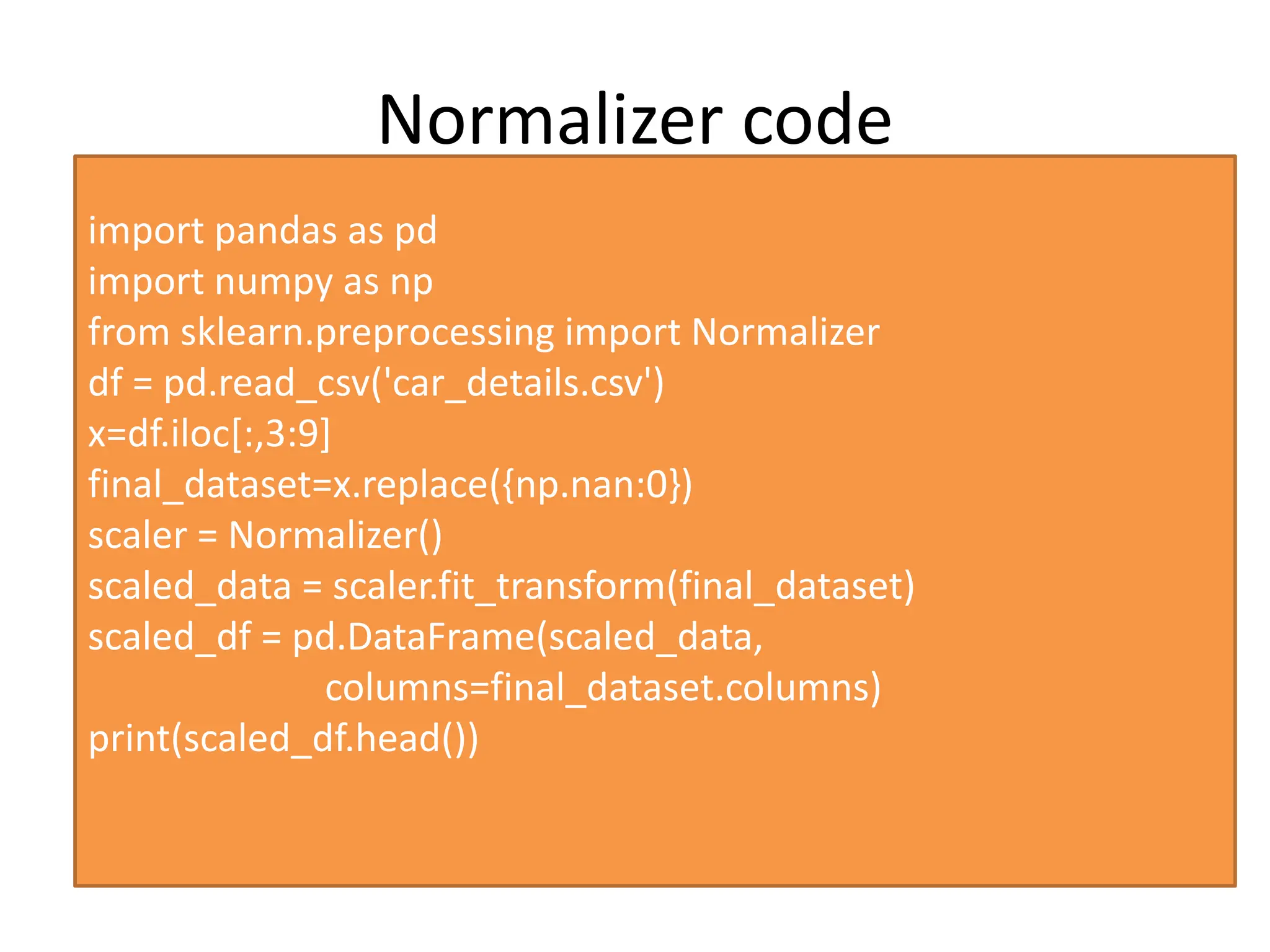

In practice, it pays to understand why scaling matters and how to apply it using scikit-learn. The sklearn.preprocessing module provides methods for scaling, centering, normalization, binarization, and more; see the Preprocessing data section of the scikit-learn user guide for further details. Data normalization is important if your statistical technique or algorithm requires your data to follow a standard distribution. Knowing how to transform your data, and when to do it, is important for a working data science project. Feature scaling and normalization are crucial preprocessing steps in machine learning. These techniques help ensure that different features contribute proportionally to a model, which can improve accuracy and convergence speed.
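As a rough sketch of how this might look with scikit-learn, the example below applies `StandardScaler`, `MinMaxScaler`, and `RobustScaler` from `sklearn.preprocessing` to the same small data set. The age and income values, including the deliberately extreme income, are made up for illustration, and the `scalers` and `scaled` dictionaries are just hypothetical containers.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler, RobustScaler, StandardScaler

# Made-up data: column 0 is age in years, column 1 is annual income.
# The last training row contains a deliberately extreme income (an outlier).
X_train = np.array(
    [[25, 30_000], [32, 42_000], [47, 55_000], [51, 61_000], [38, 1_000_000]],
    dtype=float,
)
X_test = np.array([[29, 38_000], [60, 75_000]], dtype=float)

scalers = {
    "standard": StandardScaler(),  # zero mean, unit variance per feature
    "minmax": MinMaxScaler(),      # rescales each feature to [0, 1]
    "robust": RobustScaler(),      # centers on the median, scales by the IQR
}

scaled = {}
for name, scaler in scalers.items():
    # Learn the scaling parameters from the training data only,
    # then reuse them unchanged on the test data.
    train_scaled = scaler.fit_transform(X_train)
    test_scaled = scaler.transform(X_test)
    scaled[name] = (train_scaled, test_scaled)

for name, (train_scaled, _) in scaled.items():
    print(name)
    print(np.round(train_scaled, 3))
```

Fitting on the training split and only transforming the test split keeps test-set statistics from leaking into the model. Comparing the income column across the three outputs shows how a single outlier squeezes the other values together under min-max scaling, while the median-based scaler is less affected.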

