Practical Quantization in PyTorch

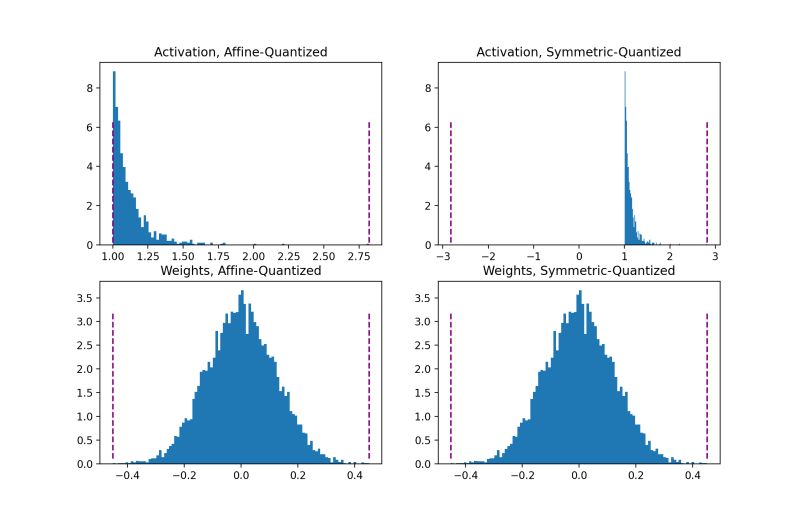

GitHub Satya15july Quantization: Model Quantization with PyTorch

Quantization is a cheap and easy way to make your DNN run faster and with lower memory requirements. PyTorch offers a few different approaches to quantizing your model. In this blog post, we'll lay a (quick) foundation of quantization in deep learning, and then take a look at what each technique looks like in practice.

This tutorial provides an introduction to quantization in PyTorch, covering both theory and practice. We'll explore the different types of quantization, and apply both post-training quantization (PTQ) and quantization-aware training (QAT) to a simple example using CIFAR-10 and ResNet18.
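The foundation behind these techniques is an affine mapping between floating-point values and a small integer range. The sketch below illustrates that arithmetic in plain Python, without PyTorch, so each step is visible. The function names (`choose_qparams`, `quantize`, `dequantize`) and the sample weights are hypothetical, chosen for illustration only; this is not PyTorch's internal implementation.

```python
def choose_qparams(values, qmin=-128, qmax=127):
    """Pick a scale and zero point so that [min, max] maps onto [qmin, qmax]."""
    # Extend the range to include 0.0 so that zero is exactly representable.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin)
    if scale == 0.0:
        scale = 1.0  # all values are zero; any positive scale works
    zero_point = int(round(qmin - lo / scale))
    zero_point = max(qmin, min(qmax, zero_point))
    return scale, zero_point


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map floats to integers: q = clamp(round(x / scale) + zero_point)."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


def dequantize(q_values, scale, zero_point):
    """Map integers back to approximate floats: x ~ (q - zero_point) * scale."""
    return [(q - zero_point) * scale for q in q_values]


# Hypothetical weights, for illustration only.
weights = [-1.0, -0.25, 0.0, 0.5, 1.5]
scale, zero_point = choose_qparams(weights)
q_weights = quantize(weights, scale, zero_point)
restored = dequantize(q_weights, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q_weights, max_error)
```

For values inside the calibrated range, the round-trip error stays within about half of `scale`, which is why the choice of range (and therefore the calibration data in static PTQ) matters for accuracy.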

GitHub Xingyueye Pytorch Quantization

Quantization is a core method for deploying large neural networks such as Llama 2 efficiently on constrained hardware, especially embedded systems and edge devices. For a brief introduction to model quantization and recommendations on quantization configs, see the PyTorch blog post "Practical Quantization in PyTorch". Discover how to optimize AI models with PyTorch quantization: use cases, challenges, tools, and best practices for scaling efficiently. A practical deep dive into quantization-aware training covers how it works, why it matters, and how to implement it end to end.

Practical Quantization in PyTorch, Mike Tamir PhD

A decision guide for selecting a PyTorch quantization strategy based on requirements such as ease of use, data availability, performance needs, and accuracy tolerance. On the model optimization side, quantization decreases the memory and computation requirements of a model while also decreasing its latency on the hardware. The detailed explanation and implementation of the W8A16LinearLayer class, along with examples of replacing and quantizing PyTorch layers, show how quantization applies in practice to AI applications including language models and object detection models. Learn how to reduce model size and boost inference speed using dynamic, static, and QAT quantization in PyTorch.
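To make the W8A16 idea concrete (8-bit weights, higher-precision activations), here is a minimal sketch of what such a layer could look like. Only the class name comes from the text above. The per-output-row symmetric scaling, the buffer names, and the `replace_linear_with_w8a16` helper are assumptions made for illustration, not the referenced implementation. Activations keep whatever floating-point dtype the input has (float32 here so the sketch runs on CPU; float16 or bfloat16 would be typical on an accelerator).

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class W8A16LinearLayer(nn.Module):
    """Sketch: linear layer storing int8 weights with one scale per output row."""

    def __init__(self, in_features, out_features, bias=True, dtype=torch.float32):
        super().__init__()
        # Buffers rather than parameters: int8 tensors cannot carry gradients.
        self.register_buffer(
            "int8_weights",
            torch.zeros((out_features, in_features), dtype=torch.int8),
        )
        self.register_buffer("scales", torch.ones(out_features, dtype=dtype))
        if bias:
            self.register_buffer("bias", torch.zeros(out_features, dtype=dtype))
        else:
            self.bias = None

    @torch.no_grad()
    def quantize(self, weights):
        """Symmetric per-row quantization: scale = max|w| / 127 (an assumption)."""
        w = weights.detach().to(torch.float32)
        scales = w.abs().amax(dim=-1).clamp(min=1e-8) / 127.0
        q = torch.round(w / scales.unsqueeze(1)).clamp(-128, 127).to(torch.int8)
        self.int8_weights.copy_(q)
        self.scales.copy_(scales.to(self.scales.dtype))

    def forward(self, x):
        # Cast weights to the activation dtype, then rescale each output channel.
        w = self.int8_weights.to(x.dtype)
        out = F.linear(x, w) * self.scales.to(x.dtype)
        if self.bias is not None:
            out = out + self.bias.to(x.dtype)
        return out


@torch.no_grad()
def replace_linear_with_w8a16(module):
    """Hypothetical helper: swap every nn.Linear for a quantized copy, in place."""
    for name, child in list(module.named_children()):
        if isinstance(child, nn.Linear):
            new_layer = W8A16LinearLayer(
                child.in_features,
                child.out_features,
                bias=child.bias is not None,
                dtype=child.weight.dtype,
            )
            new_layer.quantize(child.weight)
            if child.bias is not None:
                new_layer.bias.copy_(child.bias.detach())
            setattr(module, name, new_layer)
        else:
            replace_linear_with_w8a16(child)
    return module


# Usage on a toy model (hypothetical sizes).
torch.manual_seed(0)
model = nn.Sequential(nn.Linear(16, 32), nn.ReLU(), nn.Linear(32, 4))
x = torch.randn(8, 16)
reference = model(x).detach()
replace_linear_with_w8a16(model)
quantized_out = model(x)
print((quantized_out - reference).abs().max())
```

Because only the weights are quantized, this approach needs no calibration data, which places it closer to dynamic quantization than to static PTQ or QAT in the decision guide described above.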

