Practical Adversarial Attack Against Speech Recognition Platforms

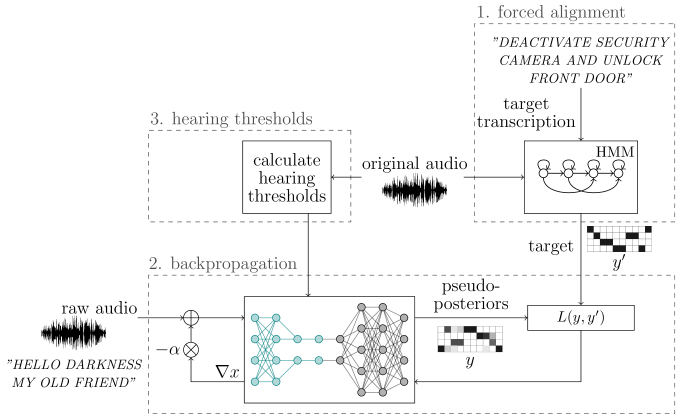

Free Video Practical Adversarial Attack Against Speech Recognition

In this paper, we explore the possibility of conducting an over-the-air adversarial attack in practical scenarios, in which the adversarial examples are played through a loudspeaker to compromise speaker recognition devices.

We first introduce the basics of speaker recognition systems (SRSs) and concepts related to adversarial attacks. Then, we propose two sets of criteria to evaluate the performance of attack methods and defense methods in SRSs, respectively.

Pdf Adversarial Attack On Speech To Text Recognition Models

In this work, we conduct a comprehensive survey to fill this gap, which includes the development of SRSs, adversarial attacks and defenses against SRSs. Specifically, we first introduce the mainstream frameworks of SRSs and some commonly used datasets.

In this paper, we propose two novel adversarial attacks in more practical and rigorous scenarios. For commercial cloud speech APIs, we propose Occam, a decision-only black-box adversarial attack, where only final decisions are available to the adversary.

In this paper, we propose a staged perturbation generation method that constructs CommanderUAP, which achieves a high success rate of universal adversarial attack against speech recognition models.

This is a collection of adversarial attacks for automatic speech recognition (ASR) systems. The attacks in this 'main' branch are specifically designed for the wav2vec2 model from the torchaudio hub.

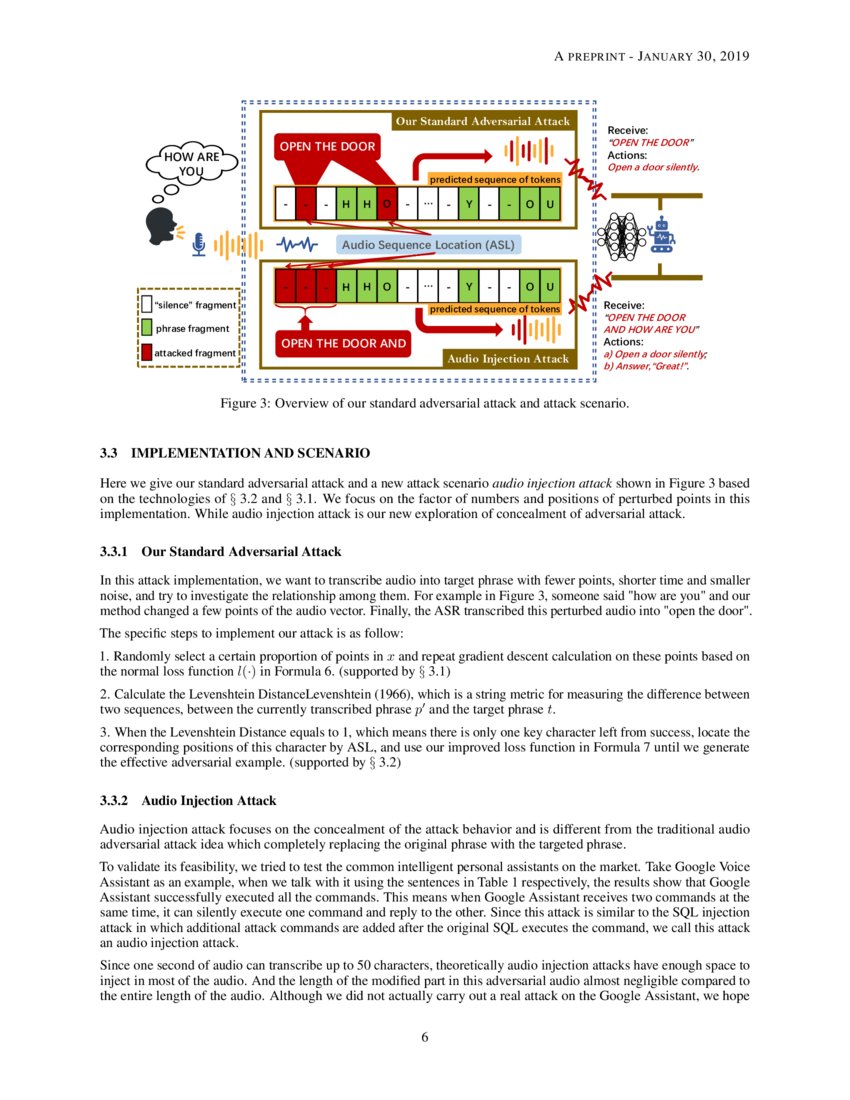

Adversarial Attacks Against Automatic Speech Recognition Systems Via

We present an expository paper that considers several adversarial attacks on a deep speaker recognition system, employs strong defense methods as countermeasures, and reports a comprehensive set of ablation studies to better understand the problem.

We develop four classes of perturbations that create unintelligible audio and test them against 12 machine learning models, including 7 proprietary models (e.g., Google Speech API, Bing Speech API, IBM Speech API, and Azure Speaker API), and demonstrate successful attacks against all targets.

In this paper, we propose a practical black-box attack, named FakeBob, which is able to overcome these challenges. Specifically, we formulate the adversarial sample generation as an optimization problem.
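To make the idea of casting adversarial sample generation as an optimization problem more concrete, here is a minimal sketch in Python. It is not the method of any paper quoted above. It assumes a generic score-based black-box setting in which the attacker can only query a score, and it swaps in an NES-style gradient estimate followed by signed steps inside an L-infinity ball. The scorer `make_toy_scorer`, the helper names, and all parameter values are hypothetical and chosen purely for illustration.

```python
import math
import random


def make_toy_scorer(dim, seed=0):
    """Hypothetical stand-in for a black-box scorer (a random linear model)."""
    rng = random.Random(seed)
    weights = [rng.gauss(0.0, 1.0) for _ in range(dim)]

    def score(x):
        return sum(w * v for w, v in zip(weights, x))

    return score


def estimate_gradient(score, x, rng, samples=20, sigma=1e-3):
    """NES-style antithetic gradient estimate that uses score queries only."""
    grad = [0.0] * len(x)
    for _ in range(samples):
        u = [rng.gauss(0.0, 1.0) for _ in x]
        plus = score([v + sigma * d for v, d in zip(x, u)])
        minus = score([v - sigma * d for v, d in zip(x, u)])
        coeff = (plus - minus) / (2.0 * sigma * samples)
        for i, d in enumerate(u):
            grad[i] += coeff * d
    return grad


def black_box_attack(score, x, threshold, epsilon=0.05, step=0.005,
                     max_iters=200, seed=1):
    """Search for delta with max|delta_i| <= epsilon and score(x + delta) >= threshold."""
    rng = random.Random(seed)
    delta = [0.0] * len(x)
    for _ in range(max_iters):
        adv = [v + d for v, d in zip(x, delta)]
        if score(adv) >= threshold:
            break  # the perturbed input is already accepted
        grad = estimate_gradient(score, adv, rng)
        for i, g in enumerate(grad):
            move = step if g > 0 else (-step if g < 0 else 0.0)
            # Signed ascent step, then projection back into the epsilon ball.
            delta[i] = max(-epsilon, min(epsilon, delta[i] + move))
    return [v + d for v, d in zip(x, delta)], delta


if __name__ == "__main__":
    scorer = make_toy_scorer(64)
    audio = [0.1 * math.sin(0.2 * i) for i in range(64)]  # toy "waveform"
    target = scorer(audio) + 1.0  # hypothetical acceptance threshold
    adv_audio, delta = black_box_attack(scorer, audio, target)
    print("accepted:", scorer(adv_audio) >= target)
    print("max |delta|:", max(abs(d) for d in delta))
```

The bound `epsilon` plays the role of the constraint in the optimization problem (keep the perturbation small), while the threshold check plays the role of the objective (make the system accept the input). A real attack would replace the toy scorer with queries to an actual recognition system and would likely need a perceptual constraint rather than a plain amplitude bound.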

Audioguard Speech Recognition System Robust Against Optimized Audio

Adversarial Attacks Challenge The Integrity Of Speech Language Models

Adversarial Attack On Speech To Text Recognition Models Deepai
