Ppt Maximum Likelihood And Bayesian Parameter Estimation Powerpoint

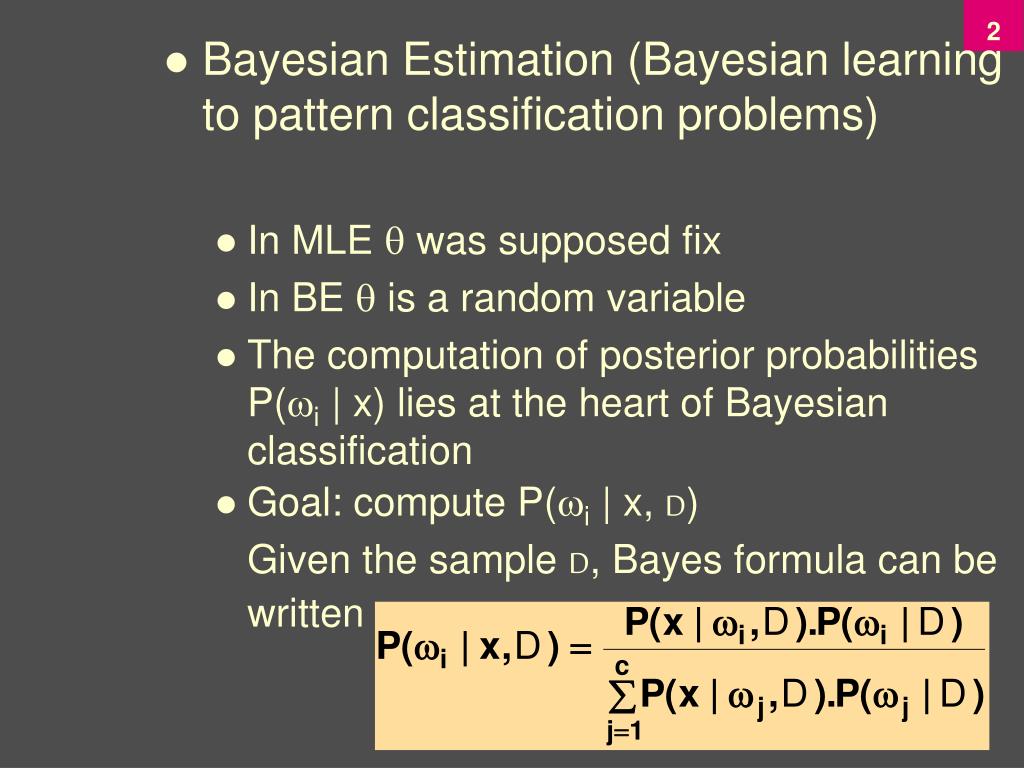

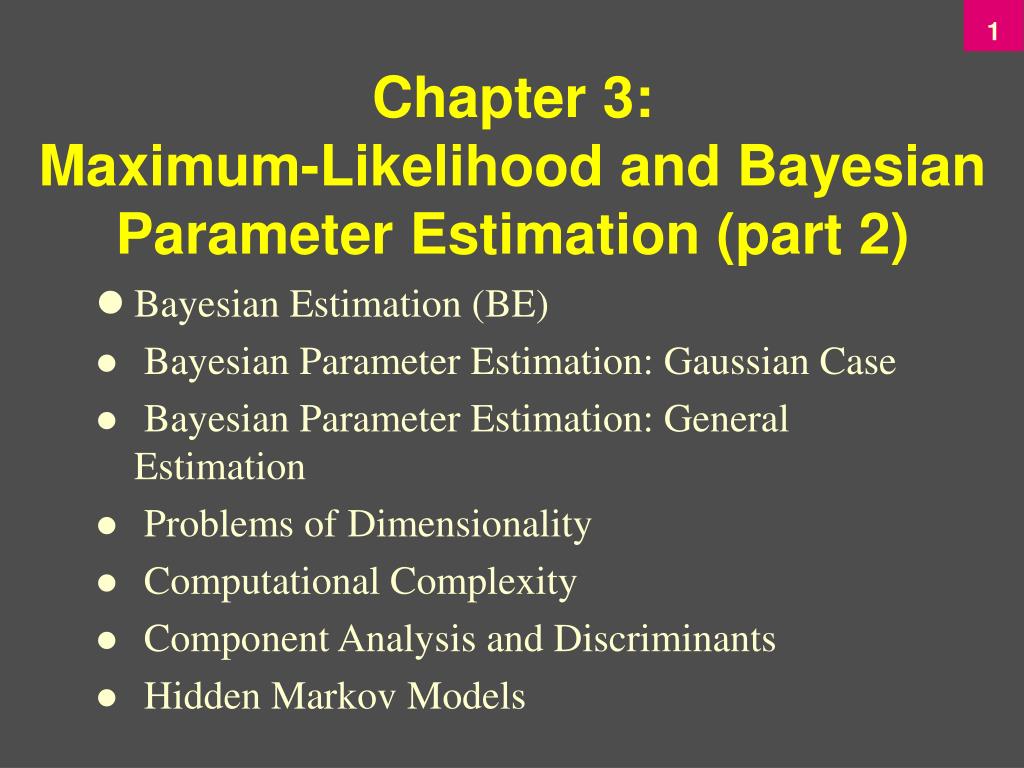

Maximum Likelihood And Bayesian Parameter Estimation Presentation. Pattern Classification, Chapter 1. Bayesian parameter estimation, Gaussian case. Goal: estimate μ using the a posteriori density p(μ | D). In the univariate Gaussian case, p(μ | D), μ is the only unknown parameter; μ₀ and σ₀ are known.

Summary:

- Review of maximum likelihood parameter estimation in the Gaussian case, with an emphasis on convergence and bias of the estimates.
- Introduction of Bayesian parameter estimation.
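As a rough illustration of the univariate Gaussian case above, the sketch below computes the posterior over μ in closed form. It assumes the standard conjugate setup, which the slide text only hints at: the data are drawn from N(μ, σ²) with σ² known, and the prior on μ is N(μ₀, σ₀²). The function name `posterior_mean_params` and its parameter names are hypothetical, chosen only for this example.

```python
from statistics import fmean


def posterior_mean_params(samples, sigma2, mu0, sigma0_2):
    """Return (mu_n, sigma_n2), the parameters of the Gaussian posterior p(mu | D).

    Assumptions (not stated in full by the source):
      - samples are i.i.d. draws from N(mu, sigma2), with sigma2 known
      - the prior on mu is N(mu0, sigma0_2)
    """
    n = len(samples)
    if n == 0:
        # With no data, the posterior is just the prior.
        return mu0, sigma0_2

    xbar = fmean(samples)
    denom = n * sigma0_2 + sigma2

    # Posterior mean: a weighted average of the sample mean and the prior mean.
    mu_n = (n * sigma0_2 / denom) * xbar + (sigma2 / denom) * mu0
    # Posterior variance: shrinks toward zero as n grows.
    sigma_n2 = (sigma0_2 * sigma2) / denom
    return mu_n, sigma_n2


if __name__ == "__main__":
    mu_n, sigma_n2 = posterior_mean_params([1.0, 2.0, 3.0], sigma2=1.0, mu0=0.0, sigma0_2=1.0)
    print(mu_n, sigma_n2)
```

As n grows, the weight on the sample mean approaches 1, so the posterior mean moves from the prior mean toward the maximum likelihood estimate.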

Ppt Chapter 3 Maximum Likelihood And Bayesian Parameter Estimation. Bayesian methods for cosmological parameter estimation from cosmic microwave background measurements.

My conclusion: they are classic parameter estimation techniques, and their distinguishing feature is the use of prior distribution information to determine unknown parameters in problems with a known functional form. In practice many problems will give us general...

Maximum likelihood estimation, general Gaussian case: the maximum likelihood estimates of the Gaussian parameters are simply their empirical estimates over the samples. The estimate of the Gaussian mean is the sample mean, and the estimate of the Gaussian covariance matrix is the average over the samples of the outer products (x_k − μ̂)(x_k − μ̂)ᵀ.

Bayesian learning of Gaussians, why we should care: maximum likelihood estimation is a very very very very fundamental part of data analysis. “MLE for Gaussians” is training wheels for our future techniques. Learning Gaussians is more useful than you might guess…

Designing a classifier from a training sample: the priors are easy to estimate, but the samples are often too small to estimate the class-conditional densities (large dimension of feature space!).
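The maximum likelihood estimates for the general Gaussian case described above can be sketched in a few lines. This is a minimal pure-Python illustration, not code from any of the slide decks; the function name `gaussian_mle` is hypothetical, and samples are assumed to be given as equal-length lists of numbers.

```python
def gaussian_mle(samples):
    """Maximum likelihood estimates (mean vector, covariance matrix) for a Gaussian.

    samples: a non-empty list of d-dimensional points, each a list of floats.
    The covariance divides by n (the ML estimate), not n - 1, so it is a
    biased estimate of the true covariance for finite n.
    """
    n = len(samples)
    d = len(samples[0])

    # ML estimate of the mean: the sample mean, one coordinate at a time.
    mean = [sum(x[i] for x in samples) / n for i in range(d)]

    # ML estimate of the covariance: the average of the outer products
    # (x_k - mean)(x_k - mean)^T over all samples.
    cov = [
        [sum((x[i] - mean[i]) * (x[j] - mean[j]) for x in samples) / n
         for j in range(d)]
        for i in range(d)
    ]
    return mean, cov


if __name__ == "__main__":
    data = [[0.0, 0.0], [2.0, 0.0], [0.0, 2.0], [2.0, 2.0]]
    mean, cov = gaussian_mle(data)
    print(mean)
    print(cov)
```

Dividing by n − 1 instead of n would give the unbiased covariance estimate; the two agree in the limit of large n, which is the convergence and bias point usually made about these estimates.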

Three test procedures. To construct the basic test we need an estimate of the likelihood value at the unrestricted point and at the restricted point, and we compare the two. There are three ways of deriving this. In the likelihood ratio test we simply estimate the model twice, once unrestricted and once restricted, and compare the two.

Step 1: parameter estimation. Model the quantity as a random variable with a known distribution but an unknown parameter, and guess the unknown parameter. Step 2: decision making. Use the guess about the unknown parameter to find the probability of the event of interest, and decide based on that probability. Frequentist estimation problem.

Today’s presentation addresses the parametric density estimation case, where the full probability structure underlying the categories (data) is not known, but the general forms of their distributions (i.e., the models) are known or assumed a priori.

The Bayesian parameter estimation procedure, by its nature, utilizes whatever prior information is available about the unknown parameter. MLE: the best parameters are obtained by maximizing the probability of obtaining the samples observed. Bayesian methods view the parameters as random variables having some known prior distribution; how do we know the...
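To make the likelihood ratio test mentioned above concrete, here is a small sketch under assumptions the source does not specify: the model is a univariate Gaussian with known variance, the restriction fixes the mean at a given value, and the statistic 2(ℓ_unrestricted − ℓ_restricted) is compared with the 5% critical value of a chi-square distribution with one degree of freedom (about 3.841). The function names are hypothetical.

```python
import math
from statistics import fmean

# 95th percentile of the chi-square distribution with 1 degree of freedom.
CHI2_1DF_CRITICAL_5PCT = 3.841


def gaussian_loglik(samples, mu, sigma2):
    """Log-likelihood of i.i.d. samples under N(mu, sigma2)."""
    n = len(samples)
    sq_err = sum((x - mu) ** 2 for x in samples)
    return -0.5 * n * math.log(2.0 * math.pi * sigma2) - sq_err / (2.0 * sigma2)


def likelihood_ratio_stat(samples, mu_restricted, sigma2=1.0):
    """Estimate the model twice and return 2 * (unrestricted - restricted log-likelihood).

    Unrestricted: mu is free, so its ML estimate is the sample mean.
    Restricted: mu is fixed at mu_restricted (the null hypothesis).
    sigma2 is assumed known in both fits.
    """
    mu_hat = fmean(samples)
    ll_unrestricted = gaussian_loglik(samples, mu_hat, sigma2)
    ll_restricted = gaussian_loglik(samples, mu_restricted, sigma2)
    return 2.0 * (ll_unrestricted - ll_restricted)


if __name__ == "__main__":
    stat = likelihood_ratio_stat([1.0, 2.0, 3.0], mu_restricted=0.0)
    print(stat, "reject" if stat > CHI2_1DF_CRITICAL_5PCT else "do not reject")
```

With known variance the statistic reduces to n(x̄ − μ_restricted)²/σ², which makes the result easy to check by hand.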
