Please Stop Explaining Black Box Models And Use Interpretable Models Instead

This manuscript clarifies the chasm between explaining black boxes and using inherently interpretable models, outlines several key reasons why explainable black boxes should be avoided in high-stakes decisions, identifies challenges to interpretable machine learning, and provides several example applications where interpretable models could potentially replace black box ones.

Cynthia Rudin: Stop Explaining Black Box Machine Learning Models

The chasm between explaining black boxes and adopting inherently interpretable models is clarified, and the paper demonstrates how interpretable hybrid models could potentially supplant black box ones in different domains. Recent work on the explainability of black boxes, rather than the interpretability of models, contains and perpetuates critical misconceptions that have generally gone unnoticed but that can have a lasting negative impact on the widespread use of ML models in society.

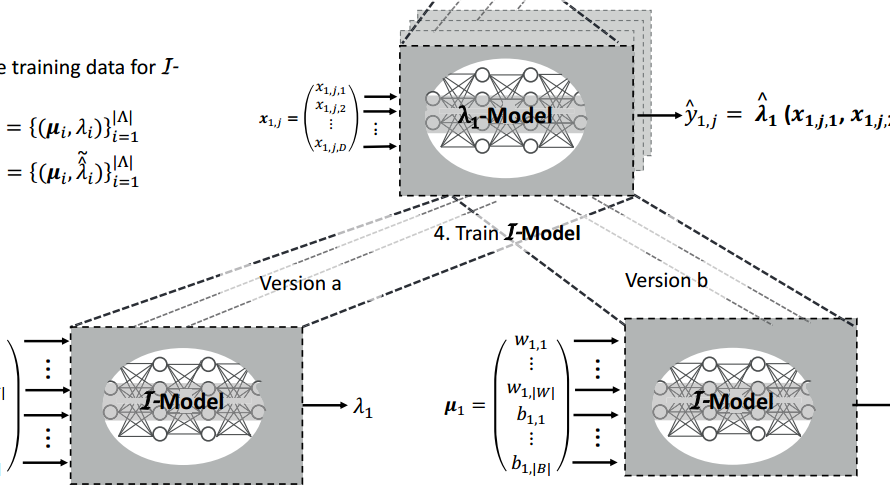

Stop Explaining Black Box Machine Learning Models For High Stakes

Instead of developing tools that explain machine learning models after the fact, we should all be working towards models that are inherently interpretable. There are two main takeaways from this paper. First, it underscores the difference between explainability and interpretability and presents why the former may be problematic. Second, it provides some useful pointers for creating truly interpretable models.

In explainable ML, predictions come from a complicated black box model (e.g., a DNN), and a second (post hoc) model is created to explain what the first model is doing. A classic example is LIME, which explores a local region of a complex model to uncover its decision boundaries. It can be argued that efforts should instead be directed at building inherently interpretable models in the first place, particularly where they are applied in ways that directly affect human lives, such as in healthcare and criminal justice.
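To make the post hoc setup concrete, here is a minimal sketch of a local surrogate in the spirit of LIME. It is not the LIME library's algorithm or API. The `black_box` function, the sampling scale, the kernel width, and the query points are all assumptions chosen for illustration. The sketch perturbs an input, weights the samples by proximity, and fits a weighted linear model to the black box's outputs.

```python
import math
import random

def black_box(x):
    """Hypothetical stand-in for an opaque model: nonlinear in two features."""
    return math.sin(x[0]) + x[1] ** 2

def solve_linear_system(a, b):
    """Solve a @ w = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def local_surrogate(model, x0, n_samples=2000, scale=0.3, kernel_width=0.3, seed=0):
    """Fit a proximity-weighted linear model to `model` around x0.

    Returns [intercept, coef_1, ..., coef_d] for centered features (x - x0).
    """
    rng = random.Random(seed)
    d = len(x0)
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]  # accumulates X^T W X
    xtwy = [0.0] * (d + 1)                          # accumulates X^T W y
    for _ in range(n_samples):
        delta = [rng.gauss(0.0, scale) for _ in range(d)]  # perturbation
        x = [a + b for a, b in zip(x0, delta)]
        dist2 = sum(v * v for v in delta)
        w = math.exp(-dist2 / (2 * kernel_width ** 2))     # closer samples count more
        row = [1.0] + delta
        y = model(x)                                       # query the black box
        for i in range(d + 1):
            xtwy[i] += w * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += w * row[i] * row[j]
    return solve_linear_system(xtwx, xtwy)

def surrogate_predict(coefs, x0, x):
    """Evaluate the linear surrogate at x (features are centered on x0)."""
    return coefs[0] + sum(c * (xi - x0i) for c, xi, x0i in zip(coefs[1:], x, x0))

x0 = [0.0, 1.0]
coefs = local_surrogate(black_box, x0)
print("local coefficients:", coefs[1:])

# Compare surrogate and black box near x0 and far from it.
for point in ([0.1, 1.1], [3.0, -1.0]):
    print(point, surrogate_predict(coefs, x0, point), black_box(point))
```

The coefficients describe the black box only in the neighborhood that was sampled. Away from `x0` the linear surrogate and the black box can disagree substantially, which is one reason an explanation of this kind is an approximation of the model rather than the model itself.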
